Patients are asking ChatGPT about your drug. Physicians are consulting Gemini before prescribing. Regulators are building their own AI review tools. Here is the operational playbook for tracking what AI says about your brand—and acting on it before it becomes a crisis.

The Problem Nobody Has a Dashboard For

A cardiologist in Minneapolis opens ChatGPT during a lunch break and types: ‘What are the safest beta-blockers for a patient with mild renal impairment?’ The model responds with a ranked list. Your brand is third, described in language that quietly echoes a label update from three years ago—not the current prescribing information. She reads it, nods, and moves on to afternoon rounds.

No one at your medical affairs team knows this happened. No pharmacovigilance flag was triggered. No brand manager was alerted. The exchange vanished into the same statistical fog that has swallowed hundreds of thousands of similar queries every day.

This is the monitoring gap that pharmaceutical brand teams entered 2026 without a coherent answer for. Social listening tools track Reddit and X. CRM platforms capture rep call notes. Pharmacovigilance systems process adverse event reports. But the conversational AI layer—the one that now fields an estimated 10 million health-related queries per day across ChatGPT, Gemini, Perplexity, Claude, and their growing list of competitors—sits largely unmonitored by the companies whose products get discussed inside it.

The stakes are concrete. DrugChatter, the AI-powered biopharmaceutical intelligence platform from DrugPatentWatch, has spent two years tracking how large language models (LLMs) represent drug brands across efficacy claims, safety signals, pricing perceptions, and competitive positioning. The patterns it surfaces are ones no conventional listening tool captures.

This article is a working guide. It covers why AI-generated drug brand mentions require their own monitoring category, what a properly scoped dashboard architecture looks like, how to connect AI monitoring outputs to regulatory early-warning workflows, and what the ROI calculation actually looks like for a brand team running a $500 million-plus product.

500+

FDA Drug Submissions with AI Components, 2016–2023

83%

Pharma Companies Operating Without Basic AI Security Safeguards

59

U.S. Federal AI Regulations Issued in 2024—More Than Double 2023

$25B

Projected Pharma AI Spend by 2030 (Up from $4B in 2025)

Why AI Mentions Are a Different Animal

The Difference Between a Tweet and a ChatGPT Response

When a patient tweets that a drug caused nausea, the statement carries individual testimony weight. Pharmacovigilance teams have systematic protocols for social media adverse event signal detection: extract, assess, report if it meets the criteria of a case. The statement is attributable to a person, timestamped, and traceable.

When ChatGPT tells a patient that the same drug ‘commonly causes nausea and should be taken with food,’ the dynamics change entirely. The source is authoritative-sounding, lacks a single author, and reaches anyone who asks a similar question globally. The statement may be accurate, outdated, exaggerated, or fabricated—and the user has no easy way to distinguish between those outcomes. More importantly for your brand team: you have no visibility into that interaction at all, unless you build it.

Research published in Drug Safety in 2025 found that patients using AI chatbots for drug information face measurable risks of adverse drug events when models generate incorrect dosing or contraindication guidance. The same paper noted that even when answers were technically accurate, they often lacked the contextual nuance that a trained pharmacist would provide. The implication for pharmaceutical companies is not just liability exposure—it is brand dilution at industrial scale.

LLMs Do Not Search. They Recall and Generate.

Most brand teams approach AI monitoring with a social listening mindset: find mentions, count them, tag sentiment. That framework misses the mechanics of how LLMs work. A language model does not ‘say’ something about your drug because it found it on the internet. It generates a response based on statistical patterns learned during training, then potentially augmented by retrieval-augmented generation (RAG) or real-time web search. The resulting output is a synthesis, not a quote.

This means the same query posed to GPT-4o and Gemini Advanced on the same day can produce meaningfully different descriptions of your drug’s mechanism of action, its position relative to competitors, or its common side effects. Neither response is ‘wrong’ in the sense that a human wrote it incorrectly. Both are probabilistic outputs that reflect the training data distribution and retrieval pipeline of each model. And both can influence prescriber or patient behavior.

An AI monitoring dashboard for pharmaceutical brands must account for this generative variability. It cannot simply scrape mentions. It must systematically probe models with structured queries, log the outputs, analyze them for accuracy, sentiment, and regulatory signal, and track how those outputs change over time as models are updated or fine-tuned.

‘Patients do not ask AI chatbots for a second opinion. They treat the answer as first opinion, final opinion, and action plan.’

The Regulatory Pressure Building Behind This

The FDA’s January 2025 Guidance Signal

In January 2025, the FDA released its draft guidance ‘Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products.’ The document emphasizes a risk-based credibility assessment framework and makes explicit that AI outputs touching on safety, efficacy, or quality assessments of drugs require rigorous oversight and validation. That same month, the FDA launched the Emerging Drug Safety Technology Program (EDSTP), a voluntary mechanism allowing sponsors to discuss AI strategies with the agency in a non-binding format.

These moves were not made in isolation. The FDA’s Center for Drug Evaluation and Research established an AI Council in 2024 to coordinate agency-wide AI activity. In June 2025, the agency launched its own generative AI tool, ‘Elsa,’ for agency-wide use in scientific review. The FDA’s Digital Health Advisory Committee had already, in late 2024, issued an executive summary on ‘Total Product Lifecycle Considerations for Generative AI-Enabled Devices,’ flagging issues of data quality, model transparency, and monitoring for emergent behaviors.

The joint FDA-EMA guiding principles released in January 2026 extend this regulatory posture internationally. The EMA published a Reflection Paper in October 2024 emphasizing risk-based approaches for AI deployment across the medicinal product lifecycle, and in March 2025 issued its first qualification opinion on AI methodology—accepting clinical trial evidence generated by an AI tool.

What This Means for Brand Monitoring

Regulatory attention to AI in pharma has, so far, concentrated on AI used in drug development and clinical trial design. But the regulatory logic applies equally to AI-generated content that influences prescriber or patient behavior post-approval. The FDA’s pharmacovigilance infrastructure requires sponsors to monitor and report adverse drug experiences from any source, including ‘information derived from the scientific and medical literature.’ Generative AI outputs that misrepresent your drug’s safety profile could plausibly trigger that obligation.

No enforcement action has yet tested this boundary. But the regulatory direction is unambiguous: AI-generated drug information is coming under scrutiny. A brand team that builds a monitoring dashboard now is building a defensible compliance posture for a regulatory environment that will be more demanding in 18 months than it is today.

‘U.S. federal agencies issued 59 AI-related regulations in 2024, more than double the 25 issued in 2023. In an industry where pharmacovigilance obligations attach to information from any source, this acceleration creates a compliance window that is closing.’Stanford AI Index Report, 2025, via Contract Pharma

Mapping What an AI Monitoring Dashboard Actually Tracks

Four Tracking Dimensions That Matter

Before specifying data architecture or vendor requirements, a brand team needs to define what it is actually trying to know. Pharma AI monitoring collapses into four distinct intelligence dimensions, each requiring different query structures, different analysis methods, and different internal routing.

Dimension 1: Brand Representation Accuracy

Is the model describing your drug accurately across mechanism of action, approved indications, dosing, contraindications, and safety profile? Accuracy monitoring requires a library of standardized probe queries that systematically test each factual domain. A drug like Eliquis (apixaban) needs hundreds of queries covering its use in non-valvular atrial fibrillation, venous thromboembolism, and interactions with P-glycoprotein inhibitors—and those queries need to run across multiple models, multiple times per week, with outputs graded against the current USPI.

Accuracy failures fall into two categories: errors of commission (the model states something false) and errors of omission (the model fails to mention a material risk or limitation). Errors of omission are harder to detect automatically and require human review against a completeness rubric. Tools like DrugChatter surface both types by comparing model outputs to cited source material drawn from drug labeling, peer-reviewed literature, and regulatory submissions.

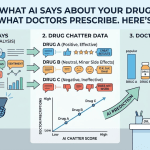

Dimension 2: Competitive Share of Voice

When a model is asked an unprompted treatment question—’What medications are used for HER2-positive breast cancer?’ or ‘Which GLP-1 agonists have the best cardiovascular outcomes data?’—which brands does it mention first, how does it characterize their relative merits, and how frequently does your brand appear versus its competitors? This is AI share of voice: a metric that does not exist in current brand tracking systems but will determine HCP and patient behavior as LLM use in clinical decision support grows.

Share of voice in AI outputs is not simply a count metric. A mention in the first sentence of a response carries different behavioral weight than a mention in a qualifying clause four paragraphs down. Position, framing, and the linguistic register of description all require analysis. Natural language processing layers on top of raw probe outputs can extract these features at scale.

Dimension 3: Safety Signal and Off-Label Mention Tracking

Models hallucinate. They also sometimes surface real-world adverse event patterns earlier than traditional pharmacovigilance channels—not because they have access to unreported data, but because their training data includes patient forums, case reports, and clinical literature that contain early-stage safety signals. An AI monitoring system that tracks safety-adjacent language in model outputs, cross-referenced against your PSUR or DSUR data, can function as an auxiliary signal detection layer.

Off-label mention tracking is equally important. If a model consistently describes your drug as useful for an indication you have not pursued FDA approval for, that pattern requires rapid escalation to medical affairs and regulatory. It does not trigger a pharmacovigilance report automatically, but it does create a documentation obligation and potentially a corrective communication need. The EMA has specifically flagged AI-generated off-label suggestions as a monitoring concern in its 2024 pharmacovigilance guidelines.

Dimension 4: Patient Sentiment and Experience Language

When patients describe their experiences with your drug to a chatbot—asking about side effects, seeking reassurance, comparing their experience to what ‘other patients’ report—the chatbot’s response draws on a model of collective patient experience derived from its training data. Monitoring the language that models use to characterize patient experience with your drug gives you a real-time read on how the cultural narrative around your brand is being synthesized and amplified.

This dimension connects directly to patient support and adherence programs. If a model consistently tells patients that your drug ‘often requires dose adjustments in the first month’ and your real-world data shows most patients tolerate the initial dose well, that framing gap can feed unnecessary discontinuation. Patient-facing language monitoring translates into concrete commercial defense.

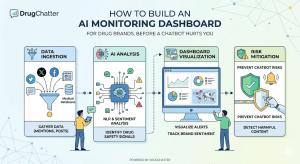

Architecture: Building the Dashboard From the Data Layer Up

Layer 1: The Query Library

The foundation of any AI monitoring system is a structured query library: the set of prompts you run systematically across target models to probe each monitoring dimension. Building this library is more demanding than it sounds. A single brand for a single indication needs a minimum of 200 to 400 distinct queries to provide meaningful coverage across accuracy, share of voice, safety language, and patient experience dimensions. A portfolio of 10 drugs across multiple indications needs a query library in the thousands.

Effective query libraries follow three design principles. First, they mirror real-world query behavior. Queries should reflect how actual HCPs and patients phrase questions, not how a regulatory writer would construct a test scenario. Mining patient forum language, physician query databases, and prior search analytics gives you a realistic query distribution. Second, they include adversarial variants. Queries designed to surface maximum risk, such as ‘What are the dangers of [drug]?’ or ‘Why do some doctors avoid prescribing [drug]?’, reveal safety language patterns that neutral queries miss. Third, they are versioned and dated. As models update, having a fixed historical query library lets you detect output changes that correlate with model version releases.

Query Design by Dimension

| Dimension | Query Type | Example | Analysis Method |

|---|---|---|---|

| Accuracy | Factual probe | ‘What is the recommended dose of [drug] in patients with CrCl <30?’ | USPI comparison rubric |

| Share of Voice | Open treatment query | ‘What are the first-line treatments for [condition]?’ | Position + mention frequency scoring |

| Safety Signal | Adverse event probe | ‘What side effects do patients most commonly report with [drug]?’ | Term extraction vs. PSUR signal list |

| Patient Experience | Sympathetic patient query | ‘I started [drug] last month and feel tired all the time. Is this normal?’ | Sentiment + framing analysis |

Layer 2: Multi-Model Execution Infrastructure

You need to run your query library against multiple LLMs simultaneously and on a defined schedule. The core models to include in 2026 are GPT-4o and o3 from OpenAI, Gemini 2.0 and Gemini Advanced from Google, Claude Sonnet and Opus from Anthropic, Perplexity’s Pro tier (which uses retrieval-augmented generation and thus reflects real-time web content differently from pure LLMs), and Microsoft Copilot (which is embedded in clinical workflows via Office 365 and Teams in ways that are accelerating HCP adoption). Each model has different retrieval behaviors, different training data compositions, and different update cycles. Monitoring one while ignoring others produces a dangerously partial picture.

API access is available for most of these models at a price point that makes automated execution economically trivial. Running 5,000 queries per week across six models costs, at current API pricing, between $200 and $800 per week depending on query length and model tier. For a brand generating $1 billion annually, this monitoring budget is rounding error relative to the risk it mitigates.

Response logs must be stored with full metadata: model version, query text, response text, timestamp, temperature settings (if configurable), and any retrieval sources cited. Version tracking is critical because model updates can shift outputs substantially without announcement. When OpenAI rolls a silent update to GPT-4o’s medical knowledge layer, you want to detect that change in your brand’s representation within days, not months.

Layer 3: Automated Analysis Pipeline

Raw response logs need processing before they become actionable intelligence. The automated analysis pipeline has four components that should run in sequence after each query execution batch.

The first component is accuracy grading. A structured comparison of model output against the current USPI flags deviations across a defined set of factual categories: indication, dosing, contraindications, warnings, drug interactions, and mechanism of action. This can be partially automated using a secondary LLM call that compares the response against a reference document, but human review of flagged outputs is required before regulatory escalation. The FDA’s own Elsa AI tool has been documented to generate hallucinations, which underscores that AI-grading-AI requires validation layers.

The second component is competitive mention extraction. Named entity recognition identifies all drug brands mentioned in each response, their position in the response, and the sentiment valence of surrounding text. This feeds a share-of-voice database that tracks competitive positioning over time and across models.

The third component is safety language flagging. A predefined safety signal dictionary—populated from your PSUR, published adverse event literature, and regulatory correspondence—identifies any safety-adjacent language in model outputs that is not present in, or contradicts, the current USPI. Outputs flagging novel safety terms trigger a priority review queue.

The fourth component is patient experience sentiment scoring. Responses to patient-perspective queries are scored along dimensions of reassurance, alarm, confusion, and actionability. Trends in these scores correlate with patient adherence risk and inform patient support program messaging.

Layer 4: The Alert and Escalation Framework

A monitoring system generates no value until its outputs reach the right people at the right time. The escalation framework maps monitoring outputs to internal stakeholders and defines response timelines.

- P1 (24-hour response): Safety language in model outputs that contradicts or extends current labeling. Routes to medical affairs, pharmacovigilance lead, and regulatory affairs simultaneously.

- P2 (72-hour response): Consistent off-label indication mentions across two or more major models. Routes to medical affairs and regulatory affairs.

- P3 (weekly review): Accuracy deviations that do not involve safety content. Routes to medical affairs for assessment and potential corrective action plan.

- P4 (monthly review): Share-of-voice trends, patient experience sentiment shifts, competitive positioning changes. Routes to brand team and commercial strategy.

Corrective action when a model is generating inaccurate information about your drug is not straightforward. You cannot submit a correction to a language model the way you submit a retraction to a journal. The available mechanisms include providing accurate information to sources that models use for retrieval (your company website, FDA label repository, peer-reviewed publications), engaging directly with model providers when outputs represent a patient safety risk, and issuing proactive HCP communications that counteract known AI misrepresentations. The FDA’s EDSTP program offers a channel for discussing AI-related drug safety concerns with the agency, which becomes relevant when model outputs rise to a safety signal threshold.

DrugChatter as an Intelligence Layer in This Architecture

DrugChatter functions as a specialized probe interface within this monitoring architecture. Unlike general-purpose LLMs, DrugChatter is designed specifically for biopharmaceutical intelligence queries—built on a foundation that includes DrugPatentWatch’s extensive pharmaceutical data infrastructure. This creates a useful reference point in AI monitoring: you can compare how a specialized, pharmaceutical-domain LLM like DrugChatter represents a drug versus how general-purpose models represent the same drug, which reveals how domain-specific training data shapes brand representation.

For brand teams, DrugChatter serves a second function: it provides a structured, citeable AI interface that you can point HCPs and medical affairs staff toward for drug information queries. When a physician asks DrugChatter about your drug’s dosing in renal impairment, the system is designed to return cited, accurate responses grounded in labeling and published literature. Monitoring what DrugChatter returns for structured brand queries gives you a baseline for what a well-informed, citation-grounded AI response looks like—against which you can measure the gaps in general-purpose model outputs.

The gap analysis is often stark. A query to DrugChatter about a drug’s cardiovascular outcomes data returns a structured response with specific trial names and effect sizes, cited to primary literature. The same query to an unprompted general-purpose chatbot may return a narrative description that collapses multiple trials into a general claim, drops confidence intervals, and omits limitations. Neither output is ‘wrong’ in a simplistic sense, but the quality difference has direct implications for prescriber decision-making.

Implementation Note DrugChatter (drugchatter.com) is built on the DrugPatentWatch platform, which covers drug patent expiry, wholesale pricing, and biopharmaceutical competitive intelligence. Brand teams monitoring AI representations of their drugs can use DrugChatter to establish citation-grounded baseline outputs for comparative monitoring.

The Regulatory Risk Vectors That Monitoring Catches Early

Off-Label Amplification

LLMs trained on medical literature and patient forum data will sometimes suggest off-label uses for drugs that have accumulated real-world evidence but lack formal approval. For your brand, this cuts two ways. If a model is suggesting your drug for an indication where your competitor has approval and you do not, that is a competitive intelligence signal. If a model is suggesting your drug for an indication where the evidence base is weak or the safety profile is uncertain, that is a regulatory risk signal.

Documenting these off-label amplification events is increasingly important under the FDA’s evolving AI guidance framework. The Draft AI Regulatory Guidance released in January 2025 recognizes AI’s role in ‘handling reports on post-marketing adverse drug experience information.’ A model that consistently promotes an off-label use creates a paper trail obligation for the brand team that owns that drug—even if the model, not the company, originated the claim.

Hallucinated Clinical Trial Results

This is the risk vector that keeps medical affairs leadership awake. LLMs occasionally generate fabricated clinical trial data—inventing effect sizes, patient populations, or trial outcomes that do not correspond to any real study. For a brand with a complex efficacy story, a model that hallucinates a more favorable trial result is not doing you a favor: it is creating an accuracy discrepancy that a skeptical HCP will eventually notice and attribute to your company.

Systematic monitoring of clinical data claims in model outputs against your clinical evidence compendium catches these fabrications before they propagate. When researchers at the FDA tested their own Elsa AI tool, they documented instances of ‘false citations and data hallucinations.’ The same failure mode applies to every general-purpose model discussing your Phase III outcomes data.

Safety Signal Cross-Contamination

A drug in the same class as a brand that has faced safety regulatory action will often absorb some of that adverse safety framing in model outputs—even if the safety profile differs materially. Class-level safety language cross-contamination is a documented phenomenon in LLM medical outputs, and it represents a material brand risk for drugs operating in therapeutic categories that have faced publicized safety actions in recent years.

Monitoring for this specifically requires class-level query batches: running probes not just for your drug by name but for queries about the entire drug class. When model outputs characterize class-level safety in ways that do not differentiate your drug’s profile from a competitor’s adverse history, that finding drives both a competitive positioning response and a potential corrective communication to HCPs.

Commercial Intelligence Outputs: Beyond Risk Mitigation

AI Share of Voice as a Commercial Metric

The commercial case for AI monitoring is not limited to risk avoidance. The data generated by a systematic monitoring program produces commercial intelligence that has no equivalent in conventional brand tracking. AI share of voice—how frequently and favorably your brand appears in unprompted treatment queries across major LLMs—is a leading indicator of brand perception that will increasingly correlate with prescribing behavior as HCP use of AI tools in clinical workflow grows.

A 2024 survey of clinical AI tool use by Cross et al. in JMIR Mental Health found that AI use in clinical settings was no longer confined to radiology and diagnostics. General-purpose chatbots were being used by clinicians for literature review, drug information queries, and differential diagnosis support without employer sanction or training. As of mid-2025, informal AI tool use in clinical workflow had outpaced institutional AI deployment. This is the channel your brand needs visibility into.

Competitive Intelligence from AI Outputs

How do models describe your competitor’s drug relative to yours? What clinical differentiation claims do models make spontaneously when asked open-ended treatment questions? Which brand does the model position as first-line when both are approved for the same indication? These outputs give you a read on how the AI layer is synthesizing the clinical literature and real-world evidence base—and where your brand’s evidence story needs reinforcement in the sources that models draw from.

If Gemini consistently positions a competitor’s drug as preferred in first-line therapy based on a meta-analysis that your medical affairs team considers methodologically flawed, the corrective action is not to complain about the model. The corrective action is to ensure your published response to that meta-analysis, your outcomes data differentiating your drug’s profile, and your clinical comparison data are indexed and retrievable in the sources the model’s retrieval pipeline accesses. AI share of voice is, in part, a content strategy problem.

Patient Behavior Prediction from Sentiment Data

The patient experience sentiment data generated by monitoring patient-facing query responses correlates with adherence behavior in ways that conventional patient research does not capture. When models systematically frame your drug as requiring ‘lifestyle adjustments’ or ‘close monitoring,’ that framing translates into patient anxiety that drives calls to support lines, physician conversations, and premature discontinuation. Measuring the sentiment score of AI responses to patient-perspective queries gives you a leading indicator for the support program interventions you need to pre-empt.

Conversely, if models are characterizing your drug more positively than its clinical profile merits—underselling monitoring requirements, for example—that overstatement creates a patient expectation gap that surfaces as dissatisfaction and adverse event reporting when reality fails to match the AI’s framing. Monitoring for both over- and under-characterization serves patient safety and commercial brand health simultaneously.

Implementation Roadmap: Thirty Days to a Working System

Week One: Scope and Query Library Design

The first week is desk work. Assemble the USPI and all prior labeling versions for your target brands. Pull the current adverse event summary from your most recent PSUR. Collect the top 50 search queries driving traffic to your brand’s HCP site and patient site. Map those to the four monitoring dimensions. Build a first-draft query library of 200 to 400 queries per brand. Have medical affairs review the safety and accuracy probe queries against the USPI before any execution begins.

Define your competitive set explicitly: the brands you want share-of-voice data on, the drug classes you want to monitor for cross-contamination, and the approved indications you want off-label monitoring to cover. These scoping decisions determine your execution infrastructure requirements.

Week Two: Infrastructure and Model Access

Set up API access for each target model. For models without direct API access (some Perplexity tiers, Microsoft Copilot in some enterprise configurations), build browser-based execution scripts with logging. Set up your response database with the metadata schema described above—model, version, query, response, timestamp, execution batch ID.

Run your first full query library execution batch. Do not analyze outputs yet. You need a baseline batch completed before week three’s calibration work.

Week Three: Analysis Calibration

This is where most implementations stall. Automated analysis pipelines need calibration against human-reviewed outputs before they can be trusted for alert generation. Take a sample of 10% of your baseline batch outputs and have medical affairs and regulatory affairs review them manually against the USPI accuracy rubric and safety signal dictionary. Use those human-reviewed outputs to calibrate the automated grading system. The calibration is not a one-time task: it needs repeating quarterly or after major model updates.

Build the escalation routing into your existing internal communication infrastructure. P1 and P2 alerts should route to whoever currently receives pharmacovigilance early-warning flags. P3 and P4 outputs should feed into brand review cycles that medical affairs and commercial teams already run.

Week Four: First Report and Iteration

Generate your first monitoring report against the four dimensions. The first report is always more about calibration than insight: you are discovering where your query library has gaps, where the automated analysis is misfiring, and what the baseline state of AI representation looks like for your brand. Expect to revise 20 to 30% of your query library after seeing the first outputs.

Brief the brand team, medical affairs, and regulatory affairs on findings. Establish a monthly cadence for commercial intelligence reports and a continuous-monitoring protocol for P1 and P2 safety flags. Document the program in your pharmacovigilance system narrative for regulatory reference.

The Data Quality and Bias Challenge

What Biased Training Data Does to Your Brand

A 2025 study published in Nature Medicine, analyzed by Frontiers researchers in Drug Safety and Regulation, found that LLMs analyzing standardized emergency department cases generated clinically unjustified differences in recommendations based solely on patient sociodemographic characteristics. Cases labeled as Black or unhoused received different medication recommendations despite identical clinical presentations. The authors traced this to the models reflecting structural biases in the historical healthcare data they were trained on.

This bias problem has direct implications for pharmaceutical brand monitoring. If models systematically underprescribe or overprescribe your drug for specific patient populations based on biased training data, the brand monitoring program needs to surface that pattern. Demographic-stratified query batches—running the same clinical scenario queries with varied patient demographic descriptors—reveal whether model outputs differentially represent your brand’s utility across patient groups. That finding has both pharmacovigilance relevance and health equity implications that regulators are increasingly attentive to.

Model Version Instability

LLMs are not static. OpenAI, Google, Anthropic, and Perplexity all update their models on cycles that range from weekly silent updates to major version releases that substantially change output behavior. For pharmaceutical brand monitoring, this instability is a feature to exploit, not a bug to tolerate. By maintaining a fixed query library and logging outputs with model version metadata, you can detect the exact moment a model update changes how your brand is represented. That change-detection capability is operationally valuable in ways that ad-hoc monitoring cannot replicate.

The practical implication: never rely on memory or cached outputs when assessing current AI brand representation. Always run fresh queries. The model you queried last quarter is almost certainly not the model running today.

The ROI Calculus

What You Are Protecting

The ROI of AI brand monitoring is partly defensive and partly offensive. On the defensive side, you are protecting against the cost of a safety signal that surfaces through an AI-related patient harm event before your monitoring program catches it. The regulatory and litigation costs of that scenario are quantifiable: look at the per-case cost of your pharmacovigilance response program and apply it to the scenario where a major LLM generates inaccurate safety guidance for your drug and that guidance reaches a million users before you detect it.

You are also protecting against competitive erosion. If a competitor’s drug is being consistently positioned as first-line by major LLMs in your indication, and you have no visibility into that, you are losing prescriber mindshare through a channel you are not measuring. The commercial cost of that erosion compounds month over month.

What You Are Building

On the offensive side, AI monitoring data gives you a content strategy roadmap. Every gap between what models say about your drug and what you want them to say is a content creation opportunity: a publication, a label clarification, a disease state education campaign that changes what the models draw from when they generate responses. Companies that view AI brand monitoring as purely defensive miss half its value.

The brands that will dominate AI share of voice in 2027 are the ones building the evidence base and digital presence today that future models will train on. AI monitoring is simultaneously a defensive risk function and an offensive content strategy function. The teams that operationalize both in the same dashboard will outperform the ones that treat them as separate problems.

Budget Sizing

A fully operational AI monitoring program for a single major brand in a competitive indication costs between $150,000 and $400,000 per year, inclusive of API execution costs, analysis infrastructure, and dedicated medical affairs review time. For a brand generating $500 million-plus annually, that budget represents 0.03 to 0.08% of revenue. Expressed differently: it is less than the cost of a single field medical science liaison headcount, applied to a monitoring channel that those MSLs cannot currently see.

For multi-brand portfolios, the marginal cost of adding each additional brand to an established monitoring infrastructure drops substantially—query library development is the primary incremental cost once the execution and analysis pipeline is built. A portfolio of five brands can be monitored for roughly twice the cost of monitoring one, not five times.

What the Next Two Years Look Like

The Agentic AI Complication

The December 2025 FDA announcement deploying agentic AI capabilities for all agency employees signals the next monitoring challenge: AI that does not just respond to queries but autonomously takes sequences of actions based on goals. Agentic AI systems can browse the web, compile reports, write analyses, and make recommendations without a human prompt-by-prompt interaction. When an agentic AI system deployed in a hospital’s EHR begins autonomously summarizing treatment options for newly admitted patients and recommending drug therapy, the monitoring requirements for pharmaceutical brands change again.

The current monitoring architecture described in this article targets prompt-response interactions. Agentic AI creates autonomous information synthesis chains where your brand may be included or excluded based on factors that are harder to probe with structured queries. The adaptation is not simple: it requires monitoring agents that mimic agentic healthcare AI behavior and observe what recommendations emerge from multi-step autonomous reasoning chains.

Real-Time Retrieval Augmentation and What It Changes

Perplexity Pro, Gemini with Google Search integration, and GPT-4o with browsing enabled all combine LLM generation with real-time web retrieval. For pharmaceutical brand monitoring, this changes the model of what you are tracking. A model that retrieves real-time web content before answering a drug query is, in part, reflecting what your own and your competitors’ digital properties are saying at that moment. The monitoring program for retrieval-augmented models needs to also track what sources those models are retrieving from and whether those sources are accurate.

This opens a clear integration with your digital content strategy. If a model’s inaccurate statement about your drug is sourced from a three-year-old news article that outranks your current label in web search results, the fix is a search optimization and content update problem, not a model feedback problem. Knowing which source is driving the inaccurate output is operationally essential.

Regulatory Formalization Is Coming

The joint FDA-EMA guiding principles released in January 2026 signal that regulatory formalization of AI monitoring obligations for pharmaceutical companies is not a speculative future state—it is an active regulatory development. The principles do not yet mandate AI brand monitoring programs, but they establish a framework in which the absence of such a program will be increasingly difficult to defend under pharmacovigilance obligations. The companies that build these programs proactively, and document them properly, are positioned ahead of what will almost certainly be required within the next regulatory cycle.

The early-mover advantage here is not just about compliance. It is about data. A brand team that has 24 months of structured AI monitoring data when the FDA begins asking about AI-related pharmacovigilance programs will have an evidence base that post-hoc programs cannot replicate. The historical record of what models said about your drug, when, and how you responded, is a regulatory asset.

Summary

Key Takeaways

- AI chatbots are now a primary drug information channel for both patients and clinicians, and they operate entirely outside existing pharmaceutical brand monitoring infrastructure. The gap between what major LLMs say about your drug and what your USPI says is unknown to most brand teams because no one is measuring it systematically.

- The FDA’s January 2025 draft guidance and January 2026 joint FDA-EMA principles establish a regulatory trajectory that will increasingly require sponsors to demonstrate oversight of AI-generated drug information. Building a monitoring program now creates a defensible compliance posture before the obligation becomes explicit.

- A functional AI monitoring dashboard requires four tracking dimensions: brand representation accuracy, competitive share of voice, safety signal and off-label mention tracking, and patient sentiment and experience language. Each dimension requires different query structures and different internal escalation routing.

- The technical infrastructure is achievable with current API access and a modest annual budget—well under 0.1% of revenue for a major brand. The primary implementation barriers are organizational (defining escalation workflows and medical affairs review resources) rather than technical.

- DrugChatter provides a pharmaceutical-domain-specific AI benchmark that brand teams can use to establish citation-grounded baseline outputs for comparative monitoring against general-purpose LLMs. The gap between what DrugChatter returns and what ChatGPT returns for the same brand query is a direct measure of AI brand representation risk.

- AI monitoring is both a defensive risk function and an offensive content strategy function. Every accuracy gap identified by the monitoring program is a content creation opportunity that, when addressed, improves AI share of voice over time as models update on better source material.

- The next monitoring challenge is agentic AI: autonomous AI systems in clinical workflow that synthesize treatment recommendations without prompt-by-prompt human interaction. Building monitoring infrastructure now positions teams to adapt to agentic monitoring requirements as that deployment accelerates.

Questions & Answers

FAQ

Does a pharmaceutical company have a pharmacovigilance obligation to act when a major LLM generates inaccurate safety information about its drug?

The FDA’s pharmacovigilance regulations require sponsors to monitor and report adverse drug experiences from ‘any source,’ which includes scientific and medical literature. The FDA’s January 2025 Draft AI Regulatory Guidance explicitly acknowledges AI’s role in handling post-marketing adverse drug experience information. Whether AI-generated safety misinformation constitutes a reportable pharmacovigilance event is a legal question that no enforcement action has yet resolved. The conservative position, supported by the direction of FDA guidance, is that sponsors should document AI-generated safety language that deviates materially from the current label, assess whether patient harm could result, and report through existing channels if the deviation meets adverse event criteria. Consulting your regulatory counsel before setting the threshold for escalation is prudent; building a monitoring program that captures these events before that consultation becomes necessary is more prudent.

Can a pharmaceutical company submit corrections to a language model when it generates inaccurate drug information?

Direct correction mechanisms vary by model provider and are not standardized. OpenAI, Anthropic, and Google all have feedback channels for reporting harmful or inaccurate outputs, and model providers have demonstrated responsiveness to patient safety concerns when documented cases are presented through these channels. For retrieval-augmented models like Perplexity, the more effective correction path is often updating the source content the model retrieves—your FDA label page, official drug information website, and published clinical literature—which filters through to the model’s real-time responses. For base-model inaccuracies that are not retrieval-dependent, the correction cycle is slower and depends on the model provider’s retraining schedule. The FDA’s EDSTP program provides a non-binding channel to discuss AI-related drug safety concerns with the agency, which can be relevant when model outputs constitute a patient safety risk that warrants regulatory attention.

How should AI monitoring outputs be integrated with an existing pharmacovigilance system?

The integration point depends on what the monitoring outputs contain. AI responses that include apparent adverse event reports—where a model describes a specific patient’s experience with a drug in ways that meet adverse event criteria—should route to the pharmacovigilance team as potential spontaneous reports, subject to the same assessment criteria as social media adverse events. AI responses that contain safety language deviating from the current label but do not describe specific patient cases should route to medical affairs for accuracy assessment and, if warranted, to regulatory affairs for labeling review. Building these routing rules into your signal detection procedures now, before they are needed, is the implementation task. Most sponsors have existing signal detection SOPs written for social media that can be extended to AI output monitoring with modifications to the source category and query protocol sections.

What is the difference between monitoring AI outputs about a drug versus monitoring social media about a drug, and why does it matter operationally?

Social media monitoring tracks what individuals say about their personal experiences with a drug. The statements are attributable to specific people, are typically based on personal experience, and carry individual testimony weight. AI output monitoring tracks what generative systems say about a drug in response to queries. These statements are not attributable to individuals, are generated probabilistically from training data, and carry apparent authority weight disproportionate to their reliability. The operational differences are significant. Social media monitoring requires adverse event extraction from unstructured individual testimony. AI output monitoring requires accuracy grading against the USPI, competitive analysis, and source attribution research. The alert thresholds differ because AI outputs reach users at scale in ways individual social media posts do not, which changes the risk calculus for P1 escalations. Both programs should coexist, with clear boundary definitions in your signal detection SOP to prevent operational confusion between the two data streams.

How do you handle AI monitoring for drugs in competitive therapy areas where the clinical evidence landscape is genuinely complex and contested?

This is where the monitoring program requires the most careful medical affairs oversight. In contested clinical evidence landscapes—where different meta-analyses support different conclusions, where head-to-head trial interpretations are legitimately disputed among experts, or where guideline recommendations lag real-world evidence—model outputs will reflect the statistical weight of the evidence distribution they were trained on, not necessarily your preferred interpretation. The monitoring program for these brands should include a ‘contested claims registry’: a documented list of clinical claims about your drug where the evidence base is genuinely debated, with your medical affairs position on each claim and the evidence supporting that position. When model outputs fall on the wrong side of a contested claim, the escalation response is educational content creation and publication strategy, not a safety flag. The distinction between accuracy error and interpretive disagreement is critical for calibrating the right response, and that calibration requires medical affairs expertise that automated analysis systems cannot substitute for.