Every morning, thousands of pharmaceutical field reps walk into physician offices carrying approved messaging, printed leave-behinds, and CRM notes from their last visit. What they don’t carry — and almost none of them know they need — is a transcript of what ChatGPT, Claude, Gemini, or Perplexity said about their drug to that same physician the night before.

That gap is becoming a competitive liability.

AI chatbots now field an estimated tens of millions of health-related queries every day. Physicians use them to cross-reference prescribing decisions. Patients use them to validate what their doctor said. Hospital pharmacists use them to screen formulary additions. In each of those conversations, your drug either shows up accurately, shows up with errors, or doesn’t show up at all. Each outcome carries a distinct commercial consequence, and your field team currently has no visibility into any of them.

This article explains how pharmaceutical companies can — and increasingly must — deploy systematic AI output monitoring to support field sales operations, protect regulatory standing, and convert what the chatbots say into a genuine competitive intelligence layer.

The Problem Nobody Has Named Correctly

The pharmaceutical industry has spent the last two years obsessing over how AI can improve sales rep performance: better call planning, next-best-action recommendations, smarter territory segmentation, automated CRM notes. That work is real and valuable. But it addresses only one side of the AI equation.

The other side is this: AI systems are now making assertions about your branded and unbranded drugs to the people your reps are trying to influence, and those assertions are frequently wrong.

<blockquote> A 2023 study published in <em>JAMA Internal Medicine</em> found that ChatGPT provided inaccurate or incomplete information in approximately 47% of drug-interaction queries. <br><em>JAMA Internal Medicine, 2023</em> </blockquote>

That 47% figure predates the widespread adoption of Perplexity and Gemini and the dramatically expanded consumer use of Claude. The underlying problem hasn’t gotten better; the surface area has expanded enormously. And the regulatory stakes attached to that surface area are now explicit.

The FDA has explicitly committed to leveraging ‘AI and other tech-enabled tools’ to proactively surveil promotional activities, with a specific focus on AI-generated health content and chatbot interactions. In September 2025, that commitment turned operational: the FDA issued a flurry of enforcement letters, signaling a departure from the ‘overly cautious approach’ of previous years and a return to the aggressive enforcement paradigms of the late 1990s.

The regulatory frame matters here. When ChatGPT tells a patient that your drug is ‘highly effective for weight loss’ — even though it is only approved for type 2 diabetes — that is effectively off-label promotion happening at scale. But it is not your promotion. You did not write it, approve it, or distribute it. The gray zone that creates is real. So is the commercial damage to a rep walking in the next morning to discuss the approved indication.

Why Field Reps Are the Canary — and Nobody Is Listening

Pharmaceutical field teams sit at the only point in the commercial chain where company messaging meets HCP reality in real time. When a drug’s profile is miscommunicated in the market — by a competitor, a journal letter, a social media post — reps typically surface it within a few call cycles. They hear it in objections, questions, or the look a physician gives before saying “I read something different.”

AI-generated misinformation moves faster and sticks harder than any of those prior channels, and the current sales infrastructure has no systematic mechanism to catch it.

Physicians still find only one-third of sales calls valuable, more than 20% of physicians restrict access to representatives, and nearly 90% of interactions last less than two minutes. A rep who walks in with a pitch that contradicts what the physician just read in a chatbot has just made an already hard job harder. The physician doesn’t say “the AI said something different.” They just disengage.

This is a tractable problem. It requires building a monitoring infrastructure that gives field teams three specific intelligence inputs before each call cycle:

- What AI systems are currently saying about your drug in the therapeutic context most relevant to that HCP’s patient population

- Where those statements diverge from approved labeling

- What competitive drugs are being favored or recommended by AI in the same clinical scenario

None of those inputs currently flow to the field through standard CRM or SFE platforms. They require a dedicated AI output monitoring capability.

The Anatomy of an AI Drug Mention

Before building a monitoring system, it helps to understand how chatbots arrive at drug-related outputs in the first place.

Large language models like GPT-4o, Gemini 1.5, and Claude generate text by predicting the most statistically likely continuation of a prompt based on their training corpus. That corpus — scraped from the public web, PubMed, clinical trial registries, formulary databases, patient forums, Reddit threads, and news archives — reflects what the internet says about your drug, weighted by how often and how authoritatively it’s said.

The pharmaceutical brands that appear most frequently and authoritatively in that data are the ones AI mentions. Pfizer.com receives approximately 30 million monthly visits, and the company has millions of mentions across news outlets, PubMed, FDA databases, and patient forums. By contrast, a typical mid-size biotech with $1–3B in revenue receives 50,000–500,000 monthly visits to its corporate site.

The practical implication: a smaller or mid-size drug company with a well-differentiated product is likely underrepresented in AI outputs relative to its clinical merits. Your drug may be more efficacious in a specific population than what the chatbot recommends, but the chatbot doesn’t weight clinical superiority — it weights corpus frequency.

Pharmaceutical websites heavy on regulatory disclaimers and light on structured, citable data are essentially invisible to AI extraction. That’s a content strategy problem with a direct field sales consequence.

When AI does mention your drug, several mechanisms determine how accurate that mention is:

The training data snapshot. Models have knowledge cutoffs. A drug that received a label update, a new indication approval, or a black box warning revision after that cutoff may be described with outdated information. Retrieval-augmented generation (RAG) systems, used by Perplexity and some configurations of other chatbots, can pull from live web sources — which means real-time misinformation from unvetted sites can enter the output stream.

Hallucination. AI models generate confident-sounding text even when their training data provides no reliable foundation for a specific claim. Drug interaction data, dosing instructions, and comparative efficacy statements are particularly prone to hallucination. This isn’t a bug the industry will fix on a timeline that matches your commercial cycle; it’s a structural feature of how these systems work.

Aggregation artifacts. When a chatbot synthesizes information across dozens of sources, it can inadvertently blend findings from different formulations, dosing regimens, or patient populations in ways that produce technically plausible but clinically inaccurate statements.

The Regulatory Exposure Is Real and Growing

The FDA’s annual intake of Individual Case Safety Reports (ICSRs) has surged from approximately 700,000 in 2010 to more than 2.1 million today. Much of that growth reflects expanded digital reporting channels. The FDA is now explicitly applying AI tools to scan the same digital environment in which chatbot mentions of your drug appear.

The FDA’s focus has expanded to ‘closing digital loopholes,’ explicitly mentioning algorithm-driven targeted advertising and chatbot interactions as areas of renewed scrutiny. The agency’s own internal AI capability, a generative tool called ‘Elsa’ launched in June 2025, is being used to accelerate scientific review. Applied to surveillance, the same capability creates an environment where the probability of detecting non-compliant chatbot outputs approaches 100%.

What does this mean for pharma companies monitoring AI mentions of their drugs?

First, adverse event signals may travel through AI systems before they reach traditional pharmacovigilance channels. A patient who experiences an unexpected reaction and queries an AI chatbot for context, before calling their physician or filing a MedWatch report, creates an adverse event signal in a channel that no current pharmacovigilance workflow captures. Many drug sponsors are considering how technologies like AI, machine learning, and natural language processing can improve existing pharmacovigilance, Medical Information, and lifecycle management workflows. Monitoring AI mentions is part of closing that gap.

Second, the content of AI outputs about your drug constitutes a form of de facto promotion that your company neither controls nor currently reviews. If a chatbot consistently recommends your drug for an off-label use because your clinical data from that indication showed up in the training corpus, that’s a regulatory exposure the FDA could theoretically trace back to the source material — your own publications.

Third, the January 2025 FDA draft guidance on AI in regulatory decision-making introduced a risk-based credibility framework. The draft guidance outlines the FDA’s preliminary recommendations for the application of AI in generating data and information pertinent to regulatory submissions for drugs and biological products. Companies that demonstrate active monitoring of AI-generated outputs about their products will be better positioned when this guidance matures into enforceable requirements.

What Systematic Monitoring Actually Looks Like

Building an AI output monitoring capability for pharmaceutical field sales support doesn’t require building a new technology platform from scratch. It requires combining several existing intelligence capabilities in a workflow designed specifically around this problem. DrugChatter is one purpose-built platform in this space, designed to track how AI systems describe specific drugs and surface those descriptions to commercial teams in actionable formats.

The core architecture of any serious monitoring program has four components.

Prompt library construction. The starting point is building a library of queries that mirror how physicians, patients, pharmacists, and formulary reviewers actually query AI systems about your drug. These aren’t brand-awareness queries like ‘Tell me about [Drug Name].’ They’re clinical-context queries: ‘What’s the first-line treatment for [condition] in patients with [comorbidity]?’ or ‘How does [Drug Name] compare to [Competitor] in [specific patient population]?’ The rep who knows which clinical scenarios her drug shows up in — and which ones it doesn’t — has a material advantage in HCP conversations.

Multi-model query execution. ChatGPT, Gemini, Claude, Perplexity, and Copilot produce meaningfully different outputs for the same clinical query. A drug that appears prominently in GPT-4o responses may be absent from Perplexity because Perplexity’s real-time web retrieval pulls from different source sets. Monitoring a single model gives you a partial and potentially misleading picture. A serious monitoring program queries all major consumer-facing AI platforms on a recurring cycle.

Output taxonomy and flagging. Raw AI outputs need to be classified before they’re useful to field teams. The taxonomy that matters commercially includes: accurate approved-indication mentions, inaccurate or outdated clinical claims, competitive drug mentions and sentiment, off-label mentions (regardless of accuracy), and safety information omissions. Each category carries a different response protocol — legal review, medical affairs follow-up, field team briefing, or regulatory notification.

Field-ready intelligence packaging. The output of the monitoring program that actually reaches field teams needs to be rep-usable in the 90 seconds before a call. That means therapeutic-area-specific AI mention summaries, competitor AI share-of-voice data by query category, and specific objection-handling language for AI-generated claims that contradict your messaging. Embedding this into existing CRM pre-call planning workflows, rather than creating a separate tool, is what drives adoption.
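The first two components can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how any particular platform works: the template strings, the `build_prompts` and `run_cycle` helpers, the `Observation` record, and the stub clients are all hypothetical names introduced here. Real platform integrations would replace the stubs, and a substring match is only a crude first pass at detecting a mention.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, List

# Hypothetical templates; the slots mirror the bracketed placeholders
# in the example queries above.
TEMPLATES = [
    "What's the first-line treatment for {condition} in patients with {comorbidity}?",
    "How does {drug} compare to {competitor} in {population}?",
]


def build_prompts(drug: str, competitors: List[str], conditions: List[str],
                  comorbidities: List[str], populations: List[str]) -> List[str]:
    """Expand every template over every slot combination, de-duplicated."""
    prompts = set()
    for template, competitor, condition, comorbidity, population in product(
            TEMPLATES, competitors, conditions, comorbidities, populations):
        # str.format ignores keyword arguments a template does not use.
        prompts.add(template.format(drug=drug, competitor=competitor,
                                    condition=condition, comorbidity=comorbidity,
                                    population=population))
    return sorted(prompts)


@dataclass
class Observation:
    platform: str
    prompt: str
    response: str
    mentions_drug: bool


def run_cycle(prompts: List[str], clients: Dict[str, Callable[[str], str]],
              drug: str) -> List[Observation]:
    """Send each prompt to each platform and record whether the drug appears."""
    observations = []
    for platform, ask in clients.items():
        for prompt in prompts:
            response = ask(prompt)
            observations.append(Observation(
                platform, prompt, response, drug.lower() in response.lower()))
    return observations


# Stub clients standing in for real platform integrations (assumption).
stub_clients = {
    "platform_a": lambda prompt: "Common options include DrugA and DrugB.",
    "platform_b": lambda prompt: "DrugB is often used first.",
}
prompts = build_prompts("DrugA", ["DrugB"], ["type 2 diabetes"],
                        ["kidney disease"], ["patients over 75"])
for obs in run_cycle(prompts, stub_clients, "DrugA"):
    print(obs.platform, obs.mentions_drug, obs.prompt)
```

Keeping each platform behind a plain callable means the same prompt library can be replayed against every platform on each cycle, which is what makes cross-platform differences visible.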

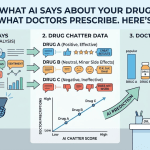

AI Share of Voice: The New Brand Metric

The pharmaceutical industry has measured share of voice for decades — what percentage of promotional spending in a category is yours. The metric has direct correlations to market share that every brand manager understands.

AI share of voice is a newer construct, but it maps to the same commercial logic. When a physician queries an AI system about treatment options for a condition your drug addresses, your drug either appears or it doesn’t, appears first or it doesn’t, and appears with favorable or unfavorable clinical context. That appearance pattern across millions of queries is your AI share of voice — and it’s currently unmeasured by almost every pharma commercial team in the market.

VPs of sales operations are moving beyond Next Best Action nudges in a CRM toward systems that synthesize HCP prescribing signals, digital engagement history, and patient flow data. That creates dynamic territory intelligence. The rep walks in knowing why this conversation, why this physician, and why now. AI share of voice data belongs in that synthesis.

The measurement itself requires several sub-metrics:

Mention frequency by query type. How often does your drug appear when AI systems are queried about the conditions it treats?

Recommendation position. When your drug appears, is it mentioned first, as an alternative, or as a last resort?

Clinical framing accuracy. Does the AI describe your drug using its actual approved indications, or has it drifted toward off-label uses, outdated trial data, or competitor-favorable comparisons?

Safety language completeness. Does the AI include appropriate risk information, or does it present your drug’s efficacy profile without the required safety context? Omitting it creates regulatory exposure; including it for your drug but not for competitors puts you at a competitive disadvantage.
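As a rough illustration, the first two sub-metrics can be computed from a batch of collected responses. The sketch below assumes that the order in which drug names first appear in a response is a usable proxy for recommendation position. That is a simplification: distinguishing "first choice" from "last resort" reliably would need human or model-based review. The function names and sample responses are hypothetical.

```python
import re
from statistics import mean
from typing import Dict, List, Optional


def mention_rank(response: str, drug: str, category: List[str]) -> Optional[int]:
    """1-based rank of `drug` among category drugs by first appearance, or None if absent."""
    text = response.lower()
    positions = {}
    for name in category:
        match = re.search(r"\b" + re.escape(name.lower()) + r"\b", text)
        if match:
            positions[name] = match.start()
    if drug not in positions:
        return None
    ordered = sorted(positions, key=positions.get)
    return ordered.index(drug) + 1


def share_of_voice(responses: List[str], drug: str, category: List[str]) -> Dict[str, Optional[float]]:
    """Mention frequency, first-position rate, and mean rank when mentioned."""
    ranks = [mention_rank(r, drug, category) for r in responses]
    present = [r for r in ranks if r is not None]
    total = len(responses)
    return {
        "mention_frequency": len(present) / total if total else 0.0,
        "first_position_rate": sum(1 for r in present if r == 1) / total if total else 0.0,
        "mean_rank_when_mentioned": mean(present) if present else None,
    }


# Hypothetical sample responses for one query category.
sample = [
    "DrugB is usually first-line; DrugA is an alternative.",
    "DrugA is recommended first, then DrugB.",
    "Lifestyle changes are recommended.",
]
print(share_of_voice(sample, "DrugA", ["DrugA", "DrugB"]))
```

Clinical framing accuracy and safety language completeness are harder to automate and are better treated as review queues than as computed scores.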

AI builds dynamic HCP profiles by combining prescribing history, specialty, patient data, and digital behavior — helping sales teams prioritize the right physicians at the right moment and reducing wasted rep time. Layering AI share of voice data into that HCP profile creates a fundamentally more complete picture of the information environment shaping each physician’s prescribing decisions.

The Competitive Intelligence Angle

AI monitoring isn’t only defensive. It’s one of the richest competitive intelligence sources available to pharma commercial teams, and most companies aren’t using it at all.

When you query AI systems with the clinical scenarios your competitors’ drugs address, the outputs reveal several commercially useful signals.

Which competitor drugs have high AI share of voice in which therapeutic contexts. If a competitor’s drug is being consistently recommended first in a clinical scenario where your drug has comparable or superior data, that’s evidence of a content strategy gap: the competitor’s clinical narrative is better represented in the AI training corpus, and the gap will widen as AI use among HCPs grows.

Where competitor drugs are being described inaccurately. AI hallucinations about competitors’ safety profiles, dosing requirements, or patient population restrictions can create HCP misconceptions that work in your favor — or create false expectations that damage category credibility. Monitoring competitor AI mentions lets you prepare field teams to address either scenario factually.

Early signals of competitor pipeline positioning. Before a competitor drug receives approval, the clinical trial data, journal publications, and conference presentations that will eventually influence AI training data are already accumulating. Monitoring how AI systems describe competitor pipeline assets — even before approval — gives commercial teams early warning of the narrative they’ll be competing against.

Robust competitive intelligence built on pharma sales analytics enables insights such as quantifying territory or regional gains and losses for key brands, determining which products are disrupting competitors’ blockbusters, and tracking positive or negative impacts from safety issues. AI monitoring adds a new signal source to each of those intelligence objectives.

Voice of the Customer at Scale

Patient forums, social media, and HCP professional networks have long served as voice of the customer (VoC) sources for pharmaceutical companies. AI monitoring extends VoC in a specific and valuable direction.

When patients and physicians query AI chatbots about drugs, they reveal what they’re actually uncertain about, what safety concerns they’re trying to resolve, and what trade-offs they’re evaluating between treatment options. That query data — even without user-level identification — is a structured, high-volume VoC dataset that reflects real clinical decision points.

A patient asking ‘Can I take [Drug Name] if I have kidney disease?’ reveals an access barrier your field team may be able to address with nephrologist-targeted data. A physician asking ‘How does [Drug Name] compare to [Competitor] in patients over 75?’ reveals a clinical question where your medical affairs team may need to produce targeted evidence. An AI that consistently answers ‘The data in elderly patients is limited’ — even when your label includes relevant subgroup data — is creating a barrier to prescribing that a field team briefed on the issue can directly counter.

Natural language processing can be applied to analyze scientific literature to identify and report adverse effects, and NLP-based sentiment analysis techniques can assist in recommending drugs. Applying the same NLP capability to AI chatbot outputs — treating them as a structured corpus of patient and HCP intent signals — is a logical extension of existing pharmacovigilance infrastructure.

The practical workflow connects three functions that currently operate in silos: medical affairs, which holds the clinical evidence and the ability to produce field-usable data summaries; regulatory affairs, which needs to know when AI outputs create off-label promotion exposure or adverse event signal risk; and commercial operations, which needs query-type intelligence to brief field teams and inform promotional content strategy. AI output monitoring is the connective tissue between all three.

Field Team Applications: What Reps Actually Do With This

Abstract intelligence is worthless in pharmaceutical field sales. The question isn’t whether AI monitoring can generate interesting data — it’s whether field teams can act on it in the context of a 90-second pre-call preparation window or a two-minute physician conversation.

The answer requires that AI monitoring outputs be translated into specific, rep-usable formats.

Pre-call AI context briefs. Before visiting an oncologist who manages a relevant patient population, a rep should have a single-page summary of how AI systems are currently describing her drug in the specific cancer type that physician treats. If the AI is recommending a competitor first and citing a specific trial, the rep needs to know which trial, whether the comparison is current, and what the approved-labeling response is. That’s a 30-second brief, not a literature review.

Objection pattern libraries. AI-generated claims create predictable objection patterns. If Perplexity consistently tells physicians that Drug A has better renal safety than Drug B based on a 2021 trial that has since been superseded, physicians across a territory will surface that objection in similar terms. A field team that has catalogued the most common AI-generated objections in their therapeutic area — and prepared evidence-based responses to each — converts a systemic information problem into a sales tool.

Medical information escalation triggers. When AI monitoring surfaces a specific factual error that’s appearing across multiple AI platforms and query types, that’s a signal for a medical information response, not just a field team briefing. Medical affairs can develop rapid communications, update published data summaries, or engage with AI platform developers through emerging data correction channels. The field team’s role is surfacing the signal quickly; the response requires institutional coordination.

Territory-level AI share of voice reporting. Not all AI outputs are uniform across geographies. Perplexity’s real-time web retrieval means its outputs in a market with a dominant regional hospital system publishing critical data about your drug may differ from outputs in a market where that system’s publications don’t dominate local search. Territory-level AI monitoring gives regional sales managers a new lens on why certain territories are seeing consistent HCP resistance.

The true value lies in reps using generative AI in a CRM bot that draws on their company’s private data, so they can answer unique business questions without sacrificing accuracy or compliance. AI monitoring data, fed back into a rep-facing CRM intelligence layer, is how that value gets realized in the specific context of what the AI is saying about their drug.

The Compliance Architecture

Building an AI monitoring program in a regulated pharmaceutical environment requires addressing compliance at two levels: the legal framework governing the monitoring activity itself, and the compliance constraints on how monitoring outputs are used in field communications.

On the monitoring side, the primary concern is data handling. AI chatbot outputs are not patient data and don’t implicate HIPAA. But if the monitoring program also captures user query data from platforms where user information is available, that creates privacy obligations. The standard architecture — querying public AI interfaces with synthetic prompts that mirror real-world query patterns, rather than capturing actual user queries — avoids this exposure while still generating representative data.

On the field communications side, the constraint is that AI monitoring outputs can inform rep preparation but cannot become promotional materials. A rep who tells a physician “I know the AI said X, but our label says Y, and here’s the data” is having a factual conversation grounded in approved information. A rep who uses AI monitoring data to claim “AI recommends our drug” — even if that’s what the monitoring showed — has crossed into a promotional use that requires MLR review.

Veeva’s ‘Free Text Agent,’ launching in late 2025, analyzes text entry in real time. It flags non-compliant phrases and prompts the rep to revise the note before saving. Integrating AI monitoring outputs into the same CRM environment where compliance guardrails like this operate creates a coherent system rather than a bolt-on intelligence layer.

The FDA’s draft guidance centers on a risk-based credibility model. This means the level of scrutiny applied to an AI system depends on how influential it is in regulatory decision-making. Monitoring AI outputs about your drugs — and documenting that monitoring — is evidence of the responsible AI governance posture the FDA is signaling it expects from sponsors.

Implementation: Where to Start

Most pharmaceutical commercial teams trying to build AI monitoring capability should resist the urge to start with technology. The right starting sequence is:

Define the query taxonomy first. Work with medical affairs and regulatory to build the library of clinical queries that matter most in your therapeutic area. Prioritize the scenarios most likely to drive HCP prescribing decisions, the queries where inaccurate AI outputs carry the highest safety risk, and the competitive scenarios where AI share of voice directly affects market dynamics. A well-constructed query library of 50–100 queries per therapeutic area gives you the foundation everything else runs on.

Run a baseline audit. Before building any recurring monitoring capability, conduct a point-in-time audit across the four or five AI platforms with the highest HCP user penetration. Document what each platform currently says about your drug across each query category. This baseline tells you where your most urgent concerns are, which platforms carry the highest risk, and what the current competitive landscape looks like in AI outputs. It also gives you a before-state against which to measure the impact of any content or medical education interventions.

Establish a cross-functional response team. AI monitoring only generates value if there’s an organizational mechanism to act on what it surfaces. That requires a standing team with representatives from commercial, medical affairs, regulatory, and legal — with defined response protocols for each class of monitoring output. Adverse event signals go to pharmacovigilance within a defined window. Off-label promotion risks go to regulatory for legal review. Competitive intelligence goes to commercial and field leadership. Factual errors go to medical affairs for content response development.

Integrate with existing field intelligence infrastructure. For most large pharma companies, that means Veeva CRM and IQVIA. In 2025, IQVIA began allowing its data to flow natively into Veeva’s CRM, cementing its status as the industry’s data backbone. AI monitoring outputs structured to flow into the same data layer as IQVIA’s prescription and engagement data will reach field teams through the pre-call planning workflows they already use.

Set a monitoring cadence. AI models update their knowledge through fine-tuning cycles, retrieval corpus updates, and new model releases. A monitoring program that runs quarterly won’t catch the impact of a major model update or a new competitor publication that changes AI outputs mid-quarter. Weekly monitoring of high-priority queries with monthly comprehensive audits is a reasonable starting cadence for most therapeutic areas.
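The response protocols and cadence described in this sequence could be encoded as a small routing table plus a due-date check. The sketch below is illustrative only: the flag names, the `route` and `is_due` helpers, and the 7-day and 30-day intervals are assumptions that approximate the weekly and monthly cadence suggested above, and a real program would add the defined response windows each function commits to.

```python
from datetime import date, timedelta

# Flag category -> owning function, following the protocols described above.
# The category keys are hypothetical labels.
ROUTING = {
    "adverse_event_signal": "pharmacovigilance",
    "off_label_mention": "regulatory",
    "competitive_intelligence": "commercial",
    "factual_error": "medical_affairs",
}

# Assumed intervals: weekly for high-priority queries, roughly monthly otherwise.
CADENCE_DAYS = {"high": 7, "standard": 30}


def route(flag: str) -> str:
    """Return the function that owns a flagged output; fail loudly on unknown flags."""
    try:
        return ROUTING[flag]
    except KeyError:
        raise ValueError(f"Unknown flag {flag!r}; add it to ROUTING before use")


def is_due(priority: str, last_run: date, today: date) -> bool:
    """True when a query of the given priority should be re-run."""
    return today - last_run >= timedelta(days=CADENCE_DAYS[priority])


print(route("factual_error"))
print(is_due("high", date(2025, 1, 1), date(2025, 1, 8)))
print(is_due("standard", date(2025, 1, 1), date(2025, 1, 8)))
```

Raising on an unknown flag, rather than defaulting to a catch-all owner, keeps a new class of output from being silently dropped before the cross-functional team has agreed who handles it.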

The Content Strategy Corollary

Monitoring what AI says about your drug is one side of the equation. Influencing what AI will say about your drug in the future is the other, and it belongs in a companion strategy.

The pharmaceutical brands that appear most frequently and authoritatively in AI training and retrieval data are the ones AI mentions. That means a content strategy designed to increase the frequency, authority, and accuracy of drug-related information in the sources AI systems use for training and retrieval is a direct lever on future AI outputs.

For pharmaceutical companies, this means:

Publishing clinical data in formats and outlets that feed AI training corpora. PubMed-indexed publications carry more weight than press releases. FDA submission documents, once public, become training data. Structured data in clinical trial registries is more parseable by AI systems than narrative descriptions.

Building product information pages with structured, factual content that AI extraction systems can parse. Disclaimer-heavy pages with little structured, citable data are essentially invisible to AI extraction, so reformatting existing label information and clinical summaries in machine-readable structures is a low-cost, high-impact content intervention.

Ensuring patient education materials, FAQ pages, and medical professional resources are indexed and citable in the formats that retrieval-augmented AI systems pull from. Perplexity and similar platforms that use live web retrieval weight source authority — an FDA-approved patient medication guide from your company’s domain will carry more authority than an unvetted health information site.

None of this is gaming the AI system. It’s ensuring that the accurate, approved information about your drug is present in the sources AI relies on, rather than leaving the field to whatever the internet has already written.
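One possible reading of "machine-readable structures" is schema.org markup embedded in a product page as JSON-LD. The sketch below generates a minimal `Drug` record using property names from the schema.org vocabulary. Whether any given AI retrieval system actually consumes this markup is an assumption the article does not establish, and the `drug_jsonld` helper, the drug names, and the example.com URL are placeholders.

```python
import json
from typing import Optional


def drug_jsonld(name: str, generic_name: str, dosage_form: str,
                label_url: str, warning: Optional[str] = None) -> str:
    """Build a minimal schema.org Drug record as a JSON-LD string."""
    doc = {
        "@context": "https://schema.org",
        "@type": "Drug",
        "name": name,
        "nonProprietaryName": generic_name,
        "dosageForm": dosage_form,
        "prescribingInfo": label_url,  # link to the approved labeling
    }
    if warning:
        doc["warning"] = warning
    return json.dumps(doc, indent=2)


# Placeholder values for illustration only.
print(drug_jsonld("DrugA", "examplinib", "tablet",
                  "https://example.com/druga/prescribing-information"))
```

Any content published this way should come from approved labeling and go through the same review as the page it sits on.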

Where DrugChatter Fits

DrugChatter is purpose-built for this specific operational need: tracking AI mentions of pharmaceutical products across major chatbot platforms and converting those mentions into actionable intelligence for commercial and regulatory teams.

The platform’s core value is reducing the gap between what AI systems say about a drug and what the commercial organization knows about what AI systems say. That gap is currently wide — most pharma companies have no systematic view into their drug’s AI presence — and it’s commercially and regulatorily costly.

For field sales support specifically, DrugChatter’s monitoring outputs feed into pre-call briefings that give reps therapeutic-area-specific AI context without requiring them to run their own AI queries or interpret raw chatbot outputs. The commercial intelligence layer — AI share of voice by query type, competitive drug mention tracking, and trending claim analysis — gives sales operations a new signal source for territory planning and HCP targeting prioritization.

For regulatory teams, the platform’s flagging of off-label AI mentions, safety information omissions, and factual inaccuracies creates an early warning system that complements existing pharmacovigilance workflows. The documentation it generates also supports the audit trail that FDA’s evolving AI governance guidance will increasingly require.

The Adoption Curve Is Shorter Than It Looks

Pharmaceutical companies that have evaluated AI monitoring for field sales support frequently encounter internal resistance framed as “this is too new” or “we don’t know how to measure the ROI.” Both objections are weaker than they appear.

On newness: the market for AI in pharmaceuticals is expected to grow from $1.94B in 2025 to $16.49B by 2034, a CAGR of roughly 27%. The market is not early-stage. AI chatbot use by physicians for clinical reference is documented and growing. The question isn’t whether AI is shaping HCP decision-making; it’s whether your commercial team knows what those AI systems are saying.

On ROI: the measurement framework is straightforward. Baseline the frequency of AI-related objections in post-call notes before deploying monitoring-informed briefings. Measure the change after deployment. Track territory-level prescription trends in markets where reps receive AI monitoring context against matched markets where they don’t. These are standard A/B measurement approaches that pharma companies apply to every other promotional intervention.
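The before/after comparison against matched markets can be worked as a simple difference-in-differences calculation. That is a named technique substituted here for the "A/B" framing above, because it nets out changes that would have happened without the briefings. The call counts and function names below are invented for illustration.

```python
def objection_rate(ai_objection_calls: int, total_calls: int) -> float:
    """Share of logged calls whose notes record an AI-related objection."""
    if total_calls <= 0:
        raise ValueError("total_calls must be positive")
    return ai_objection_calls / total_calls


def diff_in_diff(treated_before: float, treated_after: float,
                 control_before: float, control_after: float) -> float:
    """Change in briefed territories minus change in matched unbriefed territories."""
    return (treated_after - treated_before) - (control_after - control_before)


# Hypothetical counts: objections / calls, before and after deployment.
treated_before = objection_rate(40, 200)   # briefed territories, baseline
treated_after = objection_rate(24, 200)    # briefed territories, post-deployment
control_before = objection_rate(38, 200)   # matched territories, baseline
control_after = objection_rate(36, 200)    # matched territories, same later period

effect = diff_in_diff(treated_before, treated_after, control_before, control_after)
print(round(effect, 3))
```

A negative value means objection rates fell more in briefed territories than in the matched ones. With real data the estimate would also need a significance test and enough calls per territory to be meaningful.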

Early adopters of AI-driven sales strategies report 20% improvements in marketing effectiveness. AI monitoring adds a specific intelligence input to that strategy at relatively low incremental cost compared to the field force infrastructure it supports.

The companies that move first on systematic AI monitoring will build something more durable than a technology advantage. Organizational learning and the confidence to act on AI-generated insight compound over time. The first-mover advantage here is operational fluency: knowing how to interpret AI monitoring signals, how to route them to the right functions, and how to translate them into field actions. That fluency takes time to build, and the companies that start building it now will be harder to catch.

Key Takeaways

AI chatbots are already shaping how physicians and patients think about your drug. In one 2023 study, roughly half of ChatGPT’s answers to drug-interaction queries were inaccurate or incomplete, and your field team has no current view into what those inaccuracies are.

The FDA has deployed its own AI surveillance tools and issued enforcement letters targeting AI-generated health content. The regulatory exposure from unmonitored AI mentions of your drug is real, not theoretical.

AI share of voice is the new brand metric that predicts field sales friction. A drug that’s absent from or misrepresented in AI outputs faces an invisible headwind in every HCP conversation.

Systematic AI output monitoring requires four components: a clinical query taxonomy built with medical affairs and regulatory, multi-platform query execution, output classification by regulatory and commercial risk category, and field-ready intelligence packaging that fits into existing CRM pre-call workflows.

Monitoring is one side of the equation. A companion content strategy — ensuring accurate, structured drug information is present in the sources AI systems use — is what changes the AI outputs over time.

Cross-functional ownership is non-negotiable. AI monitoring data flows to pharmacovigilance (adverse event signals), regulatory (off-label risk), medical affairs (factual error response), and commercial (field briefings and competitive intelligence). No single function owns it alone.

DrugChatter is designed specifically for this operational need — tracking AI mentions across platforms, classifying outputs by risk and commercial relevance, and packaging intelligence for field team use.

The companies that build this capability now will develop organizational fluency in AI commercial intelligence that compounds as AI use among HCPs continues to grow. The companies that wait will spend years chasing a gap that started small and widened while they deliberated.

FAQ

Q: If AI-generated drug misinformation isn’t our company’s speech, why does it create a regulatory exposure for us?

A: The FDA is applying a substance-over-form analysis. If AI outputs about your drug consistently describe off-label uses — even if those outputs are generated entirely by the AI from public sources — the agency can examine whether company-generated publications, press materials, or digital content contributed to the training data that produced those outputs. The closer the AI’s off-label claim traces to something your company published, the more exposure you have. Monitoring lets you identify those traces before the FDA does.

Q: How do you prevent field reps from using AI monitoring data in promotional conversations in ways that create compliance problems?

A: The cleanest approach is to surface monitoring intelligence through the pre-call planning layer of the CRM rather than as a separate rep-facing tool. When AI monitoring data is integrated into the same environment where compliance guardrails already operate — flagging non-compliant free-text entries, enforcing approved messaging — the information becomes a prep input rather than a promotional claim. The rep knows the AI context going in; they don’t quote the monitoring data to the physician.

Q: How often do major AI platforms update their outputs about specific drugs?

A: It varies by platform architecture. Models with fixed training cutoffs (like older versions of GPT-4) update only when a new model version is released. Retrieval-augmented generation (RAG) platforms like Perplexity update effectively in real time, based on what their web retrieval surfaces. This means a single monitoring cadence applied uniformly across platforms will miss real-time changes on RAG-based systems. A robust program monitors RAG platforms more frequently (weekly, or even daily for high-priority queries) while auditing fixed-knowledge-cutoff models on a monthly cadence.
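The tiered cadence described above can be sketched as a small scheduling rule. This is a minimal illustration under assumptions, not a reference implementation: the `MonitoredQuery` type, the `CADENCE_DAYS` table, the platform-type labels, and the example queries are all hypothetical, and the intervals simply mirror the daily, weekly, and monthly figures in the answer.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical cadence table: days between runs, keyed by
# (platform type, query priority). Values follow the answer above.
CADENCE_DAYS = {
    ("rag", "high"): 1,             # daily for high-priority RAG queries
    ("rag", "normal"): 7,           # weekly for other RAG queries
    ("fixed_cutoff", "high"): 30,   # monthly audit
    ("fixed_cutoff", "normal"): 30,
}


@dataclass(frozen=True)
class MonitoredQuery:
    text: str
    platform_type: str  # "rag" or "fixed_cutoff"
    priority: str       # "high" or "normal"
    last_run: date


def is_due(query: MonitoredQuery, today: date) -> bool:
    """True if the query's cadence interval has elapsed since its last run."""
    days = CADENCE_DAYS[(query.platform_type, query.priority)]
    return today - query.last_run >= timedelta(days=days)


today = date(2025, 6, 15)
queries = [
    MonitoredQuery("drug X dosing in renal impairment", "rag", "high", date(2025, 6, 14)),
    MonitoredQuery("drug X vs drug Y efficacy", "rag", "normal", date(2025, 6, 10)),
    MonitoredQuery("drug X approved indications", "fixed_cutoff", "high", date(2025, 5, 1)),
]
due = [q.text for q in queries if is_due(q, today)]
print(due)
```

Keeping the cadence in a lookup table rather than in branching logic makes it easy to tighten an interval for a single platform type or priority tier without touching the scheduling code.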

Q: Can a pharmaceutical company submit corrections to AI platforms when their drug is misrepresented?

A: The major AI developers have feedback mechanisms, but they are not structured for pharmaceutical correction requests and do not operate on a timeline aligned with commercial urgency. The more reliable lever is the content strategy approach — increasing the presence and authority of accurate drug information in the sources that feed AI systems, so that future model updates reflect that information. Some platforms that use RAG can respond faster to corrections on high-authority source domains. The FDA’s evolving relationship with AI developers may eventually create a formal pharmaceutical correction channel, but it doesn’t exist at scale today.

Q: Does the volume of AI chatbot use by physicians actually justify building a dedicated monitoring capability?

A: The data on physician AI adoption has moved past the “emerging trend” framing. A 2024 survey by the American Medical Association found that a majority of physicians were using AI tools in clinical practice. The question isn’t whether your physicians are using AI chatbots (they are); it’s whether you know what those chatbots are telling them. In a sales environment where fewer than one-third of rep calls are rated valuable by physicians and nearly 90% of interactions last under two minutes, any intelligence input that helps a rep address the specific information environment shaping that physician’s thinking is worth the investment.