Every time ChatGPT answers a drug question, it may be doing something no FDA-approved ad campaign ever could — and your compliance team has no idea it’s happening.

The morning of September 9, 2025 was not a good day to be a pharma brand manager. Before noon, the U.S. Department of Health and Human Services and FDA had jointly announced a sweeping crackdown on direct-to-consumer drug advertising, and approximately 100 cease-and-desist letters were on their way to pharmaceutical companies. What grabbed regulatory attorneys’ attention was not the volume of the enforcement action — it was the method. FDA explicitly stated it had used ‘AI and other tech-enabled tools to proactively surveil and review drug advertisements.’

The regulator had deployed the same technology that drug companies were rushing to adopt — and pointed it in the opposite direction.

That symmetry is the defining feature of pharma’s current compliance reality. AI is simultaneously the surveillance tool, the promotional channel, the source of uncontrolled drug claims, and the instrument of regulatory enforcement. Drug companies that treat those four roles as unrelated problems are operating with a dangerously incomplete picture.

This article maps what AI-generated drug content actually looks like from a regulatory standpoint, where the liability lines fall, and what monitoring systems like DrugChatter are doing to help drug companies track AI-generated brand mentions before a regulator does it for them.

Part One: The Enforcement Landscape Has Already Shifted

FDA’s September 2025 Blitz and What It Signals

The September 9 enforcement wave was not routine. With it, FDA reached what ProPharma Group described as the highest annual total of enforcement letters in nearly 25 years, and then kept going. On September 16, FDA posted 40 Untitled Letters and 8 Warning Letters. On September 25, another 11 letters from the same batch appeared. By early 2026, OPDP had issued eight additional Untitled Letters within a single month, focused on DTC television advertising.

The letters themselves were familiar in substance: omitted or minimized risk information, unsubstantiated efficacy claims, misleading net impressions, and visual presentations that buried the major statement under upbeat music and fast-paced imagery. What differed was scale, speed, and method.

For the first time, enforcement letters were signed at the director level — by CDER Director Dr. Tidmarsh and CBER Director Dr. Prasad directly — rather than through OPDP’s standard chain. And FDA committed explicitly to ‘aggressively deploying its available enforcement tools,’ with a stated plan to scale enforcement to ‘hundreds of letters each year’ if initial actions failed to change behavior.

The mechanism behind that scale commitment is AI surveillance. With OPDP’s Policy Division eliminated in April 2025 and senior leadership departing in the same restructuring, the agency faced a peculiar situation: intensifying enforcement with a smaller review staff. AI tools fill that gap, at least partially. Whether those tools introduce their own error rate into FDA’s enforcement letters remains an open question. Regulatory attorneys have noted that issuing dozens of letters at once, each requiring a response within 15 business days, makes errors in FDA’s own assessments more than a theoretical possibility.

What is not theoretical: the agency is now doing systematically what any regulator with access to a web scraper and a large language model can do — reading everything, at scale, continuously.

OPDP’s New Surveillance Scope

FDA’s enforcement posture has expanded well past broadcast television. The September 2025 letters cited violations across HCP websites, corporate webpages, influencer content, earned media placements, and patient testimonials. A subsequent fact sheet indicated FDA intends to ‘close digital loopholes’ by expanding oversight to encompass ‘all social media promotional activities,’ including influencer partnerships and sponsored content.

That expansion matters because it signals the regulatory perimeter is no longer defined by format or channel. What matters is whether the content constitutes promotion of an FDA-regulated drug, and whether that promotion meets the requirements of truthfulness, fair balance, and consistency with approved labeling.

Apply that logic to AI-generated drug content, and the regulatory exposure expands considerably.

Part Two: What AI Actually Says About Your Drug

The Hallucination Problem Is Not a Future Risk

A 2023 study published in JAMA Internal Medicine found that ChatGPT provided inaccurate or incomplete information in approximately 47% of drug-interaction queries. A separate Stanford evaluation found AI chatbots hallucinated non-existent drug interactions roughly 18% of the time — fabricating contraindications that appear in no medical literature. The Vectara Hallucination Index measured factual accuracy across major LLMs and found error rates ranging from 3% to 27%, with medical and pharmaceutical content consistently at the higher end.

More directly: when a patient asks ChatGPT about your drug, the figures above suggest a substantial chance, plausibly on the order of one in three, that the response contains at least one clinically meaningful error.

Those errors cluster in predictable categories. AI frequently provides outdated or incorrect dosage information — if FDA approves a dosage change, that change may not appear accurately in chatbot responses for months or years after approval. AI commonly overstates or understates approved indications; a drug cleared for three specific cancer subtypes may be described as effective for a broader category, based on early-stage trial speculation that the model treated as established fact. New indications may not appear at all. In the biosimilar space — where the U.S. market reached $13.2 billion in 2023 per IQVIA — AI systems frequently confuse biosimilars with their reference biologics, merge pricing information across products, or misstate interchangeability designations.

The worst case for AI drug information is fabricated clinical trial results: plausible-sounding but entirely invented efficacy percentages, trial sizes, and endpoint data, stated with the confidence of a reviewed label.

<blockquote> ‘People have a very high degree of trust in ChatGPT, which is interesting, because AI hallucinates. It should be the tech that you don’t trust that much.’ Sam Altman, CEO of OpenAI, as cited in PMC research on AI drug information limitations (2025) </blockquote>

The Training Data Lag Problem

AI training data has a lag of months to years relative to real-world events. A drug approved by FDA in 2025 may not appear accurately in ChatGPT’s responses until 2026 or later — and that assumes the model encounters sufficient post-approval content during a future training cycle. During that gap, patients and clinicians asking about a new treatment receive either silence or information sourced from pre-approval speculation — clinical trial interim results, analyst projections, or advocacy group estimates, none of which carry the legal weight of an approved label.

For recently launched products — exactly the drugs that benefit most from patient awareness — this gap directly undermines market access. The mechanism is structural, not correctable through better press releases.

What AI Does Not Include

FDA’s promotional framework requires that any drug claim be accompanied by fair balance: material risks must accompany material benefits, the major statement of risks must be clear, conspicuous, and neutral, and all claims must be consistent with approved labeling. AI responses comply with none of these requirements, because AI responses are not promotional materials — they are generated content from a training corpus.

When ChatGPT describes a drug as ‘highly effective’ for a condition for which it has only exploratory trial data, that is not a regulatory violation by the manufacturer. It is something more complicated: the functional equivalent of off-label promotion, happening at scale, generated by a third party, and reaching millions of patients with no fair balance, no major statement, no MLR review, and no Form FDA-2253 submission.

The adverse event reporting chain is equally unclear. If a patient takes a drug based on AI-generated information that omits a boxed warning, experiences a serious adverse event, and reports it — what is the manufacturer’s obligation? FDA’s MedWatch framework does not account for AI-intermediated drug information. That gap will close through enforcement or rulemaking, not through inaction.

Part Three: The Regulatory Gray Zone

When AI Becomes Promotion — and When It Might Become Your Problem

The FD&C Act’s prohibition on misbranding applies to ‘labeling’ — materials that accompany a drug or are used in connection with its distribution. Courts and FDA have interpreted ‘in connection with’ broadly, to encompass materials that are functionally promotional even if not directly produced by the sponsor.

The harder question is whether AI-generated content about a drug, produced by a general-purpose model with no relationship to the sponsor, can ever trigger sponsor liability.

Two scenarios carry genuine risk. First: a manufacturer’s own AI-powered tools — chatbots on branded websites, HCP engagement platforms, or patient support applications — generate content about approved drugs. If that content hallucinates a clinical result or suggests efficacy in an unapproved indication, the manufacturer faces strict liability for misbranding under the FD&C Act. Per analysis from Zelthy’s compliance research, OPDP has committed to using AI tools to proactively surveil digital promotional content, explicitly including ‘AI-generated health content and chatbot interactions.’ The regulatory arms race runs in both directions.

Second: manufacturer-sponsored content that feeds AI models — white papers, press releases, disease-awareness materials, key opinion leader presentations — shapes what AI systems say about drugs downstream. If that upstream content makes off-label claims, and those claims propagate into AI responses, the connection between sponsor activity and AI output is traceable. The regulatory theory is not settled, but it is not implausible.

What is clear from Pharmaphorum’s analysis of the current compliance environment: ‘When a surgeon asks ChatGPT about a surgical robotics platform, or a patient queries Perplexity about medication interactions, those AI systems generate responses by paraphrasing, compressing, and redistributing published information. The organisation has no visibility into whether these AI-generated representations are faithful to approved labelling, whether clinical claims are accurately stated, or whether required safety information is included.’

Prescription Drug Use-Related Software and the PDURS Framework

FDA’s 2023 draft guidance on Prescription Drug Use-Related Software established a specific regulatory category for software disseminated by or on behalf of a drug sponsor that generates end-user output related to the sponsor’s prescription drugs. That output — screen displays, sounds, other presented content — is regulated as prescription drug labeling, either promotional or FDA-required.

Generative AI applications that a sponsor deploys to support patient adherence, medication information, or HCP education fall squarely within this definition. The regulatory implications are substantial: a generative AI tool deployed by a drug sponsor is not a general-purpose chatbot. It is labeling. Its outputs require the same review, the same fair balance, and the same consistency with approved indications that any other promotional material requires.

Sponsors building AI-powered patient engagement tools who have not accounted for the PDURS framework are carrying unquantified regulatory exposure.

The Social Media Loophole — and Its AI Equivalent

The structural problem that AI creates for pharmaceutical promotion is not new in kind, only in scale. Pharmaphorum’s March 2026 analysis of PPC advertising documented an earlier version: drug companies running paid search campaigns on keywords related to off-label use — keywords like ‘weight loss’ for semaglutide — without making direct claims. Because the ad contained only a brand name and a link, it did not technically constitute off-label promotion, even when appearing alongside searches explicitly about unapproved uses.

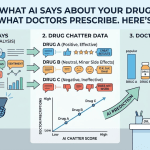

That structural gap — promotional effect without promotional content — defines AI drug mentions. The top 10 pharmaceutical companies by revenue account for approximately 90% of all AI brand mentions in treatment-related queries, per analysis across hundreds of queries to ChatGPT, Perplexity, Gemini, Claude, and Grok. Mid-size biotechs with FDA-approved drugs treating millions of patients are effectively invisible. Specialty pharma and emerging biotech receive no meaningful AI representation.

That invisibility gap has direct patient consequences, but the promotional risk cuts the other way for large brands: high AI visibility means high AI inaccuracy exposure. The same volume of mentions that creates market presence creates the statistical likelihood of a significant percentage of clinically wrong responses.
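A rough way to see why volume matters: if each response carries some error rate, the expected number of wrong responses grows linearly with mention volume, and the chance that at least one response is wrong approaches certainty quickly. The Python sketch below is illustrative arithmetic only. The independence assumption and the sample volumes are hypothetical; the 3% and 27% rates are the two ends of the error-rate range cited earlier in this article.

```python
def expected_errors(n_responses: int, error_rate: float) -> float:
    """Expected count of erroneous responses among n_responses."""
    return n_responses * error_rate


def prob_at_least_one_error(n_responses: int, error_rate: float) -> float:
    """Chance that at least one response is wrong.

    Assumes each response errs independently with the same rate,
    which is a simplification made for illustration.
    """
    return 1.0 - (1.0 - error_rate) ** n_responses


if __name__ == "__main__":
    # Hypothetical mention volumes; rates are the cited 3% and 27% bounds.
    for rate in (0.03, 0.27):
        for volume in (10, 100, 10_000):
            print(
                f"rate={rate:.0%} volume={volume}: "
                f"expected errors={expected_errors(volume, rate):.1f}, "
                f"P(at least one)={prob_at_least_one_error(volume, rate):.4f}"
            )
```

The point of the sketch is directional: a brand mentioned in far more responses does not need a higher per-response error rate to accumulate far more erroneous statements.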

Part Four: What a Monitoring Framework Actually Requires

Tracking Brand Mentions at Scale

The first requirement for managing AI-related promotional risk is visibility. A company cannot respond to what it cannot see — and most pharmaceutical companies currently have no systematic mechanism for monitoring what AI systems say about their branded and generic drugs.

That monitoring problem is structurally different from traditional brand surveillance. Search engine optimization and social media monitoring capture human-generated content. AI outputs are generative — they are not stored anywhere and do not appear in standard web crawls. Every response is unique, ephemeral, and context-dependent. The same query asked to ChatGPT with slightly different phrasing may produce meaningfully different clinical claims.
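One way to make that variability measurable is to ask the same question several times and compare the captured answers. The Python sketch below uses the standard library's difflib to score pairwise text similarity. It is a crude proxy, not a clinical comparison: surface similarity says nothing about whether two answers make the same medical claims, and the sample responses are invented for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations


def pairwise_similarity(responses: list[str]) -> list[float]:
    """Similarity ratio (0.0 to 1.0) for every pair of captured responses."""
    return [
        SequenceMatcher(None, first, second).ratio()
        for first, second in combinations(responses, 2)
    ]


def min_similarity(responses: list[str]) -> float:
    """Lowest pairwise similarity; 1.0 when there is nothing to compare."""
    scores = pairwise_similarity(responses)
    return min(scores) if scores else 1.0


if __name__ == "__main__":
    # Invented answers to the same hypothetical prompt.
    samples = [
        "Take one tablet daily with food.",
        "Take one tablet daily with food.",
        "Dosing varies; some patients take two tablets daily.",
    ]
    print(f"lowest pairwise similarity: {min_similarity(samples):.2f}")
```

A low minimum score flags a prompt whose answers deserve human review; it does not by itself indicate which answer, if any, is wrong.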

Effective AI brand monitoring requires a systematic querying approach: structured sets of patient-intent and HCP-intent prompts directed at major AI platforms — ChatGPT, Perplexity, Google’s AI Overviews, Gemini, Claude, Grok — at regular intervals, with output capture and comparison against approved labeling. DrugChatter was built specifically for this use case, tracking AI-generated drug mentions across platforms to give pharmaceutical companies visibility into what AI is saying about their drugs, what is accurate, what is inaccurate, and where claims drift from label-approved language.

The monitoring goal is twofold: identify inaccuracies before a regulator or plaintiff does, and track off-label mentions as part of ongoing pharmacovigilance.
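The systematic querying approach described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how DrugChatter or any other product works: the platform names, the prompts, and the `ask` callable are all hypothetical, and a real deployment would replace `fake_ask` with each platform's own client under that platform's terms of use.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Capture:
    platform: str
    intent: str            # "patient" or "hcp"
    prompt: str
    response: str
    captured_at: str       # ISO 8601 timestamp in UTC
    response_sha256: str   # fingerprint of the exact text captured


def run_monitoring_cycle(
    platforms: list[str],
    prompts_by_intent: dict[str, list[str]],
    ask: Callable[[str, str], str],
) -> list[Capture]:
    """Send every prompt to every platform once and record each output."""
    captures = []
    for platform in platforms:
        for intent, prompts in prompts_by_intent.items():
            for prompt in prompts:
                response = ask(platform, prompt)
                captures.append(
                    Capture(
                        platform=platform,
                        intent=intent,
                        prompt=prompt,
                        response=response,
                        captured_at=datetime.now(timezone.utc).isoformat(),
                        response_sha256=hashlib.sha256(
                            response.encode("utf-8")
                        ).hexdigest(),
                    )
                )
    return captures


def fake_ask(platform: str, prompt: str) -> str:
    """Stand-in for a real platform client (hypothetical)."""
    return f"[{platform}] canned answer to: {prompt}"


if __name__ == "__main__":
    cycle = run_monitoring_cycle(
        platforms=["PlatformA", "PlatformB"],
        prompts_by_intent={
            "patient": ["What is Examplex used for?"],
            "hcp": ["What is the recommended dose of Examplex?"],
        },
        ask=fake_ask,
    )
    print(f"captured {len(cycle)} responses")
```

Running the cycle on a schedule and storing every capture, rather than only the flagged ones, is what allows later comparison over time.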

What Accurate AI Drug Information Requires

AI training data quality is the upstream variable that determines downstream AI response accuracy. AI systems learn from patterns in their training corpus — medical journals, regulatory filings, news articles, patient forums, and health websites. If the highest-quality sources for a drug are the FDA label, clinical trial publications, and peer-reviewed reviews, and those sources are accurately indexed, a well-trained model will produce more accurate responses.

The inverse is equally true. If the dominant web content about a drug consists of off-label forum discussions, pre-approval speculation, and incorrect third-party summaries, the model will learn from those patterns.

Pharmaceutical companies that understand this mechanism can take structural action: publishing high-quality, accurate, FDA-consistent content across authoritative channels, ensuring that approved labeling is accessible and machine-readable, and monitoring what third-party sources say about their drugs so that persistent errors can be addressed at the source. Fixing the source fixes the AI, eventually — because AI models retrain on updated data.

The lag remains real. But proactive content management at least shortens the error window.

Pharmacovigilance and the Adverse Event Reporting Gap

FDA’s MedWatch system was designed for a world where patients received drug information from prescribers, pharmacists, and manufacturer-controlled materials. It has no framework for adverse events that follow from AI-generated drug information — the dose the AI recommended, the interaction the AI missed, the contraindication the AI invented.

Pharma companies need to anticipate the regulatory resolution of this gap, because it will arrive through enforcement or rulemaking, and the terms will be set by whoever shapes the early cases. Companies that have already built AI monitoring infrastructure will be better positioned to respond to adverse event reports that mention AI as an information source — and to demonstrate due diligence if a report reaches litigation.

The liability exposure here is not symmetric. If a patient experiences a serious adverse event and the plaintiff’s attorney can demonstrate that an AI system owned or sponsored by the manufacturer provided inaccurate safety information, the legal argument does not require proving the AI’s output was promotional in the traditional sense. It requires proving the manufacturer had responsibility for the tool’s outputs. That is a significantly lower bar.

Part Five: The FDA’s Own AI Problem

Elsa, the Agency’s Internal LLM

In June 2025, FDA deployed an agency-wide large language model assistant called ‘Elsa,’ built on Anthropic’s Claude, to support internal scientific review and inspection planning. Elsa summarizes adverse events, performs label comparisons, assists with clinical protocol review, and generates code for internal databases. It operates in a secure GovCloud environment without training on sponsor submissions.

FDA’s use of AI for internal review is separate from its use of AI for promotional surveillance — but both carry implications for pharmaceutical companies. On the internal side, Elsa may shorten review timelines, which incrementally favors well-structured, machine-readable submission materials. On the surveillance side, FDA’s AI tools review promotional content at a scale no human workforce could sustain — which means the volume of potential violations that reach enforcement level is growing.

The Bipartisan Policy Center’s November 2025 analysis noted that FDA staffing levels were down approximately 2,500 employees — nearly 15% — from 2023, while enforcement activity was accelerating. AI is compensating for headcount reductions in a regulatory context where the stated enforcement posture is expanding, not contracting.

Regulatory attorneys at Latham & Watkins raised the specific concern that simultaneous issuance of dozens of enforcement letters may increase the risk that those letters contain errors and factual inaccuracies — and that companies receiving letters will face resource constraints in responding within 15 business days. The current environment creates legal uncertainty in both directions: FDA may enforce incorrectly, and companies may not have enough time to respond substantively.

OPDP in Flux

OPDP’s elimination of its Policy Division in April 2025 and the subsequent departure of senior leadership created what ProPharma Group described as an environment where ‘enforcement is intensifying even as advisory clarity is declining.’ Companies face more unpredictable regulatory scrutiny with less advance guidance on expectations.

That uncertainty changes the compliance calculus for medical, legal, and regulatory teams. When guidance is stable, MLR teams can build review processes around documented agency expectations. When guidance is in flux and the same OPDP that issues enforcement letters has also lost the staff who wrote the policy frameworks underlying those letters, the compliance standard becomes harder to apply consistently.

The practical implication: companies need tighter internal governance, more conservative claim review, and greater documentation of their MLR process — specifically to demonstrate, in the event of an enforcement action, that review was thorough and good-faith.

Part Six: Advertising in AI — The Coming Commercial Dimension

OpenAI’s Ad Plans and What They Mean for Pharma

In March 2026, OpenAI formally announced it would introduce advertising into ChatGPT. The cultural and business implications became immediately apparent when Anthropic responded with Super Bowl spots for Claude specifically positioning an ad-free experience as a competitive advantage. Claude’s daily active users rose 11% following the game, and site visits jumped 6.5%.

That dynamic — one major AI platform introducing advertising while another positions its absence as a differentiator — defines the commercial landscape pharma companies are entering.

Traditional DTC advertising depends on a framework FDA built for broadcast television and print: a major statement, fair balance, clear risk disclosure, submission on Form FDA-2253. None of those requirements exist in the advertising frameworks being developed by AI platforms. A pharmaceutical ad appearing in a ChatGPT response operates in the same regulatory gray zone that Pharmaphorum documented for PPC advertising: promotional effect, unclear content standards.

The FDA enforcement framework has consistently held that all promotional channels are within scope. The September 2025 letters cited violations across television, websites, influencer content, and social media. There is no logical basis on which AI advertising would be exempt — but the specific guidance that would define what compliant AI advertising looks like does not yet exist.

Gartner projects a 25% drop in traditional search volume by 2026. Rock Health’s 2024 Digital Health Consumer Survey found that 27% of U.S. consumers had already used generative AI tools for health-related questions. Deloitte projects AI-powered health assistants will handle 35% of initial patient treatment queries by 2028. The channel is real, growing, and currently unregulated for promotional content — which means early movers face both opportunity and compliance uncertainty simultaneously.

The Zero-Click Problem

As Will Reese, Chief Innovation Officer at Inizio Evoke, noted at Fierce Pharma Week 2025, ‘zero-click’ searches — where users receive AI-generated summaries without clicking through to original sources — make it harder for brands to maintain visibility and control over their messaging. Patients receive synthesized drug information without ever visiting a manufacturer’s website, accessing full prescribing information, or encountering the fair balance language that appears on approved promotional materials.

From a brand perspective, zero-click AI responses represent a fundamental disruption of the DTC content funnel. From a regulatory perspective, they represent millions of drug information interactions that occur entirely outside the supervised promotional framework.

The drug company cannot audit what it cannot see. An AI Overview on Google, a Perplexity summary, a ChatGPT response to a patient’s question about their new prescription: none of these appear in the monitoring systems that most pharmaceutical brand and compliance teams currently use.

Part Seven: What Pharma Companies Should Be Doing Now

Building an AI Brand Monitoring Protocol

The starting point is systematic monitoring of what AI platforms say about your drugs. That means structured querying — not occasional manual checks, but regular, documented queries across major AI platforms using patient-intent and HCP-intent prompts, with outputs captured and compared against approved labeling.

The monitoring protocol needs to cover four categories of content: approved indications (are they accurate?), off-label mentions (what does the AI say the drug treats that it is not approved for?), safety information (is the risk profile accurately represented, including boxed warnings?), and dosing information (are dose, frequency, and administration route correct?).
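The four categories above can be turned into a first-pass automated screen. The Python sketch below is a deliberately simple keyword and pattern check, assuming a hand-maintained set of label facts for a fictional drug, ‘Examplex’. The field names, the off-label watchlist, and the dose pattern are all assumptions made for illustration. A screen like this only surfaces candidates for human review; it cannot judge clinical accuracy.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class LabelFacts:
    approved_indications: tuple   # phrases taken from the approved label
    off_label_watchlist: tuple    # unapproved uses worth flagging if mentioned
    boxed_warning_terms: tuple    # terms a safety summary should include
    approved_doses: frozenset     # normalized strings such as "5 mg"


# Matches simple dose strings such as "20mg" or "0.5 mg".
DOSE_PATTERN = re.compile(r"\b(\d+(?:\.\d+)?)\s*(mg|mcg|ml)\b", re.IGNORECASE)


def extract_doses(text: str) -> set:
    """Normalize every dose-like string in the text to '<amount> <unit>'."""
    return {
        f"{amount} {unit.lower()}"
        for amount, unit in DOSE_PATTERN.findall(text)
    }


def review_response(text: str, label: LabelFacts) -> dict:
    """Screen one AI response against the four monitoring categories."""
    lowered = text.lower()
    return {
        "indications_found": [
            term for term in label.approved_indications if term.lower() in lowered
        ],
        "off_label_mentions": [
            term for term in label.off_label_watchlist if term.lower() in lowered
        ],
        "boxed_warning_terms_missing": [
            term for term in label.boxed_warning_terms if term.lower() not in lowered
        ],
        "doses_not_on_label": sorted(
            extract_doses(text) - set(label.approved_doses)
        ),
    }


if __name__ == "__main__":
    # Fictional label facts for a fictional drug.
    examplex = LabelFacts(
        approved_indications=("type 2 diabetes",),
        off_label_watchlist=("weight loss",),
        boxed_warning_terms=("thyroid",),
        approved_doses=frozenset({"5 mg", "10 mg"}),
    )
    answer = "Examplex treats type 2 diabetes and weight loss. Typical dose is 20mg daily."
    print(review_response(answer, examplex))
```

Keeping the label facts in a structured, versioned form matters more than the matching logic: when the label changes, the screen should change with it.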

DrugChatter provides this infrastructure — automated, cross-platform AI monitoring specifically built for pharmaceutical brand teams and regulatory affairs. The tool captures AI-generated drug mentions, flags discrepancies from approved labeling, and tracks sentiment and context around brand mentions. For compliance teams, the output creates an audit trail: documented evidence of monitoring activity and of steps taken when inaccuracies were identified.
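For teams thinking about what an audit trail might look like in practice, one generic pattern is an append-only log in which each entry carries a hash of the entry before it, so later edits become detectable. The Python sketch below illustrates that pattern only. It is not a description of DrugChatter's implementation, and the entry fields shown are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash used before the first entry


def _entry_hash(prev_hash: str, entry: dict) -> str:
    """Hash the previous hash together with a canonical form of the entry."""
    payload = json.dumps(entry, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, entry: dict) -> list:
    """Return a new log with the entry chained to the previous record."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    record = {
        "entry": entry,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(prev_hash, entry),
    }
    return log + [record]


def verify_chain(log: list) -> bool:
    """Recompute every hash; False if any record was altered or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["entry_hash"] != _entry_hash(prev_hash, record["entry"]):
            return False
        prev_hash = record["entry_hash"]
    return True


if __name__ == "__main__":
    trail = []
    trail = append_entry(trail, {"platform": "PlatformA", "finding": "dose mismatch"})
    trail = append_entry(trail, {"platform": "PlatformA", "action": "escalated to MLR"})
    print("chain intact:", verify_chain(trail))
```

A hash chain shows that records were not changed after the fact; it does not show that monitoring was adequate, which is still a matter of documented process.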

Content Strategy as Regulatory Infrastructure

The second priority is upstream content quality. AI models learn from the web. If the authoritative, accurate sources about your drug are well-indexed and consistently represent label-consistent information, the model’s outputs will trend toward accuracy over time.

That means pharmaceutical medical affairs and communications teams need to think of their published content — clinical data summaries, label-consistent disease awareness materials, peer-reviewed publications — as inputs to AI training, not just as standalone communications. Clean, accurate, label-consistent content published at authoritative sources (medical journals, FDA.gov, the drug’s official prescribing information) shapes downstream AI responses in ways that no amount of monitoring can substitute for.

Companies that discovered errors in AI’s drug information and traced them back to an outdated or inaccurate third-party database entry, a stale review article, or incorrect information on a popular health website found that correcting the source led to correction of AI outputs — eventually. The lag remains. But source correction is the only mechanism that produces durable AI accuracy improvement.

MLR Teams Need New Review Categories

Medical, legal, and regulatory review processes were designed for deterministic content — materials that MLR can read, approve, and file. AI-generated outputs are non-deterministic. The same question produces different answers at different times, on different platforms, for different users.

That does not mean AI outputs are unmanageable, but it does mean that MLR needs new review categories and new workflows. Specifically:

For manufacturer-owned AI tools (patient chatbots, HCP engagement platforms, medical information services), MLR review should apply to the system prompt, the guardrails, and the knowledge base — the deterministic elements that shape non-deterministic outputs. Hallucination testing should be a standard component of any AI tool’s validation process before deployment.

For third-party AI platforms (ChatGPT, Perplexity, Gemini, Grok), MLR does not review outputs — but regulatory affairs and pharmacovigilance teams should monitor them. The monitoring process is the proxy for oversight.
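For manufacturer-owned tools, one narrow form of hallucination testing is to check that every percentage the tool states can be found somewhere in its approved knowledge base. The Python sketch below illustrates that single check under simplifying assumptions: the knowledge base is a list of plain-text passages, `tool` is a hypothetical callable standing in for the deployed chatbot, and matching is purely textual. It will miss paraphrased or non-numeric fabrications, so it complements human review rather than replacing it.

```python
import re
from typing import Callable

# Matches figures such as "42%", "61 %", or "3.5%".
PERCENT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s?%")


def _percentages(text: str) -> set:
    """All percentage figures in the text, with internal whitespace removed."""
    return {match.replace(" ", "") for match in PERCENT_PATTERN.findall(text)}


def unsupported_percentages(output: str, knowledge_base: list) -> list:
    """Percentages in the output that appear in no knowledge-base passage."""
    supported = set()
    for passage in knowledge_base:
        supported |= _percentages(passage)
    return sorted(_percentages(output) - supported)


def run_hallucination_suite(
    tool: Callable[[str], str],
    test_prompts: list,
    knowledge_base: list,
) -> dict:
    """Map each prompt whose answer cites unsupported figures to those figures."""
    failures = {}
    for prompt in test_prompts:
        flagged = unsupported_percentages(tool(prompt), knowledge_base)
        if flagged:
            failures[prompt] = flagged
    return failures


if __name__ == "__main__":
    kb = ["In the pivotal trial, 42% of patients responded."]  # invented passage

    def demo_tool(prompt: str) -> str:
        # Hypothetical chatbot that adds a figure not found in the knowledge base.
        return "About 42% responded, and 61 % achieved remission."

    print(run_hallucination_suite(demo_tool, ["How effective is it?"], kb))
```

Because outputs are non-deterministic, a suite like this is more informative when each prompt is run several times before deployment and again after any change to the system prompt or knowledge base.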

The 2025 Pharmaphorum analysis put the governance challenge clearly: ‘A governed messaging library — the same infrastructure that prevents internal content drift — becomes the baseline against which AI-mediated representations can be monitored and measured. Organisations that build this internal governance capability will be the first to extend it outward, tracking how the broader AI ecosystem represents their products and taking corrective action when representations drift from approved language.’

Adverse Event Reporting Protocols for AI-Mediated Harms

Pharmacovigilance teams should update their adverse event intake procedures to capture information about AI as an information source when patients or providers report adverse events. Current MedWatch reporting does not require this information — but collecting it proactively positions a company to demonstrate due diligence when the regulatory framework eventually catches up with the exposure.

The specific information worth capturing: which AI platform did the patient or provider use? What drug question did they ask? What did the AI tell them? This data, systematically collected, creates the evidence base for both internal safety monitoring and, if needed, regulatory engagement.
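Those three questions map naturally onto optional fields in an intake record. The Python sketch below shows one possible shape for such a record. The class and field names are hypothetical and are not drawn from MedWatch or any existing safety database schema; the case shown is invented.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class AiSourceDetails:
    platform: Optional[str] = None             # which AI platform was used
    question_asked: Optional[str] = None       # what the reporter asked
    ai_response_summary: Optional[str] = None  # what the AI said, as reported


@dataclass
class AdverseEventIntake:
    case_id: str
    product: str
    event_description: str
    ai_source: Optional[AiSourceDetails] = None  # None when no AI was involved

    def missing_ai_fields(self) -> list:
        """AI-source fields still blank, for follow-up with the reporter."""
        if self.ai_source is None:
            return []
        return [
            name for name, value in asdict(self.ai_source).items() if value is None
        ]


if __name__ == "__main__":
    intake = AdverseEventIntake(
        case_id="CASE-0001",
        product="Examplex",
        event_description="Dizziness after doubling dose.",
        ai_source=AiSourceDetails(platform="PlatformA"),
    )
    print("follow up on:", intake.missing_ai_fields())
```

Keeping the AI-source block optional means existing intake workflows are unaffected when no AI tool was involved, while cases that mention one are captured consistently.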

Part Eight: The Brand Share Dimension

AI Share of Voice Is Already a Real Metric

Beyond compliance, AI-generated drug content creates a new competitive dimension: AI share of voice. When a patient asks ChatGPT ‘What medication is used for type 2 diabetes?’, or a physician asks Perplexity ‘What are the options for second-line treatment in non-small cell lung cancer?’, the drugs mentioned, the order in which they appear, and the framing of each option directly affect prescribing and patient behavior — without any advertising spend.

The top 10 pharmaceutical companies by revenue account for approximately 90% of AI brand mentions in treatment-related queries. Mid-size biotechs with approved drugs treating millions of patients are essentially absent. That concentration has market share implications that are independent of the quality of the drugs themselves, and largely disconnected from traditional DTC or HCP promotional investment.

A company with strong clinical data and a mid-sized commercial footprint may receive negligible AI representation not because its drug is inferior but because AI training data is dominated by large-brand web presence. The correction for this is content quality and distribution, not advertising spend — and it requires monitoring to even identify the gap.
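AI share of voice can be approximated from captured responses with a simple count. The Python sketch below assumes one reasonable definition, which is not an industry standard: a brand's share is the number of responses mentioning it divided by the total number of brand mentions across all tracked brands. The brand names and responses are invented.

```python
import re
from collections import Counter


def share_of_voice(responses: list, brands: list) -> dict:
    """Fraction of all tracked-brand mentions that belong to each brand.

    A brand is counted at most once per response, matched as a whole
    word and ignoring case.
    """
    counts = Counter()
    for text in responses:
        for brand in brands:
            if re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE):
                counts[brand] += 1
    total = sum(counts.values())
    return {
        brand: (counts[brand] / total if total else 0.0) for brand in brands
    }


if __name__ == "__main__":
    captured = [
        "BrandA and BrandB are common options.",
        "BrandA is often used first.",
        "Lifestyle changes are recommended.",
    ]
    for brand, share in share_of_voice(captured, ["BrandA", "BrandB", "BrandC"]).items():
        print(f"{brand}: {share:.0%}")
```

A count like this ignores order of mention and framing, both of which the surrounding discussion treats as important, so it is a starting metric rather than a complete one.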

The Sentiment Layer

AI does not just mention drugs — it frames them. The same monitoring infrastructure that captures accuracy errors captures tone, context, and competitive positioning. DrugChatter’s monitoring approach tracks not just what AI says about a drug but how it characterizes the drug relative to therapeutic alternatives: is it described as a preferred option, a second-line treatment, appropriate for specific patient subgroups, or associated primarily with its side effects?

That framing information is commercially valuable for brand teams and strategically relevant for market access teams. If AI consistently characterizes a drug as expensive relative to alternatives — even if that characterization is based on outdated pricing data — it shapes payer and patient conversations in ways that slow market access. Identifying the error and correcting the upstream source is a commercial intervention with measurable impact.

Part Nine: The Regulatory Future

Where FDA Is Going

OPDP’s commitment to continued AI surveillance, combined with its stated plan to scale enforcement to hundreds of letters annually, sets a trajectory that pharmaceutical companies should treat as a permanent feature of the promotional environment, not a temporary political posture.

The January 2025 draft guidance on AI in drug development addressed AI in regulatory submissions — a separate domain from promotional AI, but part of the same institutional process of building AI regulatory frameworks. The FDA’s External Policy Council and Internal Use Council, established in 2025 to coordinate AI policy across the agency, will eventually produce guidance on AI in promotion. When that guidance arrives, companies with established monitoring and governance practices will have a structural compliance advantage over those building from zero.

The EU AI Act, which entered into force in August 2024 with its obligations, including the high-risk AI provisions, phasing in by stages from 2025 onward, adds a parallel regulatory dimension for companies operating in European markets. The EU’s approach, more prescriptive and explicit in its risk categorization than FDA’s current posture, may preview where FDA eventually goes.

The Liability Question Will Get Tested

The legal theory under which a pharmaceutical manufacturer faces liability for AI-generated content about its drugs has not been tested in litigation. That will change. The combination of high AI hallucination rates in pharmaceutical content, growing patient reliance on AI for drug information (27% of U.S. consumers, per Rock Health, as of mid-2024), and an increasingly adversarial plaintiff bar creates the conditions for a test case.

The most likely fact pattern: a patient with a serious adverse event can demonstrate they used an AI tool — potentially one deployed by or associated with the manufacturer — that provided inaccurate dosing or safety information, and that inaccurate information influenced their behavior. Whether that constitutes manufacturer liability under the FD&C Act’s misbranding provisions, products liability theory, or negligence will be for courts to determine.

Companies that have built monitoring and governance infrastructure before that case is filed will be in a materially better position than those that have not.

Conclusion

The pharmaceutical industry has spent decades building compliance infrastructure for a promotional landscape defined by broadcast advertising, print detailing, HCP speaker programs, and social media. AI has created a fifth channel that operates entirely outside that infrastructure: non-deterministic, non-audited, potentially inaccurate, and already reaching more patients than most DTC campaigns.

FDA’s September 2025 enforcement wave confirmed that the agency is using AI to watch what the industry says about its drugs. It is only a matter of time before that surveillance extends to what AI says about drugs — whether generated by the manufacturer’s own tools or by general-purpose models trained on the broader web.

The companies that respond to this environment by building systematic AI monitoring — tracking brand mentions, auditing for inaccuracies, managing upstream content quality, and updating MLR and pharmacovigilance workflows — will be positioned for the regulatory regime that is forming. The companies that wait for final guidance will be responding to enforcement letters instead of shaping what compliance looks like.

DrugChatter exists precisely in this window: the gap between the AI-generated promotional reality that already exists and the regulatory framework that will eventually govern it. The monitoring capability is available now. The regulatory requirement is coming.

Key Takeaways

- FDA used AI surveillance tools to identify violations in its September 2025 enforcement wave — the agency is now using the same technology as the industry, aimed in the opposite direction.

- AI chatbots hallucinate drug information at meaningful rates: responses to approximately 47% of drug-interaction queries contained inaccurate or incomplete information (JAMA Internal Medicine, 2023), and medical content consistently sits at the higher end of documented hallucination ranges.

- AI-generated drug content almost never includes the fair balance, major statement of risks, or label-consistent claims required for promotional materials under FDA regulations — but it reaches patients and providers at a scale comparable to DTC campaigns.

- Manufacturer-owned AI tools that generate content about a sponsor’s drugs are treated as prescription drug labeling under FDA’s 2023 draft PDURS guidance, so MLR review applies to the system prompt, guardrails, and knowledge base.

- AI share of voice is already a real competitive metric. The top 10 pharmaceutical companies by revenue account for approximately 90% of AI brand mentions in treatment-related queries, creating a visibility gap for mid-size and specialty pharma that is not correctable through traditional advertising spend.

- The adverse event reporting framework does not yet account for AI-intermediated drug information. Companies should capture AI-source information in adverse event intake processes now, before the regulatory requirement arrives.

- Systematic AI brand monitoring — structured querying, output comparison against approved labeling, and upstream content management — is available now through tools like DrugChatter and represents the minimum viable compliance posture for this environment.
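As one illustration of the adverse-event takeaway above, the sketch below shows how an intake record might carry AI-source fields. It is a minimal sketch under stated assumptions: the class name, every field name, and the follow-up rule are hypothetical, and nothing here reflects a current FDA reporting requirement or any vendor's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AdverseEventIntake:
    """Hypothetical intake record; all field names are illustrative assumptions."""
    report_date: date
    product: str
    event_description: str
    # Assumed additions for AI-intermediated drug information.
    ai_source_involved: bool = False
    ai_tool_name: Optional[str] = None
    ai_advice_summary: Optional[str] = None
    sponsor_operated_tool: Optional[bool] = None

    def needs_ai_followup(self) -> bool:
        """True when AI involvement is recorded but the tool or advice is not."""
        has_details = bool(self.ai_tool_name and self.ai_advice_summary)
        return self.ai_source_involved and not has_details


# Made-up example report; "Examplia" is not a real product.
report = AdverseEventIntake(
    report_date=date(2025, 1, 15),
    product="Examplia",
    event_description="Dizziness after a self-directed dose change",
    ai_source_involved=True,
)
followup_before = report.needs_ai_followup()

# Intake staff later record which tool was used and what it said.
report.ai_tool_name = "general-purpose chatbot"
report.ai_advice_summary = "Suggested doubling the dose"
followup_after = report.needs_ai_followup()
```

Keeping the AI fields optional means existing intake workflows keep working, while the follow-up flag surfaces reports where a patient mentioned an AI tool but the details were never captured.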

FAQ

Q1: If a general-purpose AI chatbot makes an off-label claim about my drug, does my company have regulatory exposure?

Not automatically, and not under current FDA enforcement practice. The FD&C Act’s misbranding provisions apply to the drug’s sponsor and to materials disseminated ‘in connection with’ the drug’s distribution. A general-purpose model has no relationship with the sponsor. However, two scenarios do create exposure. First, if the AI’s inaccurate content traces back to off-label claims in the sponsor’s own published materials (white papers, KOL presentations, press releases), the upstream content may be the violation. Second, if the sponsor operates an AI tool, such as a patient chatbot, an HCP engagement platform, or a medical information service, any hallucinated off-label claim that tool generates triggers strict misbranding liability under the FD&C Act.

Q2: FDA issued enforcement letters using AI surveillance in September 2025. What should pharma companies do differently as a result?

Three things immediately. First, audit all DTC materials currently in market for compliance with the specific issues cited in the September 2025 letters: distracting visual presentations during the major statement, omitted or minimized risk information, and unsubstantiated quality-of-life claims. Second, submit any promotional materials that have not been reviewed recently for OPDP advisory comment, and take prior OPDP comments seriously: the letters repeatedly cited violations on materials where the agency had already provided advisory feedback. Third, assume that any publicly accessible promotional material (television ads, paid search, influencer content, branded websites) is now subject to AI-assisted review by OPDP. Design accordingly.

Q3: How does AI share of voice differ from traditional brand share of voice, and why does it matter commercially?

Traditional share of voice measures the proportion of promotional spend or media impressions attributable to a brand within a therapeutic category. AI share of voice measures the proportion of AI-generated treatment responses that mention or recommend a brand. The mechanisms that determine each metric are entirely different. Traditional share of voice is driven by advertising spend and media placement. AI share of voice is driven by the quality, volume, and authority of content in the AI’s training data — which correlates with large-brand web presence and established medical literature, not with current promotional investment. A company that increases its DTC budget will not predictably increase its AI share of voice. A company that improves its content quality, expands its peer-reviewed publication record, and ensures accurate third-party coverage of its drugs may.
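The AI share of voice definition above reduces to simple arithmetic: responses that mention a brand, divided by all responses collected for a query set. The sketch below is a minimal illustration under stated assumptions. The function name, the brand names, and the sample responses are made up, and it counts mentions only; telling a mention from a recommendation would need further classification.

```python
import re
from collections import Counter


def ai_share_of_voice(responses, brands):
    """Return, per brand, the fraction of responses that mention it at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match on the brand name.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Made-up responses and brand names, for illustration only.
sample_responses = [
    "Common options include Alphazol and Betamyx.",
    "Many clinicians start with Alphazol.",
    "Lifestyle changes are usually tried first.",
    "Betamyx or alphazol may be considered.",
]
shares = ai_share_of_voice(sample_responses, ["Alphazol", "Betamyx", "Gammacor"])
print(shares)  # {'Alphazol': 0.75, 'Betamyx': 0.5, 'Gammacor': 0.0}
```

Because a single response can name several brands, the shares do not need to sum to 1. Tracking the same query set over time is what makes the number comparable from one period to the next.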

Q4: What does a compliant AI-powered patient engagement tool look like under FDA’s PDURS framework?

Under FDA’s 2023 draft guidance on Prescription Drug Use-Related Software, any software disseminated by or on behalf of a drug sponsor that generates end-user output related to the sponsor’s prescription drug is regulated as prescription drug labeling. A compliant AI patient engagement tool would need to: (a) limit its outputs to information consistent with approved labeling, (b) include appropriate risk information whenever benefit information is presented, (c) avoid generating content about unapproved indications, and (d) undergo MLR review of its prompting architecture, guardrails, and knowledge base before deployment. Regular hallucination testing — specifically testing whether the tool produces off-label claims or omits boxed warning information under adversarial prompting — should be part of both pre-deployment validation and ongoing monitoring.
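The hallucination testing described above can be pictured as an automated first pass that runs before human MLR review. The sketch below is a rough illustration, not a validated method: the `LabelProfile` structure, the keyword lists, and the drug name "Examplia" are all hypothetical, and simple keyword matching will miss paraphrased claims that a real test suite would need to catch.

```python
from dataclasses import dataclass


@dataclass
class LabelProfile:
    """Hypothetical, simplified stand-in for facts drawn from approved labeling."""
    unapproved_terms: set      # phrases that would signal an off-label claim
    benefit_terms: set         # phrases that signal a benefit claim
    required_risk_terms: set   # risk language that must accompany any benefit


def review_output(text, label):
    """Return findings for one tool response; an empty list means no flags raised."""
    lowered = text.lower()
    findings = []
    off_label = sorted(t for t in label.unapproved_terms if t in lowered)
    if off_label:
        findings.append("possible off-label claim: " + ", ".join(off_label))
    mentions_benefit = any(t in lowered for t in label.benefit_terms)
    missing_risk = sorted(t for t in label.required_risk_terms if t not in lowered)
    if mentions_benefit and missing_risk:
        findings.append("benefit without risk information: missing " + ", ".join(missing_risk))
    return findings


# Made-up label facts and tool responses, for illustration only.
label = LabelProfile(
    unapproved_terms={"weight loss"},
    benefit_terms={"lowers blood sugar"},
    required_risk_terms={"thyroid tumors"},
)
balanced = ("Examplia lowers blood sugar in adults with type 2 diabetes. "
            "It may cause thyroid tumors, including cancer.")
unbalanced = "Examplia lowers blood sugar and is great for weight loss."

balanced_findings = review_output(balanced, label)
unbalanced_findings = review_output(unbalanced, label)
```

In practice, a check like this would run over responses to a library of adversarial prompts, with any non-empty findings list routed to a human reviewer rather than treated as a final determination.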

Q5: Deloitte projects AI health assistants will handle 35% of initial patient treatment queries by 2028. What regulatory framework should pharma expect by then?

FDA’s current trajectory suggests two parallel developments. The first is specific guidance on AI in pharmaceutical promotion, addressing what compliant AI advertising looks like, what disclosure requirements apply to AI-generated drug information, and how the PDURS framework applies to patient-facing generative AI tools. The second is an expanded adverse event reporting framework that captures AI as an information source when patients report events following AI-mediated drug guidance. The EU AI Act’s enforcement of high-risk AI provisions, phasing in through 2025-2026, may preview FDA’s direction: more explicit risk categorization, lifecycle governance requirements, and mandatory human-in-the-loop provisions for high-consequence AI outputs. Companies that build AI governance infrastructure now (monitoring, MLR workflow adaptation, pharmacovigilance updates) will be working from a defensible compliance posture when those frameworks arrive. Companies that wait will be retrofitting.

DrugChatter tracks AI-generated drug mentions across major AI platforms, providing pharmaceutical brand teams and regulatory affairs with visibility into what AI says about their drugs — before a regulator does. Learn more at DrugChatter.com.