Somewhere right now, a patient is asking an AI chatbot whether their oncology drug causes liver failure. The chatbot is answering confidently. The answer is wrong.

That exchange is not theoretical. It is happening across ChatGPT, Gemini, Perplexity, Claude, and a dozen specialized medical AI tools — millions of times per week — and most pharmaceutical companies have no system to detect it, no protocol to document it, and no defensible record to show regulators when the fallout arrives.

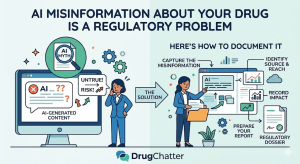

This article is a practical guide for medical affairs, regulatory affairs, and pharmacovigilance teams who need to treat AI-generated drug misinformation as what it actually is: a documented safety signal that requires the same rigor as an adverse event report.

Why AI Misinformation Is Now a Regulatory Exposure

The FDA’s framework for drug safety monitoring was built around human communication channels — prescribers, patients, caregivers, published literature, spontaneous reports. AI chatbots did not exist in that framework’s original architecture. They do now, and regulators in the United States, European Union, and United Kingdom are scrambling to catch up.

The European Medicines Agency published guidance in 2024 acknowledging that AI-generated content about medicines could distort patient risk perception and interfere with benefit-risk communication. The FDA’s own internal working groups have flagged AI hallucination as a patient safety concern in multiple internal documents released under FOIA requests. The U.K.’s MHRA has included AI misinformation monitoring in draft revisions to its pharmacovigilance inspection standards.

None of this has crystallized into enforceable requirements yet. That is exactly why companies that build documentation infrastructure now will have a structural advantage when requirements do crystallize — and why companies that wait will be scrambling to reconstruct a record they never kept.

The regulatory logic is straightforward. If a company becomes aware of systematic misinformation about its drug circulating in a high-reach channel, and that company takes no action to document or mitigate it, regulators will ask what the company knew and when. ‘We didn’t have a system’ is not a defensible answer in a world where systematic monitoring tools exist.

The Three Categories of AI Drug Misinformation

Not all AI misinformation carries equal regulatory weight. Documentation systems that treat every inaccuracy as equivalent will generate unmanageable noise. The category taxonomy that medical affairs teams actually need distinguishes between three types.

Category 1: Safety-relevant misinformation. This covers incorrect statements about contraindications, drug interactions, dosing limits, Black Box Warnings, REMS requirements, or adverse event profiles. A chatbot stating that a drug has no significant drug-drug interactions when the label lists a serious interaction with warfarin is Category 1. A chatbot understating the frequency of a known serious adverse event is Category 1. These require the most rigorous documentation and the fastest internal escalation.

Category 2: Efficacy-relevant misinformation. This covers overstated or understated efficacy claims that diverge materially from label language and supporting clinical data. A chatbot claiming a drug achieves complete remission in 80% of patients when clinical trial data shows 40% is Category 2. So is the inverse — a chatbot systematically underrepresenting efficacy in a way that could drive patients away from a therapy that would benefit them.

Category 3: Competitive-context misinformation. This covers inaccurate comparative claims where an AI places your drug at a disadvantage — or advantage — relative to competitors based on fabricated or distorted data. This category intersects with fair balance obligations and could attract FTC or FDA scrutiny depending on the mechanism by which misinformation spreads.

The documentation protocol for each category differs in urgency, escalation path, and regulatory notification threshold. The taxonomy has to be established before you start collecting — otherwise the record you build is internally inconsistent and harder to defend.

Building a Repeatable Monitoring Protocol

Documenting AI misinformation is not a one-time audit. It is a continuous surveillance operation with defined queries, defined platforms, defined cadence, and defined output formats. The structure borrows from established pharmacovigilance methodology, which is intentional — it makes the documentation immediately legible to regulatory reviewers who already understand that framework.

Defining Your Query Set

The first operational decision is which queries you will monitor. The query set has to be comprehensive enough to surface real misinformation but narrow enough to be systematically executable.

A defensible query set for a single drug includes four types of queries run across each monitored platform.

The first type is direct drug name queries: the brand name, the INN, common misspellings, and any patient-facing nicknames documented in social listening data. Patients asking about ‘ozempic,’ ‘semaglutide,’ or ‘the diabetes pen’ are all asking about the same drug. Your query set has to capture all three.

The second type is indication queries that pair the drug with its approved indications and common off-label uses: ‘drug name for weight loss,’ ‘drug name for PCOS,’ ‘drug name for heart failure.’ Indication-specific queries surface a different category of misinformation than bare drug name queries — particularly around off-label use, where AI systems often have less reliable training data.

The third type is safety-specific queries: ‘drug name side effects,’ ‘drug name interactions,’ ‘drug name and alcohol,’ ‘drug name liver damage,’ ‘is drug name safe during pregnancy.’ These queries target the exact territory where misinformation causes the most direct patient harm and creates the most direct regulatory exposure.

The fourth type is comparative queries: ‘drug name vs. competitor,’ ‘which is better drug A or drug B,’ ‘should I switch from drug name to alternative.’ Comparative queries often produce the most extreme inaccuracies because AI systems synthesize competitive claims from heterogeneous sources with no mechanism to weight clinical evidence appropriately.
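The four query types above can be expanded mechanically from a small set of templates. The following is a minimal Python sketch, not a prescribed implementation: the template lists, the function name `build_query_set`, and the example inputs are all illustrative assumptions, and a real query set would be reviewed by medical affairs before use.

```python
# Hypothetical templates for two of the query types; the wording mirrors
# the examples in the text but is an assumption, not a validated list.
SAFETY_TEMPLATES = [
    "{name} side effects",
    "{name} interactions",
    "{name} and alcohol",
    "{name} liver damage",
    "is {name} safe during pregnancy",
]
COMPARATIVE_TEMPLATES = [
    "{name} vs. {competitor}",
    "should I switch from {name} to {competitor}",
]


def build_query_set(names, indications, competitors):
    """Return a sorted, de-duplicated list of (query_type, query_text) pairs."""
    queries = set()
    for name in names:
        # Type 1: direct drug name queries (brand, INN, nicknames).
        queries.add(("direct", name))
        # Type 2: indication queries, approved and off-label.
        for indication in indications:
            queries.add(("indication", f"{name} for {indication}"))
        # Type 3: safety-specific queries.
        for template in SAFETY_TEMPLATES:
            queries.add(("safety", template.format(name=name)))
        # Type 4: comparative queries against each named competitor.
        for competitor in competitors:
            for template in COMPARATIVE_TEMPLATES:
                queries.add(
                    ("comparative", template.format(name=name, competitor=competitor))
                )
    return sorted(queries)


query_set = build_query_set(
    names=["ozempic", "semaglutide"],
    indications=["weight loss"],
    competitors=["drug B"],
)
```

Keeping the output sorted and de-duplicated means the same inputs always produce the same query list, which makes month-over-month comparisons easier to defend.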

Platform Coverage

The platform landscape for AI drug misinformation is not static. In early 2026, the platforms generating the highest volume of medically consequential queries are ChatGPT (OpenAI), Gemini (Google), Perplexity AI, Claude (Anthropic), Microsoft Copilot, and a growing tier of specialized medical AI tools including tools marketed directly to patients and tools embedded in pharmacy and health system portals.

Each platform behaves differently. Perplexity cites sources, which means misinformation is often traceable to an upstream inaccurate article or database. ChatGPT and Gemini synthesize without consistent citation, making it harder to identify the source but easier to document the hallucination itself. Medical AI tools embedded in clinical workflows present a distinct risk because they carry implicit clinician endorsement — a patient told by their pharmacy portal’s AI chatbot that a drug has no significant interactions is more likely to act on that information than a patient who gets the same statement from a general consumer AI.

Documentation protocols need to capture platform, query text, exact AI response text, timestamp, and any citations the AI provides. Screenshots are necessary but not sufficient — they are not text-searchable, are format-dependent, and are difficult to synthesize across large query volumes. Every monitoring session should generate both a screenshot archive and a structured text record.

Cadence and Trigger-Based Monitoring

Baseline cadence for most drugs should be monthly. That frequency captures model update cycles: most major AI platforms update or fine-tune their underlying models on roughly monthly schedules, and new versions often introduce new inaccuracies as well as correct old ones.

Certain events should trigger immediate out-of-cycle monitoring. Label changes and REMS updates are the most obvious — a newly added warning that hasn’t propagated into AI training data creates a defined window of elevated risk. Safety communications including Dear Healthcare Provider letters and MedWatch alerts create the same window. Product launches and new indication approvals trigger a period of elevated misinformation risk because AI systems may conflate the new indication with off-label uses or confuse the new drug with existing options in the class.

Competitive launches also warrant triggered monitoring. When a new entrant enters a class, AI systems begin fielding comparative queries at high volume and are most likely to generate inaccurate comparative claims in the immediate post-launch period before their training data stabilizes.

<blockquote> “Seventy-three percent of AI chatbot responses to patient-style drug safety queries contained at least one factual inaccuracy when tested against current prescribing information, according to a 2024 analysis of seven major consumer AI platforms conducted by researchers at the University of California San Francisco.” <br><br> — UCSF Department of Clinical Pharmacy, <em>Journal of the American Medical Informatics Association</em>, 2024 </blockquote>
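The cadence and trigger rules in this section reduce to a simple check that can run daily. The sketch below is one possible encoding in Python. The event names, the 30-day approximation of "monthly," and the function name `monitoring_due` are assumptions made for illustration.

```python
from datetime import date, timedelta

# Assumption: "monthly" is approximated as a 30-day interval.
BASELINE_INTERVAL = timedelta(days=30)

# Hypothetical event labels for the out-of-cycle triggers named in the text.
TRIGGER_EVENTS = {
    "label_change",
    "rems_update",
    "safety_communication",
    "product_launch",
    "new_indication",
    "competitive_launch",
}


def monitoring_due(last_run, today, events_since_last_run):
    """Return (due, reason) for a single drug's monitoring cycle."""
    triggers = sorted(set(events_since_last_run) & TRIGGER_EVENTS)
    if triggers:
        # Any recognized trigger event forces an immediate out-of-cycle run.
        return True, "triggered: " + ", ".join(triggers)
    if today - last_run >= BASELINE_INTERVAL:
        return True, "baseline cadence"
    return False, "not due"
```

Returning the reason alongside the decision lets each monitoring run record why it was started, which supports the documentation record described later.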

The Documentation Record: What Regulators Actually Need to See

A monitoring program that produces screenshots stored in a shared drive is not a documentation program. It is an archive with no analytical value and no regulatory defensibility. The documentation record that actually protects a company in a regulatory inspection has specific structural requirements.

The Minimum Required Data Fields

Each documented AI misinformation instance needs a minimum set of structured data fields to be usable. These are not aspirational — they are the minimum for the record to be defensible.

Instance ID. A unique identifier that allows the record to be referenced across internal communications, escalation logs, and any external regulatory submissions without ambiguity.

Date and time. UTC timestamp to the minute. Platform-reported timestamps are sufficient if captured at time of query. Post-hoc reconstruction from screenshots is acceptable only if the screenshot includes visible metadata.

Platform and model version. ‘ChatGPT’ is insufficient. ‘ChatGPT, GPT-4o, accessed via chat.openai.com’ is the minimum. Where model version is not user-visible, document the interface version and access date, which allows version inference from public model release records.

Query text. The exact text entered, including any preceding context in multi-turn conversations. Multi-turn context matters because AI responses are often context-dependent — the same query produces different responses depending on preceding turns, and a documentation record that captures only the final query misrepresents what triggered the response.

Full AI response text. The complete response, not a summary. Regulatory reviewers need to assess the response in full. Summaries introduce interpretation at the documentation stage, which undermines the objectivity of the record.

Inaccuracy classification. Category 1, 2, or 3 per your established taxonomy, with a brief factual basis: what the AI stated, what the current label or clinical data actually states, and the specific label section or clinical reference that contradicts the AI claim.

Reach estimate. An estimate of how many patients or healthcare providers are likely to have encountered similar responses. This is necessarily imprecise, but it matters for regulatory prioritization. A misinformation instance on a platform processing 10 million daily health queries is categorically different from one on a platform with 50,000 monthly users, even if the underlying inaccuracy is identical.

Internal escalation record. Who was notified, when, and what action was taken. Where the decision was to take no action, record that decision and the reason for it.
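The fields above map naturally onto a structured record. The following Python sketch shows one way to represent them. The class name, field names, and validation rules are illustrative assumptions, not a regulatory schema.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now_to_minute():
    # UTC timestamp to the minute, as the field description requires.
    return datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%MZ")


@dataclass(frozen=True)
class MisinformationInstance:
    """One documented instance; all field names are hypothetical."""

    platform: str            # e.g. product name plus access URL
    model_version: str       # model or interface version as displayed
    query_text: str          # exact text entered
    preceding_turns: tuple   # earlier turns in a multi-turn conversation
    response_text: str       # complete response, never a summary
    category: int            # 1, 2, or 3 per the taxonomy
    ai_claim: str            # what the AI stated
    label_statement: str     # what the label or clinical data states
    label_reference: str     # label section or clinical reference
    reach_estimate: str      # free-text estimate with stated basis
    escalation_notes: str = ""
    citations: tuple = ()
    instance_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    captured_at_utc: str = field(default_factory=_utc_now_to_minute)

    def __post_init__(self):
        if self.category not in (1, 2, 3):
            raise ValueError("category must be 1, 2, or 3")
        if not self.response_text.strip():
            raise ValueError("full response text is required")

    def to_json(self):
        # Sorted keys keep the serialized form stable across runs.
        return json.dumps(asdict(self), sort_keys=True)
```

Making the record immutable (`frozen=True`) is a design choice in this sketch: corrections would be written as new records rather than as edits to an existing one.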

Connecting AI Misinformation to Pharmacovigilance

This is the integration that most pharma companies have not yet made, and it is the one that will matter most when regulators formalize their expectations.

Pharmacovigilance systems are designed to detect safety signals — patterns in adverse event data that suggest a drug is causing harm at a rate or in a manner not captured by the label. AI misinformation is a distinct kind of signal: it is evidence that patients may be making medication decisions based on incorrect safety or efficacy information, which creates a predictable pathway to harm.

The connection to PV is not theoretical. If a major AI platform is systematically telling patients that a drug with a narrow therapeutic index has a wide safety margin — and if that misinformation reaches millions of patients — the expected result is an increase in certain adverse events among patients who adjusted their dosing based on AI advice. If your PV system is not linked to your AI misinformation documentation, you will not be able to identify that signal even when the adverse event data starts moving.

The practical integration requires two things. First, AI misinformation monitoring has to flag Category 1 instances to PV in real time — not on a monthly reporting cycle, but as individual flagged records that PV can assess for signal potential. Second, PV has to include ‘AI chatbot’ as a documented information source in its adverse event intake questionnaires. Patients who report adverse events and mention that they were following AI-generated dosing or safety advice are providing signal-relevant information that current intake forms are not capturing.

DrugChatter, a pharmaceutical AI monitoring platform, has built this PV integration into its core workflow — each Category 1 misinformation instance generates an automatic PV flag with the documented response text and reach estimate attached, allowing PV teams to assess signal potential without requiring a separate data transfer step.

Escalation Protocols: From Detection to Action

Documentation without an escalation protocol is a liability, not an asset. A documented record showing that a company knew about systematic Category 1 misinformation for six months and took no action is worse, from a regulatory standpoint, than no record at all.

The Internal Escalation Decision Tree

Escalation decisions have to be pre-defined in written SOPs. Post-hoc escalation decisions — made after the fact, inconsistently, by whoever happens to be reviewing the monitoring output — will not hold up under regulatory scrutiny.

The decision tree for Category 1 misinformation (safety-relevant) should work as follows.

On detection, the monitoring team documents the instance per protocol and assesses severity. Severity is a function of two variables: the magnitude of the inaccuracy (is the AI stating something that directly contradicts a Black Box Warning, or is it understating a common low-grade side effect?) and the estimated reach of the platform.

High severity — Black Box Warning contradiction or REMS misrepresentation on a high-reach platform — triggers a 24-hour internal escalation to Medical Affairs leadership, Regulatory Affairs, PV, and Legal. This group convenes a rapid assessment meeting and makes three decisions: whether to attempt platform notification, whether to escalate to the FDA’s MedWatch system as a potential safety communication issue, and whether to accelerate the next label review cycle to address any ambiguities the AI may be amplifying.

Moderate severity triggers a five-business-day escalation to the same group for a less urgent assessment. The output is still a documented decision, not just a discussion — someone has to write down what was decided and why.

Low severity (minor efficacy inaccuracies on low-reach platforms) is documented but managed within the routine monthly review cycle. The documentation record captures the instance, the classification, and the decision to manage it within the routine cycle. That decision is itself regulatorily defensible when it is made explicitly rather than left as a failure to escalate.
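The decision tree above can be written down as code, which is one way to keep escalation decisions consistent with the SOP. This Python sketch rests on stated assumptions: the text does not define the moderate tier precisely, so the sketch treats any mixed case (high magnitude on a low-reach platform, or the reverse) as moderate, and it counts business days as Monday through Friday with no holiday calendar.

```python
from datetime import datetime, timedelta


def assess_severity(contradicts_boxed_warning_or_rems, high_reach_platform):
    """Map the two severity variables to a tier (mapping is an assumption)."""
    if contradicts_boxed_warning_or_rems and high_reach_platform:
        return "high"
    if not contradicts_boxed_warning_or_rems and not high_reach_platform:
        return "low"
    return "moderate"


def add_business_days(start, days):
    """Advance by whole business days, skipping Saturdays and Sundays."""
    current = start
    while days > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            days -= 1
    return current


def escalation_deadline(severity, detected_at):
    """Return the escalation deadline, or None for routine monthly review."""
    if severity == "high":
        return detected_at + timedelta(hours=24)
    if severity == "moderate":
        return add_business_days(detected_at, 5)
    return None


# Detected on Friday 2 January 2026 at 09:00.
detected = datetime(2026, 1, 2, 9, 0)
deadline = escalation_deadline(assess_severity(True, False), detected)
```

A `None` deadline still represents an explicit decision, so the calling code should log it alongside the instance rather than treat it as missing data.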

Platform Notification: What Works and What Doesn’t

The instinct of most pharma regulatory teams when they discover AI misinformation is to contact the AI platform and request a correction. This is rarely effective and often counterproductive as a primary strategy.

The major AI platforms do not have formal pharmaceutical liaison processes equivalent to what pharmaceutical companies have with medical publishers. ChatGPT’s content team does not have a clinical pharmacology review process. Gemini’s trust and safety team is not equipped to adjudicate whether a statement about drug-drug interactions contradicts current prescribing information. Perplexity’s editorial team is two people.

This does not mean platform contact is useless. It means it should be documented as an action taken, not relied upon as a primary mitigation strategy. Send the notification, document when it was sent, document the response or non-response, and do not assume the inaccuracy has been corrected until you have verified correction through repeat monitoring.

The more effective mitigation channels are authoritative content publication and search engine optimization of accurate information. If the AI platform is pulling misinformation from an inaccurate secondary source — a forum post, an old clinical review article, a non-current drug database — the more durable fix is to ensure that authoritative, accurate, citable content about your drug outranks and displaces those sources in the training data pipelines that AI platforms use.

This is not a fast fix. Training data pipelines operate on multi-month cycles. But it is a more structurally reliable approach than relying on individual platform correction requests.

Building the Regulatory Defense File

The documentation record you build during routine monitoring is the raw material for regulatory defense. The defense file — the organized, synthesized record that you would actually present to an FDA inspector or EMA assessor — requires an additional layer of work.

The Regulatory Defense File Structure

A well-organized regulatory defense file has five sections.

Section 1: Monitoring Program Description. This is the SOP-level document describing your monitoring methodology — platforms covered, query set, cadence, data fields, escalation protocol, and responsible personnel. It establishes that your monitoring is systematic, not ad hoc, and that it was designed before problems were discovered rather than assembled retroactively.

Section 2: Chronological Instance Log. Every documented instance, in chronological order, with all required data fields populated. This is the raw record. It should be machine-readable — a database or structured spreadsheet, not a folder of PDFs — so that regulators can query it.

Section 3: Trend Analysis. A quarterly synthesis of the instance log identifying patterns: which inaccuracy types are most common, whether specific platforms are consistently generating specific categories of misinformation, whether inaccuracy rates are changing over time, and whether any patterns correlate with external events like label changes or model updates.
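A quarterly trend synthesis of this kind starts with a simple count over the instance log. The Python sketch below assumes each log entry is a dictionary with hypothetical keys `date` (ISO format), `platform`, and `category`; a real instance log may use different names.

```python
from collections import Counter


def quarter_of(iso_date):
    """Convert 'YYYY-MM-DD' to a label such as '2026-Q1'."""
    year, month = int(iso_date[:4]), int(iso_date[5:7])
    return f"{year}-Q{(month - 1) // 3 + 1}"


def trend_summary(instances):
    """Count instances per (quarter, platform, category)."""
    counts = Counter(
        (quarter_of(item["date"]), item["platform"], item["category"])
        for item in instances
    )
    return dict(counts)


log = [
    {"date": "2026-01-15", "platform": "Platform A", "category": 1},
    {"date": "2026-02-20", "platform": "Platform A", "category": 1},
    {"date": "2026-04-03", "platform": "Platform B", "category": 2},
]
summary = trend_summary(log)
```

Counts like these answer the first three trend questions. Correlating them with label changes or model updates requires joining against a separate event calendar, which this sketch does not attempt.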

Section 4: Escalation and Action Record. Every escalation decision documented per your protocol, including the decision rationale and outcome. This section demonstrates that your documentation program is connected to action, not just observation.

Section 5: Mitigation Effectiveness Record. Documentation of any corrective actions taken — platform notifications, authoritative content publication, label review decisions — with follow-up monitoring data showing whether the misinformation pattern changed after intervention. This section closes the loop: it shows regulators that your system is not just a detection mechanism but a closed-loop safety management process.

Retention and Chain of Custody

Electronic records related to safety monitoring fall under 21 CFR Part 11, which sets the criteria under which electronic records are considered trustworthy, reliable, and generally equivalent to paper records. Retention periods themselves come from the underlying record-keeping rules rather than from Part 11. AI misinformation monitoring records should be managed under the same retention policy as adverse event records, typically a minimum of ten years, extended in some jurisdictions.

Chain of custody matters for the screenshot archive specifically. Screenshots can be altered. A regulatory inspector has legitimate grounds to question the authenticity of a screenshot archive that was assembled after the fact or that lacks consistent metadata. Best practice is to route all monitoring sessions through a system that generates timestamped, hash-verified records at the time of capture — the same approach used in clinical trial data management and in some eDiscovery contexts.
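To illustrate what hash-verified capture can look like, here is a minimal Python sketch that chains each record to the previous one using SHA-256 from the standard library. The function names and record keys are assumptions. A hash chain shows that records were not altered after sealing; it does not by itself prove when a record was created, which would need a trusted timestamping source outside the scope of this sketch.

```python
import hashlib
import json

# Placeholder "previous hash" for the first record in a chain.
GENESIS_HASH = "0" * 64


def seal_record(record, previous_hash):
    """Bind a record to its predecessor by hashing both together."""
    payload = json.dumps(record, sort_keys=True, ensure_ascii=False)
    digest = hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()
    return {"record": record, "previous_hash": previous_hash, "hash": digest}


def verify_chain(entries):
    """Return True only if every entry still matches its stored hash."""
    previous_hash = GENESIS_HASH
    for entry in entries:
        if entry["previous_hash"] != previous_hash:
            return False
        recomputed = seal_record(entry["record"], previous_hash)["hash"]
        if recomputed != entry["hash"]:
            return False
        previous_hash = entry["hash"]
    return True


first = seal_record({"query_text": "q1", "response_text": "r1"}, GENESIS_HASH)
second = seal_record({"query_text": "q2", "response_text": "r2"}, first["hash"])
chain_ok = verify_chain([first, second])
```

Because each hash depends on the one before it, editing or removing any earlier record invalidates every later entry, which is what makes after-the-fact alteration detectable.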

The Emerging Regulatory Landscape: Where This Is Heading

The regulatory environment for AI-generated health content is moving faster than most pharma regulatory teams realize. The framework that exists today — which is essentially a gap in existing frameworks — will not persist through 2026.

FDA’s Digital Health Center of Excellence

The FDA’s Digital Health Center of Excellence has been actively developing guidance on AI in health contexts since 2023. Its January 2025 discussion paper on generative AI explicitly identified AI-generated drug information as a category requiring industry attention and proposed that companies marketing drugs have an obligation to monitor ‘reasonably foreseeable channels’ of patient misinformation about their products.

‘Reasonably foreseeable channels’ is not defined language — it was used in a discussion paper, not final guidance — but in FDA’s historical usage, it has been interpreted broadly. Major consumer AI platforms that process millions of health queries daily are as ‘reasonably foreseeable’ as social media or health forums, which the FDA has already established as monitoring expectations for certain product categories.

EMA’s AI Action Plan

The European Medicines Agency’s 2025-2028 AI Action Plan includes explicit provisions for what it calls ‘AI-mediated risk communication monitoring.’ The EMA’s position is that benefit-risk communication is a core part of a marketing authorization holder’s obligations, and that AI-generated content that systematically distorts benefit-risk perception for a product is a benefit-risk communication failure that the MAH has an obligation to detect and address.

This framing has direct implications for post-authorization safety commitments. Companies with active post-authorization safety studies (PASS) or risk management systems (RMS) may find that AI misinformation monitoring is interpreted as a required component of those systems even without explicit guidance language, because it falls within the general obligation to monitor and mitigate identified risks to benefit-risk balance.

The PMDA and Asia-Pacific Trajectory

Japan’s Pharmaceuticals and Medical Devices Agency has been less public in its AI guidance development, but internal communications obtained through Japan’s information disclosure processes indicate that PMDA reviewers have begun asking about AI misinformation monitoring in pre-approval meetings for high-profile products. The expectation is not yet formalized, but the question is being asked — which is typically the precursor to formalized expectation.

The broader Asia-Pacific trajectory, including regulatory activity in South Korea, Australia, and Singapore, follows the EMA model more closely than the FDA model. Companies building monitoring programs primarily for FDA compliance should review EMA’s AI Action Plan language, which is more prescriptive about what benefit-risk communication monitoring requires.

AI Misinformation and Brand Share: The Commercial Dimension

Regulatory compliance is the floor of why AI misinformation monitoring matters. The ceiling is commercial — the impact of systematic AI misinformation on prescribing behavior, patient adherence, and market share.

How AI Misinformation Moves Prescribing

A 2024 survey of 1,200 U.S. primary care physicians conducted by Veeva Systems found that 68% reported patients bringing AI-generated drug information to clinical visits. Of those physicians, 41% reported that AI-generated information had influenced a patient’s willingness to start or continue a therapy — in either direction.

The mechanism is predictable. Patients who use AI chatbots as pre-visit research tools arrive with preformed beliefs about their drugs. A patient who has read that their GLP-1 receptor agonist causes thyroid cancer in ‘many’ patients (a systematic overstatement of a rare, animal-study-derived risk) is harder to retain on therapy regardless of what their physician says. A patient who has been told that their PCSK9 inhibitor is equivalent to high-intensity statin therapy (an efficacy misrepresentation that understates the PCSK9 inhibitor’s advantage in statin-intolerant patients) may resist a switch that would actually benefit them.

Both directions of misinformation are commercially damaging, and both are documentable. The commercial analytics layer of an AI misinformation monitoring program quantifies which inaccuracies are most prevalent, on which platforms, and what the patient-population reach of each inaccuracy is. That data feeds directly into the medical affairs team’s clinical education priorities and the market access team’s payer communication strategy.

Share of AI Voice

Pharmaceutical competitive intelligence has historically tracked share of voice in physician-directed channels — journal advertising, conference presence, medical education content, sales force reach. AI changes the competitive intelligence question fundamentally.

When a patient or prescriber asks an AI chatbot to compare two drugs in the same class, the AI’s response is a competitive positioning event. The content of that response — which drug is framed as first-line, which is framed as having a better tolerability profile, which is mentioned first — influences prescribing and patient behavior at scale. This is share of AI voice, and it is currently unmeasured in most commercial analytics frameworks.

DrugChatter’s competitive monitoring module tracks share of AI voice by running standardized comparative queries across platforms and scoring AI responses for which drug is positioned favorably, neutrally, or unfavorably. The output is a share-of-voice metric analogous to the media monitoring metrics pharma commercial teams already use — except that it reflects what AI systems are actually saying to patients, not what companies are spending on promotion.
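For teams that want to reason about such a metric themselves, the arithmetic is straightforward once responses have been scored. The Python sketch below is a generic illustration and makes no claim about how any vendor computes its metric. The two measures it reports (favorable share and a net score) and the function name are assumptions.

```python
from collections import Counter


def share_of_ai_voice(scored_responses):
    """Summarize (drug, label) pairs, label being one of
    'favorable', 'neutral', or 'unfavorable'."""
    counts_by_drug = {}
    for drug, label in scored_responses:
        counts_by_drug.setdefault(drug, Counter())[label] += 1

    summary = {}
    for drug, counts in counts_by_drug.items():
        total = sum(counts.values())
        summary[drug] = {
            # Fraction of responses that position the drug favorably.
            "favorable_share": counts["favorable"] / total,
            # Favorable minus unfavorable, as a fraction of all responses.
            "net_score": (counts["favorable"] - counts["unfavorable"]) / total,
        }
    return summary


scores = share_of_ai_voice([
    ("Drug A", "favorable"),
    ("Drug A", "favorable"),
    ("Drug A", "neutral"),
    ("Drug A", "unfavorable"),
    ("Drug B", "neutral"),
    ("Drug B", "unfavorable"),
])
```

In this example Drug A has a favorable share of 0.5 and a net score of 0.25, while Drug B has 0.0 and -0.5. The scoring step itself, deciding whether a response is favorable, is the hard part and needs human or carefully validated review.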

The commercial implication is direct. A drug that is systematically positioned unfavorably in AI comparative responses — even if that positioning is inaccurate — faces an AI-sourced headwind in patient demand and prescriber receptivity. Quantifying that headwind is the first step to addressing it.

Integrating AI Monitoring Into Medical Affairs Operations

Medical affairs teams are the natural organizational home for AI misinformation monitoring because they already own the intersection of scientific accuracy, label compliance, and healthcare provider education. But the integration requires structural changes to how medical affairs operates.

The Medical Information Linkage

Medical affairs teams field tens of thousands of medical information requests per year from healthcare providers and patients. Those requests are a dataset. They reveal what people don’t know or believe incorrectly about your drugs. Until recently, medical information teams had no systematic way to trace whether a cluster of similar misconceptions came from a common source.

AI monitoring creates that linkage. When medical information teams see a spike in questions about a specific drug-drug interaction — one that the label addresses clearly but that AI chatbots are systematically misrepresenting — they can now trace that spike to its source. That traceability improves the quality of medical information responses, informs MSL field guidance, and creates a documented chain of evidence connecting AI misinformation to patient-level impact.

The operational integration requires two changes. Medical information intake systems need to add a data field capturing whether the inquiry was informed by AI-generated content — simple yes/no, with a free-text field for the patient or provider to paste or describe what the AI said. And the data from that field needs to flow into the same analytics environment as the AI monitoring program output.

MSL Field Intelligence

Medical Science Liaisons are the field function best positioned to assess the real-world impact of AI misinformation on physician behavior. They have the relationships, the clinical credibility, and the visit frequency to observe how AI-generated information is shaping prescriber beliefs.

Building structured AI misinformation intelligence into MSL field reporting requires adding specific questions to the post-visit reporting template: Did the physician mention AI-generated information? What did the physician report that the AI said? How did the physician respond to it? This structured field intelligence, aggregated across a national MSL team, gives medical affairs a real-world signal that complements and validates the platform-level monitoring data.

Technology Infrastructure: What You Actually Need

The technology stack for AI misinformation monitoring does not need to be custom-built. The components exist. The question is integration and workflow.

Query Automation

Running a comprehensive query set across six major AI platforms, with all required data fields captured, cannot be done manually at scale. At the monitoring cadence required for a high-exposure drug (monthly baseline, plus runs triggered by label changes and competitive events), a comprehensive query set can run to several hundred individual query executions per cycle. Manual execution is feasible for a small query set on a single platform. It is not feasible for systematic monitoring of a portfolio of drugs across a multi-platform landscape.

Query automation tools for AI monitoring exist in two forms: specialized pharmaceutical monitoring platforms and general-purpose AI testing infrastructure repurposed for monitoring applications. Specialized platforms (DrugChatter is one of the few purpose-built for pharmaceutical regulatory and commercial monitoring) offer pre-built query management, structured output capture, and PV integration workflows. General-purpose tools require more configuration but offer greater flexibility for teams with specific workflow requirements.

Natural Language Processing for Inaccuracy Classification

Manual review of AI response text for inaccuracy classification is feasible for low query volumes. At scale, natural language processing tools can assist with initial classification — flagging responses that contain terms inconsistent with label language, that reference dosing parameters outside labeled ranges, or that assert efficacy claims not supported by approved prescribing information.

NLP-assisted classification is not a replacement for human medical review. It is a triage tool that focuses human review on the instances most likely to be clinically significant. The classification that goes into the regulatory defense file has to be human-confirmed, but NLP can reduce the volume of manual review required by 60-80% depending on query volume and platform.
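As a deliberately simple stand-in for the triage step, the Python sketch below uses pattern matching rather than a full NLP model. It flags responses that mention a milligram dose above a labeled maximum or that contain phrases a reviewer has marked as inconsistent with the label. The regular expression, function name, and example values are all illustrative assumptions, and the output is a prompt for human review, not a classification.

```python
import re

# Matches simple dose mentions such as "40 mg" or "2.5mg".
DOSE_PATTERN = re.compile(r"(\d+(?:\.\d+)?)\s*mg\b", re.IGNORECASE)


def flag_for_review(response_text, max_labeled_dose_mg, red_flag_phrases):
    """Return a list of reasons this response should get human review."""
    reasons = []
    for match in DOSE_PATTERN.finditer(response_text):
        if float(match.group(1)) > max_labeled_dose_mg:
            reasons.append(f"dose above labeled maximum: {match.group(0)}")
    lowered = response_text.lower()
    for phrase in red_flag_phrases:
        if phrase.lower() in lowered:
            reasons.append(f"phrase inconsistent with label: {phrase}")
    return reasons


reasons = flag_for_review(
    "You can take up to 40 mg daily; it has no significant interactions.",
    max_labeled_dose_mg=20,
    red_flag_phrases=["no significant interactions"],
)
```

An empty list means no rule fired. It does not mean the response is accurate, which is why a sample of unflagged responses would still need periodic human spot checks.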

Integration With Existing Safety Systems

The most important technology decision is not which monitoring platform to use. It is how AI monitoring data integrates with existing pharmacovigilance and drug safety systems. If AI monitoring output lives in a separate system with no integration into the ICSR database or signal detection platform, the monitoring program is operationally siloed and its value is limited to detection.

Full integration means that Category 1 flags from AI monitoring generate records in the safety database with the same data structure as spontaneous adverse event reports — even though they are not adverse event reports. They are flagged as a distinct record type (AI misinformation signal), but they are searchable alongside adverse event data, included in signal detection analyses, and reviewed by the same PV team that reviews spontaneous reports.
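The shared-structure idea can be sketched as a single record shape with a type discriminator. Every field name below is an assumption chosen for illustration and does not reflect the schema of any real PV platform or the ICSR standard.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class RecordType(Enum):
    SPONTANEOUS_AE = "spontaneous_adverse_event"
    AI_MISINFORMATION_SIGNAL = "ai_misinformation_signal"


@dataclass(frozen=True)
class SafetyRecord:
    """Hypothetical common structure for both record types."""
    record_id: str
    record_type: RecordType
    product: str
    event_term: str        # safety concept involved, e.g. a coded term
    received_at: datetime
    source: str            # reporter for AE reports, platform for AI signals
    narrative: str


def search(records, product, event_term):
    """Return all records for a product and term, regardless of record type."""
    return [r for r in records
            if r.product == product and r.event_term == event_term]


records = [
    SafetyRecord("AE-001", RecordType.SPONTANEOUS_AE, "ExampleDrug",
                 "QT prolongation", datetime(2025, 3, 1, tzinfo=timezone.utc),
                 "physician", "Patient experienced QT prolongation."),
    SafetyRecord("AI-001", RecordType.AI_MISINFORMATION_SIGNAL, "ExampleDrug",
                 "QT prolongation", datetime(2025, 3, 4, tzinfo=timezone.utc),
                 "platform-a", "Response stated no interaction exists."),
]

hits = search(records, "ExampleDrug", "QT prolongation")
ai_only = [r for r in hits
           if r.record_type is RecordType.AI_MISINFORMATION_SIGNAL]
print(len(hits), len(ai_only))  # 2 1
```

Because both record types share one structure, a single search surfaces them together, while the `record_type` field keeps AI signals from being counted as adverse event reports.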

This integration is technically achievable with most major PV platforms. It requires a custom data model extension and some workflow configuration, but it does not require a bespoke build.

Case Study: A Label Change Creates an AI Misinformation Window

Consider a hypothetical that maps closely to situations multiple pharma companies have encountered in the past 18 months.

A company updates the prescribing information for a cardiovascular drug to add a new contraindication — use with a specific class of antibiotics due to a newly identified QT prolongation interaction. The updated label is filed with the FDA, approved, and published on DailyMed. The company sends a Dear Healthcare Provider letter.

Over the following four months, every major AI platform continues to tell patients and prescribers that the drug has no significant interactions with the antibiotic class in question. The old information is in the training data. The new label information is not — AI training pipelines typically lag DailyMed updates by three to six months.

During that window, patients who consult AI before starting the antibiotic are systematically told that their cardiovascular drug is safe to continue. Some of them experience QT prolongation events. Some of those events generate adverse event reports. None of those reports, as documented, note that the patient was following AI-generated guidance.

The company that has an AI monitoring program detects the contraindication misinformation within two weeks of the label change, documents it as a Category 1 instance across four platforms, escalates to PV and Regulatory, initiates platform notification, and launches a targeted authoritative content campaign to accelerate accurate information into AI training pipelines. The company that does not have an AI monitoring program learns about the misinformation six months later when a physician mentions it during an MSL visit.

The regulatory exposure in these two scenarios is not the same. The adverse events happened in both scenarios. But only one company has a documented record showing it detected the signal, took action, and managed the risk systematically. That record is the difference between a routine post-marketing safety update and a regulatory enforcement action premised on inadequate pharmacovigilance.

The Cost of Not Documenting

There is a common objection to AI misinformation monitoring programs: that the regulatory requirements aren’t clear enough yet to justify the investment. This objection misreads the risk calculus.

Clear regulatory requirements are typically preceded by enforcement actions that set the precedent on which those requirements are later based. The FDA’s requirements for social media monitoring, for example, did not emerge from published guidance first. They emerged from enforcement actions against companies that were demonstrably aware of problematic promotional content on social media and took no action. The guidance followed the enforcement.

The AI misinformation enforcement action that sets the precedent for the pharmaceutical industry has not happened yet. When it does, the company involved will almost certainly be one that had no monitoring program, no documentation record, and no evidence of systematic awareness and response. That company will become the case study that drives the industry to build monitoring programs.

Every pharmaceutical company should prefer building the monitoring program now to becoming the case study that creates the regulatory precedent.

The investment required is not large relative to the exposure. A purpose-built AI monitoring platform costs a fraction of the annual budget of a mid-size medical affairs team. The personnel requirement is modest — a monitoring program for a single drug can be operated by one trained analyst running query cycles and routing escalations per SOP. The documentation infrastructure reuses regulatory document management systems that already exist.

The cost of not documenting is harder to quantify and much larger: adverse event signal detection failures, regulatory enforcement exposure, commercial brand share erosion from uncorrected AI misinformation, and the reputational cost of being the company that knew and did nothing.

Key Takeaways

AI misinformation about drugs is a safety signal. Treat it with the same documentation rigor as an adverse event report. Category 1 instances — safety-relevant misinformation — require immediate escalation and PV linkage, not quarterly reviews.

The documentation record has to be built before the problem is discovered. A monitoring program assembled after a regulatory inquiry is not a monitoring program. It is evidence of retroactive awareness, which is worse than no record.

Platform notification is a documented action, not a primary mitigation strategy. Major AI platforms do not have clinical pharmacology review processes. Notify them, document the notification and response, and pursue authoritative content publication as the more structurally reliable mitigation channel.

PV integration is not optional. AI monitoring data that is not integrated into signal detection has limited regulatory value. Category 1 flags need to flow into the safety database in real time, not on a monthly reporting cycle.

The regulatory framework is converging faster than most teams realize. FDA, EMA, and PMDA are all moving toward explicit AI misinformation monitoring expectations. Companies that already have established SOPs, documented records, and demonstrable regulatory defensibility when guidance is finalized will be in a structurally different position from companies building programs under regulatory pressure.

Commercial impact is measurable. Share of AI voice is a real competitive metric. AI misinformation that systematically disadvantages your drug in comparative queries is a commercial headwind that can be quantified and partially mitigated through authoritative content strategy.

The investment is modest relative to the exposure. A systematic AI monitoring program for a single drug can be operational within 60 days with existing technology platforms. The regulatory and commercial risk it mitigates is not modest.

FAQ

Q: How does AI misinformation monitoring relate to existing pharmacovigilance obligations under 21 CFR Part 314?

The connection is indirect but real. 21 CFR 314.81 requires post-marketing reporting of significant new information that might affect the safety, effectiveness, or labeling of a drug. AI platforms are now high-reach channels through which patients receive information that shapes their medication behavior. The FDA has not yet explicitly defined AI monitoring as a Part 314 obligation, but its Digital Health Center of Excellence’s January 2025 discussion paper used language (‘reasonably foreseeable channels’) that is consistent with Part 314’s existing surveillance scope. Companies with active pharmacovigilance obligations should treat AI monitoring as falling within that scope now, before guidance makes it explicit.

Q: What is the evidentiary standard for AI misinformation records in an FDA inspection?

There is no AI-specific evidentiary standard yet. In the absence of specific guidance, the applicable standard is the general 21 CFR Part 11 requirement for electronic records: they must be trustworthy, reliable, and capable of being accurately retrieved throughout the retention period. For AI monitoring records, this means timestamped capture, hash-verified archives for screenshots, a structured database format for the instance log, and a documented chain of custody for all records from capture through storage. Records assembled from memory or reconstructed from informal notes are not Part 11 compliant.
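As a rough illustration of timestamped, hash-verified capture, the sketch below uses only the Python standard library. The record fields and function names are assumptions. This shows the integrity-check idea only and covers none of the access controls, audit trails, or validation a Part 11 system also needs.

```python
import hashlib
import json
from datetime import datetime, timezone


def capture_record(platform: str, query: str, response_text: str,
                   screenshot: bytes) -> dict:
    """Build one instance-log entry with a UTC timestamp and screenshot hash."""
    return {
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "platform": platform,
        "query": query,
        "response_text": response_text,
        # SHA-256 digest lets a later reviewer confirm the archive is unaltered.
        "screenshot_sha256": hashlib.sha256(screenshot).hexdigest(),
    }


def verify_screenshot(record: dict, screenshot: bytes) -> bool:
    """Check an archived screenshot against the hash stored at capture time."""
    return hashlib.sha256(screenshot).hexdigest() == record["screenshot_sha256"]


# Placeholder bytes stand in for a real screenshot file.
original = b"placeholder screenshot bytes"
record = capture_record("platform-a", "Does ExampleDrug interact with X?",
                        "No significant interactions.", original)

print(json.dumps(record, indent=2))
print(verify_screenshot(record, original))            # True
print(verify_screenshot(record, b"altered content"))  # False
```

Storing the digest alongside the log entry, and the screenshot itself in a separate archive, means any later change to the archived file is detectable at retrieval time.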

Q: Can AI misinformation monitoring data be used in label revision submissions?

Yes, and in some cases it should be. If AI monitoring reveals that a specific safety concept is systematically misunderstood — by patients and AI systems that are reflecting patient-level information — that pattern is evidence that the current label language is insufficiently clear for its intended audience. Label revision submissions to the FDA increasingly include communication research data demonstrating that label text is understood correctly by the target population. AI misrepresentation patterns are a complement to that communication research: they show what patients actually believe when they process label-derived information through imperfect intermediaries.

Q: How should companies handle a situation where an AI platform refuses to correct documented misinformation after formal notification?

Document the refusal. A written record of notification, the specific inaccuracy flagged, the label citation provided, and the platform’s non-response or refusal to act is evidence that the company met its duty to attempt correction. It also supports any subsequent regulatory communication in which the company explains that misinformation persisted despite documented mitigation efforts. The practical implication of platform non-response is that the authoritative content strategy — ensuring accurate information is published and optimized for AI training pipeline ingestion — becomes the primary mitigation channel, and the timeline for that campaign should be accelerated.

Q: How does share of AI voice differ from traditional share of voice, and why does it matter for market access?

Traditional share of voice measures promotional spend and reach in physician-directed channels — journal advertising, conference presence, sales force call volume. It predicts prescriber awareness of a drug’s existence and basic positioning. Share of AI voice measures how AI systems represent a drug when patients and prescribers query them directly, with no promotional framing. It affects what patients believe about a drug before they see their physician, what questions physicians field from patients who have already consulted AI, and what comparative positioning is communicated to patients making adherence decisions. For market access specifically, if AI systems are systematically positioning a drug as second-line relative to a competitor in the same class — regardless of what clinical evidence or clinical guidelines actually say — that positioning erodes the commercial case for the drug in ways that traditional market access strategies are not designed to address.
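One simple way to put a number on this is sketched below. It assumes share of AI voice is defined as the fraction of comparative-query responses in which a given drug is the one positioned first-line. That definition, the function name, and the sample data are illustrative assumptions rather than an established industry formula.

```python
from collections import Counter


def share_of_ai_voice(first_line_picks: list) -> dict:
    """Map each drug to the fraction of responses that positioned it first-line.

    `first_line_picks` holds one drug name per reviewed AI response.
    """
    counts = Counter(first_line_picks)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {drug: n / total for drug, n in counts.items()}


# Hypothetical review results for four comparative queries.
picks = ["DrugA", "DrugB", "DrugB", "DrugB"]
shares = share_of_ai_voice(picks)
print(shares)  # {'DrugA': 0.25, 'DrugB': 0.75}
```

Tracked per monitoring cycle, a metric like this would show whether comparative positioning is shifting over time, and whether an authoritative content campaign is having any measurable effect.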