There used to be a reliable playbook. You identified the twenty physicians who shaped prescribing behavior in a given therapeutic area, mapped their conference appearances, tracked their publications, and set up dinner programs. The Key Opinion Leader was a known quantity — a person you could call, brief, and, within ethical limits, influence.

That playbook has a new competitor. It does not attend conferences. It does not accept honoraria. It answers questions at 2 a.m. and it reaches more patients per week than your entire speaker bureau does in a year. It is the large language model chatbot — ChatGPT, Gemini, Claude, Perplexity — and it is now one of the most consequential communicators of drug information in the world.

In a survey of 2,000 Americans commissioned by Drip Hydration and conducted by Censuswide, 39 percent of respondents said they trusted tools such as ChatGPT in navigating healthcare decisions, a figure that outpaced both neutral sentiment (31 percent) and outright distrust (30 percent). That single number should appear on the first slide of every brand team’s quarterly review. Nearly four in ten Americans say they trust an AI tool with healthcare decisions, and a patient who trusts the tool is likely to consult it before, or instead of, a physician when weighing treatment. Your drug is being discussed in those conversations whether you know it or not.

Most pharmaceutical companies do not know it. That is the problem this article addresses.

Part One: The Anatomy of an Invisible Influencer

How Chatbots Became Drug Counselors

The shift happened faster than most pharma executives anticipated. When OpenAI released ChatGPT to the public in November 2022, the immediate conversation in boardrooms centered on drug discovery acceleration and clinical trial design — legitimate applications with clear ROI. The patient-facing dimension got far less attention.

Search engines have long served as a primary resource for patients to obtain drug information, but the search engine market shifted rapidly with the introduction of AI-powered chatbots. The difference between a Google search and a ChatGPT response is not merely technical — it is psychological. A search engine returns a list of links that the user must evaluate. A chatbot returns a synthesized answer in conversational prose, delivered with the tone of a confident advisor. Patients frequently do not distinguish between the two in terms of credibility, but they experience the chatbot response as more authoritative because it feels like a direct reply.

At least a third of people living with mental illness report frequently using AI for decision support, and almost half of mental healthcare practitioners report frequently using it for medical writing. The appeal of freely available chatbots like ChatGPT and DeepSeek is that they respond faster than a web search and synthesize actionable medical information at no cost to anyone with internet access.

The cost-and-access dimension matters enormously for pharma’s regulatory exposure. A KOL making an off-label claim can be traced, disciplined by a medical society, and reported to the FDA. A chatbot making the same claim — or, more dangerously, omitting a critical contraindication — operates in a regulatory gray zone that neither the FDA nor the platforms themselves have fully addressed.

What Chatbots Actually Say About Your Drugs

The research on chatbot accuracy is uneven enough to make any pharma compliance officer uneasy — but not in the direction they might expect. The problem is not uniformly bad information. It is inconsistency, selective omission, and context collapse.

A study published in BMJ Quality & Safety queried Bing Copilot on ten frequently asked patient questions about the 50 most prescribed drugs in the U.S. outpatient market, covering indications, mechanisms of action, instructions for use, adverse reactions, and contraindications. Median completeness and median accuracy were both 100 percent, but readability scores indicated the responses were consistently difficult for average patients to parse.

That sounds reassuring until you dig into the details. Completeness at the median says nothing about the distribution of errors on the edges. And accuracy measured against a pharmaceutical encyclopedia does not capture the kind of nuanced, patient-specific guidance that drives real-world harm — or, from a brand perspective, the kind of contextual framing that shapes therapy choice.

A separate study on real-world drug information questions found a starker picture. Researchers evaluating ChatGPT-3.5 and ChatGPT-4 against Lexicomp found that both versions demonstrated limited accuracy and reproducibility in answering clinician drug information questions, suggesting that healthcare professionals should exercise caution when relying on ChatGPT for drug information.

The gap between patient-facing and clinician-facing accuracy matters. Patients ask simpler questions: ‘Can I take this with alcohol?’ ‘What happens if I miss a dose?’ ‘Is this safe during pregnancy?’ Clinicians ask harder questions about dosing adjustments in renal impairment and interaction profiles. The models do better on the former — but the former is precisely where brand messaging and patient experience intersect.

Researchers evaluating ChatGPT responses on three drugs — tirzepatide, citalopram, and apixaban — found that across all versions, experts and interns identified missing critical safety information. For tirzepatide, there was a consistent lack of guidance on hypoglycemia management for diabetic patients. For apixaban, key storage instructions and specific precautions were routinely absent.

Tirzepatide is a blockbuster. Its manufacturer, Eli Lilly, spends hundreds of millions annually to shape how physicians and patients think about its risks and benefits. An AI chatbot routinely omitting hypoglycemia guidance — an omission that could directly affect patient safety and that regulators may eventually treat as a brand liability — is not a fringe concern. It is an active risk.

The Disclaimer Collapse

There is a subtler problem that brand teams tend to underestimate. Chatbots once routinely flagged their own limitations when answering medical questions. That behavior has nearly disappeared.

Whereas 26 percent of chatbot answers to health queries back in 2022 contained some kind of warning about the AI not being a doctor, fewer than one percent of responses in 2025 had such a reminder. The models have been optimized for user satisfaction, which means they have been trained away from the hedging that might have provided some protective friction between the chatbot answer and the patient’s next action.

This creates a specific regulatory exposure that pharma companies have not yet mapped. When a patient reads a drug’s package insert, they encounter a standardized document governed by FDA-approved labeling. When they ask a chatbot the same question, they get a synthesized response with no labeling oversight, no risk communication framework, and no boxed warning visible. The chatbot is not bound by the drug’s approved label; it is operating on whatever it absorbed during training, filtered through its reinforcement learning from human feedback (RLHF) tuning.

For drugs with narrow therapeutic windows, serious adverse events, or active off-label use patterns, this is not a theoretical concern. It is an ongoing, unmeasured conversation happening millions of times per month.

Part Two: Why This Is a Brand Intelligence Problem First

Share of Voice Has a New Dimension

Pharmaceutical commercial teams track share of voice obsessively. They count journal citations, conference presentations, social media mentions, and physician detailing interactions. They measure earned media placements and monitor formulary coverage discussions. They have built elaborate systems for understanding how their drug is positioned relative to competitors in every channel that influences prescribing.

None of those systems captures what an AI says about your drug when a patient or physician asks about it.

This is a competitive intelligence gap of the first order. Consider a scenario that plays out thousands of times per day: a physician is in a patient encounter, the patient mentions they looked up their new prescription on ChatGPT, and the AI described the competitor’s drug in that therapeutic class as the ‘first-line option recommended by major guidelines.’ That framing — accurate or not, current or not — just did real commercial damage. It created a moment of doubt in the physician-patient relationship at exactly the point where adherence decisions get made.

In the pharmaceutical and healthcare industries, the opinions of a select few can profoundly impact the fate of a new treatment or therapy. Key Opinion Leaders have long been sought after by pharmaceutical companies to validate their products and inform treatment decisions. With the rise of digital media, a new breed of influencer — the Digital Opinion Leader — is increasingly shaping the healthcare conversation.

The AI chatbot is not exactly a KOL or a DOL. It does not have an identity, a salary, or a conflict-of-interest form. But it performs the same function: it shapes the mental model that a physician or patient has of a therapeutic category before a prescribing decision gets made. The question ‘What do the major AIs say about my drug?’ deserves the same routine monitoring that ‘What is Dr. [prominent thought leader] saying about my drug?’ used to receive.

The Training Data Problem

Chatbots are not neutral conduits of current medical knowledge. They are frozen snapshots of the internet as it existed at the time of their training, processed through algorithms that weight sources in ways their developers do not fully disclose.

This creates several specific risks for branded drugs:

Recency gaps. A chatbot trained before a label update, a new Phase III readout, or a guideline revision will describe your drug based on older data. If a competitor drug received a positive safety update six months ago and the chatbot’s training predates it, patients asking for a comparison will get a systematically misleading answer.

Source weighting. Chatbots draw heavily on the public internet, which skews toward media coverage, forum discussions, and older academic papers — not the most current prescribing information. A drug that generated significant media coverage of adverse events three years ago may carry that reputation in AI responses long after the label risk was contextualized or mitigated.

Hallucination at the edges. ChatGPT-3.5 has been shown to retain information from non-traditional sources including social media, making it susceptible to hallucination and sourcing inaccurate information from unverified sources. Additionally, the models may demonstrate misalignment — optimizing for objectives other than the requested information — leading to responses that explain a drug’s mechanism rather than directly answering the clinical question asked.

For a drug brand, a hallucinated side effect profile is not merely a reputation problem. If a patient changes or discontinues therapy based on a chatbot-generated adverse event that is not actually in the approved label, there are patient safety and potential liability implications.

Category Framing: The Most Underappreciated Risk

Individual drug mentions are the obvious thing to monitor, but the more commercially consequential issue is category framing. Chatbots do not just answer questions about specific drugs — they answer questions like ‘What is the best medication for type 2 diabetes?’ or ‘What should I ask my doctor about if I have moderate-to-severe plaque psoriasis?’

Those category-level responses establish the competitive frame before a specific drug is ever mentioned. Which drugs appear in the ‘first-line’ slot? Which drugs get described as ‘newer options’ with ‘better tolerability profiles’? Which drugs get associated with phrases like ‘older class’ or ‘generally being replaced by’?

This framing is enormously consequential for launch-stage drugs, for drugs in competitive classes with multiple viable options, and for drugs facing biosimilar or generic competition. A brand that has invested in building guideline-level positioning through KOL programs and publications may find that its messaging has not propagated into AI training data with the same fidelity it has into the physician community.

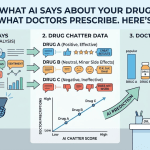

Platform tools like DrugChatter exist precisely to close this gap — systematically querying major AI models about specific drugs and competitive categories, capturing the responses, and analyzing them for message accuracy, guideline alignment, safety communication completeness, and competitive positioning. The goal is not to ‘manage’ AI the way you might manage a KOL — it is to understand what the AI is saying so your commercial team can make informed decisions about where messaging gaps exist and where patient or physician education is most needed.

Part Three: The Regulatory Dimension

What the FDA Is Watching

Pharmaceutical companies are well-versed in FDA oversight of promotional communications. The rules governing what a sales representative can say, what a sponsored CME program can include, and what a branded website can claim have been refined over decades of enforcement actions and guidance documents.

AI-generated drug information does not fit neatly into that framework. The FDA does not — yet — regulate what ChatGPT says about your drug. But it is actively building the regulatory infrastructure that may eventually address adjacent questions.

In January 2025, the FDA issued draft guidance providing recommendations on the use of AI intended to support regulatory decision-making about drug safety, effectiveness, and quality — the first guidance the agency issued on AI use for drug and biological product development. That guidance is focused on AI used in drug development and regulatory submissions, not on consumer-facing chatbots. But it reflects an agency that is rapidly building AI expertise and institutional attention.

In June 2025, the FDA deployed an agency-wide large language model assistant called ‘Elsa’ to support internal scientific review and inspection planning. An agency that is deploying its own AI tools internally is an agency that will develop opinions — quickly — about how AI affects drug safety communication.

The more immediate regulatory risk is not enforcement of AI chatbot outputs — it is how those outputs interact with existing pharma obligations. Consider these scenarios:

Adverse event reporting. If a patient reports a harm to a chatbot and the chatbot response constitutes a point of patient contact, does the drug company have any obligation to capture that interaction? Current pharmacovigilance frameworks were not designed with AI intermediaries in mind.

Off-label amplification. If a widely used AI chatbot consistently describes an unapproved use of your drug — not because your company promoted it, but because academic literature discussing that use made it into training data — and a patient acts on that description, what is the regulatory and liability position of the manufacturer?

Labeling currency. FDA-approved labeling is the ground truth of what a drug’s risks and benefits are. Chatbots operate on training data that may not reflect the most recent label. Monitoring for chatbot responses that contradict current approved labeling is not just a commercial exercise — it is a pharmacovigilance input.

Drug sponsors must adapt their pharmacovigilance, medical information, and lifecycle management approaches to accommodate new challenges. Many sponsors are considering how AI, machine learning, and natural language processing can improve existing workflows, while regulators are providing evolving guidance that emphasizes a risk-based approach to implementation.

The EMA and International Dimension

The European Medicines Agency has taken a more structured and cautious approach to AI in drug development than the FDA, prioritizing rigorous upfront validation and comprehensive documentation before AI systems are integrated into the development process. A milestone was reached in March 2025 with the EMA’s first qualification opinion on AI methodology, accepting clinical trial evidence generated by an AI tool for diagnosing inflammatory liver disease.

Neither the EMA nor any other major regulatory authority has yet issued specific guidance on how pharmaceutical companies should monitor AI chatbot outputs about their drugs. But the trajectory of regulatory attention is clear. Companies that develop systematic AI monitoring capabilities now — before guidance requires them — will be better positioned when that guidance arrives.

The international dimension also matters for global brand teams. What an AI says about a drug in the U.S. is not necessarily what it says in Germany or Japan, because models may weight local-language internet sources differently and because chatbot behavior can vary by market. Global companies with drugs in multiple markets need monitoring systems that cover both English-language and local-language AI responses.

Part Four: Building the Monitoring Infrastructure

What ‘Tracking AI Mentions’ Actually Means

The phrase ‘tracking AI mentions’ sounds straightforward but covers several distinct activities that require different methodologies.

The first is systematic querying — regularly and reproducibly asking major AI models the questions that patients and physicians actually ask about your drug and your category. This means going beyond brand name queries and asking: ‘What is the best treatment for [condition]?’ ‘What are the main side effects of [drug]?’ ‘Is [drug] safe for patients with [comorbidity]?’ ‘How does [drug] compare to [competitor]?’ ‘What do guidelines recommend for [condition]?’

Each of these questions may elicit different responses depending on the model, the version, the date of query, and sometimes even the phrasing. Tracking requires standardized query sets, regular cadence, and a logging system that captures responses over time — because model outputs change as models are updated.
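A standardized query set and capture log of the kind described here could be sketched as follows. This is a minimal illustration in Python under stated assumptions, not any vendor’s implementation: `TEMPLATES`, `Capture`, `build_queries`, `run_queries`, and `fake_model` are hypothetical names, and the stub function stands in for a real chatbot API client.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable

# Templates mirror the example questions in the text; placeholders are filled per brand.
TEMPLATES = [
    "What is the best treatment for {condition}?",
    "What are the main side effects of {drug}?",
    "How does {drug} compare to {competitor}?",
]

@dataclass
class Capture:
    model: str          # platform label (hypothetical, e.g. "model-a")
    model_version: str  # version string as reported by the platform
    query: str          # exact phrasing that was sent
    response: str       # full response text
    captured_at: str    # ISO 8601 UTC timestamp

def build_queries(drug: str, condition: str, competitor: str) -> list[str]:
    """Expand every template with the same fixed values so runs stay comparable."""
    return [t.format(drug=drug, condition=condition, competitor=competitor)
            for t in TEMPLATES]

def run_queries(queries: list[str], model: str, model_version: str,
                ask_model: Callable[[str], str]) -> list[Capture]:
    """Send each query through ask_model and log the response with its metadata."""
    return [
        Capture(model, model_version, q, ask_model(q),
                datetime.now(timezone.utc).isoformat())
        for q in queries
    ]

# Stub used only for illustration; a real program would call a vendor API here.
def fake_model(prompt: str) -> str:
    return f"(stub answer to: {prompt})"

queries = build_queries("DrugX", "condition Y", "DrugZ")
log = run_queries(queries, "model-a", "2025-01", fake_model)
```

Keeping the exact query phrasing, model version, and timestamp in each record is what makes later runs comparable when a model is updated.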

The second is response analysis — evaluating those captured responses against the ground truth of your approved label, current clinical guidelines, and your commercial messaging architecture. Which safety claims are present? Which are missing? Which claims about efficacy align with your approved indication? Which competitive comparisons are accurate and which mischaracterize your drug?

The third is trend monitoring — tracking how AI responses to your queries change over time, in response to model updates, to new published literature that enters training data, or to high-profile media events (an adverse event story, a new competitor approval, a guideline update) that shift the AI’s framing of your category.
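One simple way to flag that a response has shifted between two runs is a word-level similarity score. The sketch below uses Python’s standard `difflib.SequenceMatcher`; the function names, the 0.8 threshold, and the sample responses are illustrative assumptions. A low score only says the wording changed, not whether the change matters, so flagged items still need human review.

```python
from difflib import SequenceMatcher

def similarity(a: str, b: str) -> float:
    """Word-level similarity between two responses, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.split(), b.split()).ratio()

def flag_changes(previous: dict[str, str], current: dict[str, str],
                 threshold: float = 0.8) -> list[str]:
    """Return queries that are new or whose response similarity fell below threshold."""
    flagged = []
    for query, new_text in current.items():
        old_text = previous.get(query)
        if old_text is None or similarity(old_text, new_text) < threshold:
            flagged.append(query)
    return flagged

# Hypothetical responses from two monthly runs of the same query set.
previous = {
    "q1": "Drug X is a first-line option for condition Y.",
    "q2": "Side effects include nausea and headache.",
}
current = {
    "q1": "Drug X is a first-line option for condition Y.",
    "q2": "Drug X is generally being replaced by newer options.",
}
changed = flag_changes(previous, current)
```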

The fourth is competitive intelligence — systematically comparing how AI models describe your drug versus how they describe competitor products in the same class, including what language they use, what they place first-line, and what they characterize as differentiated.
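As a rough illustration of the competitive comparison, the sketch below records which drug a response mentions first and which framing phrases share a sentence with each drug. Everything here is an assumption made for illustration: the drug names are placeholders, the phrase list is taken from the framing examples earlier in this article, and plain substring matching is a crude proxy that will miss paraphrases.

```python
import re

# Framing phrases drawn from the category-framing examples in this article.
FRAMING = ["first-line", "newer option", "older class", "generally being replaced by"]

def mention_order(response: str, drug_names: list[str]) -> list[str]:
    """Return the drugs that appear in the response, ordered by first mention."""
    text = response.lower()
    positions = {}
    for name in drug_names:
        idx = text.find(name.lower())
        if idx != -1:
            positions[name] = idx
    return sorted(positions, key=positions.get)

def framing_by_drug(response: str, drug_names: list[str]) -> dict[str, list[str]]:
    """For each drug, list the framing phrases found in the same sentence."""
    sentences = re.split(r"(?<=[.!?])\s+", response)
    found = {name: [] for name in drug_names}
    for sentence in sentences:
        low = sentence.lower()
        for name in drug_names:
            if name.lower() in low:
                found[name].extend(p for p in FRAMING if p in low)
    return found

response = ("Guidelines usually recommend DrugZ as the first-line choice. "
            "DrugX is a newer option.")
drugs = ["DrugX", "DrugZ", "DrugQ"]
order = mention_order(response, drugs)
framing = framing_by_drug(response, drugs)
```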

The DrugChatter Approach

DrugChatter is purpose-built for this monitoring function. The platform runs systematic, standardized queries against major AI models on behalf of pharmaceutical brands, capturing responses and analyzing them against clinical accuracy benchmarks, approved labeling, and guideline alignment.

The commercial value is not just defensive. Brand teams can use AI monitoring outputs to:

Identify which educational messages have propagated into AI training data and which have not, informing where additional publication or media coverage may be needed to shift the AI’s understanding of your drug.

Spot competitive claims that appear in AI responses without factual basis — giving your medical affairs team specific, documented instances to address.

Detect early signals that a new label update, new competitor, or new guideline has not yet been reflected in AI responses — a patient education gap that could affect adherence.

Monitor whether safety information required by your label is being communicated accurately in AI responses, a pharmacovigilance input that existing systems do not capture.

<blockquote> ‘The lines between traditional KOL influence and AI-generated guidance are blurring faster than most pharma organizations can track. Companies that treat AI monitoring as a commercial priority, not just a technology curiosity, will be the ones that understand their brand’s real-world voice.’ (Pharma Executive, March 2026) </blockquote>

Building the Right Query Sets

The foundation of any AI monitoring program is a well-designed query library. Most brand teams start with brand name queries and expand from there, but the most commercially and regulatorily sensitive queries are often not brand-name queries.

Effective query libraries cover four domains:

Category queries that establish how the AI frames the competitive landscape — ‘What medications are used to treat [indication]?’ These responses establish the mental model that precedes any brand-specific discussion.

Mechanism and efficacy queries that establish how the AI characterizes your drug’s clinical profile relative to the approved label and current evidence base.

Safety and tolerability queries that establish whether the AI is accurately communicating risk information consistent with your labeling and your RiskMAP or REMS if applicable.

Patient-type queries that test how the AI advises on use in specific patient populations — elderly patients, patients with renal impairment, pediatric patients, pregnant patients — where label restrictions are most likely to be omitted or mischaracterized.

The queries should be documented, versioned, and run on a regular schedule — monthly at minimum for active commercial brands, quarterly for lifecycle management products. Each run produces a dataset that can be trended over time.
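A versioned query library with a run cadence might be represented along these lines. This is a sketch under assumptions: the class and field names, the brand-stage labels, and the sample queries are hypothetical, and the cadence check counts calendar-month boundaries rather than exact days.

```python
from dataclasses import dataclass
from datetime import date
import hashlib

@dataclass(frozen=True)
class QueryLibrary:
    version: str   # human-assigned version label
    queries: dict  # domain name -> list of query strings

    def fingerprint(self) -> str:
        """Stable content hash to record with each run, so results trace to exact wording."""
        canonical = "\n".join(
            f"{domain}|{q}" for domain in sorted(self.queries) for q in self.queries[domain]
        )
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

# Monthly for active commercial brands, quarterly for lifecycle management products.
CADENCE_MONTHS = {"active_commercial": 1, "lifecycle_management": 3}

def is_due(last_run: date, today: date, brand_stage: str) -> bool:
    """True when enough calendar months have passed since the last run."""
    months_elapsed = (today.year - last_run.year) * 12 + (today.month - last_run.month)
    return months_elapsed >= CADENCE_MONTHS[brand_stage]

library = QueryLibrary(
    version="1.0.0",
    queries={
        "category": ["What medications are used to treat [indication]?"],
        "mechanism_efficacy": ["How does [drug] compare to [competitor]?"],
        "safety": ["What are the main side effects of [drug]?"],
        "patient_type": ["Is [drug] safe for patients with [comorbidity]?"],
    },
)
```

Storing the fingerprint next to each run makes it possible to tell whether a change in responses came from the model or from an edit to the query wording.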

Part Five: The Commercial Strategy Implications

Medical Affairs Has a New Brief

Medical affairs teams have traditionally been responsible for the scientific exchange that shapes how physicians understand a drug in the context of emerging evidence. They managed publications strategy, scientific advisory boards, and the relationship with KOLs who anchor therapeutic area thinking.

AI monitoring adds a new dimension to this brief. The question is no longer only ‘What are the leading physicians in this therapeutic area saying about our drug?’ It includes ‘What is the leading AI saying about our drug?’ — and the answer to the second question influences millions more people per week than the answer to the first.

This does not mean that medical affairs should attempt to ‘influence’ AI models the way they influence KOLs. Attempting to game AI training data through low-quality publications or keyword-stuffed content would be both ineffective and potentially fraudulent. What it means is that the same principles that make publication strategy effective in the physician education context — high-quality, well-designed studies published in journals with strong indexing and citation networks — also drive what AI models learn about your drug.

Machine learning systems are already used to uncover influence patterns within professional networks and digital footprints: by examining citation trends, collaboration networks, and social media engagement, they can identify emerging thought leaders before they achieve widespread recognition. The signals that make a KOL visible to those systems, chiefly heavily cited work in well-indexed journals, are also the signals that carry consensus views into the text AI models learn from. High-quality publications in high-impact journals shape AI training data because they shape the internet’s authoritative content.

This means that investments in evidence generation — Phase IV studies, real-world evidence analyses, comparative effectiveness research — have a dual return: they educate physicians directly and they shape what AI learns about your drug over time.

Patient Communication and the AI Touchpoint

Data shows that 31 percent of Americans — and 37 percent of women — are using chatbots to prepare questions for doctor visits, while 23 percent are looking to avoid medical expenses, and 20 percent have turned to AI for a second opinion on medical decisions.

These are not patients who are replacing their physicians with AI. They are patients who are arriving at physician appointments better prepared, armed with AI-synthesized summaries of what they think they need to know. The physician encounter is increasingly downstream from an AI interaction.

This reframes the patient communication function. Patient websites, patient support programs, and branded disease awareness campaigns have traditionally been designed to influence what patients say to their doctors. Now they need to account for the fact that AI is often the intermediate step between the patient’s information-seeking and the physician conversation.

From a practical standpoint, this means that patient-facing content that is publicly indexed and well-structured for AI comprehension — clear, factual, label-consistent information about your drug published on accessible domains — is more likely to appear in AI training data and retrieval contexts than content buried in PDF-format patient education materials.

Launch Strategy in the AI Age

For drugs in pre-launch or at launch, the AI dimension is particularly consequential. A drug launching into a competitive class is entering a landscape where AI already has opinions about that class — and those opinions are based on the existing competitors, the existing literature, and the existing guideline structure.

The pre-launch medical affairs strategy should now include an AI audit of the category: what do major AI models currently say about the target indication, what drugs do they position as standard of care, and what evidence gaps or limitations of existing therapies do they acknowledge? This baseline defines the narrative position that the launch drug needs to displace or build upon.

Post-launch, AI monitoring becomes a real-time signal about how the drug’s clinical profile is being understood outside the physician community the commercial team is directly reaching. A drug that is described consistently as ‘appropriate for patients who fail first-line therapy’ in AI responses — even if that is not the approved indication — may be experiencing a coverage and prescribing restriction that is being reinforced rather than challenged by the AI.

The pharmaceutical industry increasingly recognizes both KOLs and DOLs as essential — KOLs primarily provide scientific credibility within specific therapeutic areas, while DOLs usually offer broader reach and real-time responsiveness. Advanced implementations demonstrate these capabilities by processing millions of daily touchpoints while maintaining accurate professional verification. AI chatbots now occupy a third position: they process orders of magnitude more touchpoints than either KOLs or DOLs, with no real-time responsiveness to industry input.

Part Six: The Hallucination Risk in Pharmacovigilance

When AI Gets Safety Wrong

The pharmacovigilance dimension of AI monitoring is the one that should command the most attention from regulatory affairs teams — not because it is the most common problem, but because the consequences when it occurs are most severe.

Consider what happens when an AI chatbot generates a response that includes an adverse event that is not in a drug’s approved label. This could occur through several mechanisms: hallucination (the model generates plausible-sounding but false information), training data contamination (the model absorbed a published case report or forum post describing an idiosyncratic reaction and represents it as a general risk), or generalization from a drug class (the model attributes class-level risks to a drug without checking whether they apply specifically to that molecule).

From the patient’s perspective, reading that their drug causes a particular adverse event — regardless of whether that claim is accurate — may lead to discontinuation, dose self-adjustment, or acute anxiety. All three outcomes have real-world health consequences.

From the manufacturer’s perspective, if the AI-generated adverse event claim is picked up by a patient who contacts a company medical affairs line, that interaction may trigger an adverse event report — for an event that never occurred. Pharmacovigilance systems are not currently designed to categorize or efficiently handle AI-hallucinated adverse event reports.

More concerning is the inverse: an AI that fails to communicate a real, label-required adverse event warning. One expert evaluating AI-generated drug information emphasized that failing to include critical safety details could lead to dangerous misuse, particularly in patients with underlying medical conditions. The failure to communicate a required risk may not expose the manufacturer to liability in the way that a promotional omission would — but it creates patient safety exposure and brand reputation risk if associated with patient harm.

Monitoring for Safety Signal Accuracy

Any systematic AI monitoring program needs to include safety-focused queries. These queries test whether the AI is:

Accurately reporting the drug’s approved label risks.

Communicating serious adverse events (boxed warnings, REMS requirements) with appropriate prominence.

Advising appropriate consultation with a healthcare provider before making medication changes.

Correctly identifying contraindications for specific patient populations.

Failures in any of these areas are potential inputs to pharmacovigilance reporting or to regulatory risk communications — not because the manufacturer caused the failure, but because the manufacturer has an interest in understanding it and, where possible, correcting the public record through improved labeling communication and patient education.
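A first-pass version of these safety checks can be as simple as testing each captured response against a checklist of required topics. The sketch below is an illustration only: the checklist contents are hypothetical and would in practice be derived from the approved label by medical affairs. Phrase matching cannot judge prominence or correctness, so it serves to triage responses for expert review rather than to replace that review.

```python
import re

def check_required_terms(response: str, required: dict[str, list[str]]) -> dict[str, bool]:
    """For each required topic, report whether any accepted phrasing appears in the response."""
    text = response.lower()
    result = {}
    for topic, phrasings in required.items():
        result[topic] = any(
            re.search(r"\b" + re.escape(p.lower()) + r"\b", text) for p in phrasings
        )
    return result

# Hypothetical checklist; real entries would come from the drug's current approved label.
required = {
    "hypoglycemia": ["hypoglycemia", "low blood sugar"],
    "consult_provider": ["talk to your doctor", "healthcare provider"],
}
response = "Common side effects include nausea. Talk to your doctor before changing your dose."

findings = check_required_terms(response, required)
missing = [topic for topic, present in findings.items() if not present]
```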

Part Seven: Building Organizational Capability

Who Owns AI Brand Monitoring?

One of the practical barriers to pharmaceutical companies systematically tracking AI mentions of their drugs is the organizational question: whose job is this?

Brand marketing teams own commercial brand positioning. Medical affairs owns scientific exchange and publication strategy. Regulatory affairs owns labeling compliance. Pharmacovigilance owns adverse event monitoring. Patient advocacy owns patient community relationships.

AI brand monitoring cuts across all of these functions. A response that mischaracterizes a drug’s efficacy relative to a competitor is a commercial issue. A response that omits a boxed warning is a regulatory and pharmacovigilance issue. A response that describes an unapproved use is a regulatory issue. A response that recommends a competitor drug as first-line is a commercial issue.

The most effective organizational structures assign primary ownership of AI monitoring to medical affairs — because the ground truth for evaluating AI responses is the approved label and the clinical evidence base, which is medical affairs’ domain — with defined escalation pathways to regulatory affairs for labeling accuracy issues, pharmacovigilance for adverse event signal issues, and brand marketing for competitive positioning issues.

DrugChatter’s workflow is designed around this organizational reality. The platform generates reports that are structured for multi-function review: safety accuracy findings are formatted for pharmacovigilance, competitive positioning findings are formatted for brand teams, and labeling alignment findings are formatted for regulatory affairs. This structure reduces the friction that typically prevents cross-functional adoption of new monitoring tools.

The Technology Stack

Effective AI brand monitoring requires purpose-built tooling. General social media monitoring platforms are not designed for systematic AI model querying, response capture, or label-alignment analysis. The queries need to be reproducible, the responses need to be logged with timestamps and model version information, and the analysis needs to be structured against a knowledge base that includes the drug’s approved label, major guideline documents, and key clinical evidence.

A minimum viable AI monitoring capability includes:

A standardized query library, versioned and documented, covering the four domains described earlier (category, mechanism/efficacy, safety, patient-type).

A scheduled query execution system that runs queries at defined intervals across the major AI platforms (ChatGPT variants, Gemini, Claude, Perplexity at minimum).

A response logging system that preserves full responses, model version, query phrasing, and date of capture.

An analysis layer that compares responses against the drug’s current approved label, flags deviations, and categorizes findings by type (safety omission, efficacy mischaracterization, competitive framing, contraindication failure, off-label reference).

A reporting structure that routes findings to the appropriate functional owners.
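
To make the components above concrete, here is a minimal Python sketch of the logging, analysis, and routing pieces. It is an illustration under stated assumptions, not a description of how DrugChatter or any other platform works: the class names, the routing table, and the naive keyword matching are all hypothetical, and a real analysis layer would need expert review against the full approved label rather than substring checks.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical routing table. Owner names follow the functions named in this
# article; the exact mapping is an assumption for illustration.
ROUTING = {
    "safety_omission": ["pharmacovigilance", "regulatory_affairs"],
    "off_label_reference": ["regulatory_affairs"],
    "competitive_framing": ["brand_marketing"],
}


@dataclass(frozen=True)
class CapturedResponse:
    """One logged AI response: full text plus the metadata needed to reproduce it."""
    platform: str
    model_version: str
    query: str
    response: str
    captured_at: datetime


@dataclass(frozen=True)
class LabelProfile:
    """Simplified stand-in for a label knowledge base (assumption: keyword lists)."""
    required_safety_terms: tuple[str, ...]
    off_label_terms: tuple[str, ...]


def flag_findings(capture: CapturedResponse, label: LabelProfile) -> list[tuple[str, str]]:
    """Return (category, term) pairs using naive case-insensitive substring checks."""
    text = capture.response.lower()
    findings = []
    for term in label.required_safety_terms:
        if term.lower() not in text:
            findings.append(("safety_omission", term))
    for term in label.off_label_terms:
        if term.lower() in text:
            findings.append(("off_label_reference", term))
    return findings


def route(findings: list[tuple[str, str]]) -> dict[str, list[tuple[str, str]]]:
    """Group findings by functional owner; unmapped categories go to medical affairs."""
    routed: dict[str, list[tuple[str, str]]] = {}
    for category, detail in findings:
        for owner in ROUTING.get(category, ["medical_affairs"]):
            routed.setdefault(owner, []).append((category, detail))
    return routed


# Usage with an invented drug name and an invented response.
capture = CapturedResponse(
    platform="example-platform",
    model_version="unknown",
    query="Is Examplumab safe?",
    response="Examplumab is generally well tolerated and is sometimes used for weight loss.",
    captured_at=datetime(2025, 1, 15, tzinfo=timezone.utc),
)
label = LabelProfile(
    required_safety_terms=("boxed warning",),
    off_label_terms=("weight loss",),
)
routed = route(flag_findings(capture, label))
```

In this invented example, the missing boxed warning is routed to both pharmacovigilance and regulatory affairs, and the off-label mention is routed to regulatory affairs. Categories without an explicit route fall back to medical affairs, mirroring the primary-ownership model described earlier.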

For most pharmaceutical brand teams, building this capability internally is not practical — it requires API access to multiple AI platforms, ongoing query maintenance as models update, and a knowledge base that stays current with label changes. Platforms like DrugChatter exist to provide this capability as a service, allowing brand teams to receive structured findings without building the underlying infrastructure.

Part Eight: What Good Looks Like — And What Comes Next

The Mature Monitoring Program

A mature AI brand monitoring program looks different from the reactive, ad-hoc process that most pharma companies currently have (if they have anything at all).

It runs on a defined cadence — monthly at minimum — and generates reports that go to defined owners. It tracks trends over time, so that changes in AI responses are flagged when they occur and can be correlated with external events (model updates, publications, media coverage). It covers the brand’s full competitive set, not just the brand itself. It includes patient-type queries that test the AI’s handling of special populations. And it generates actionable findings — not just observations, but recommendations for medical affairs, patient education, and regulatory affairs response.
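
Trend tracking of this kind can be as simple as comparing the findings from one monitoring run against the previous one. The sketch below is a hypothetical illustration: the `Finding` fields and the query identifiers are invented for the example, and a production program would likely track far richer metadata.

```python
from typing import NamedTuple


class Finding(NamedTuple):
    """A single flagged deviation, identified by where and how it occurred."""
    platform: str
    query_id: str
    category: str


def diff_runs(previous: list[Finding], current: list[Finding]) -> dict[str, list[Finding]]:
    """Classify findings as new, resolved, or persistent between two runs."""
    prev, curr = set(previous), set(current)
    return {
        "new": sorted(curr - prev),
        "resolved": sorted(prev - curr),
        "persistent": sorted(curr & prev),
    }


# Invented data for two consecutive monthly runs.
january = [
    Finding("platform-a", "safety-01", "safety_omission"),
    Finding("platform-b", "category-03", "competitive_framing"),
]
february = [
    Finding("platform-a", "safety-01", "safety_omission"),
    Finding("platform-b", "safety-01", "safety_omission"),
]
changes = diff_runs(january, february)
```

Here the safety omission on the first platform persists, the competitive framing finding has resolved, and a new safety omission has appeared on the second platform. New findings are the ones worth correlating with external events such as model updates or recent publications.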

The monitoring program is integrated with the brand’s existing intelligence infrastructure. Findings from AI monitoring inform the publication strategy (where are the evidence gaps that AI is exploiting?), the patient education program (where is the AI giving patients incomplete safety information that a direct patient communication could supplement?), and the regulatory watchlist (where is the AI making claims that a regulatory affairs team needs to document and potentially address?).

The Regulatory Horizon

The regulatory environment around AI and drug information is moving. The CDER AI Steering Committee serves as the central coordinating body working with complementary programs, and in December 2025, the FDA announced the deployment of agentic AI capabilities for all agency employees. An FDA that is actively deploying AI internally is an FDA that will develop expertise in how AI systems handle drug information — and that expertise will eventually shape regulatory expectations for drug manufacturers.

The FDA’s current draft guidance on AI in drug development does not directly address consumer chatbots. But the agency’s evolving attention to AI-generated pharmacovigilance signals, AI in medical information responses, and AI in patient safety communications is visible in the IQVIA-flagged priority list for 2025.

Regulators are providing evolving guidance that emphasizes a risk-based approach to AI implementation, and drug companies must build a broad toolkit, emphasize cross-team collaboration, and enlist the help of seasoned experts wherever possible.

The companies that build systematic AI brand monitoring programs now are not just managing a current commercial risk. They are building the documentation, the institutional knowledge, and the organizational capability that will position them well when regulators begin asking what pharmaceutical companies know — and what they did — about AI-generated communications involving their drugs.

The KOL Parallel, Revisited

The analogy between AI chatbots and traditional KOLs is useful but imperfect. A KOL is a person with a professional reputation, relationships, accountability, and the capacity to update their views based on new evidence through direct scientific exchange. An AI model is none of those things.

But the functional parallel — that both KOLs and AI models shape how physicians and patients understand therapeutic choices, at scale, outside of direct promotional channels — is real and consequential. Pharmaceutical companies learned to treat KOL monitoring as a core commercial intelligence function because the cost of not knowing what influential physicians were saying about their drugs was too high.

The cost of not knowing what influential AI models are saying about drugs is higher. Not because AI responses are more authoritative — they are not — but because the reach is greater by orders of magnitude, the feedback loops that correct human errors are absent, and the regulatory implications of AI-generated drug misinformation are still being written.

Recent industry analyses reveal that around 40 to 50 percent of traditionally identified KOLs could be categorized as ‘Loud Opinion Leaders’ who excel at securing speaking slots and publishing frequently but show limited evidence of affecting real-world treatment decisions or research directions. This misalignment has cost the pharmaceutical industry billions in misallocated engagement resources.

The industry spent decades and billions trying to identify who actually influences prescribing decisions. AI chatbots have emerged as influencers with reach that dwarfs any individual KOL — and most pharmaceutical companies do not yet have a structured program to monitor what they say.

That is the gap DrugChatter addresses. Systematic, structured, continuous monitoring of AI-generated drug information — so that brand teams, medical affairs, and regulatory affairs know what the new KOL is saying about their drugs, in real time, with the analytical framework to act on what they find.

Key Takeaways

AI chatbots now function as drug counselors at scale. Nearly 4 in 10 Americans report trusting AI tools for healthcare decisions. Patients consult chatbots before physician visits, and those interactions shape prescribing conversations that pharmaceutical commercial programs are not currently reaching.

The accuracy risk is asymmetric. Average chatbot drug information accuracy is reasonable but inconsistent. The failures cluster in critical places: safety omissions, patient-specific contraindications, and competitive framing. Those are precisely the areas where errors cause the most damage — commercially and clinically.

AI monitoring is a multi-function need. Findings from AI brand monitoring are relevant to brand marketing (competitive positioning), medical affairs (message propagation), regulatory affairs (labeling accuracy), and pharmacovigilance (safety communication gaps). Organizational design needs to reflect that.

The regulatory horizon is moving. The FDA is building AI expertise rapidly. Companies that develop AI monitoring capabilities now will be better positioned when regulatory expectations evolve to address consumer-facing AI drug information.

Good publication strategy is part of AI strategy. High-quality, well-indexed publications shape AI training data. Evidence generation that serves the physician education function also shapes what AI learns about your drug over time.

Platforms like DrugChatter close the gap. Building AI monitoring infrastructure internally is impractical for most pharma brand teams. Purpose-built tools provide systematic querying, response logging, label-alignment analysis, and structured reporting — the full capability required to treat AI monitoring as a routine commercial intelligence function.

FAQ

Q: If AI chatbot drug information is not regulated as pharmaceutical promotion, why does it create regulatory risk for manufacturers?

The regulatory risk is indirect but real. First, AI responses that contradict approved labeling or omit required safety information create patient safety exposure that can implicate manufacturers if harm occurs and the AI response is in the evidence chain. Second, as the FDA builds AI expertise, expectations for what manufacturers know about AI-generated drug communications will evolve — and companies with no monitoring program will be poorly positioned. Third, the pharmacovigilance dimension is significant: AI-generated adverse event claims that reach patients may eventually generate safety reports that pharma teams are not equipped to categorize or investigate efficiently.

Q: How frequently do major AI models update their drug knowledge, and how does that affect monitoring cadence?

Major AI platforms update their base models irregularly and do not typically publish drug-specific update schedules. Claude, ChatGPT, and Gemini each have different update patterns, and some offer real-time retrieval-augmented responses that may pull from current sources while others are working from fixed training data. This variability makes regular, scheduled monitoring essential — a query result from three months ago may not reflect current model behavior. Monthly monitoring is a practical minimum for commercial-stage brands; quarterly is appropriate for lifecycle management products.

Q: Can pharmaceutical companies influence what AI says about their drugs, and are there ethical limits?

Pharmaceutical companies can legitimately influence AI training data through the same channels that shape scientific consensus: publishing high-quality evidence in well-indexed journals, generating and publishing real-world evidence, ensuring patient-facing educational content is well-structured and publicly accessible, and participating in guideline development. What they cannot do — and should not attempt — is submit content to AI training pipelines in ways that circumvent scientific peer review, or create low-quality keyword-stuffed content designed to skew AI responses. Beyond being ineffective, such attempts could constitute deceptive promotional practices under existing FDA rules if the content makes promotional claims.

Q: How should a pharmaceutical company handle a discovery that a major AI chatbot is consistently omitting a boxed warning for their drug?

The immediate step is documentation: preserving the specific queries, responses, and dates. That documentation should be routed to regulatory affairs and pharmacovigilance for assessment. From there, the response depends on the nature of the omission. If the AI is drawing on a version of prescribing information that predates a recent label update, the publication strategy may need to include more accessible public-domain versions of the current label. If the omission appears to reflect a gap in the AI’s training data about the drug’s risk profile, medical affairs may need to prioritize publications or communications that establish the risk context more clearly in public-domain sources. Direct outreach to AI platforms is an emerging option, and some platforms have begun accepting feedback about drug safety information, but the effectiveness and terms of such outreach are still being established.

Q: How does AI monitoring integrate with traditional KOL monitoring programs?

Mature commercial intelligence programs track both. Traditional KOL monitoring — identifying which physicians are influencing prescribing patterns and what they are saying — captures the physician-to-physician and physician-to-patient influence layer. AI monitoring captures the AI-to-patient and AI-to-physician influence layer. The two programs generate different types of findings and inform different interventions: KOL monitoring drives engagement strategy and advisory board design, while AI monitoring drives publication strategy, patient education, and regulatory watchlist management. Where they connect is in the evidence base — the same high-quality publications that give KOLs an accurate framework for discussing a drug’s clinical profile also shape what AI learns about it. Treating the two programs as parallel rather than competing is the right organizational stance.