Drug companies spend billions crafting brand narratives. AI is rewriting them in real time — and most manufacturers have no idea what patients and prescribers are actually being told.

The Problem No One in Pharma Is Talking About Loudly Enough

Imagine spending $400 million on a direct-to-consumer campaign for a newly approved GLP-1 receptor agonist. Your medical affairs team has trained hundreds of field reps. Your regulatory submissions are airtight. Your branded website answers every question the FDA will let you answer.

Then a patient asks ChatGPT whether your drug is better than a competitor’s. The model — drawing on clinical abstracts, Reddit threads, drug forums, and whatever else crawled into its training corpus — gives an answer. That answer shapes the conversation the patient has with their physician the next morning. Your $400 million had nothing to do with it.

This is not a hypothetical. It is happening at scale, right now, across every major therapeutic category. And pharmaceutical manufacturers — organizations that can tell you the precise wording of a label update from three years ago — often have no systematic way to know what AI systems are saying about their products, to whom, and with what clinical framing.

The gap between what drug companies think patients and prescribers are hearing and what AI is actually delivering has become one of the most consequential blind spots in modern pharmaceutical marketing. It touches brand equity, pharmacovigilance obligations, regulatory compliance, and competitive strategy simultaneously. Yet most commercial teams treat it as someone else’s problem — a digital team question, or an IT curiosity — rather than a first-order business risk.

What AI Actually Does When Someone Asks About a Drug

To understand why this matters, you need to understand what large language models (LLMs) actually do when a user asks a drug-related question.

Models like GPT-4o, Claude 3.5, Gemini 1.5 Pro, and their successors do not look up answers. They predict the most statistically probable continuation of a prompt, based on patterns learned from training data. That training data includes peer-reviewed literature, manufacturer websites, patient forums, news articles, clinical trial registries, social media, and enormous quantities of unattributed text scraped from across the internet.
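As a deliberately tiny illustration of what predicting the most probable continuation means, the sketch below counts which word follows which in a made-up corpus and returns the most frequent follower. Production LLMs use neural networks over subword tokens rather than word counts; the corpus, the function names, and the product name "drugx" are all hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; "drugx" is a made-up product name.
CORPUS = [
    "drugx may cause nausea",
    "drugx may cause nausea in some patients",
    "drugx may cause headache",
]

def build_bigram_counts(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

counts = build_bigram_counts(CORPUS)
print(most_likely_next(counts, "cause"))  # nausea (2 of 3 occurrences)
```

The toy model returns whatever followed most often in its corpus; nothing in the procedure checks that output against a label or a trial publication.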

The result is an answer that can sound authoritative — complete with mechanism-of-action language, dosing references, and apparent confidence — while being factually wrong, outdated, off-label, or commercially misleading in ways a human medical writer would never approve.

Here is what that looks like in practice. A user asks: ‘Is Ozempic safe for someone with a history of pancreatitis?’ The model may produce a response that accurately reflects the FDA label’s warning language, or it may draw on pre-label forum posts, preliminary case reports, or conflated language from a different GLP-1 product. There is no quality control step. No medical review. No fair balance requirement. The response goes directly to the patient.

Prescribers are not immune. A cardiologist using an AI assistant to quickly review comparative data on PCSK9-targeting therapies, say the antibody evolocumab (Repatha) versus the siRNA inclisiran (Leqvio), may receive a synthesis that accurately reflects the published trial data, or may receive a subtly distorted picture based on how the model weighted sources during training. The physician may never know the difference, and the prescribing decision downstream reflects that interaction.

This is the information environment in which pharmaceutical brands now operate. It is not the one that drug marketing teams built their strategies for.

The Scale of AI-Mediated Health Information

The numbers make the case better than any abstract argument.

<blockquote> ‘By the end of 2024, ChatGPT alone was processing an estimated 100 million queries per day. Health and medical questions consistently rank among the top five query categories across all major AI platforms, accounting for an estimated 7–12% of total query volume.’ <br><br> Rock Health, <em>Digital Health Consumer Adoption Survey</em>, 2024 </blockquote>

Apply that percentage to ChatGPT’s daily volume and you get seven to twelve million health-related AI interactions per day, on a single platform. Add Gemini, Claude, Perplexity, Microsoft Copilot, and the dozens of specialized AI health assistants now embedded in patient portals and pharmacy apps, and the total plausibly reaches tens of millions of health-related AI conversations every day.
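The single-platform arithmetic can be checked in a few lines. This is only a restatement of the quoted estimates (100 million daily queries, 7 to 12 percent health-related); the constant and function names are made up for illustration.

```python
# Figures quoted in the text above; they are estimates, not measurements.
DAILY_QUERIES = 100_000_000
HEALTH_SHARE_LOW_PCT = 7
HEALTH_SHARE_HIGH_PCT = 12

def health_queries_per_day(total_queries, share_pct):
    """Estimated health-related queries per day, using an integer percent."""
    return total_queries * share_pct // 100

low = health_queries_per_day(DAILY_QUERIES, HEALTH_SHARE_LOW_PCT)
high = health_queries_per_day(DAILY_QUERIES, HEALTH_SHARE_HIGH_PCT)
print(f"{low:,} to {high:,} health-related queries per day")
# 7,000,000 to 12,000,000 health-related queries per day
```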

These are not idle searches. A 2024 survey by Wolters Kluwer found that 65% of patients who used AI for health information said the response influenced a conversation they subsequently had with their physician. A separate Doceree analysis found that 38% of physicians reported using AI tools at least weekly to support clinical decision-making.

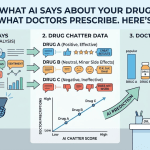

For a drug like Jardiance (empagliflozin), which competes in a crowded SGLT2 inhibitor market alongside Farxiga (dapagliflozin) and Invokana (canagliflozin), the framing that AI tools apply to comparative efficacy questions is not a minor marketing concern. It is a material driver of brand preference at the point of prescribing consideration.

Why Traditional Brand Monitoring Misses All of This

Pharmaceutical brand monitoring has historically focused on four channels: paid media performance, earned media mentions, social listening, and field force feedback. Each of these has established vendors, established metrics, and established processes.

None of them capture what AI says about your drug.

Social listening tools like Brandwatch, Sprinklr, and Talkwalker crawl public posts, comments, and forum threads. They do not monitor AI responses, which are generated dynamically and are not publicly indexed. Earned media tracking covers news articles and press releases, which are likewise published, static content. Field force feedback is qualitative and episodic; reps report what physicians say, but physicians are unlikely to mention that their opinion was shaped by an AI interaction.

The result is a data gap that grows wider as AI adoption accelerates. A pharma brand team can tell you that their drug received 3,200 social media mentions last month with a net sentiment score of 0.62. They cannot tell you whether ChatGPT is describing their drug’s cardiovascular outcomes data accurately, or whether Claude is recommending a competitor when a user asks for ‘the safest blood thinner for someone over 70.’

This is not entirely the fault of the monitoring vendors. The AI response environment presents genuinely novel measurement challenges. Responses are not published — they are ephemeral, generated fresh for each user. There is no URL to index, no post to crawl. Capturing what AI says about a drug requires actively querying AI systems with representative prompts, systematically, at scale, across platforms, and then analyzing the outputs for accuracy, sentiment, competitive framing, and regulatory compliance risk.
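A minimal sketch of that capture step might look like the following. It assumes a caller-supplied `ask(platform, prompt)` function; the `fake_ask` stub, the platform names, and the prompts are placeholders, and no real vendor API is shown or implied.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from itertools import product

@dataclass
class CapturedResponse:
    platform: str
    prompt: str
    response: str
    captured_at: str  # ISO 8601 timestamp, UTC

def fake_ask(platform, prompt):
    """Hypothetical stand-in for a real platform client call."""
    return f"[{platform}] placeholder answer to: {prompt}"

def run_battery(platforms, prompts, ask=fake_ask):
    """Send every prompt to every platform and keep a timestamped record."""
    records = []
    for platform, prompt in product(platforms, prompts):
        records.append(CapturedResponse(
            platform=platform,
            prompt=prompt,
            response=ask(platform, prompt),
            captured_at=datetime.now(timezone.utc).isoformat(),
        ))
    return records

platforms = ["platform_a", "platform_b"]  # placeholder names
prompts = [
    "Is drug X safe for someone with a history of pancreatitis?",
    "Which is better, drug X or drug Y?",
]
records = run_battery(platforms, prompts)
print(len(records))  # 4: two platforms times two prompts
```

Because responses are ephemeral, the timestamp matters as much as the text: it is what lets a team say what a given platform was answering on a given day.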

That is precisely what platforms like DrugChatter are built to do. By running structured query batteries across major AI platforms and analyzing outputs against gold-standard clinical and regulatory references, DrugChatter gives pharmaceutical commercial teams a view into the AI information layer that traditional monitoring tools simply cannot provide. The outputs feed into brand strategy, medical affairs prioritization, and pharmacovigilance workflows in ways that legacy monitoring never could.

The Regulatory Dimension: Who Is Responsible When AI Gets It Wrong?

This question does not yet have a clean answer, and the ambiguity is causing serious discomfort in pharma legal and regulatory affairs departments.

The FDA’s current framework for pharmaceutical promotion was designed for a world of manufacturer-controlled communications: advertisements, sales rep detailing, speaker programs, patient assistance materials. The manufacturer creates the content, the manufacturer bears responsibility for accuracy and fair balance, and the FDA has clear jurisdiction to act when content is misleading.

AI-generated drug information fits none of those assumptions. The manufacturer did not create it. The AI platform did. But the platform is not a pharmaceutical company and is not subject to FDA promotional regulations. The patient or physician receiving the information has no way to distinguish an FDA-reviewed promotional claim from an AI hallucination that happens to sound equally authoritative.

The FDA issued a discussion paper in 2023 on artificial intelligence in drug development, and has made statements about AI and healthcare more broadly, but it has not yet issued formal guidance on the regulatory status of AI-generated drug information. The FTC has flagged AI-generated health claims as a potential consumer protection issue. Neither agency has moved decisively.

In the interim, pharmaceutical manufacturers face an uncomfortable asymmetry: they bear reputational and potentially pharmacovigilance-related consequences when AI gets their products wrong, but they have no regulatory leverage to compel AI platforms to correct inaccurate information, and no formal obligation to monitor what AI says about them.

That last point is the one that keeps medical affairs directors up at night. FDA adverse event reporting requirements are clear: if a manufacturer becomes aware of safety information through any channel, including unsolicited reports from patients or prescribers, they have an obligation to evaluate and potentially report it. The question of whether AI-mediated patient communications constitute a reportable channel has not been tested. But it will be.

Case Study: GLP-1s and the AI Information Mess

No therapeutic category illustrates the problem more vividly than GLP-1 receptor agonists, where a commercially frenzied market, intense media coverage, and genuinely complex clinical profiles have created an AI information environment that is, at best, unreliable.

The class includes the GLP-1 receptor agonists semaglutide (Ozempic, Wegovy, Rybelsus), liraglutide (Victoza, Saxenda), and dulaglutide (Trulicity), among others, and is commonly grouped with tirzepatide (Mounjaro, Zepbound), a dual GIP and GLP-1 receptor agonist. Each has distinct FDA-approved indications, dosing regimens, label warnings, and comparative trial data. Even within semaglutide, the distinctions between the diabetes-indicated and obesity-indicated products (Ozempic versus Wegovy) represent meaningful clinical and regulatory differences that carry real consequences for patient care.

AI platforms routinely blur these distinctions. A 2024 analysis by researchers at the University of California San Francisco found that when asked about semaglutide dosing for weight loss, major LLMs gave responses that were inconsistent with FDA labeling in more than 40% of cases. Some models conflated Ozempic and Wegovy dosing schedules. Others referenced clinical trial doses from the STEP (semaglutide) and SURMOUNT (tirzepatide) trial programs that exceed approved maintenance doses. Several failed to include the label’s boxed warning about thyroid C-cell tumors.

For Novo Nordisk, whose brand management for semaglutide represents one of the most complex pharmaceutical marketing operations on earth, the AI information layer is both a threat and, potentially, an opportunity. The company’s brand teams know exactly what Wegovy’s label says, what the STEP trial data shows, and how the product should be positioned against tirzepatide. What they cannot easily know is how AI platforms are translating that information — or mistranslating it — in the millions of patient interactions that occur daily without any Novo Nordisk involvement.

A platform like DrugChatter can run systematic query audits across major AI platforms — asking the same clinical questions a real patient or prescriber would ask, then scoring responses against regulatory and clinical benchmarks. The outputs tell a brand team not just whether the AI is getting it right, but where the gaps are, which platforms are performing worst, and whether competitor products are being favorably framed in comparative queries.
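To make the idea of scoring a response against a benchmark concrete, here is a rough sketch using phrase matching. It is not a description of how DrugChatter or any other vendor scores responses; the benchmark terms, the product, and the sample response are invented, and real review would need clinical and regulatory judgment rather than string matching.

```python
def score_response(response, required_terms, prohibited_terms):
    """Score a response against a simple phrase-level benchmark.

    Returns the fraction of required terms present, the required terms
    that are missing, and any prohibited terms that appear.
    """
    text = response.lower()
    found = [t for t in required_terms if t.lower() in text]
    missing = [t for t in required_terms if t not in found]
    violations = [t for t in prohibited_terms if t.lower() in text]
    coverage = len(found) / len(required_terms) if required_terms else 1.0
    return {"coverage": coverage, "missing": missing, "violations": violations}

# Hypothetical benchmark for a made-up product; not taken from any label.
benchmark = {
    "required": ["boxed warning", "thyroid c-cell tumors", "once weekly"],
    "prohibited": ["no known risks", "safe for everyone"],
}
response = (
    "Drug X is injected once weekly. It carries a boxed warning, "
    "but it is generally safe for everyone."
)
result = score_response(response, benchmark["required"], benchmark["prohibited"])
print(result["missing"])     # ['thyroid c-cell tumors']
print(result["violations"])  # ['safe for everyone']
```

Run across the captured responses for every platform, per-response results like these can be rolled up into the platform-by-platform gap view described above.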

The Competitive Intelligence Angle: AI as an Uncontrolled Share-of-Voice Channel

Brand teams have spent decades managing share of voice through tightly controlled promotional channels. Detailing frequency, journal ad placements, speaker program scale, DTC spend — all of it is tracked, analyzed, and optimized with the intention of shaping how prescribers and patients think about a product relative to its competitors.

AI has introduced a share-of-voice channel that no brand team controls, and that many are not even measuring.

When a physician asks an AI assistant which SGLT2 inhibitor has the best cardiovascular outcomes data, the model’s response functions as a share-of-voice moment. If the model preferentially surfaces EMPA-REG OUTCOME trial data for empagliflozin while giving less attention to the DECLARE-TIMI 58 data for dapagliflozin — for reasons entirely internal to its training and architecture — that affects brand preference in ways that have nothing to do with promotional effort or media spend.

The same dynamic applies at the consumer level. If a patient asks which cholesterol medication is ‘easiest on the liver’ and the AI response frames rosuvastatin (Crestor) more favorably than atorvastatin (Lipitor) — or vice versa — that framing shapes the conversation the patient has with their doctor. It may influence whether the patient requests a specific drug by name, which remains a potent driver of prescribing behavior in branded pharmaceutical markets.

The intelligence value of systematic AI monitoring for competitive purposes is therefore significant. A brand team that knows its drug is being underrepresented, mischaracterized, or outcompeted in AI responses has actionable information. They can invest in content strategies designed to improve the quality of training data for future model updates. They can brief medical affairs teams to proactively correct misinformation in prescriber-facing channels. They can adjust their clinical education priorities to address the specific gaps that AI monitoring reveals.

This is not abstract. It is the same logic that drove the original investment in social listening — the recognition that brand reputation is being shaped in channels you do not control, and the choice between measuring those channels or ignoring them.

Pharmacovigilance: The Obligation That AI Complicates

The FDA’s pharmacovigilance framework is built on the premise that safety signals emerge from real-world use and that manufacturers have an obligation to collect, analyze, and report them. Post-marketing surveillance requirements under 21 CFR Part 314 are extensive and well-established.

What the framework did not anticipate is a world in which patients routinely discuss drug side effects with AI systems rather than reporting them to their physician or the manufacturer’s medical information line.

This is already happening. Forums like Reddit’s r/diabetes and r/loseit are full of posts from patients describing GLP-1 side effects — nausea, vomiting, gastroparesis symptoms, hair loss — in granular detail. Historically, social listening tools could scan these forums and flag potential adverse event signals for pharmacovigilance review. The FDA has been aware of this capability and has encouraged its use.

Now, some of those conversations are moving to AI chat interfaces. A patient who experiences unexpected symptoms while taking a new medication may ask ChatGPT about it before calling their doctor or their pharmacist. The AI’s response may reassure them, or escalate their concern, or suggest a mechanism of action — none of which gets captured in any pharmacovigilance workflow.

The FDA’s current adverse event reporting regulations focus on what the manufacturer knows or should know. As AI becomes a primary channel through which patients discuss their medication experiences, the question of what a manufacturer ‘should know’ about AI-mediated patient conversations will eventually require regulatory interpretation.

Companies that get ahead of this by building systematic AI monitoring into their pharmacovigilance infrastructure — flagging AI conversations where adverse event signals may be present, and routing those to their safety teams for evaluation — are better positioned both for current compliance and for whatever regulatory requirements eventually emerge.
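A first-pass screen for routing text to a safety team could be as simple as the sketch below. It assumes the company already has lawful access to the text being screened (for example, its own monitoring transcripts); the term list and product name are hypothetical, and a keyword hit is only a prompt for human evaluation, not a determination that an adverse event occurred.

```python
import re

# Hypothetical screening terms; a real list would come from the safety
# team and a standard terminology, not from this sketch.
SIGNAL_TERMS = ["nausea", "vomiting", "gastroparesis", "hair loss", "pancreatitis"]

def flag_for_safety_review(texts, product_names, terms=SIGNAL_TERMS):
    """Return (index, matched_terms) for texts that mention a product
    together with at least one screening term."""
    flagged = []
    for i, text in enumerate(texts):
        lowered = text.lower()
        mentions_product = any(p.lower() in lowered for p in product_names)
        matched = [t for t in terms if re.search(rf"\b{re.escape(t)}\b", lowered)]
        if mentions_product and matched:
            flagged.append((i, matched))
    return flagged

texts = [
    "I started drug X two weeks ago and have constant nausea and hair loss.",
    "What is the usual starting dose of drug X?",
    "My cat has been vomiting all morning.",
]
print(flag_for_safety_review(texts, ["drug X"]))
# [(0, ['nausea', 'hair loss'])]
```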

The Medical Affairs Dimension: When AI Spreads Medical Misinformation About Your Drug

Medical affairs teams have a mission that is both distinct from and complementary to commercial marketing: ensuring that accurate, scientifically sound information about their company’s products reaches healthcare professionals. This mission is increasingly complicated by AI.

The problem is not that AI always gets things wrong. Sophisticated models trained on high-quality clinical literature can produce remarkably accurate summaries of mechanism of action, pivotal trial data, and comparative clinical profiles. The problem is that accuracy is inconsistent and non-transparent. A physician receiving an AI-generated summary of the ODYSSEY OUTCOMES trial data for alirocumab (Praluent) has no way to know whether the summary is drawing on the New England Journal of Medicine publication, an outdated preprint, or a secondhand summary from a medical education website with its own agenda.

Medical affairs teams at companies like Regeneron and Sanofi — co-developers of Praluent — have substantial infrastructure for managing medical information requests. Their field-based medical science liaisons are trained to provide accurate, label-consistent information to physicians who want to go beyond what sales representatives can discuss. Their medical information call centers handle thousands of requests per year.

None of that infrastructure captures what happens when a cardiologist uses an AI tool to quickly review PCSK9 inhibitor outcomes data instead of picking up the phone. The MSL relationship may be intact. The medical information team may be standing by. But the physician used a different channel, and the quality of information they received reflects the quality of whatever the AI system ingested during training.

Systematic AI monitoring gives medical affairs teams something they have never had before: visibility into the clinical information environment that exists outside their direct control. When a monitoring platform shows that AI systems are consistently underweighting a drug’s mortality benefit data relative to its competitor, that is a signal for targeted medical education investment. When AI responses are perpetuating a contraindication that was removed from the label two years ago, that drives MSL field briefings and medical information team talking point updates.

The medical affairs use case for AI monitoring is, arguably, even more important than the commercial one. Commercial misinformation affects market share. Medical misinformation affects patient outcomes.

How Brand Teams Should Think About AI Monitoring: A Practical Framework

Most pharmaceutical commercial teams are not starting from zero on digital intelligence. They have social listening deployments, digital analytics stacks, and — increasingly — data partnerships with specialty pharmacy and claims data vendors. The question is how AI monitoring integrates with and extends what they already have.

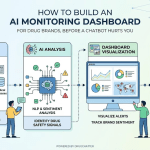

A practical framework has four components.

The first is systematic AI query auditing. This involves running structured batteries of prompts — the questions real patients and prescribers ask — across major AI platforms on a regular cadence. The prompts cover core brand claims, competitive comparisons, safety questions, dosing questions, and off-label queries. Each response is scored against a regulatory and clinical benchmark. The output is a dashboard that tracks AI information quality over time and across platforms.

The second is competitive framing analysis. For each major competitive query — ‘which is better, X or Y?’ — systematic monitoring captures how AI platforms frame the comparison. Which drug gets mentioned first? What trial data gets cited? What language is used to characterize each option? This is direct competitive intelligence that no other monitoring channel can provide.

The third is pharmacovigilance signal detection. AI monitoring outputs are reviewed by safety teams for patterns that may indicate unreported adverse events — patient descriptions of unexpected symptoms, clusters of concerns about specific side effects, or questions about drug interactions that suggest real-world safety issues the company’s surveillance systems have not yet captured.

The fourth is content strategy feedback. When AI monitoring reveals systematic gaps or errors in how a drug is represented, those findings inform content strategy. The goal is to improve the quality of scientific information in the public domain that AI systems will eventually ingest during training updates. This includes investing in high-quality, openly accessible clinical education content, ensuring trial publications are indexed in major scientific repositories with accurate metadata, and working with medical education partners to produce content that accurately represents the drug’s clinical profile.
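The competitive framing questions in the second component (which drug gets mentioned first, and how often) lend themselves to simple measurement. The sketch below is one possible approach under stated assumptions: brand names are matched as plain substrings, and the sample response and the names "Brand A" and "Brand B" are invented.

```python
def first_mentions(response, brands):
    """Return brands in order of first appearance, skipping unmentioned ones."""
    lowered = response.lower()
    positions = {b: lowered.find(b.lower()) for b in brands}
    mentioned = [b for b in brands if positions[b] != -1]
    return sorted(mentioned, key=lambda b: positions[b])

def mention_counts(response, brands):
    """Count how many times each brand name appears in the response."""
    lowered = response.lower()
    return {b: lowered.count(b.lower()) for b in brands}

response = (
    "Both options lower LDL. Brand B has the longer outcomes record, "
    "while Brand A is newer. Many clinicians start with Brand B."
)
brands = ["Brand A", "Brand B"]
print(first_mentions(response, brands))  # ['Brand B', 'Brand A']
print(mention_counts(response, brands))  # {'Brand A': 1, 'Brand B': 2}
```

Order and frequency are crude proxies; characterizing the language used about each option would still need human or more sophisticated review.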

DrugChatter’s platform is designed to support all four of these components, giving pharma brand and medical affairs teams a single interface for understanding, tracking, and responding to how AI describes their products.

The Training Data Problem: Why Correcting AI Is Harder Than Correcting a Label

When a pharmaceutical manufacturer needs to update their FDA-approved labeling — to add a new indication, update a warning, or reflect new safety information — the process is formal, documented, and relatively predictable. The manufacturer files a supplemental NDA or BLA, the FDA reviews it, the label is updated, and the new label becomes the authoritative reference for the product.

Correcting what an AI says about a drug has no equivalent process. It is not clear that any process exists at all.

Large language models are trained on datasets that are assembled, cleaned, and filtered before training begins. Once a model is trained, its parametric knowledge — the information baked into its weights — does not update automatically when new clinical information becomes available. A model trained in early 2024 does not know about a label update that occurred in late 2024, unless the model has access to real-time retrieval tools or has been fine-tuned on more recent data.
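The timing logic in that paragraph can be written as a one-line check. This is a simplification: it treats fine-tuning on newer data as moving the cutoff date forward, assumes the training cutoff is actually known, and treats a retrieval layer as sufficient, none of which is guaranteed in practice. The function name and dates are illustrative.

```python
from datetime import date

def may_be_stale(training_cutoff, label_updated, has_retrieval=False):
    """True when a label change postdates the model's training cutoff and
    the deployment has no retrieval layer that could surface it."""
    return label_updated > training_cutoff and not has_retrieval

# Dates are illustrative only.
cutoff = date(2024, 3, 1)
label_update = date(2024, 11, 15)
print(may_be_stale(cutoff, label_update))                      # True
print(may_be_stale(cutoff, label_update, has_retrieval=True))  # False
```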

The major AI platform operators — OpenAI, Google, Anthropic, Meta — run training cycles on different schedules and with different degrees of transparency about their data sources. None of them have established formal mechanisms for pharmaceutical manufacturers to flag and correct inaccurate drug information, in the way that Wikipedia has an editorial process or that social media platforms have content correction policies.

This means that the pharmaceutical industry’s primary lever for improving AI information quality is indirect: producing high-quality, openly accessible, accurately indexed clinical and scientific content that AI systems will preferentially draw on when generating responses. It also means that retrieval-augmented generation (RAG) systems — AI tools that pull from specified, curated knowledge bases rather than relying purely on parametric knowledge — are significantly more attractive for healthcare applications, because their information currency and accuracy can be controlled.

Several health systems and pharmacy benefit managers are already deploying RAG-based AI tools that draw exclusively from their own curated clinical formularies and FDA labeling databases. For pharmaceutical manufacturers, these systems represent a cleaner information environment — one where the quality of AI outputs can be better controlled. They also represent a channel where direct engagement with health system informatics teams can influence the knowledge bases that AI tools draw from.
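To show why a curated knowledge base gives more control, here is a bare-bones sketch of the retrieval half of a RAG pipeline. Word overlap stands in for the embedding search a production system would more likely use, the knowledge base entries are placeholders rather than real label or formulary text, and the final prompt would be passed to whatever model the deployment uses (not shown).

```python
import re

# Hypothetical curated knowledge base; entries are placeholders.
KNOWLEDGE_BASE = [
    {"id": "label-dosing", "text": "Drug X dosing: start low and titrate per the current label."},
    {"id": "label-warnings", "text": "Drug X warnings: see the boxed warning in the current label."},
    {"id": "formulary", "text": "Drug X formulary status: tier 2, prior authorization required."},
]

def tokenize(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, knowledge_base, top_k=1):
    """Rank entries by word overlap with the question."""
    q = tokenize(question)
    ranked = sorted(
        knowledge_base,
        key=lambda entry: len(q & tokenize(entry["text"])),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, passages):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {question}"

question = "What warnings apply to drug X?"
passages = retrieve(question, KNOWLEDGE_BASE)
print(passages[0]["id"])  # label-warnings
print(build_prompt(question, passages))
```

Updating an entry in the knowledge base changes what the tool can cite immediately, which is the property that parametric knowledge lacks.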

Where Regulatory Clarity Is Heading

The FDA is not ignoring this space. Its evolving framework for AI and machine learning in medical products, developed primarily around diagnostic software and clinical decision support tools, provides some indication of where the agency’s thinking is heading.

The FDA’s 2021 action plan for AI/ML-based software as a medical device signaled an intention to apply a risk-based framework to AI health tools: higher scrutiny for tools that make specific clinical recommendations, lower scrutiny for tools that provide general educational information. Applied to AI chatbots, this framework would suggest treating an AI tool that answers a patient’s question about drug interactions differently from one that recommends a specific dosing regimen.

But this framework was designed for AI tools developed by medical device manufacturers, not for general-purpose LLMs that answer health questions as a byproduct of their general capabilities. Applying device-style regulatory oversight to ChatGPT or Google Gemini faces obvious practical and jurisdictional hurdles that Congress would need to resolve.

The more tractable near-term regulatory development may come from the FTC, which has authority over deceptive and unfair practices in consumer-facing AI. The FTC’s 2023 policy statement on AI made clear that the commission considers AI-generated health misinformation a potential enforcement target. Several FTC investigations into AI health claims are in progress, though none have yet resulted in formal action against a major LLM operator.

The European Union’s AI Act, which came into force in August 2024, classifies AI systems that influence health decisions as high-risk systems subject to conformity assessment requirements, transparency obligations, and human oversight requirements. Whether these provisions will practically improve the quality of drug information in European AI tools remains to be seen, but the regulatory direction is clear.

For pharmaceutical manufacturers operating in this environment, the practical implication is to treat AI monitoring as a proactive regulatory readiness investment. Companies that can document what AI was saying about their products at any given time, and demonstrate that they had processes to identify and respond to misinformation, are better positioned when regulators eventually formalize their expectations.

The MSL’s Changing Role in an AI-Mediated World

Medical science liaisons have always operated in the gap between promotional detailing and peer-to-peer scientific exchange. They are the channel through which manufacturers can discuss pre-approval data, off-label science, and complex clinical nuances that FDA promotion regulations prohibit from sales interactions. Their value proposition rests on being more informed, more credible, and more scientifically rigorous than any other information source a physician can access.

AI threatens that value proposition in a specific way: it is faster, always available, and increasingly credible to physicians who have not been trained to evaluate its accuracy limitations.

A physician who once called a medical affairs hotline to ask a complex mechanism question about a drug in a manufacturer’s pipeline may now ask Claude or Perplexity instead. The answer arrives in seconds. It may be accurate or it may contain errors. Either way, the physician has received information without any MSL involvement.

This creates both a challenge and an opportunity for medical affairs. The challenge is obvious: the MSL’s role as the go-to expert on complex clinical questions is being partially displaced by AI tools that are less accurate but far more convenient. The opportunity is that AI monitoring can now tell medical affairs exactly which questions physicians are asking AI systems, which questions are generating the most inaccurate or incomplete responses, and therefore where MSL-led scientific exchange would be most valuable.

If AI monitoring shows that oncology physicians in large academic centers are asking AI tools about a bispecific antibody’s cytokine release syndrome management protocols and receiving inconsistent guidance, that is a precise brief for MSL field strategy. Send the MSLs with CRS management data. Don’t send them to talk about what the AI is already getting right.
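One simple way to turn monitoring output into a field brief is to rank topics by the expected number of inaccurate answers, that is, query volume multiplied by observed inaccuracy rate. The sketch below assumes both numbers are available per topic, which is itself an assumption about what a monitoring program can estimate; the topics and figures are invented.

```python
def prioritize_topics(topics):
    """Rank topics by volume multiplied by inaccuracy rate, highest first."""
    return sorted(
        topics,
        key=lambda t: t["volume"] * t["inaccuracy_rate"],
        reverse=True,
    )

# Illustrative numbers only.
topics = [
    {"topic": "CRS management", "volume": 400, "inaccuracy_rate": 0.35},
    {"topic": "mechanism of action", "volume": 900, "inaccuracy_rate": 0.05},
    {"topic": "dosing schedule", "volume": 600, "inaccuracy_rate": 0.15},
]
for t in prioritize_topics(topics):
    print(t["topic"], round(t["volume"] * t["inaccuracy_rate"]))
```

With these numbers, CRS management ranks first even though mechanism questions are asked more than twice as often, because the AI is already getting mechanism mostly right.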

This is a smarter, more targeted deployment of MSL resources than the traditional geographic coverage model. It requires that AI monitoring outputs flow systematically to medical affairs leadership — which requires that medical affairs and commercial teams treat AI monitoring as shared infrastructure rather than a marketing department curiosity.

The Patient Journey Has Already Changed. Brand Strategy Has Not.

Pharmaceutical marketing has long used the concept of the ‘patient journey’ to map the touchpoints through which a patient moves from symptom awareness to diagnosis to treatment initiation and adherence. Each touchpoint represents an opportunity for brand exposure and information delivery. Patient journey mapping has become a sophisticated discipline, with teams tracking digital touchpoints, physician interactions, pharmacy encounters, and patient support program engagements.

AI has inserted itself into the patient journey at multiple points, and most patient journey maps have not been updated to reflect this.

A patient with newly diagnosed type 2 diabetes may receive their diagnosis, go home, and immediately ask an AI chatbot to explain their options. Before they have spoken to a diabetes educator, before they have visited a branded website, before they have seen a television ad, they have received an AI-generated summary of the treatment landscape. That summary may mention Jardiance, Farxiga, Invokana, Januvia, and metformin in some order, with some framing and some comparative language, none of which Boehringer Ingelheim, AstraZeneca, or Merck had any involvement in shaping.

The patient arrives at their first endocrinology appointment already anchored to that AI-generated framing. Their questions reflect it. Their openness to the physician’s recommendation is conditioned by it. Whichever brand won or lost in that AI interaction had its influence on the patient journey determined before any conventional marketing touchpoint was reached.

This is not a marginal edge case. As AI health tool adoption increases, this pattern will characterize a growing majority of newly diagnosed patients in major therapeutic categories. The pharmaceutical brands that understand this — and that invest in monitoring and influencing the AI information layer — are building a competitive position that will compound over time. The brands that treat AI monitoring as a future priority are ceding ground they will have a hard time recovering.

What ‘Winning’ in the AI Information Layer Looks Like

There is no regulator-approved mechanism for a pharmaceutical manufacturer to directly prompt an AI to say favorable things about their drug. There is no paid placement, no sponsorship model, no branded AI chatbot that can do what a DTC television ad does.

What manufacturers can do is influence the quality and completeness of the scientific information that AI systems draw on, and measure how well that information is reflected in AI outputs.

‘Winning’ in the AI information layer means four things.

First, it means having high-quality, openly accessible clinical content that accurately represents the product’s evidence base. This includes well-written publications in indexed journals, clear and accurate clinical trial registry entries, accurate FDA label information that is well-indexed and easily machine-readable, and medical education content that is produced to high scientific standards and distributed through channels that AI systems index.

Second, it means monitoring AI outputs systematically enough to know when the information environment has degraded — when a new model version is getting something wrong, when a competitor’s trial data is being overstated, when a label update has not yet been reflected in AI responses.

Third, it means having a response playbook. When monitoring identifies systematic AI misinformation about a product, what does the brand team do? Who owns the response? What channels are activated? Is it a medical affairs issue, a regulatory issue, a communications issue, or all three? Companies that have answered these questions before the crisis are better positioned than those answering them in real time.

Fourth, it means treating AI monitoring data as a first-class input to brand strategy — not a digital team afterthought, but a primary source of competitive intelligence that informs positioning, clinical education investment, and field force deployment.
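The monitoring step described above can be illustrated with a minimal sketch. It assumes the brand team has already collected one response per AI platform for a given prompt, and has a short list of reference facts drawn from the approved label. Every name here (ReferenceFact, missing_facts, audit, the placeholder drug and platform names) is hypothetical and chosen for illustration; no real platform API is called, and the phrase matching is deliberately naive.

```python
from dataclasses import dataclass


@dataclass
class ReferenceFact:
    """A claim a response is expected to convey, with acceptable phrasings."""
    label: str
    accepted_phrases: tuple[str, ...]


def missing_facts(response: str, facts: list[ReferenceFact]) -> list[str]:
    """Return labels of reference facts that no accepted phrase matches."""
    text = response.lower()
    return [
        f.label
        for f in facts
        if not any(p.lower() in text for p in f.accepted_phrases)
    ]


def audit(responses: dict[str, str], facts: list[ReferenceFact]) -> dict[str, list[str]]:
    """Map each platform name to the reference facts its response omitted."""
    return {platform: missing_facts(text, facts) for platform, text in responses.items()}


# Hypothetical example data: the drug, facts, and responses are placeholders.
FACTS = [
    ReferenceFact("once-weekly dosing", ("once weekly", "once a week")),
    ReferenceFact("boxed warning", ("boxed warning", "black box warning")),
]
RESPONSES = {
    "platform_a": "Drug X is injected once a week and carries a boxed warning.",
    "platform_b": "Drug X is taken daily.",
}

if __name__ == "__main__":
    for platform, gaps in audit(RESPONSES, FACTS).items():
        print(platform, "missing:", gaps or "nothing")
```

Substring matching of this kind only detects omissions. It would not catch a response that mentions a boxed warning while denying that one applies, so a production workflow would likely need clinical review or a more capable comparison method on top of it.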

The Vendor Landscape: What Exists and What Doesn’t

The market for pharmaceutical AI monitoring is nascent but growing. Several categories of vendors are relevant, though none fully solve the problem on their own.

Social listening platforms like Brandwatch and Sprinklr have begun developing AI response monitoring capabilities, but these are generally add-ons to platforms built primarily for social media rather than purpose-built pharmaceutical AI monitoring solutions. Their capabilities for benchmarking drug information accuracy are limited.

Clinical intelligence vendors like IQVIA and Komodo Health have deep pharmaceutical data assets and strong regulatory understanding, but their AI monitoring capabilities are primarily focused on AI use in clinical trials and real-world evidence generation rather than on what AI says to patients and prescribers.

Specialty platforms purpose-built for pharmaceutical AI monitoring are the category most directly relevant to this problem. DrugChatter sits in this category, with a specific focus on tracking how AI platforms describe pharmaceutical products, benchmarking AI outputs against clinical and regulatory references, and giving brand and medical affairs teams actionable intelligence about the AI information environment.

The right vendor evaluation criteria for pharma teams include: the breadth of AI platforms covered, the quality of clinical benchmarking, the ability to run custom prompt batteries, the integration with existing brand monitoring workflows, and the capability to flag potential pharmacovigilance signals in AI-mediated conversations.

Building the Internal Case: Who Owns AI Monitoring in Pharma?

One reason AI monitoring has been slow to gain traction in pharma commercial organizations is that it is genuinely unclear who should own it. It touches at least four functions: brand marketing, medical affairs, regulatory affairs, and pharmacovigilance. Each function has legitimate stakes. None has obvious primary responsibility.

The most successful internal advocates for AI monitoring investment tend to frame it in terms that matter to the function they are addressing. For commercial teams, the frame is competitive intelligence and share of voice. For medical affairs, it is scientific accuracy and MSL deployment efficiency. For regulatory affairs and pharmacovigilance, it is proactive compliance and safety-signal readiness. For legal, which is usually consulted alongside these four functions, it is risk management.

The most effective organizational structure is a cross-functional working group with representation from all four functions, reporting to a senior leader with authority to allocate budget and drive action across organizational boundaries. This is not structurally different from how effective social listening governance works in mature pharmaceutical companies — it just has not yet been widely adopted for AI monitoring.

Budget allocation is the other barrier. AI monitoring requires investment in a capability whose ROI is harder to demonstrate than, say, a media buy with clear CPM metrics. The case for investment rests on risk avoidance — the cost of not knowing what AI is saying about your drug — and on competitive intelligence value that accrues over time. Neither argument is simple. Both are true.

The Next Two Years: Where This Goes

The AI information environment will not get simpler. It will get more complex, faster-moving, and more consequential for pharmaceutical brands.

Several near-term developments will raise the stakes.

AI tools embedded in electronic health record systems are moving from pilot to production at major health systems. Epic’s Agentic AI framework, announced in late 2024, enables AI agents to perform clinical workflows within the EHR environment. When AI agents are pulling drug information to support prescribing decisions inside the EHR, the accuracy of that information has direct patient care implications — and the manufacturer of the drug involved has no visibility into what the agent is saying.

Pharmacy chains are deploying AI-powered patient communication tools. CVS, Walgreens, and Amazon Pharmacy have all announced or piloted AI-assisted medication counseling. These systems will field questions about drug side effects, interactions, and adherence at enormous scale. The quality of the drug information they draw on will vary, and most pharmaceutical manufacturers have no relationship with those information pipelines.

Specialty AI health tools — targeted at specific therapeutic areas, patient populations, or clinical workflows — are proliferating. A diabetes management AI that helps patients track their blood glucose and adjust their lifestyle may also answer questions about their medication. An oncology decision support tool used by community oncologists may synthesize trial data in ways that favor certain treatment regimens. Each of these represents an AI information channel that pharmaceutical brand teams currently cannot see.

The manufacturers who build the capabilities to monitor these channels now are building a durable competitive advantage. The ones who wait for regulatory clarity or internal organizational alignment are making a strategic bet that this problem will be easier to solve later than it is today. The evidence suggests they will lose that bet.

Key Takeaways

AI chatbots have become a primary channel through which patients and prescribers access drug information, operating entirely outside the pharmaceutical industry’s existing regulatory and monitoring frameworks.

Major LLMs produce drug-related information that is often inaccurate, outdated, or misleadingly framed — not because they are designed to mislead, but because their training data is imperfect and their outputs lack any medical review process.

Traditional brand monitoring tools — social listening, earned media tracking, field force feedback — do not capture AI-generated responses, creating a growing information blind spot for pharmaceutical commercial and medical affairs teams.

The pharmacovigilance and regulatory implications of AI-mediated drug conversations remain unresolved, but the trajectory of FDA and FTC attention suggests that formal expectations are coming.

Competitive intelligence from AI monitoring is actionable: knowing how AI platforms frame comparative drug questions gives brand teams information they cannot get from any other channel.

Medical affairs teams can use AI monitoring to identify where clinical information gaps are most acute and deploy MSL resources more precisely.

‘Winning’ in the AI information layer is not about gaming AI systems. It is about producing high-quality scientific content, monitoring the AI information environment systematically, and responding to gaps with targeted clinical education investment.

Platforms like DrugChatter exist specifically to give pharmaceutical companies the AI monitoring infrastructure that their legacy tools do not provide — systematic query auditing, competitive framing analysis, and pharmacovigilance signal detection across major AI platforms.

The internal governance challenge — determining which function owns AI monitoring — is real but solvable. The companies that solve it now will have a head start that compounds as AI adoption in healthcare accelerates.

FAQ

Q: Is there any legal risk to pharmaceutical manufacturers if an AI chatbot gives misleading information about their drug?

A: The direct legal risk to manufacturers is currently limited, because they are not producing the AI content and have no contractual relationship with the AI platforms generating it. The indirect risks are more significant. If AI misinformation about a drug leads to patient harm, plaintiffs’ attorneys will almost certainly explore whether the manufacturer had knowledge of the misinformation and failed to address it. The pharmacovigilance question is the most acute: if a manufacturer’s monitoring systems should reasonably have detected AI-mediated adverse event signals and failed to do so, that could create regulatory exposure. The safest position — legally and reputationally — is to monitor proactively and document your response process.

Q: Can pharmaceutical companies pay AI platforms to ensure their drugs are represented accurately?

A: Not in any direct, FDA-compliant way. Paying an AI platform to generate favorable content about a drug would raise serious concerns under FDA promotional regulations, FTC guidelines on undisclosed advertising, and the terms of service of most major AI platforms. What manufacturers can do compliantly is invest in high-quality, openly accessible scientific content that AI systems index and draw on during training. They can also engage with health system technology teams who are deploying RAG-based clinical AI tools to ensure those tools draw on accurate clinical references. Neither approach guarantees outcomes, but both are defensible on legal and regulatory grounds.

Q: How quickly do AI platforms update their drug information when FDA labeling changes?

A: Inconsistently, and often slowly. Models with static training data will not reflect label changes until they are retrained — which may be months or years later. Models with retrieval-augmented generation capabilities that pull from real-time FDA databases are better positioned to reflect current labeling, but even these tools vary in how quickly they incorporate updates and how reliably they apply the most current label to their responses. Systematic AI monitoring is currently the only way for a manufacturer to know whether a specific platform is reflecting a label change accurately.

Q: What is the most common type of AI drug misinformation in high-volume therapeutic categories?

A: Based on available analysis, the most common patterns are: dosing errors (wrong dose, wrong frequency, wrong titration schedule), contraindication omissions (missing or outdated contraindications), off-label indication conflation (describing uses that are not FDA-approved as if they were), and comparative framing errors (misrepresenting trial data to favor or disfavor a specific drug). The GLP-1 category has the highest observed error rate, likely because of the enormous volume of media coverage that introduced non-clinical sources into the training data alongside rigorous clinical content.

Q: What should a pharmaceutical company do immediately if it discovers that a major AI platform is giving systematically wrong information about one of its drugs?

A: The immediate response should run on two tracks simultaneously. The first is internal escalation: brief medical affairs, regulatory, legal, and pharmacovigilance. Document what the AI is saying, when you discovered it, and on which platforms. If the misinformation could affect patient safety, evaluate whether it constitutes a reportable pharmacovigilance signal. The second track is external: engage the AI platform’s safety and content teams directly. All major platforms have mechanisms for flagging harmful health misinformation, even if they are not optimized for pharmaceutical-specific concerns. At the same time, accelerate investment in high-quality, openly accessible content that accurately represents the drug’s clinical profile, to improve the information quality that will inform future training cycles. Do not wait for the misinformation to self-correct. It will not.
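The documentation step in the internal track can be made concrete with a small record structure. This is a sketch under stated assumptions, not a validated pharmacovigilance template: the class name AIMisinformationFinding, its fields, and the example values are all hypothetical, and whether a finding is reportable remains a judgment for the pharmacovigilance team rather than something a flag can decide.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import date


@dataclass
class AIMisinformationFinding:
    """One documented instance of an AI platform misdescribing a product."""
    product: str
    platform: str
    prompt: str
    response_excerpt: str
    discovered_on: date
    patient_safety_risk: bool
    notified: list[str] = field(default_factory=list)  # functions briefed so far

    def needs_pv_review(self) -> bool:
        """Route to pharmacovigilance review when patient safety may be affected."""
        return self.patient_safety_risk

    def to_json(self) -> str:
        """Serialize the record for an audit trail, with the date in ISO format."""
        record = asdict(self)
        record["discovered_on"] = self.discovered_on.isoformat()
        return json.dumps(record, indent=2)


# Hypothetical example: all values are placeholders.
finding = AIMisinformationFinding(
    product="Drug X",
    platform="platform_b",
    prompt="How often do I take Drug X?",
    response_excerpt="Drug X is taken daily.",
    discovered_on=date(2025, 1, 15),
    patient_safety_risk=True,
    notified=["medical affairs", "regulatory"],
)

if __name__ == "__main__":
    print(finding.to_json())
    print("Needs PV review:", finding.needs_pv_review())
```

Keeping the prompt, the response excerpt, the platform, and the discovery date together in one record supports the documented response process recommended in the first FAQ answer.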

This article was produced with research support from DrugChatter’s AI monitoring platform. DrugChatter helps pharmaceutical brand and medical affairs teams track how AI describes their products, identify competitive framing risks, and integrate AI intelligence into pharmacovigilance and commercial strategy workflows.