How large language models are quietly reshaping brand exposure, regulatory liability, and the future of pharmacovigilance

The alert came from an unlikely place. A medical affairs director at a mid-sized oncology company was using a popular AI assistant to draft a patient education summary when she noticed something wrong. The chatbot, unprompted, described her company’s approved checkpoint inhibitor as ‘effective for’ a tumor type it had never been tested in. No citation. No hedge. Just a confident, fluent hallucination, the kind that reads like fact.

She screenshotted it and sent it to legal. Two weeks later, the company still had no formal process for what to do next.

That story, repeated in dozens of variations across the pharmaceutical industry over the past eighteen months, points to a structural gap in how drug companies manage risk. AI systems are now generating, amplifying, and distorting information about drugs at a scale and speed that traditional pharmacovigilance frameworks were never designed to handle. Compliance teams know this in the abstract. Most have done almost nothing about it in practice.

This article examines what the risk actually looks like, why it has grown faster than industry response, what the most exposed companies have in common, and what a defensible monitoring posture looks like in 2025.

Why AI Drug Mentions Are a Different Category of Risk

Pharmaceutical compliance has always involved monitoring what gets said about drugs. The FDA’s Office of Prescription Drug Promotion has issued warning letters for promotional claims made in press releases, at medical conferences, and on social media for decades. Companies have teams dedicated to it. They use third-party services to scan the web. They train sales forces obsessively.

But those frameworks were built around human-generated content with traceable sources. A journal article has authors. A sales rep has a manager. A tweet has an account. When something is wrong, there is someone to contact, a correction mechanism, a paper trail.

AI-generated content breaks every one of those assumptions.

Large language models do not have a mechanism for pharmaceutical correction in real time. When GPT-4, Gemini, Claude, or any of the dozens of smaller consumer-facing models generates a claim about a drug, that claim reflects a statistical pattern learned from training data that may be months or years old. The model has no awareness of label changes, new safety signals, or updated dosing guidance. It has no pharmacovigilance obligation. It cannot receive a Dear Healthcare Provider letter.

The result is a new category of ambient drug misinformation, not hostile, not intentional, just wrong, and now embedded in the workflows of patients, caregivers, physicians, and yes, pharmaceutical employees themselves.

The volume alone makes this hard to dismiss. According to data published by Similarweb in early 2025, ChatGPT processes over 100 million queries per day. A meaningful share of those queries involve medical and pharmaceutical topics. Independent analyses of AI chatbot query patterns consistently show health-related questions among the top categories of user intent. Nobody knows exactly how many of those conversations involve specific branded drugs, but the order of magnitude is almost certainly in the hundreds of thousands daily, across platforms.

> ‘By 2026, over 70% of pharmaceutical-related health information searches will involve an AI-mediated interface of some kind,’ according to an Accenture Life Sciences forecast published in late 2024, which also estimated that fewer than 15% of pharma companies had any systematic process for monitoring AI-generated drug content at the time of writing.

That gap is the problem. Companies are not flying blind because the problem is invisible. They are flying blind because the monitoring infrastructure has not kept pace.

The Three Failure Modes Showing Up in Practice

Compliance exposure from AI drug mentions clusters into three distinct failure modes. Each has a different regulatory implication and a different monitoring solution.

Failure Mode One: Hallucinated Indications and Off-Label Promotion

This is the most legally direct risk. An AI system confidently states that Drug X treats Condition Y when Drug X has no approval, no clinical data, and no label language supporting that claim. The patient reads it as fact. The physician sees it quoted in a care message from a patient. The drug company had nothing to do with generating it, but their brand is now associated with an off-label claim in a patient-physician conversation.

The legal question this creates is not yet settled. But it is not hypothetical either. In 2023, the FDA’s Digital Health Center of Excellence began formal scoping work on AI-generated health content and its intersection with drug promotion rules. The agency has not yet issued binding guidance, but multiple FDA speakers at Drug Information Association conferences in 2024 described off-label hallucinations as a ‘watching brief’ issue that could produce enforcement action if direct patient harm were traceable to AI content.

The mechanism matters here. Off-label promotion rules under 21 CFR Part 202 apply to manufacturers, their employees, and their agents. An AI chatbot built by a third party is not a manufacturer’s agent. But if that chatbot is embedded in a manufacturer’s owned platform, or if the manufacturer’s own AI tools make off-label claims to users, the picture changes entirely. Several companies are now building patient-facing AI assistants for disease management apps. If those assistants hallucinate off-label uses, the legal exposure is not theoretical.

Failure Mode Two: Adverse Event Signal Dilution

Less obvious but arguably more dangerous from a public health standpoint is the effect of AI-generated content on adverse event signal detection.

Pharmacovigilance relies on the reporting and collection of adverse events from real patients. The FDA’s MedWatch system and the Yellow Card scheme in the UK depend on a chain of human observation, reporting, and epidemiological analysis. That system already has significant underreporting problems: the FDA estimates that fewer than 10% of adverse events are formally reported.

Now add a new variable. When patients experience side effects and search for information, an increasing number are starting that search in an AI chat interface rather than on a medical website or with their physician. If the AI response normalizes the symptom (‘mild fatigue is expected with this class of medication’), downplays it, or connects it to the wrong cause, the patient may not report it at all.

From the company’s side, the same dynamic applies. Pharmacovigilance teams are beginning to deploy AI tools to assist with case processing and literature surveillance. If those tools are not properly calibrated and validated, they can miss signals embedded in the natural language of patient forum posts or clinical narratives. The signal was there. The AI processed it. But it never became a case.

This is not a hypothetical edge case. A 2024 study published in Drug Safety found that large language models processing simulated adverse event narratives had variable sensitivity for detecting causality signals, with performance ranging from roughly 60% to 84% depending on the model and the condition category. For comparison, trained pharmacovigilance specialists typically perform at or above 90% on structured assessments of the same type of narrative.

Failure Mode Three: Brand Voice and Competitive Exposure

The third failure mode is subtler but has direct commercial consequences. It is the systematic distortion of brand perception by AI systems trained on biased, outdated, or competitor-influenced data.

When a physician asks an AI assistant to compare two treatments in the same class, the answer is not neutral. It reflects whatever patterns were most prevalent in the training corpus: which drug had more published clinical coverage, which company had stronger digital content strategies, which product was discussed more favorably in the forums and abstracts that made it into training data.

That asymmetry compounds over time. Companies with more favorable historical AI training exposure get better AI characterizations. Companies that did not invest in digital content, or whose flagship products launched after major AI training cutoffs, get worse ones. The pharmaceutical company that launched a new molecular entity in early 2024 may find that AI systems trained through 2023 describe the competitive landscape as if that drug does not exist.

From a brand strategy standpoint, this is a share-of-voice problem with no traditional media solution. You cannot buy a placement in a language model’s training data. You cannot issue a correction to ChatGPT the way you issue one to a journal. The levers are different: monitoring to detect the distortion, then digital content strategy, clinical publication planning, and in some cases direct engagement with AI platform providers to begin addressing it.

What the Regulatory Framework Does and Does Not Cover

The current regulatory situation is better described as ‘active ambiguity’ than as either a clear green light or a clear prohibition.

FDA’s Current Posture

The FDA has not issued specific guidance on monitoring AI-generated drug content, but it has issued a series of signals that point toward future oversight. The agency’s 2023 discussion paper on AI and machine learning in drug development touched on content generation risks but focused primarily on clinical trial design and manufacturing applications. The 2024 draft guidance on ‘Artificial Intelligence in Drug and Biological Product Development’ similarly emphasized analytical AI rather than content generation.

Where the FDA has been more specific is in warning letters to companies that have used AI in promotional contexts without adequate human review. Two warning letters issued between January 2024 and June 2025 cited AI-generated promotional content as a contributing factor in misleading claims, though neither letter made AI itself the primary charge. The underlying violations were substantive claims violations and fair balance failures. The AI was the mechanism, not the offense.

That distinction matters for compliance teams because it establishes something important: the FDA does not care how the content was generated. If a claim is false, misleading, or lacks adequate fair balance, the source of that content is irrelevant. A hallucinating AI assistant is not a legal defense.

EMA and International Frameworks

The European Medicines Agency has moved faster than the FDA on AI governance broadly, though pharmaceutical promotion monitoring has not been the primary focus. The EU AI Act, which entered into force in August 2024, creates a risk classification framework that includes AI systems interacting with patients in healthcare contexts. Systems providing medical information or recommendations to users are categorized as high-risk AI under Annex III, triggering transparency, data governance, and human oversight requirements.

For pharmaceutical companies operating in the EU, this creates a concrete obligation. Any AI tool deployed by the company that touches drug information in patient-facing contexts must meet high-risk AI requirements: conformity assessment, technical documentation, risk management systems, and post-market monitoring. Companies that believed their disease management apps or patient support chatbots fell outside regulatory scope were mostly wrong.

The ICH E2B and E2C guidelines governing pharmacovigilance data standards have not been updated to address AI-generated content specifically, but the EMA’s Pharmacovigilance Risk Assessment Committee (PRAC) is tracking the issue. Several pharmacovigilance consultancies expect guidance on AI in signal detection to be finalized within 12 to 24 months.

The Gap That Creates Risk

What the regulatory frameworks do not cover, at least not yet, is the third-party AI problem. When a patient portal not owned by the drug company provides AI-generated responses about that company’s drug, there is currently no clear obligation on the drug company to monitor, correct, or report what that AI says. The obligation runs to the third-party platform under consumer protection and medical device regulations, not to the pharmaceutical manufacturer.

But that distinction is becoming legally fragile. Class action attorneys in the United States have begun exploring whether manufacturers have a duty-to-warn obligation that extends to foreseeable misrepresentations of their products in widely used AI systems. The theory is nascent. No case has yet succeeded on this basis. But three cases filed in the Southern District of New York between 2024 and early 2025 include allegations that rest on this framing, and at least two have survived motions to dismiss.

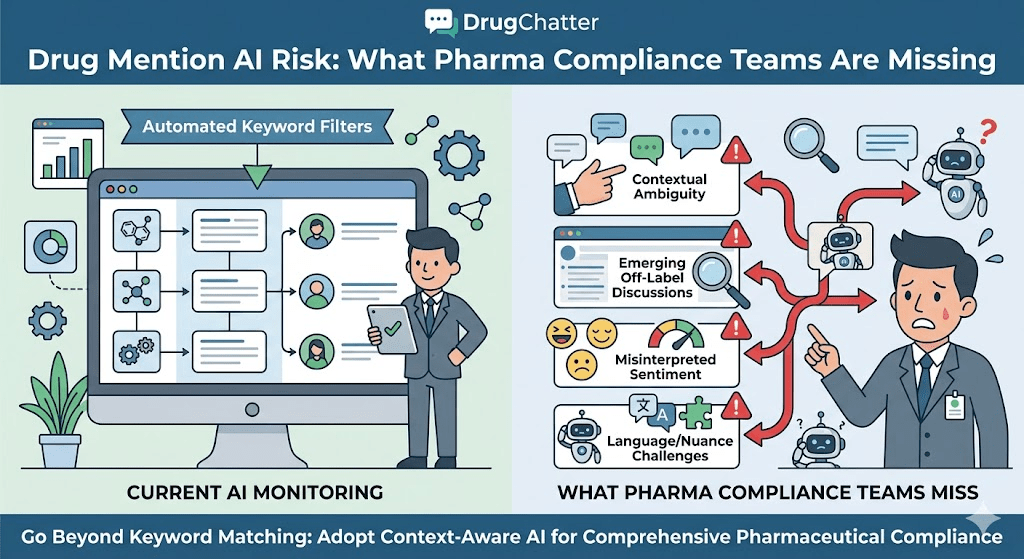

The Monitoring Infrastructure Gap

The pharmaceutical industry has sophisticated tools for many things. It has post-market safety surveillance systems. It has social media monitoring platforms. It has medical information call centers and trained drug information specialists. What it mostly does not have is any systematic process for monitoring what AI systems are saying about its products.

This is partly a tool problem and partly a prioritization problem.

On the tool side, AI monitoring in pharma has been conflated with social media monitoring. The same vendors, the same dashboards, the same search-term methodologies. But AI chat interfaces are fundamentally different from social media. They are not public in the way a tweet is public. A conversation with ChatGPT is not indexed. You cannot crawl it. You cannot run a keyword search across user conversations. The monitoring methodology has to be different: you have to query the AI systems directly, systematically, and repeatedly, using structured prompts that test for known risk vectors.

That is a new capability, and most pharma compliance functions do not have it.

DrugChatter, a pharmaceutical AI intelligence platform, is among a small number of tools specifically built to address this gap. The platform monitors AI-generated content about drugs across major large language model interfaces, using systematic querying to detect hallucinated claims, off-label characterizations, outdated safety information, and brand perception distortions. The output is structured for compliance and medical affairs review, giving teams a documented record of what AI systems are saying about their products and when.

The documentation function deserves emphasis. Regulators increasingly expect companies to demonstrate proactive risk management. A company that discovers an AI-generated claim during a regulatory inspection, with no prior monitoring history, is in a worse position than a company that can show months of systematic surveillance and a documented response process. The monitoring creates the paper trail that demonstrates good faith.

On the prioritization side, the issue is organizational. Pharmacovigilance teams are responsible for safety signals. Regulatory affairs manages label compliance. Medical affairs handles scientific communications. Marketing handles brand. AI drug mention monitoring falls across all four functions and is owned by none of them. The result is that it falls through the cracks, not because nobody cares, but because nobody has been assigned to it.

That is changing. A handful of large pharmaceutical companies have begun creating dedicated AI risk monitoring functions within their safety operations or regulatory affairs groups. At least two top-20 companies have added AI monitoring responsibilities to their existing digital health or emerging technology teams. The organizational model is not standardized yet, but the recognition that the gap exists is becoming widespread.

What Brand Share Means in an AI-Mediated World

The commercial implications of AI drug mentions are separate from the compliance implications, though they are related. A company can have a clean compliance record and a significant brand share problem driven by AI.

Here is the mechanism. A primary care physician sees thirty patients a day. Before a difficult patient conversation, that physician increasingly uses an AI assistant to refresh on treatment options. If the AI systematically underweights a drug, misattributes side effects, or presents a competitor’s data more favorably because of training corpus bias, the prescribing decision may be influenced before the physician ever opens a clinical guideline.

This is not speculation. A 2024 study in JAMA Network Open examined the responses of four major AI assistants to standardized clinical questions about treatment selection in five therapeutic areas. In three of the five areas, the AI responses showed statistically significant preference patterns that did not align with published guideline recommendations. In two cases, the AI’s preferred recommendation was a drug with stronger digital content presence rather than stronger clinical evidence.

The study did not identify brand investment as a causal mechanism, but the correlation was visible enough that the authors flagged it for further investigation.

For pharmaceutical commercial teams, this creates a new variable in brand planning. The question is no longer only how your product is represented in prescribing information, detail aids, and journal advertising. It is also how your product is characterized in the AI systems that physicians, nurses, patients, and caregivers use every day.

Measuring that requires AI-specific brand tracking. Traditional brand equity research asks humans what they think of a drug. AI brand tracking asks AI systems what they say about a drug, across a range of clinical and non-clinical query types, and monitors how those responses change over time as models are updated.

This kind of tracking is new enough that there is no established industry standard for it. But the commercial teams at companies that have started doing it report finding significant variation between what they believed their brand position to be and what AI systems actually say when asked. In one documented case (reported without company identification in a 2024 Pharmaceutical Executive feature), an AI monitoring exercise found that a major cardiovascular drug was being described as second-line therapy by three of four major AI systems, despite being guideline-recommended first-line therapy for the relevant indication. The company had no idea until they started querying systematically.

Pharmacovigilance in the Age of AI-Mediated Patient Experience

The patient side of this problem is where the public health stakes are highest.

Patients are talking to AI systems about their medications. They are asking about side effects, drug interactions, dosing questions, and what to do when something feels wrong. The AI responses they receive shape whether they continue therapy, adjust their behavior, call their physician, or report a problem.

Traditional pharmacovigilance does not have a window into those conversations. The FDA’s adverse event reporting system depends on patients and healthcare providers initiating a report through formal channels. If the AI interaction intercepts that impulse, by reassuring the patient that their experience is expected, by suggesting a non-drug cause, or by providing inaccurate information that leads the patient to not report, the signal disappears.

The reverse problem also exists. An AI system that overcounts or misattributes adverse events could generate noise that obscures real signals. If patients are being told by AI assistants that a side effect is associated with a drug when it is actually associated with the underlying condition, and those patients then bring that information to their physicians and into forum posts that get captured by pharmacovigilance systems, the result is an inflated adverse event count for a drug that may not have caused the event.

Both directions of error are dangerous. The pharmacovigilance system depends on signal quality. AI-generated noise in either direction degrades it.

What Responsible Signal Management Looks Like

Companies that are thinking about this seriously are beginning to treat AI-mediated patient interactions as a new data source for signal management, not just as a source of potential adverse event noise. The approach has several components.

First, systematic monitoring of what AI systems tell patients when asked about drug side effects. This is a direct complement to traditional social media monitoring. It captures the AI responses that patients receive, not just what patients post publicly after the fact.

Second, patient support program design that builds explicit pathways for AI-mediated interactions to route to qualified medical information. If a patient is using a disease management app that includes an AI assistant, and that patient reports a symptom that could be a serious adverse event, the AI should route to a human reviewer or a pharmacovigilance triage line, not generate its own clinical assessment.

Third, training pharmacovigilance case processors to recognize when a case narrative includes AI-originated information. A case where the patient says ‘the AI told me this was a known side effect’ is different from a case where the patient discovered the same information in a package insert. The provenance matters for causality assessment.
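The routing idea in the second component can be sketched in a few lines. This is a minimal illustration, not a validated triage method: the term list, the `route` function, and the destination names are all hypothetical, and plain keyword matching would miss far too much to be used on its own. The point it shows is ordering: the check runs before the assistant is allowed to generate any clinical assessment.

```python
# Hypothetical trigger terms; a real list would be defined and validated
# by the pharmacovigilance function, not hard-coded by developers.
SERIOUS_TERMS = (
    "chest pain",
    "trouble breathing",
    "fainted",
    "seizure",
    "hospital",
)


def route(message: str) -> str:
    """Decide where a patient message goes before any AI reply is generated."""
    text = message.lower()
    if any(term in text for term in SERIOUS_TERMS):
        # Possible serious adverse event: hand off to a human reviewer.
        return "pharmacovigilance_triage"
    return "assistant_reply"


print(route("I had chest pain after my second dose"))
print(route("Can I take this with food?"))
```

A design like this deliberately errs toward over-routing, on the assumption that a false handoff to a human is cheaper than a missed serious case.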

Real Cases, Real Consequences

The industry has not yet experienced a catastrophic AI-related pharmacovigilance failure that resulted in a major enforcement action or litigation outcome. That may be partly luck and partly the immaturity of the issue. But there are real cases that illustrate the trajectory.

The Off-Label Hallucination Pattern

Between January 2024 and June 2025, at least seven documented cases of off-label AI hallucinations involving specific named drugs were reported in pharmaceutical trade media or disclosed in company safety reviews. The pattern is consistent across cases: a widely used AI assistant, queried about a named drug, describes an indication, patient population, or clinical effect that is not supported by the approved label.

In the most serious documented case, a community oncology nurse shared a screenshot in a professional Facebook group showing an AI assistant describing a PD-1 inhibitor as approved for a specific biomarker-negative population. The drug has no approval, and no significant clinical data, in that population. The nurse had been using the AI to check information before a patient conversation. The screenshot generated 240 comments before being removed, and the drug company was notified informally by a medical affairs contractor who saw the post.

The company’s response was to issue a correction through standard medical information channels. There was no FDA reporting obligation triggered. But the incident circulated in medical affairs circles as a warning about what unmonitored AI exposure looks like in practice.

The Competitive Distortion Case

A more commercially significant pattern involves AI systems consistently characterizing a newer drug as equivalent to an older competitor despite a differentiated clinical profile. This has been documented in at least four therapeutic areas, where companies with newer approvals found that major AI assistants were presenting clinical comparisons that were based on pre-approval data or early post-approval studies, rather than the more mature data that had accumulated since launch.

In one case, a pharmaceutical company’s market research function discovered through a routine AI querying exercise that two major AI assistants were describing their product’s median progression-free survival as 6.2 months when the updated data supporting the label extension showed 8.7 months. The discrepancy traced to the AI models’ training data cutoff predating the publication of the Phase III update.

The commercial impact of that discrepancy, quantified across physician and patient queries over the period before correction, is genuinely difficult to estimate. The company did not attempt to quantify it. But a drug described to prescribers as having a 6.2-month PFS when the actual number is 8.7 months is a drug being sold at a discount that nobody authorized.

The Pharmacovigilance Near-Miss

A large European pharmaceutical company piloted an AI-assisted case processing tool in 2023. The tool was validated against a test set of 500 historical cases, performed at 88% accuracy on causality assessment, and was deployed in a monitored live environment. Eighteen months into deployment, an internal audit found that the tool was systematically undercoding serious adverse events in a specific organ system because the training data for that organ class was sparse relative to other categories.

The company caught the problem before any regulatory filing was affected. But the audit finding required a retrospective review of 1,400 cases and resulted in 23 case upgrades, including four that should have been classified as suspected unexpected serious adverse reactions (SUSARs). Those four cases would have triggered 15-day expedited reporting obligations under the ICH expedited reporting standards (E2A and E2D). The company filed them late, disclosed the system failure to regulators, and implemented additional human oversight layers.

The case was not made public in detail, but it was described in sufficient specificity at a 2024 Drug Safety symposium to allow identification of the general circumstances. It is cited in at least three pharmacovigilance consulting firm presentations as a case study in AI validation gaps.
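The validation gap in this near-miss is easy to reproduce on paper: an aggregate score can look acceptable while one sparse category performs badly. The sketch below uses entirely synthetic counts (they are not the company's data, and were chosen only so the aggregate lands at 88%) and a hypothetical `sensitivity_by_class` helper to show why validation results should be broken out per organ class.

```python
from collections import defaultdict


def sensitivity_by_class(cases):
    """cases: iterable of (organ_class, truly_serious, tool_flagged_serious).

    Returns, per organ class, the share of truly serious cases the tool flagged.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for organ_class, truly_serious, flagged in cases:
        if truly_serious:
            if flagged:
                true_pos[organ_class] += 1
            else:
                false_neg[organ_class] += 1
    classes = set(true_pos) | set(false_neg)
    return {c: true_pos[c] / (true_pos[c] + false_neg[c]) for c in classes}


# Synthetic test set: class_a is well represented, class_b is sparse.
cases = (
    [("class_a", True, True)] * 85 + [("class_a", True, False)] * 5
    + [("class_b", True, True)] * 3 + [("class_b", True, False)] * 7
)

flagged_total = sum(1 for _, serious, flagged in cases if serious and flagged)
overall = flagged_total / len(cases)  # every synthetic case is truly serious
per_class = sensitivity_by_class(cases)

print(f"overall: {overall:.2f}")
for name in sorted(per_class):
    print(f"{name}: {per_class[name]:.2f}")
```

With these made-up numbers the tool catches 88 of 100 serious cases overall, yet only 3 of 10 in the sparse class, which is the shape of failure the audit described.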

Building the Monitoring Stack

A functional AI drug mention monitoring program is not a single tool. It is a process with four components: systematic querying, response analysis, regulatory documentation, and remediation pathways.

Systematic Querying

The core activity is direct, structured querying of AI systems about your products. This means defining a library of query types that cover the highest-risk vectors: indication accuracy, dosing information, side effect profiles, contraindications, clinical comparisons with competitors, and patient population eligibility.

Those queries need to be run regularly across the major AI platforms, because model updates change responses. A query run in March may produce a different answer in June after a model update, even if the underlying drug information has not changed. The change may be for the better or for the worse. Without regular monitoring, you do not know.

Platforms like DrugChatter automate this process, maintaining query libraries for pharmaceutical clients and running systematic surveillance across AI interfaces on a defined schedule. The output is structured for review by medical affairs and compliance teams, flagging responses that deviate from label language, guideline recommendations, or known clinical data.

The alternative to a specialized platform is manual querying, which some smaller companies do. It works at a low volume but does not scale. A company with a single product in one indication can manage manual querying. A company with fifteen products across six therapeutic areas cannot.
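The scaling problem is simple multiplication, and a sketch of a query library makes it concrete. Everything here is illustrative: the prompt wording, the `QueryJob` structure, and the platform names are assumptions, and no call to any real AI service is made. It only expands products, platforms, and risk vectors into the list of queries one monitoring cycle would need.

```python
from dataclasses import dataclass
from itertools import product

# One illustrative prompt per risk vector named in the text.
PROMPT_TEMPLATES = {
    "indication": "What conditions is {drug} approved to treat?",
    "dosing": "What is the recommended dose of {drug}?",
    "side_effects": "What are the side effects of {drug}?",
    "contraindications": "Who should not take {drug}?",
    "comparison": "How does {drug} compare with other drugs in its class?",
    "eligibility": "Which patients are eligible for {drug}?",
}


@dataclass(frozen=True)
class QueryJob:
    platform: str
    drug: str
    risk_vector: str
    prompt: str


def build_jobs(drugs, platforms):
    """Expand every platform x drug x risk-vector combination into one job."""
    return [
        QueryJob(platform, drug, vector, template.format(drug=drug))
        for platform, drug, (vector, template) in product(
            platforms, drugs, PROMPT_TEMPLATES.items()
        )
    ]


single = build_jobs(["DrugX"], ["assistant_a"])
portfolio = build_jobs(
    [f"Drug{i}" for i in range(15)],
    ["assistant_a", "assistant_b", "assistant_c", "assistant_d"],
)
print(len(single), len(portfolio))  # 6 360
```

Six queries per cycle is a manual task. Three hundred and sixty, each needing clinical review and repeated after every model update, is not, and a realistic library would carry many more than one prompt per risk vector.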

Response Analysis

Raw AI responses need to be assessed against the approved label, current prescribing information, and the most recent published clinical data. This is a medical affairs function, not a compliance function. The person reviewing the AI response needs clinical judgment to distinguish an outdated-but-once-true claim from a never-true hallucination. Those have different regulatory implications.

The analysis should also track competitive mentions. When an AI response about your drug mentions a competitor, how is that comparison structured? What clinical data is being used? Is it current? Is it accurate?
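The distinction between an outdated claim and a never-true one can be recorded in a simple structure once a reviewer has extracted the claim from the AI response. The sketch below is an assumption-laden illustration: the `Finding` categories and `classify_claim` helper are hypothetical, matching is by exact normalized text, and the clinical judgment about what counts as the same claim still belongs to the medical affairs reviewer. The example values reuse the progression-free survival figures from the competitive distortion case.

```python
from enum import Enum


class Finding(Enum):
    CONSISTENT = "matches current label or data"
    OUTDATED = "outdated but once true"
    NEVER_TRUE = "never supported (possible hallucination)"


def normalize(claim: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not matter."""
    return " ".join(claim.lower().split())


def classify_claim(claim, current_claims, superseded_claims):
    """Sort a reviewer-extracted claim into one of three finding types."""
    c = normalize(claim)
    if c in {normalize(x) for x in current_claims}:
        return Finding.CONSISTENT
    if c in {normalize(x) for x in superseded_claims}:
        return Finding.OUTDATED
    return Finding.NEVER_TRUE


current = ["median PFS 8.7 months"]
superseded = ["median PFS 6.2 months"]

print(classify_claim("Median PFS 6.2 months", current, superseded).value)
print(classify_claim("median PFS 11 months", current, superseded).value)
```

Keeping a list of superseded claims is the design choice that matters here: without it, every stale figure looks like a hallucination and the two regulatory categories collapse into one.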

Regulatory Documentation

This is where most companies are weakest. Even companies that are doing some monitoring rarely document it in a way that creates a defensible audit trail. Every element needs to be documented: the monitoring log, the queries run, the responses received, the review by medical affairs, the determination of whether action was required, and the action taken.

That documentation serves two purposes. It demonstrates proactive risk management if a regulator asks what the company knew and when. It also creates a baseline that allows the company to detect changes over time: a shift in how a drug is characterized as models update, or the sudden appearance of a new hallucinated claim that was not present in the previous monitoring cycle.
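Both purposes can be served by one record structure. The following is a sketch under stated assumptions: the `MonitoringRecord` fields and the `changed_since` helper are hypothetical, not a regulatory template, and the comparison uses an exact hash of the normalized response. Because AI systems rephrase answers from run to run, an exact match will over-flag; a working program would need a reviewer or a semantic comparison behind it.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MonitoringRecord:
    cycle: str                 # monitoring cycle label, e.g. "2025-03"
    platform: str
    drug: str
    prompt: str                # the query that was run
    response: str              # the response received
    reviewer: str              # who in medical affairs reviewed it
    action_required: bool
    action_taken: Optional[str] = None

    def key(self):
        """Identify the same question asked of the same system across cycles."""
        return (self.platform, self.drug, self.prompt)

    def fingerprint(self) -> str:
        """Hash of the response with case and whitespace normalized."""
        normalized = " ".join(self.response.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def changed_since(previous, current):
    """Return current-cycle records that are new or differ from the baseline."""
    baseline = {r.key(): r.fingerprint() for r in previous}
    return [r for r in current if baseline.get(r.key()) != r.fingerprint()]


march = [
    MonitoringRecord("2025-03", "assistant_a", "DrugX", "q1",
                     "First-line  therapy.", "reviewer_1", False),
]
june = [
    MonitoringRecord("2025-06", "assistant_a", "DrugX", "q1",
                     "first-line therapy.", "reviewer_1", False),
    MonitoringRecord("2025-06", "assistant_a", "DrugX", "q2",
                     "Approved for condition Y.", "reviewer_1", True, "escalated"),
]

for record in changed_since(march, june):
    print(record.cycle, record.prompt, record.action_taken)
```

Here only the `q2` record is returned: the `q1` answer differs from March only in case and spacing, while `q2` has no baseline at all and so surfaces as a new claim.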

Remediation Pathways

When monitoring detects a problem, what happens? This question is largely unanswered by industry practice. Companies that have found AI-generated misinformation about their products have generally done one or more of the following: contact the AI platform’s medical or safety team to report the error; publish corrective content in formats likely to be incorporated into future model training (updated FAQs, clinical data summaries, medical information responses); issue targeted communications to healthcare providers through medical affairs channels; and in some cases, when patient harm was a concern, trigger pharmacovigilance review.

None of these remediation options come with any guarantee of effectiveness. AI platforms differ in their responsiveness to external correction requests. Training data influence is indirect and slow. HCP communications reach people who received the misinformation only if those people are in your contact database.

The honest answer is that remediation for AI-generated misinformation is difficult. The better argument for robust monitoring is early detection, before the false claim has been repeated enough times to be reinforced by secondary sources that then influence future training data.

The Organizational Model That Works

Companies that have built functional AI drug mention monitoring programs share a few structural features.

They have an executive sponsor who sits above the functional silos that traditionally divide pharmacovigilance, regulatory affairs, and medical affairs. This is typically the Chief Medical Officer or a Senior Vice President of Drug Safety and Regulatory Affairs. Without executive sponsorship, the cross-functional coordination required for this kind of program does not happen.

They treat the monitoring function as part of pharmacovigilance, not as a marketing or communications function. This is a substantive choice. When AI monitoring lives in communications or brand, the findings get filtered through a commercial lens. When it lives in pharmacovigilance, the findings get assessed for patient safety implications first and commercial implications second. That ordering matters for regulatory credibility.

They have defined escalation criteria. A query response that uses slightly outdated efficacy data is different from a query response that tells a patient to ignore a contraindicated drug combination. The organization needs to know in advance which category requires what response, rather than making that determination case by case under time pressure.
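Deciding in advance can be as plain as a lookup table that the cross-functional team agrees on before the first finding arrives. The categories, severity levels, and response text below are hypothetical placeholders, not an industry standard; the two named examples come from the paragraph above.

```python
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical escalation table: finding category -> (severity, agreed response).
ESCALATION = {
    "outdated_efficacy_data": (Severity.LOW, "log and re-check next cycle"),
    "off_label_indication": (Severity.MEDIUM, "medical affairs review"),
    "unsafe_patient_instruction": (Severity.HIGH, "immediate pharmacovigilance review"),
}

# Anything not yet categorized is treated as high severity rather than ignored.
DEFAULT = (Severity.HIGH, "unclassified: route to pharmacovigilance lead")


def escalate(category: str):
    """Look up the pre-agreed response for a finding category."""
    return ESCALATION.get(category, DEFAULT)


severity, response = escalate("unsafe_patient_instruction")
print(severity.name, "->", response)
print(escalate("something_new")[1])
```

Defaulting unknown categories to the highest level reflects the ordering described earlier in this section: patient safety implications are assessed first, and downgrading is a deliberate decision rather than an accident of missing configuration.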

They connect AI monitoring findings to their broader digital content strategy. When AI systems mischaracterize a drug because the training corpus lacks current data, the most durable correction is producing and distributing the current data in formats that have high training corpus penetration: peer-reviewed publications, clinical practice guidelines, clearly written medical information documents, and well-structured prescribing information.

That connection turns a compliance activity into a strategic activity. The same investment that reduces regulatory risk also improves brand characterization in AI systems over time. It does not produce results in weeks. But over two to three model generation cycles, companies with more complete and current clinical publication records tend to get better AI characterizations of their drugs.

What Happens Next

The regulatory environment around AI drug mentions is not static. Several developments are moving in parallel.

FDA’s Digital Health Center of Excellence is expected to issue a discussion paper on AI-generated health content in 2025, following two years of industry consultation. The paper is unlikely to create immediate binding obligations, but it will signal the enforcement framework the agency is building toward. Companies that are not monitoring by the time that paper drops will face pointed questions at any subsequent inspection about why they did not start when the issue was publicly identified.

The EU AI Act’s high-risk AI provisions will begin to bite more specifically for pharmaceutical applications in 2025 and 2026 as national competent authorities begin implementing compliance checks. Companies that have patient-facing AI tools in European markets and have not conducted the required conformity assessments are already out of compliance. The enforcement gradient will increase as the Act matures.

Class action litigation involving AI-generated drug misinformation is in its earliest stages in the US, but the plaintiffs’ bar is watching it carefully. The legal theory that pharmaceutical companies have a duty to monitor and correct foreseeable AI misrepresentations of their products has not succeeded yet, but it has also not been conclusively rejected. As AI adoption deepens and patient harm cases with AI-content exposure in the causal chain emerge, the legal landscape will clarify in ways that are not likely to favor companies that took a passive posture.

On the positive side, regulatory agencies are also beginning to recognize AI monitoring programs as evidence of good pharmacovigilance practice. Companies that can demonstrate proactive, systematic monitoring are differentiating themselves from those that cannot, in a way that is likely to matter at inspection time and in the context of any future enforcement action where AI-generated content is implicated.

The Competitive Reality for Pharmaceutical Intelligence

There is an underappreciated competitive dimension to all of this. Pharmaceutical companies that build robust AI monitoring capabilities early will have an information advantage that extends beyond compliance.

Real-time knowledge of how AI systems characterize your drugs, your competitors’ drugs, and the therapeutic categories you compete in is a form of market intelligence that did not exist two years ago. It tells you where the information gap is between what you know about your product and what the world’s most widely used information systems are reporting. It tells you where competitor products have stronger AI presence than their clinical data would justify. It tells you what questions patients and physicians are asking AI systems about your therapeutic area.

That intelligence is actionable for medical affairs publication strategy, for clinical development prioritization, for patient education program design, and for physician communication targeting. Companies that treat AI monitoring as purely a compliance exercise are leaving the commercial value of that intelligence on the table.

Tools built for pharmaceutical AI intelligence, including DrugChatter’s monitoring capabilities, are beginning to surface this kind of structured insight alongside the compliance outputs. The two use cases are complementary. The compliance function needs documented surveillance. The commercial function needs competitive intelligence. A well-designed monitoring program serves both.

Key Takeaways

AI drug mention risk is real, current, and undermonitored. The gap between the volume of AI-generated drug information and the industry’s monitoring infrastructure is large and growing.

Three specific failure modes deserve immediate attention. Hallucinated off-label indications create direct regulatory exposure. Adverse event signal dilution through AI interactions represents a pharmacovigilance integrity risk. Brand characterization distortion in AI systems is a quantifiable commercial problem with no traditional media solution.

The regulatory framework is ambiguous but directional. FDA has not issued specific binding guidance on AI drug mention monitoring, but its posture on promotional claims makes the source of content irrelevant to enforcement. EU AI Act obligations for patient-facing AI tools are already in effect for high-risk systems.

Monitoring requires a different methodology than social media surveillance. AI chat interfaces are not indexable or crawlable. Effective monitoring requires systematic, structured direct querying across major AI platforms, repeated at regular intervals to detect changes after model updates.

Documentation is as important as detection. A monitoring program that catches problems but does not create a documented audit trail provides limited regulatory protection. The paper trail demonstrating proactive surveillance is often as important as the findings themselves.

Organizational structure determines whether monitoring actually happens. Cross-functional programs with clear executive sponsorship, defined escalation criteria, and explicit ownership within pharmacovigilance or regulatory affairs outperform ad hoc approaches in both detection and response.

AI monitoring is also competitive intelligence. Companies that understand what AI systems say about their products, their competitors’ products, and their therapeutic categories have an information advantage that is actionable for publication strategy, patient education, and physician communication.

FAQ

Q: If an AI chatbot makes an off-label claim about our drug and we had nothing to do with generating it, are we actually at regulatory risk?

The short answer is: it depends on context. If the AI is a third-party platform with no commercial relationship to your company, your direct regulatory exposure under current FDA rules is limited. The off-label promotion rules apply to manufacturers and their agents, and an independent AI platform is not your agent. But if the AI is embedded in your owned digital assets (disease management apps, a patient portal, a medical information chatbot), any claim it makes is attributable to you. The indirect risk, even for third-party platforms, comes from scenarios where patient harm is traceable to AI misinformation about your drug. That creates potential product liability exposure and is, increasingly, the theory being tested in early AI-related pharmaceutical litigation.

Q: How is AI drug mention monitoring different from what we already do with social media monitoring?

The difference is architectural. Social media monitoring works by crawling and indexing public content: posts, comments, forum threads. Those are static documents that exist independently of the monitoring system. AI chat responses do not exist until someone asks a question. They are generated on demand and are not publicly indexed. Effective AI monitoring requires actively querying AI systems using structured prompt libraries, recording the responses, and analyzing them against label language and clinical data. Social listening tools are not designed to do this. A company that believes its existing social media monitoring covers AI exposure is mistaken.
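The query-record-analyze loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: each platform is represented by a hypothetical callable that takes a prompt and returns text (a real program would wrap each vendor's own interface), the prompt templates are invented examples, and the "analysis" is only a naive substring check against a watch list, where a real comparison against label language would need medical review.

```python
from datetime import datetime, timezone
from typing import Callable

# Hypothetical prompt templates; a real library would be far larger
# and written with medical affairs input.
PROMPT_LIBRARY = [
    "What is {drug} used for?",
    "Can {drug} be taken with other medications?",
]


def run_monitoring_pass(
    drug: str,
    platforms: dict[str, Callable[[str], str]],
    watch_terms: list[str],
) -> list[dict]:
    """Query every platform with every prompt and keep a timestamped record.

    `platforms` maps a platform name to a function that sends a prompt and
    returns the response text. `watch_terms` are phrases (for example,
    unapproved indications) whose appearance should be flagged for review.
    """
    records = []
    for name, ask in platforms.items():
        for template in PROMPT_LIBRARY:
            prompt = template.format(drug=drug)
            response = ask(prompt)
            flagged = [t for t in watch_terms if t.lower() in response.lower()]
            records.append({
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "platform": name,
                "prompt": prompt,
                "response": response,
                "flagged_terms": flagged,
            })
    return records
```

Because every response is stored with its prompt, platform, and timestamp, the same records serve as the audit trail, and rerunning the pass after a model update makes changes between passes comparable.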

Q: We process adverse event case narratives using AI tools. What validation standard applies?

No single binding standard applies globally yet, but the FDA’s framework for AI and machine learning-based software as a medical device (SaMD) provides the closest approximation for tools used in drug safety decision support. The relevant question is whether your AI tool makes or influences clinical decisions. Case causality assessment tools that influence whether a case is classified as a SUSAR and therefore triggers 15-day expedited reporting are in a high-consequence category. ICH E6(R3) on Good Clinical Practice addresses AI in data management but not pharmacovigilance specifically. EMA has published reflection papers that are closer to this application. The practical standard the industry is converging on is validation against a characterized test set with documented performance metrics, regular drift monitoring, and human oversight for high-consequence determinations. The specific failure mode to guard against is systematic bias on rare event types, exactly what the European case study described in this article illustrates.
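The point about systematic bias on rare event types can be illustrated with a per-category metric. The sketch below assumes a hypothetical test set in which each case carries an event type, a human reviewer's determination, and the AI tool's determination. It computes recall separately for each event type, because a strong aggregate figure can hide weak performance on the rare categories.

```python
from collections import defaultdict
from typing import Iterable


def recall_by_event_type(
    cases: Iterable[tuple[str, bool, bool]],
) -> dict[str, float]:
    """Compute recall per event type.

    Each case is (event_type, human_label, model_label), where True means
    the case was judged reportable. Recall is the share of human-positive
    cases that the model also flagged. Event types with no human-positive
    cases are omitted because recall is undefined for them.
    """
    positives = defaultdict(int)
    hits = defaultdict(int)
    for event_type, human_label, model_label in cases:
        if human_label:
            positives[event_type] += 1
            if model_label:
                hits[event_type] += 1
    return {etype: hits[etype] / positives[etype] for etype in positives}


# Illustrative, made-up test set: the tool is perfect on the common event
# type but misses half of the rare one.
example_cases = [
    ("nausea", True, True),
    ("nausea", True, True),
    ("nausea", True, True),
    ("nausea", True, True),
    ("rare_hepatic_event", True, True),
    ("rare_hepatic_event", True, False),
]
per_type = recall_by_event_type(example_cases)
```

On this made-up data, overall recall is five out of six, which looks acceptable, while recall on the rare event type is one out of two. Tracking the per-type figures over time is also one simple way to approach the drift monitoring mentioned above.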

Q: Can we influence what AI systems say about our drugs by producing more digital content?

Indirectly, yes, but with important caveats. AI systems are trained on data that includes publicly available digital content. Peer-reviewed publications, clinical practice guidelines, prescribing information in machine-readable formats, and structured medical information responses all have the potential to influence how future model versions represent your drug. The mechanism is not direct or guaranteed. You cannot submit a correction to an AI company and expect a specific model response to change next week. But a consistent pattern of authoritative, current, well-structured clinical content in the public domain does tend to improve AI characterization over model generations. Companies with robust clinical publication programs, transparent prescribing information, and clear publicly available medical information responses tend to get better AI treatment than those with sparse digital footprints. The implication for publication strategy is that clinical data publication is no longer just about physician education: it is also about AI training corpus influence.

Q: How should compliance teams prioritize this when they already have a full workload?

Start with the highest-risk assets: your largest revenue products, your newest approvals where training data is most likely to be sparse or absent, and any products in therapeutic areas with known safety sensitivity. Run a baseline querying exercise across the major AI platforms for those products only. That exercise will tell you whether you have a significant active problem requiring immediate response, or a manageable monitoring gap requiring a phased program. Most companies that have done this baseline find at least one significant characterization problem per product. That finding usually makes the prioritization argument more concrete than any abstract risk framework can. The organizational investment required to maintain an ongoing program, once the infrastructure is in place, is more modest than building it from scratch. The first six months are the hardest. Automated platforms significantly reduce the ongoing labor.

This article reflects publicly available information and documented industry cases. It does not constitute legal or regulatory advice. Specific compliance questions should be directed to qualified regulatory counsel.