Why Pharmaceutical Brands Must Shift from Social Listening to AI Monitoring — Before Regulators Do

The clinical detail rep used to be the first voice in the room. Then search engines took that slot. Now, for a growing share of physicians, nurses, patients, and payers, the first voice belongs to a large language model.

When a hospitalist in Des Moines types “best GLP-1 for a patient with CKD stage 3 and BMI over 40” into ChatGPT at 11 p.m., no pharmaceutical brand manager knows it happened. No pharmacovigilance officer sees the response. No medical affairs team can verify whether the AI cited the right contraindications, referenced the current label, or mentioned a competitor’s product instead. The interaction is invisible — and that invisibility is the problem.

Drug companies have spent the better part of two decades building sophisticated social media listening programs. They track Twitter mentions, Reddit threads, patient forums on Drugs.com, and Facebook groups. They have compliance teams reviewing adverse event signals from online posts. They have brand trackers measuring sentiment on Instagram. That infrastructure is real, and it produces value. But it is watching the wrong screen.

The conversation that matters — the one that shapes clinical decisions, patient expectations, and payer coverage logic — is increasingly happening inside AI chat interfaces. And the pharmaceutical industry is almost entirely absent from it.

The Scale of the Shift

Before examining what AI monitoring means for drug brands, it helps to understand how quickly the underlying behavior has moved.

ChatGPT was estimated to have reached 100 million monthly active users within two months of its public launch in late 2022, and OpenAI reported 100 million weekly active users roughly a year after launch. By early 2025, the company had disclosed that the platform was handling over one billion messages per day. Google’s Gemini, Anthropic’s Claude, and Microsoft’s Copilot, which is embedded directly inside tools physicians already use, have collectively added hundreds of millions more sessions per month.

A 2024 survey by the American Medical Association found that 38 percent of practicing physicians reported using AI tools at least once per week for clinical decision support, drug information, or patient communication drafting. Among residents and fellows under 40, that number exceeded 60 percent.

Patients are moving faster. A Pew Research report published in early 2025 found that 27 percent of American adults had used an AI chatbot to research a health condition or medication in the prior twelve months. Among adults aged 18 to 34, the figure was 44 percent.

These are not marginal behaviors. They represent a structural shift in where drug information is consumed, processed, and acted upon — and they are happening at a speed that most pharmaceutical compliance and brand teams have not yet internalized.

What AI Actually Says About Your Drug

The simplest way to grasp the monitoring gap is to run the experiment yourself. Ask a major AI platform about a drug in your portfolio. Ask it about dosing, contraindications, drug-drug interactions, or patient eligibility. Then ask it how the drug compares to its primary competitor.

What you will find is not random noise. Individual responses vary in wording because large language models sample their output, but within a given model version the substance tends to be stable. Ask the same question a thousand times, each in a fresh session, and you will get substantively similar answers. That consistency is what makes AI a meaningful signal, and a meaningful risk.
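
One way to put a number on that consistency is to repeat a single prompt many times and measure how often the most common answer appears. The sketch below assumes each response has already been reduced to a short label, such as the drug it recommended. The function name `modal_agreement` and the sample labels are illustrative, not output from any real platform.

```python
from collections import Counter

def modal_agreement(answers):
    """Return (most common answer, fraction of runs that gave it)."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers)
    top, n = counts.most_common(1)[0]
    return top, n / len(answers)

# Hypothetical labels extracted from 10 repeated runs of one prompt.
runs = ["drug_a"] * 8 + ["drug_b"] * 2
top, share = modal_agreement(runs)
print(top, share)  # drug_a 0.8
```

A high agreement score suggests the answer is a stable property of the model version rather than sampling noise, which is what makes it worth tracking over time.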

The responses AI platforms generate about drugs are shaped by training data that may include: outdated label versions, clinical trial abstracts from before a supplemental NDA was approved, patient forum posts, news articles from regulatory disputes, litigation documents, and medical education content of variable quality. There is no guarantee that a given AI’s drug information reflects the current approved indication, the current boxed warning, or the current contraindication language.

For a brand manager, that creates a specific set of problems. The AI might be describing a drug’s efficacy profile using data from a failed Phase 3 indication. It might be describing off-label uses without the legal hedging a human medical science liaison would apply. It might be citing adverse event rates from an early clinical trial that predate the post-marketing safety updates on the current label. It might be recommending a competitor first.

None of these problems appear in your social media dashboard.

The Regulatory Exposure You Are Not Tracking

The FDA has been deliberate about not rushing AI-specific pharmaceutical guidance, but the agency has not been silent. The 2023 discussion paper on AI in drug development acknowledged that the use of AI to generate or transmit drug information raises questions about promotional standards, fair balance requirements, and adverse event reporting obligations. The European Medicines Agency published its AI workplan in 2024, with explicit language about the need for manufacturers to monitor AI-generated information that could constitute promotional material.

The key regulatory question — still unresolved in most jurisdictions — is whether a pharmaceutical company has an obligation to correct, report, or respond to AI-generated content that misrepresents its drug.

The analog from social media is instructive. After years of ambiguity, FDA’s 2014 guidance on social media made clear that companies have an obligation to submit Form 3500A reports for adverse event information encountered online, including on third-party platforms, when the company becomes aware of it. The phrase “when the company becomes aware” carried enormous compliance weight. It created an incentive to monitor. It created liability for willful blindness.

No equivalent AI-specific guidance exists yet. But the legal logic is likely to be the same. If a patient tells an AI chatbot about a side effect they experienced, and the platform later trains on that conversation, and the company could have detected the resulting signal through reasonable monitoring, the question of when the company “became aware” becomes a litigation variable.

Companies that build AI monitoring infrastructure now are not being paranoid. They are avoiding the position of having to argue, in a future regulatory enforcement action, that they had no way of knowing what AI was saying about their products.

<blockquote> “By 2026, an estimated 75% of drug information queries from HCPs in digital channels will pass through or be influenced by generative AI interfaces, up from under 15% in 2022.” (IQVIA Institute for Human Data Science, Digital Health Trends in Pharma Report, 2024) </blockquote>

Brand Share of Voice Has a New Denominator

Share of voice has always been a blunt instrument. Measuring how often your drug is mentioned relative to competitors across paid, earned, and owned media gives you a directional signal, but not a causal one. The traditional denominator — total media mentions — was always noisy and hard to normalize.

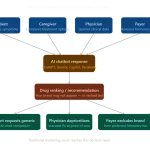

AI changes the denominator problem by adding a new channel with unique properties. When a physician asks an AI “which SGLT2 inhibitor should I consider for a heart failure patient already on a loop diuretic,” the AI gives a recommendation. That recommendation is not a mention. It is a preference — one generated without any promotional input from the competing brands, based entirely on how the AI’s training data weighted the clinical literature, safety data, and comparative effectiveness evidence.

If ChatGPT recommends empagliflozin 70 percent of the time in response to that query and dapagliflozin 20 percent of the time, that is actionable intelligence. It tells Boehringer Ingelheim and AstraZeneca something about how their clinical evidence has been absorbed, synthesized, and ranked by the most widely used information channel their target audience now uses. It tells them whether their medical education investments, publication strategies, and congress presence are being reflected in how AI interprets the literature.

This is not the same as social media share of voice. It is closer to an always-on medical knowledge audit: one that runs 24 hours a day and answers in many languages, whether or not the model behind it has caught up with the latest label update.

Platforms like DrugChatter are being built to track exactly this. Rather than crawling public posts for drug mentions, AI monitoring tools query AI platforms systematically and repeatedly — across different question formulations, across different clinical contexts, and across different patient profiles — to build a statistically robust picture of how an AI responds to drug-related questions. The output is not a sentiment score. It is a recommendation pattern.
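
A recommendation pattern of this kind can be sketched as a simple tally. The example below is a minimal illustration, not a description of how DrugChatter or any other product works. It treats the first tracked drug named in a response as the "recommended" one, which is a crude proxy, and the sample responses are made up.

```python
from collections import Counter

# Drug names from the SGLT2 example above, in lowercase for matching.
TRACKED = ["empagliflozin", "dapagliflozin"]

def first_mentioned(response, tracked=TRACKED):
    """Return the tracked drug named earliest in the response, or None."""
    text = response.lower()
    hits = {drug: text.find(drug) for drug in tracked if drug in text}
    return min(hits, key=hits.get) if hits else None

def recommendation_share(responses, tracked=TRACKED):
    """Fraction of responses in which each tracked drug is named first."""
    if not responses:
        return {drug: 0.0 for drug in tracked}
    counts = Counter(first_mentioned(r, tracked) for r in responses)
    return {drug: counts[drug] / len(responses) for drug in tracked}

# Made-up snippets standing in for recorded AI output.
sample = [
    "Empagliflozin is a reasonable first choice; dapagliflozin is an alternative.",
    "Consider empagliflozin given the heart failure data.",
    "Dapagliflozin or empagliflozin could both be considered.",
    "Discuss options with a cardiologist.",
]
print(recommendation_share(sample))
# {'empagliflozin': 0.5, 'dapagliflozin': 0.25}
```

The shares need not sum to one, because some responses name no tracked drug at all. A production system would need a more careful extraction step than first mention, since a drug can be named first only to be ruled out.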

The Three Failure Modes AI Monitoring Catches That Social Doesn’t

Failure Mode 1: Outdated Clinical Positioning

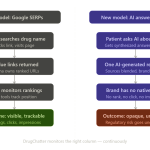

When Wegovy received FDA approval in March 2024 to reduce the risk of major adverse cardiovascular events in adults with established cardiovascular disease and either obesity or overweight, the label change was significant. It gave Novo Nordisk a new promotional claim, and a new competitive differentiation point against other GLP-1s that lack a CV indication.

Social media captured some of the patient and physician reaction to the approval. But social media could not tell you whether, six months after the approval, the leading AI platforms were accurately reflecting the new indication when answering queries about Wegovy’s cardiovascular benefits. Only systematic AI monitoring could reveal that.

If an AI trained before the label update continues to describe Wegovy purely as a weight-management drug without flagging the CV indication, Novo Nordisk’s medical education investment in that new indication is being partially negated in a channel the company cannot see. That is a direct competitive and commercial impact, not a hypothetical one.

Failure Mode 2: Amplified Adverse Event Signals

Social listening programs exist in part to capture adverse event signals — reports of side effects, drug interactions, or unexpected clinical outcomes that might warrant pharmacovigilance review. The methodology is imperfect, but it generates data.

AI monitoring adds a different kind of adverse event signal. It captures how AI is synthesizing and presenting adverse event information to the people who use it. An AI that consistently leads its description of a drug with its boxed warning, for example, is shaping prescriber caution even for patients where the benefit-risk profile is clearly favorable. An AI that misattributes a competitor’s side effect profile to your drug is doing active brand damage every time a physician or patient asks the relevant question.

These are not adverse events in the pharmacovigilance sense. But they are adverse outcomes for patients who are steered toward less appropriate therapy because of inaccurate AI responses. And for companies tracking how their drugs are discussed, they are exactly the kind of signal that warrants response.

Failure Mode 3: Competitor Displacement in AI Recommendation Logic

The most commercially sensitive failure mode is the simplest. AI recommends your competitor first.

This happens not because AI platforms are paid to prefer competitors. It happens because recommendation logic in LLMs reflects the weight of clinical evidence, publication frequency, guideline citations, and safety profile discussions in training data. A drug with more published Phase 3 trials, more guideline citations, and a cleaner safety discussion in the medical literature will, all else equal, score better in AI recommendation logic.

That means your publication strategy — the conferences where you present data, the journals where you publish outcomes studies, the clinical practice guidelines you support — now has a direct impact on AI recommendation patterns. It is no longer just about influencing prescribers. It is about influencing the training signal that shapes what AI tells prescribers.

Companies that understand this dynamic can adjust their medical affairs strategy accordingly. Companies that are not monitoring AI recommendations will not know they have a displacement problem until it shows up in script data, six months late.

Why Social Media Listening Cannot Be Retooled for This

The instinct in many pharmaceutical brand teams, when confronted with a new monitoring challenge, is to ask whether the existing social listening vendor can add a module. It is a reasonable instinct — integration is cheaper than new infrastructure. But the technical and methodological differences between social listening and AI monitoring are large enough that repurposing social tools is the wrong approach.

Social listening is fundamentally retrospective. It indexes content that has been published. A social listening tool can tell you what was said about your drug on Reddit last Tuesday. It cannot tell you what ChatGPT will say about your drug tomorrow when a physician asks.

AI monitoring is prospective and query-based. It works by systematically asking AI platforms questions — the same questions your target audiences are asking — and recording, analyzing, and tracking the responses over time. The methodology requires a query library (a structured set of clinically relevant prompts that represent real-world use cases), a testing cadence (because AI responses can change as models update), and a response analysis framework that can interpret nuanced clinical language at scale.
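
The query-based loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: `run_library`, `Record`, and `fake_platform` are hypothetical names, and the stub client stands in for whatever vendor API a real program would call.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List

@dataclass(frozen=True)
class Record:
    platform: str
    prompt_id: str
    response: str
    asked_at: str  # ISO 8601 timestamp, UTC

def run_library(
    library: Dict[str, str],
    platforms: Dict[str, Callable[[str], str]],
    repeats: int = 3,
) -> List[Record]:
    """Ask every prompt on every platform `repeats` times and log each answer."""
    records = []
    for platform, ask in platforms.items():
        for prompt_id, prompt in library.items():
            for _ in range(repeats):
                records.append(Record(
                    platform=platform,
                    prompt_id=prompt_id,
                    response=ask(prompt),
                    asked_at=datetime.now(timezone.utc).isoformat(),
                ))
    return records

# Placeholder client: a real program would call each vendor's API here.
def fake_platform(prompt: str) -> str:
    return f"(stub answer to: {prompt})"

library = {
    "sglt2-hf-01": "Which SGLT2 inhibitor should I consider for a heart "
                   "failure patient already on a loop diuretic?",
}
records = run_library(
    library, {"platform_a": fake_platform, "platform_b": fake_platform}
)
print(len(records))  # 6 = 2 platforms x 1 prompt x 3 repeats
```

Timestamping every record is what turns a one-off experiment into a time series: the same prompt ID can be compared across dates and model versions.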

Social listening vendors have none of this infrastructure, because it is not a crawling problem. It is a querying problem. The tools, APIs, and analytical frameworks are different, and the compliance implications — particularly around what constitutes “awareness” for pharmacovigilance purposes — require specialist legal input that most social listening programs have not had to develop.

DrugChatter was built specifically for the query-based AI monitoring use case, with a query library designed around clinical workflows, regulatory considerations, and competitive intelligence needs. That specificity matters, because a generic AI monitoring tool that asks “what is [drug name]” is not generating the clinical intelligence that pharmaceutical brands actually need.

The Medical Affairs Angle: AI as a Knowledge Competitor

Medical science liaisons have spent years building relationships with key opinion leaders. Their value proposition is access to depth — to clinical data, real-world evidence, and disease area expertise that a prescriber cannot easily find on their own. That value proposition is under pressure.

When a KOL can ask Claude to synthesize the entire published literature on CDK4/6 inhibitors in HR+/HER2- metastatic breast cancer in thirty seconds, the information access gap that the MSL was filling narrows. The MSL’s competitive advantage shifts from information delivery to interpretation, relationship, and institutional trust. That is a meaningful change in the role — and it is happening faster than most medical affairs organizations have planned for.

For the pharmaceutical company, AI as a knowledge competitor creates a new strategic question. If an AI platform is regularly synthesizing your clinical data — accurately or not — and presenting it to the prescribers you are investing MSL resources to reach, you need to know what that AI is saying. You need to know whether it is accurate. You need to know whether it is favorable. And you need to know whether the responses are consistent with your approved label and promotional materials.

This is not an abstract strategic concern. It is a resource allocation question. If AI is already providing accurate, favorable, label-consistent information to high-decile prescribers in your target segments, the marginal value of an MSL call in that segment may be different than you assumed. If AI is providing inaccurate or competitor-favoring information, the priority and message of the MSL call needs to change.

Neither decision can be made without knowing what the AI is actually saying.

Patient-Facing AI: The Adherence and Expectation Problem

The physician side of the AI monitoring equation gets more attention in pharmaceutical discussions, but the patient side may be more immediately consequential. Patients are using AI to research their diagnoses, understand their prescriptions, and decide whether to fill them.

Consider a patient newly prescribed apixaban for atrial fibrillation. Before filling the prescription, they ask an AI chatbot what apixaban is, what the side effects are, and whether it is safe for someone with their medical history. The AI’s response shapes the patient’s expectations, their willingness to adhere, and in some cases their decision about whether to fill the prescription at all.

If the AI over-emphasizes bleeding risk without contextualizing the absolute risk reduction in stroke prevention, the patient may not fill the prescription. If the AI mentions the cost without noting patient assistance programs, a price-sensitive patient may abandon the medication before trying. If the AI’s description of the dosing regimen is inaccurate, the patient may take the drug incorrectly and experience a preventable adverse outcome.

All of these scenarios are playing out right now, at scale, in patient populations for every major drug category. No one in the pharmaceutical industry has a clear picture of how often they are happening, how consistent the AI responses are, or what the adherence impact is.

Measuring this requires AI monitoring specifically designed around patient-facing queries — which use different language, different clinical contexts, and different informational needs than HCP-facing queries. The query libraries have to be built differently. The analytical frameworks have to account for health literacy variation and the emotional dimensions of patient decision-making.

This is specialized work. But the commercial stakes are direct. If AI-generated patient information is suppressing adherence for your drug, you have a revenue problem you cannot see in your current monitoring infrastructure.

The Competitive Intelligence Layer

Pharmaceutical competitive intelligence teams have historically relied on a combination of conference proceedings, published clinical trial results, FDA approval announcements, court filings in patent disputes, and field intelligence from the sales force. These are all lagging signals: they tell you what a competitor has done, not what they are planning or how their positioning is landing.

AI monitoring adds a real-time competitive intelligence layer that no previous technology has provided. When you systematically query AI platforms about your competitor’s drugs — the same way you query them about your own — you learn several things that traditional CI cannot tell you.

First, you learn how AI is weighting your competitor’s clinical evidence relative to yours. If AI consistently recommends a competitor’s drug first for a given patient profile, it is a signal about where the comparative clinical literature sits, not just about marketing execution. That is a medical affairs input, not just a brand team input.

Second, you learn how AI is characterizing your competitor’s safety profile. If an AI consistently cites a boxed warning for a competitor drug in a patient population where the prescriber might otherwise consider that drug, it represents a competitive advantage for your product — one that your commercial team should understand and that your MSLs should be prepared to discuss.

Third, you learn how AI responds to patient objections and questions about your competitor. The questions patients ask AI about drugs reflect concerns that are not always captured in traditional market research, and a monitoring program can pose those same questions itself. How an AI answers a query like “why did my doctor switch me from [competitor] to [your drug]” tells you something about how the real-world switching dynamics in your market are being explained to patients.

None of this intelligence requires any interaction with competitor employees, confidential documents, or restricted information. It is all derived from asking the same publicly accessible AI platforms that your customers are already using.

The Liability Architecture: Who Owns What AI Says

The legal question of AI liability in pharmaceutical contexts is genuinely unsettled. But the contours of how it is likely to develop are visible from adjacent areas of law.

In the social media context, pharmaceutical companies have been held to FDA promotional standards for content they post, sponsor, or control — but generally not for third-party content they cannot control. The “awareness” standard created an obligation to monitor and report, but not an obligation to retroactively correct every inaccurate third-party post.

AI is different in two respects. First, AI platforms are not passive content hosts. They actively synthesize and present information. A user asking ChatGPT about a drug is not reading a third-party post — they are receiving a generated response that the AI presents as authoritative. The user’s perception of that response as reliable creates a different harm profile than a random Reddit post that the user might discount.

Second, AI responses are systematic and consistent in ways that social media posts are not. A single inaccurate Reddit post reaches whoever sees it. An AI response that consistently mischaracterizes a drug’s safety profile reaches every user who asks the relevant question — potentially millions of queries per month. The scale of systematic AI misinformation about a drug is qualitatively different from the scale of social media misinformation.

Whether this means pharmaceutical companies have an obligation to engage with AI platforms to correct inaccuracies, submit adverse event reports based on AI-observed patient disclosures, or take other proactive steps — these questions are coming to the FDA’s desk regardless of whether companies are ready for them. Companies that have already built AI monitoring programs will have data to work with when the guidance arrives. Companies that have not will be constructing their position from scratch under regulatory time pressure.

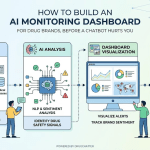

What a Mature AI Monitoring Program Looks Like

A sophisticated pharmaceutical AI monitoring program has four components, each of which requires different technical and analytical capabilities.

The first is a query library — a structured, validated set of questions designed to probe how AI responds to clinically relevant queries about your drug. This library should cover: indication-level questions (what is [drug] used for), dosing and administration questions, safety and contraindication questions, comparative effectiveness questions (how does [drug] compare to [competitor]), and patient-specific scenario questions (is [drug] appropriate for a patient with [comorbidity]). The library should be built with input from medical affairs, regulatory, pharmacovigilance, and commercial teams, because each function needs different outputs.
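
The five question types listed above can be expanded from templates. The sketch below is one possible structure, not a validated library: the template wording, the `build_library` helper, and the example drug names are all illustrative assumptions.

```python
from itertools import product

# One template per question category named in the text.
TEMPLATES = {
    "indication": "What is {drug} used for?",
    "dosing": "What is the usual dosing and administration of {drug}?",
    "safety": "What are the contraindications and warnings for {drug}?",
    "comparative": "How does {drug} compare to {competitor}?",
    "scenario": "Is {drug} appropriate for a patient with {comorbidity}?",
}

def build_library(drug, competitors, comorbidities):
    """Expand the templates into a de-duplicated list of categorized prompts.

    Both lists must be non-empty, otherwise no prompts are produced.
    """
    library, seen = [], set()
    for category, template in TEMPLATES.items():
        for competitor, comorbidity in product(competitors, comorbidities):
            # str.format ignores keyword arguments a template does not use.
            prompt = template.format(
                drug=drug, competitor=competitor, comorbidity=comorbidity
            )
            if prompt not in seen:
                seen.add(prompt)
                library.append({"category": category, "prompt": prompt})
    return library

library = build_library(
    "apixaban",
    competitors=["rivaroxaban", "dabigatran"],
    comorbidities=["chronic kidney disease"],
)
print(len(library))  # 6 prompts: 3 single-drug, 2 comparative, 1 scenario
```

Keeping the category on each prompt lets medical affairs, regulatory, pharmacovigilance, and commercial teams each filter the results to the questions they care about.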

The second is a testing methodology — a systematic cadence for running the query library against the major AI platforms, recording responses, and tracking changes over time. This is not a one-time snapshot. AI models update, sometimes with major version releases and sometimes with incremental fine-tuning. A response that was accurate six months ago may not be accurate today. The testing cadence needs to be frequent enough to catch material changes in a clinically relevant timeframe.
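
Catching material changes between test runs amounts to comparing two snapshots. The following sketch assumes each run has already been summarized as a share per drug. The `flag_shifts` name, the 10-point threshold, and the "current" figures are illustrative assumptions; the "previous" figures reuse the hypothetical 70/20 split from earlier in this article.

```python
def flag_shifts(previous, current, threshold=0.10):
    """Return {drug: change} for drugs whose share moved by at least `threshold`."""
    shifts = {}
    for drug in sorted(set(previous) | set(current)):
        delta = current.get(drug, 0.0) - previous.get(drug, 0.0)
        if abs(delta) >= threshold:
            shifts[drug] = round(delta, 3)
    return shifts

# Shares from two hypothetical monitoring runs, e.g. before and after a model update.
previous = {"empagliflozin": 0.70, "dapagliflozin": 0.20}
current = {"empagliflozin": 0.55, "dapagliflozin": 0.35}
print(flag_shifts(previous, current))
# {'dapagliflozin': 0.15, 'empagliflozin': -0.15}
```

What counts as a material shift is a judgment call for each program. A fixed threshold like this one ignores sample size, so a real implementation would likely pair it with a statistical test.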

The third is an analysis framework — the methodology for interpreting AI responses at scale. Individual responses can be read by a medical affairs professional. Ten thousand responses per month require NLP-based classification, sentiment analysis calibrated to clinical language, and a response taxonomy that can identify specific types of inaccuracy, competitive displacement, or safety mischaracterization.
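
As a much simpler stand-in for the NLP classification described above, a response taxonomy can start with rule-based omission checks. The sketch below uses plain substring matching; the `LabelCheck` structure, the check names, and the phrases are hypothetical, and "Drug X" is a placeholder rather than a real product.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class LabelCheck:
    name: str
    any_of: List[str]  # the check passes if any one phrase appears

def classify(response: str, checks: List[LabelCheck]) -> List[str]:
    """Return an 'omits:<name>' tag for every check the response fails."""
    text = response.lower()
    missing = [
        f"omits:{check.name}"
        for check in checks
        if not any(phrase.lower() in text for phrase in check.any_of)
    ]
    return missing or ["no_omissions_detected"]

checks = [
    LabelCheck("cv_indication", ["cardiovascular", "heart attack", "stroke"]),
    LabelCheck("boxed_warning", ["boxed warning"]),
]
print(classify("Drug X lowers blood sugar. It carries a boxed warning.", checks))
# ['omits:cv_indication']
```

Substring rules cannot detect negation, misattribution, or subtly wrong numbers, so they are best treated as a first-pass filter that routes responses to a clinically trained reviewer or a stronger classifier.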

The fourth is a governance and escalation process — the internal workflow that determines what happens when the monitoring program identifies a material issue. Who reviews a finding that AI is consistently omitting a new approved indication? Who decides whether to submit an adverse event report based on a patient disclosure detected in AI response data? Who engages with the AI platform when a systematic inaccuracy is identified? These are not technical questions. They are organizational and legal questions that need to be resolved before the monitoring program goes live.

DrugChatter’s platform is designed to address the first three components — the query library, testing methodology, and analysis framework — at the level of clinical specificity that pharmaceutical brands require. The governance question is one that each company’s legal and compliance teams need to own.

The Publication Strategy Implication

One finding that consistently surprises pharmaceutical executives when they first engage with AI monitoring data is how directly their publication strategy shows up in AI recommendation patterns.

Large language models are trained on text. The text that appears most frequently in high-quality, widely cited sources has the most influence on how the model responds to related questions. For pharmaceutical companies, this means that the clinical data published in high-impact journals, cited in major guidelines, and presented at high-visibility congresses has a disproportionate influence on how AI characterizes their drugs.

This is not a new insight in medical affairs — publication strategy has always been partly about shaping clinical perception. But AI makes the feedback loop both faster and more measurable. A well-designed AI monitoring program can detect, within months of a major publication, whether and how the new data is being reflected in AI responses. That gives medical affairs teams an empirical signal about publication impact that was previously available only through the much noisier signal of prescribing behavior.

It also creates a clear investment case for publication strategy spending. If systematic AI monitoring shows that a competitor’s drug is being recommended first in a patient segment where your drug has superior data, and that pattern correlates with the competitor’s more extensive publication record in that segment, the path to closing the gap is identifiable. You do not need to reverse-engineer prescriber behavior. You need to generate and publish the data that will shift the AI’s synthesis.

This feedback loop — monitor AI, identify gaps, design publications to close them, monitor AI again — is a practical example of how AI monitoring integrates into pharmaceutical commercial and medical strategy rather than sitting alongside it.

The Global Complication

The AI monitoring challenge is complicated by the fact that AI usage patterns vary significantly by market. In the United States, ChatGPT and Claude dominate physician AI usage for drug information. In China, Baidu’s ERNIE Bot and Alibaba’s Tongyi Qianwen are the relevant platforms. In Germany, many HCPs access AI through Microsoft Copilot integrated into clinical workflow tools. In Japan, LINE’s AI assistant has significant patient-facing penetration.

Each of these platforms has different training data, different response tendencies, and different relationships with pharmaceutical regulatory environments. A monitoring program designed only for US-based AI platforms will miss the majority of the global AI drug information landscape.

For multinational pharmaceutical companies, this means AI monitoring needs to be a global function — one that is coordinated across affiliates, aligned with local regulatory requirements, and resourced at a level commensurate with the commercial importance of each market.

The query library needs to be translated and culturally adapted, not just linguistically. The clinical context in which a drug is being asked about differs by market — disease prevalence, treatment guidelines, prescribing patterns, and patient expectations are all market-specific. A query that is clinically relevant in the US may not reflect how the drug is used in Japan, and vice versa.

This is a real operational challenge for companies used to treating digital monitoring as a US-centric function. It is also an opportunity — companies that build global AI monitoring programs early will have a competitive intelligence advantage in international markets that is genuinely difficult to replicate.

How Regulatory Affairs Should Be Using This Data

The primary audience for AI monitoring data in most pharmaceutical organizations will be brand teams and medical affairs. But regulatory affairs has a distinct and underutilized claim on this data.

The FDA’s Framework for its Real-World Evidence Program and the agency’s 2024 digital health guidance both emphasize the importance of real-world evidence in post-market settings. AI monitoring data, specifically systematic records of how AI platforms describe approved drugs, could serve as a new input that regulatory affairs teams incorporate into their post-market surveillance infrastructure.

From a practical standpoint, this means regulatory affairs should be involved in the design of the query library, to ensure that the monitoring program captures the specific types of misinformation that are most relevant from a safety and labeling standpoint. It means regulatory affairs should receive escalations when the monitoring program identifies AI responses that describe the drug in ways inconsistent with the current label. And it means regulatory affairs should be developing a position, in advance, on how the company will engage with FDA if the agency begins asking questions about AI-generated drug information.

Companies that treat AI monitoring as a commercial intelligence function only — a brand team capability disconnected from regulatory — are leaving the most defensible compliance argument on the table.

The Data Privacy Wrinkle

Any discussion of AI monitoring in pharmaceutical contexts has to address data privacy, because the queries being asked of AI platforms frequently contain patient health information. When a physician asks an AI “my patient has stage 3 CKD, BMI of 42, and HbA1c of 9.2, which GLP-1 would you recommend,” they may be inadvertently sharing protected health information — depending on whether the AI platform has a BAA in place with their employer and whether the platform logs queries for training purposes.

This creates a monitoring wrinkle. The most clinically rich AI queries — the ones most likely to contain information relevant to pharmacovigilance and brand intelligence — are also the ones most likely to contain PHI. A pharmaceutical AI monitoring program cannot and should not attempt to capture individual patient data from AI interactions. What it can do is query AI platforms with synthetic patient scenarios that represent realistic clinical contexts without including real patient identifiers.

This is not a limitation — it is actually how a well-designed AI monitoring query library works. The goal is not to intercept individual physician queries. It is to understand how AI responds to the types of queries physicians are likely to ask. Synthetic scenarios accomplish this without any privacy exposure.
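
A synthetic scenario generator along these lines might look like the sketch below. It draws clinical values from fixed ranges rather than from any patient record. The ranges, the prompt wording, and the `synthetic_scenarios` name are illustrative assumptions and would need clinical review before use.

```python
import random

def synthetic_scenarios(n, seed=0):
    """Build n prompts from randomly drawn clinical values, not patient records."""
    rng = random.Random(seed)  # seeded so a run can be reproduced exactly
    prompts = []
    for _ in range(n):
        stage = rng.choice([2, 3, 4])
        bmi = rng.randint(30, 45)
        hba1c = round(rng.uniform(7.0, 10.5), 1)
        prompts.append(
            f"My patient has stage {stage} CKD, BMI of {bmi}, and HbA1c of "
            f"{hba1c}. Which GLP-1 would you recommend?"
        )
    return prompts

for prompt in synthetic_scenarios(3):
    print(prompt)
```

Seeding the generator means the same scenario set can be replayed against a new model version, so any change in the answers reflects the model and not the prompts.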

The privacy question becomes more complex when AI monitoring attempts to capture patient-facing disclosures — cases where a patient tells an AI about a side effect they experienced. This is an area where pharmaceutical legal teams need to be involved in program design from the start, and where the regulatory interpretation of “awareness” for pharmacovigilance purposes may need to be developed in consultation with FDA before a clear protocol can be established.

Building the Business Case Internally

The pharmaceutical executives most likely to read this article are not the ones who need to be convinced that AI monitoring matters. They are the ones trying to build an internal business case that moves the function from “interesting emerging capability” to funded program.

The business case has three components.

The first is risk mitigation. The compliance and regulatory exposure created by operating without AI monitoring is real. The cost of building a monitoring program is small compared to the cost of a regulatory enforcement action based on undetected adverse events or uncorrected labeling misrepresentations in AI channels. This argument works with general counsel and regulatory affairs.

The second is commercial intelligence. AI recommendation patterns are a direct signal about how clinical evidence is being synthesized in the most rapidly growing prescriber information channel. Companies with AI monitoring data can identify competitive displacement, track publication impact, and calibrate medical affairs resource allocation in ways that are not possible with existing tools. This argument works with brand teams and commercial leadership.

The third is first-mover advantage. AI monitoring methodology is still being established. Companies that build programs now will develop proprietary query libraries, analytical frameworks, and governance processes that will be difficult for later entrants to replicate quickly. Any regulatory guidance that arrives is likely to favor companies with existing monitoring programs. Moving early is strategically and legally preferable to moving in response to a regulatory prompt. This argument works with senior executives and boards.

DrugChatter and the Emerging Monitoring Stack

The AI monitoring category for pharmaceutical companies is genuinely new. The vendors that exist today — DrugChatter among the most sector-specific of them — are building against a use case that did not exist three years ago. The methodologies are evolving. The regulatory framework is evolving. The AI platforms themselves are evolving.

What is not evolving is the directional trend. AI is taking share of clinical decision-making time from every other information source. The pace of adoption among HCPs and patients is accelerating, not plateauing. The query volumes are increasing. The range of clinical questions being directed at AI platforms is expanding.

Companies that wait for this trend to mature before building monitoring infrastructure are making the same mistake that social media late adopters made in 2012 — they will spend years catching up to a capability gap that early movers are using for competitive advantage. The difference is that the regulatory stakes for pharmaceutical AI monitoring are higher than they were for social media. The adverse event implications are more complex. The label compliance questions are more specific.

DrugChatter’s query-based approach, with pharmaceutical-specific query libraries and a clinical language analysis framework, represents the type of purpose-built monitoring infrastructure that the sector needs. Generic AI monitoring tools — those built for consumer brand tracking or general enterprise AI governance — lack the clinical specificity and regulatory context to be directly useful for pharmaceutical compliance and commercial intelligence applications.

The pharmaceutical industry has always had to monitor what is being said about its drugs. The channel has changed. The tools need to change with it.

Key Takeaways

1. The channel has shifted. Physicians and patients are increasingly using AI platforms — not search engines or social media — as their first source of drug information. Pharmaceutical monitoring programs that focus on social media are watching a channel that is losing clinical decision-making share.

2. AI responses are systematic, which makes them both valuable as intelligence and dangerous as misinformation vectors. Unlike social media posts, AI responses to a given query tend to be consistent in substance, even when the exact wording varies between sessions. This means inaccurate AI information about a drug can reach most users who ask the same question.

3. The regulatory exposure is real and growing. FDA’s “awareness” standard for pharmacovigilance obligations will likely apply to AI-observed adverse event disclosures. Companies without AI monitoring programs will be in a weaker position when guidance arrives.

4. AI recommendation patterns are a direct measure of medical affairs effectiveness. If your publication strategy, congress presence, and guideline citations are not being reflected in AI recommendation logic, your medical education investment is producing less clinical impact than your investment model assumes.

5. Social listening tools cannot be retooled for AI monitoring. The methodologies are different. Social listening is retrospective and crawl-based. AI monitoring is prospective and query-based. Each requires different infrastructure, different vendor capabilities, and different analytical frameworks.

6. The business case covers risk, intelligence, and first-mover advantage. These three arguments address the concerns of general counsel, commercial leadership, and senior executives, respectively. All three are supported by observable trends in AI adoption and regulatory posture.

7. Query library design is the foundational technical decision. A monitoring program is only as valuable as the clinical relevance of the queries it runs. The query library needs input from medical affairs, regulatory, pharmacovigilance, and commercial teams to be operationally useful across the pharmaceutical company’s functions.

FAQ

Q1: Does AI monitoring create an obligation to report adverse events that patients disclose to AI chatbots?

The short answer is that this is legally unsettled, and pharmaceutical companies should develop a position before they start monitoring, not after. The FDA’s existing framework for social media adverse event reporting uses an “awareness” standard: companies that become aware of adverse event information have reporting obligations, regardless of the channel. If a systematic AI monitoring program surfaces patient disclosures of side effects, the question of whether those disclosures constitute “awareness” for the purposes of mandatory reporting on Form FDA 3500A is one that needs to be resolved with regulatory counsel. Companies that avoid monitoring to avoid awareness are not avoiding liability. They are avoiding the data that would allow them to manage it responsibly.

Q2: How often do AI models update in ways that materially change their responses about drugs?

Major model version updates — which can substantially change response patterns — occur several times per year for the leading platforms. Minor updates and fine-tuning are more frequent. A pharmaceutical AI monitoring program should be designed to detect material changes in response patterns on at least a monthly cadence, with the capability to run ad hoc comparisons when a major model update is announced publicly. Label changes, new clinical data publications, and significant safety communications should also trigger targeted AI monitoring reviews, because these events create conditions where AI responses may diverge from current regulatory status.
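One way to make “material change” concrete is to compare how often each drug is mentioned across the same query set before and after a model update. The sketch below assumes responses have already been collected as plain text; the function names, the 20-percentage-point threshold, and the placeholder product names `DrugA` and `DrugB` are all illustrative assumptions rather than an established standard.

```python
from collections import Counter


def mention_rates(responses, drug_names):
    """Fraction of responses that mention each drug (case-insensitive substring match)."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for drug in drug_names:
            if drug.lower() in lowered:
                counts[drug] += 1
    total = len(responses)
    return {drug: (counts[drug] / total if total else 0.0) for drug in drug_names}


def material_changes(baseline, current, drug_names, threshold=0.20):
    """Return drugs whose mention rate moved by at least `threshold` (assumed cutoff)."""
    before = mention_rates(baseline, drug_names)
    after = mention_rates(current, drug_names)
    return {
        drug: round(after[drug] - before[drug], 2)
        for drug in drug_names
        if abs(after[drug] - before[drug]) >= threshold
    }


# Placeholder responses for illustration; not real model output.
baseline = ["Consider DrugA first.", "DrugA or DrugB are options.",
            "DrugA is preferred.", "DrugA."]
current = ["DrugB is preferred.", "Consider DrugB.",
           "DrugA or DrugB.", "DrugB first."]

changes = material_changes(baseline, current, ["DrugA", "DrugB"])
print(changes)
```

Running the same comparison on a monthly schedule, and again whenever a major model update or label change is announced, gives the ad hoc comparison capability described above. Substring matching is a deliberate simplification; a production program would need to handle brand and generic names, misspellings, and context such as negation.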

Q3: Can pharmaceutical companies engage with AI platforms to correct inaccurate drug information?

Yes, and several have begun to do so, though the processes are not standardized. OpenAI, Anthropic, and Google each offer feedback mechanisms through which inaccurate information in AI responses can be flagged. The regulatory question is whether this engagement constitutes promotional activity and whether it triggers review requirements under FDA promotional guidelines. The answer likely depends on the nature of the correction and the documentation around it. Companies should involve regulatory and legal counsel before initiating any formal engagement with AI platforms about drug information.

Q4: Is AI monitoring applicable to biologics and specialty drugs, or primarily to small molecules?

It is applicable to both, but the monitoring priorities differ. For specialty drugs and biologics — particularly those with complex dosing, restricted distribution programs, or significant safety profiles — the concern is primarily about AI mischaracterizing label requirements or patient selection criteria in ways that could lead to inappropriate use. For small molecules competing in crowded generic categories, the competitive intelligence dimension is more central. In both cases, the patient education and adherence implications of AI monitoring are significant, because patients are as likely to research a specialty biologic as a primary care drug before their first dose.

Q5: How do pharmaceutical companies integrate AI monitoring data into existing market research and brand tracking frameworks?

The cleanest integration point is alongside quarterly brand tracker data, where AI recommendation patterns can be compared to prescriber awareness, consideration, and preference scores from traditional research. AI monitoring data adds a behavioral signal — what the AI platform recommends when asked — to the attitudinal data that brand trackers collect. Over time, the correlation between AI recommendation patterns and prescribing behavior will provide a calibration point that makes AI monitoring data more interpretable within existing commercial frameworks. Companies should also integrate AI monitoring data into their publication planning cycles, using it as a leading indicator of how new clinical data is being absorbed into AI’s clinical synthesis.
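As a rough illustration of the calibration step, the sketch below computes a Pearson correlation between a quarterly AI recommendation share and a brand tracker preference score. The function name and both data series are invented placeholders used only to show the arithmetic; they are not real measurements, and a handful of quarters is far too few points to support a real conclusion.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Invented placeholder values, one per quarter (not real data).
ai_recommendation_share = [0.10, 0.20, 0.30, 0.40]   # share of responses naming the brand
tracker_preference_score = [20, 30, 40, 50]          # prescriber preference, percent

r = pearson(ai_recommendation_share, tracker_preference_score)
print(f"correlation: {r:.2f}")
```

A coefficient near 1 would suggest the two signals move together, while a weak or negative value would suggest that AI recommendation patterns are capturing something the attitudinal tracker does not.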