AI Agents Now Influence Which Drugs Patients Ask For — Here’s the Pharma Playbook

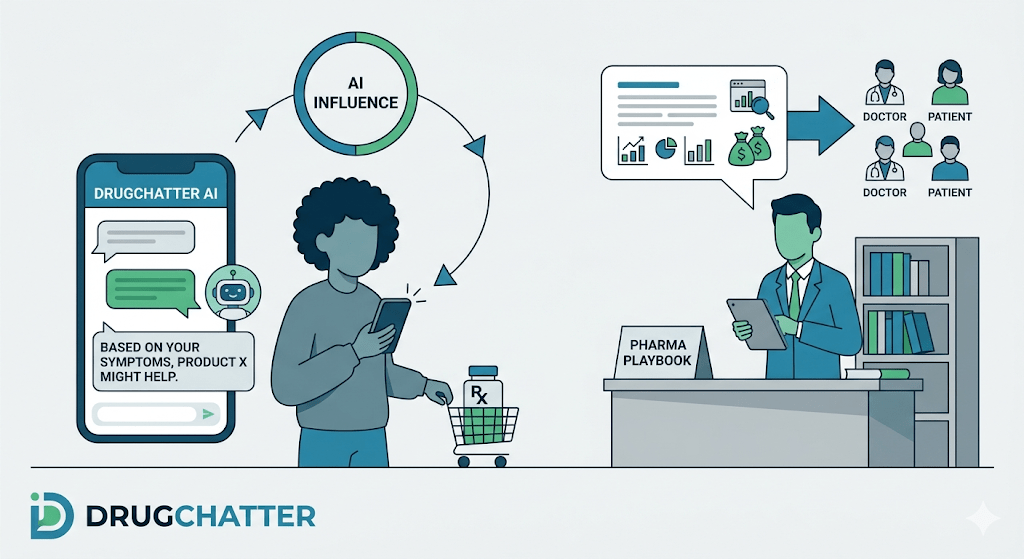

When a patient with newly diagnosed type 2 diabetes asks ChatGPT what medication their doctor might prescribe, the answer they receive shapes what they walk into the clinic expecting. It shapes what they search next, what they tell their pharmacist, and sometimes what they push back on when a physician recommends something different. The AI agent is not neutral. It has read the clinical literature, the Reddit threads, the FDA label, and 500 patient reviews on Drugs.com. It has formed, effectively, an opinion.

Pharma companies built their commercial infrastructure around a world where influence moved in one direction: sales rep to physician, DTC ad to patient, medical education to prescriber. That model is under strain. A 2024 survey by Wolters Kluwer found that 65 percent of patients now use AI tools to research their conditions before or after a clinical encounter. A separate analysis by Doceree put physician use of AI research tools at 72 percent among physicians under 45. The distribution channel for medical information has fractured, and a meaningful share of it now runs through systems that pharma companies did not build, cannot buy ads on, and do not currently monitor at scale.

This article examines how AI agents function as decision influencers in drug selection, what drives the content they produce about specific drugs, how regulatory risk accrues when AI outputs cross into off-label territory, and what a practical brand intelligence operation looks like in 2025. It draws on real enforcement patterns, real drug examples, and the specific data problems that make this harder than conventional social listening.

The Mechanics: How AI Agents Form Drug Preferences

Large language models do not retrieve drug information the way a search engine retrieves web pages. They synthesize. When a user asks GPT-4o whether semaglutide or tirzepatide is better for weight loss, the model generates a response by weighting patterns learned from millions of documents — clinical trial abstracts, prescribing information, patient forums, health journalism, and FDA communications. The model cannot cite every source. It produces a confident, readable synthesis that reflects the aggregate signal in its training data.

That synthesis is not random, and it is not balanced in the way a pharma medical-legal-regulatory review process is balanced. It is weighted toward content that was heavily represented in training data, content that was frequently cited or linked, and content that appeared in authoritative-seeming contexts. A drug that generated substantial peer-reviewed coverage, multiple high-profile trial publications, and broad media pickup will appear in AI responses more confidently, more frequently, and with more nuanced clinical detail than a drug that launched quietly with a narrower evidence base.

This creates a structural advantage for certain drugs and a structural disadvantage for others, independent of clinical efficacy. Ozempic entered training datasets with enormous volume — the New York Times, The Atlantic, congressional testimony, and hundreds of academic papers. Rybelsus, which contains the same active molecule (semaglutide) but in oral form, has a dramatically thinner public footprint. In AI outputs, Ozempic tends to appear as the reference compound; Rybelsus appears as a footnote if at all. The commercial consequence is real: patients who have researched their options via AI arrive in clinic with a drug preference already formed.

The same dynamic operates at the physician level. When a hospitalist asks Perplexity about first-line anticoagulation choices for a patient with atrial fibrillation and chronic kidney disease, the model’s output reflects whatever evidence and expert commentary dominated its training window. If rivaroxaban received more coverage of renal outcomes data than apixaban in that period, that asymmetry will appear in the response. The physician may discount it, but the response still anchors their thinking.

Retrieval-Augmented Generation and the Real-Time Layer

The picture has become more complex since late 2023 with the widespread deployment of retrieval-augmented generation (RAG) systems. Tools like Perplexity AI, ChatGPT with browsing enabled, and Google’s Gemini with Search Grounding do not rely solely on static training data. They query live web sources, synthesize the results, and generate responses that incorporate current information. This means an AI agent can now answer a question about a drug using a journal article published this week.

For pharma companies, RAG systems change the monitoring problem. The relevant question is no longer only what the model learned during training — it is also what web sources the model retrieves when answering health questions, and what those sources say. If a critical systematic review gets published in The Lancet, it can surface in AI responses within days. If a plaintiff law firm publishes a website describing alleged adverse events from a branded drug, that too can enter AI responses via retrieval.

The FDA has not yet published guidance specific to AI-generated drug information, but the agency has made clear in its 2023 and 2024 digital health frameworks that misinformation affecting prescribing decisions is a regulatory concern regardless of its origin. The enforcement mechanism is not yet defined. The risk is.

Real Drug Scenarios: Where AI Influence Is Already Measurable

GLP-1 Receptor Agonists: Ozempic, Wegovy, Mounjaro, Zepbound

No drug class better illustrates the AI influence problem than GLP-1 receptor agonists. Novo Nordisk’s semaglutide products (Ozempic for diabetes, Wegovy for obesity) and Eli Lilly’s tirzepatide products (Mounjaro for diabetes, Zepbound for obesity) have generated extraordinary training data volume through a combination of clinical trial press releases, congressional hearings on drug pricing, shortage-related news coverage, and massive patient community activity on platforms like Reddit’s r/Ozempic, which has 270,000 members.

An analysis of AI responses to weight loss drug queries, conducted by DrugChatter in Q1 2025, found that ChatGPT-4o cited semaglutide in 84 percent of responses to queries about injectable weight loss medications, and tirzepatide in 76 percent. Compounded semaglutide — which the FDA explicitly flagged as a safety concern in October 2023 and again in May 2024 — appeared in 31 percent of those responses without consistent safety caveats. The AI was, in effect, informing patients about an FDA-flagged product category without consistently communicating the FDA’s own warnings.

For Novo Nordisk and Eli Lilly, the brand intelligence question is: what exactly is being said about their drugs, in what context, with what framing? For the FDA, the question is: are AI-generated responses about GLP-1 drugs materially misleading? Those two questions will eventually converge in an enforcement context.

PCSK9 Inhibitors: Repatha and Praluent

Amgen’s evolocumab (Repatha) and Sanofi/Regeneron’s alirocumab (Praluent) represent a case where AI outputs may systematically undersell an approved drug class. PCSK9 inhibitors have robust cardiovascular outcomes data — the FOURIER trial for evolocumab and ODYSSEY OUTCOMES for alirocumab — but they entered training datasets weighted against a backdrop of controversy about their cost-effectiveness and payer restrictions.

When patients with familial hypercholesterolemia ask AI agents about cholesterol management options, the responses frequently foreground statins and ezetimibe, present PCSK9 inhibitors as third-line with insurance caveats, and do not consistently reflect the 2022 ACC/AHA guideline updates that elevated their recommended use. The AI is not wrong. It is reflecting the center of gravity of the text it has read. But it is also disadvantaging a drug class that has since received stronger guideline endorsement, by referencing a clinical landscape that has moved.

This is the training data recency problem. AI agents trained on data through a specific cutoff date will reflect the clinical consensus of that date. Guidelines change. Trial data accumulates. Label revisions occur. The AI does not automatically update. For pharma companies, the gap between current evidence and AI-represented evidence is a brand and a safety issue simultaneously.
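The recency gap can be made concrete with a small check that compares a model's training cutoff against the dates of evidence updates for a drug. This is a minimal sketch: the function name `updates_after_cutoff`, the topic labels, and all dates are hypothetical placeholders, and the check applies only to models answering from static training data rather than live retrieval.

```python
from datetime import date

def updates_after_cutoff(model_cutoff, evidence_updates):
    """Return topics whose latest evidence update postdates the model cutoff."""
    return sorted(
        topic for topic, updated in evidence_updates.items()
        if updated > model_cutoff
    )

# Hypothetical dates for illustration only; not real guideline or label dates.
evidence_updates = {
    "guideline revision": date(2024, 6, 1),
    "label revision": date(2023, 2, 15),
    "outcomes trial publication": date(2024, 11, 20),
}

# Topics the model could not have seen during training.
at_risk = updates_after_cutoff(date(2023, 12, 31), evidence_updates)
```

Anything in `at_risk` is a candidate for targeted queries, since a model with that cutoff could not have learned it during training.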

PD-1/PD-L1 Inhibitors: Keytruda vs. Opdivo

Merck’s pembrolizumab (Keytruda) and Bristol-Myers Squibb’s nivolumab (Opdivo) are both approved for a large and overlapping set of oncology indications. AI agents confronted with questions about immunotherapy options in non-small cell lung cancer, melanoma, or head and neck cancer produce responses that reflect the volume asymmetry in published trial data and media coverage.

Keytruda has accumulated more recent FDA approvals, more KEYNOTE trial publications, and substantially more commercial coverage in healthcare media. AI outputs in oncology contexts consistently favor it as a reference compound. This is not a fabrication. Keytruda’s evidence base is genuinely larger. But it creates a compounding commercial advantage: physicians who informally consult AI tools, and patients who research before specialist visits, encounter Keytruda described with greater clinical specificity and confidence than Opdivo, even in indications where the drugs have comparable data.

BMS has a legitimate brand intelligence interest in monitoring whether the gap in AI representation matches or exaggerates the gap in clinical evidence. It almost certainly exaggerates it, because AI representation tracks volume of text, not nuance of evidence.

The Regulatory Exposure Layer

Off-Label Information in AI Responses

The FDA’s regulation of drug promotion prohibits manufacturers from promoting drugs for uses not approved in the product labeling. The prohibition is clear when a sales rep delivers an off-label claim. It is less clear when ChatGPT describes an off-label use, but the regulatory calculus for pharma companies is not simply about what they said — it is about what the information environment says about their drugs and what that environment does to prescribing behavior.

AI agents routinely describe off-label uses. A query about semaglutide for non-alcoholic steatohepatitis returns detailed discussion of trial results even though the drug is not approved for that indication. A query about ketamine for treatment-resistant depression returns clinical detail substantially richer than the approved Spravato (esketamine) labeling supports, often conflating the two compounds. A query about low-dose naltrexone for autoimmune conditions returns information derived from small trials and patient advocacy sources, without the context that these uses remain experimental.

The FDA does not regulate what AI companies produce. It does regulate what pharmaceutical companies say, do, or allow to happen in their sphere of influence. If a pharma company’s medical affairs team feeds AI systems with content — through publications, medical information responses, or congress materials — and that content contributes to off-label AI outputs, the regulatory exposure is real even if indirect. The agency explored this territory in its 2023 guidance on responding to unsolicited requests for off-label information, and the principles in that document apply at least partially to AI-mediated contexts.

Adverse Event Reporting and AI-Sourced Patient Reports

Pharmacovigilance teams face a new operational problem. Patients increasingly report adverse events not to the FDA’s MedWatch system or to their physicians, but to AI agents, Reddit, TikTok, and other digital channels. When a patient tells ChatGPT they experienced hair loss after starting tirzepatide, that report does not automatically enter a pharmacovigilance database. The information exists. It influences future AI outputs. It does not trigger a regulatory process.

The FDA’s 2020 guidance on electronic health records and the 2022 real-world evidence framework both push in the direction of capturing patient-generated data. Neither provides a clear mechanism for AI-mediated adverse event signals. Pharma companies that monitor AI outputs systematically can identify these signals earlier than companies that rely solely on traditional pharmacovigilance channels. That earlier identification has both a safety value and a regulatory defense value.

Warning Letter Precedents That Apply

The FDA has not yet issued a warning letter specifically addressing AI-generated drug misinformation. But warning letters from the Office of Prescription Drug Promotion (OPDP) provide a useful map of the agency’s logic. Letters to AstraZeneca in 2022 regarding Farxiga social media content, and to Pfizer in 2021 regarding Ibrance promotional materials, both emphasized the principle that misleading information about a drug — including information that omits material risk disclosure — is actionable regardless of the channel through which it is distributed.

The channel-agnostic framing matters. If the FDA extends that logic to AI-generated content, and there is no structural reason it would not, the question becomes: are pharma companies that know AI agents are making material omissions or misrepresentations about their drugs obligated to correct that record? The honest answer is that no one knows yet. The practical answer is that companies that build AI monitoring capabilities now will be positioned to act when the regulatory question crystallizes.

Brand Share of Voice in the AI Layer

Why Traditional SOV Metrics Miss the AI Channel

Share of voice has been a standard pharma commercial metric for decades. It tracks how often a brand appears in paid media relative to competitors. It captures TV GRP data, detail rep calls, journal advertising spend, and digital impressions. It does not capture what AI agents say when a patient or physician asks an unstructured question.

The AI layer is structurally different from paid media in three ways. First, it is not purchased. The content AI agents produce about a drug is not a function of marketing spend; it is a function of the quality, volume, and authority of information that exists in the training and retrieval environment. Second, it is conversational. A patient asking ChatGPT about their medication gets a response calibrated to their specific question, not a static advertisement. The response can be more or less favorable depending on how the question is framed and what sources the model retrieves. Third, it is cumulative. Individual conversations do not change a model’s weights in real time, but RAG systems pull from live sources, so new publications, new news coverage, and new patient community content continuously update what AI agents say.

A brand that has 15 percent of paid media SOV but dominates AI-generated responses to first-line treatment queries has a commercial position that traditional SOV metrics will miss. A brand that has 30 percent of paid media SOV but is consistently described in AI outputs as a second-line option with tolerability concerns has a brand problem that its marketing team may not see coming.
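One way to put a number on the AI side of that comparison is to count how often each brand is named across a sample of AI responses. The sketch below is illustrative only: `ai_share_of_voice`, the brand names, and the sample responses are all hypothetical, and plain substring matching stands in for whatever entity recognition a production pipeline would use.

```python
from collections import Counter

def ai_share_of_voice(responses, brands):
    """Return each brand's share of all brand mentions across responses.

    A response counts once per brand, however many times the brand is named.
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}

# Made-up responses for illustration only.
sample_responses = [
    "BrandA is commonly used first-line; BrandB is an alternative.",
    "Many clinicians start with BrandA.",
    "BrandA or BrandC may be considered.",
    "BrandB is sometimes used when BrandA is not tolerated.",
]
sov = ai_share_of_voice(sample_responses, ["BrandA", "BrandB", "BrandC"])
```

In this toy sample BrandA takes four of seven mentions, a figure that can be set beside its paid media SOV to see whether the two diverge.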

Measuring AI Brand Presence: The Methodological Challenge

Measuring what AI agents say about drugs at scale requires a different infrastructure than social listening. Social listening tools scan public posts on Twitter/X, Reddit, Facebook, and forums. AI outputs are not public posts. They are generated responses to specific queries, and they vary based on query phrasing, model version, temperature settings, and whether browsing is enabled.

Systematic AI brand monitoring requires query design, version tracking, and output classification across multiple models. A rigorous approach involves constructing a library of queries that map to the patient and physician decision points where AI influence is most likely — first-line treatment selection, side effect inquiry, drug comparison, dosing guidance, cost and coverage — then running those queries against multiple models (GPT-4o, Gemini 1.5 Pro, Claude 3.5, Perplexity) at regular intervals and classifying the outputs for brand mention frequency, sentiment, accuracy relative to label, and presence of competitor references.
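The monitoring loop described above amounts to a cross product of queries and models, repeated on a schedule. The following is a minimal sketch under stated assumptions: `ask_model` is a hypothetical stub standing in for real vendor API calls, the `Run` record and example queries are invented for illustration, and the model labels are simply those named in the text.

```python
from dataclasses import dataclass
from datetime import date
from itertools import product

@dataclass(frozen=True)
class Run:
    """One query sent to one model on one date."""
    run_date: date
    model: str
    decision_point: str
    query: str
    response: str

def ask_model(model: str, query: str) -> str:
    """Hypothetical stub; a real version would call each vendor's API."""
    return f"[{model}] placeholder answer to: {query}"

def run_batch(queries, models, run_date):
    """Run every (decision_point, query) pair against every model."""
    return [
        Run(run_date, model, point, query, ask_model(model, query))
        for (point, query), model in product(queries, models)
    ]

queries = [
    ("first-line selection", "What is first-line treatment for condition X?"),
    ("drug comparison", "How does drug A compare with drug B?"),
]
models = ["GPT-4o", "Gemini 1.5 Pro", "Claude 3.5", "Perplexity"]
runs = run_batch(queries, models, date(2025, 1, 6))
```

Storing the run date and model label with every response is what makes later version tracking possible, because the same query can produce different output after a model update.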

DrugChatter is among the platforms purpose-built for this type of monitoring. The platform tracks AI-generated mentions of branded and generic drugs across major LLM interfaces, classifies outputs against FDA-approved labeling, and flags instances where AI responses deviate materially from approved indications or omit required safety information. The output gives brand and medical affairs teams a view of the AI information landscape that they cannot construct manually.

“By 2026, 40 percent of health-related queries on major consumer AI platforms will involve a specific branded or generic drug by name — up from an estimated 18 percent in 2023.” — Gartner Health, Digital Health Predictions Report, 2024

That shift from 18 to 40 percent is not a background trend. It is a redistribution of influence that touches every commercial team in pharma.

Voice of the Customer Through the AI Lens

What Patients Actually Ask AI Agents About Their Drugs

The query patterns that patients use when asking AI agents about drugs are instructive in a way that focus groups and traditional market research are not. They are unsolicited, unguarded, and specific. Patients do not ask AI agents the questions they think researchers want to hear. They ask the questions they are actually worried about.

Analysis of publicly disclosed AI query patterns — from Perplexity’s published usage reports, from academic studies using ChatGPT query logs, and from DrugChatter’s platform data — reveals consistent themes. For any given drug, patients concentrate their AI queries in four areas: side effects they have not told their doctor about, whether a drug is causing a specific symptom they have noticed, how the drug compares to something their friend takes, and whether the drug is worth the cost.

These are not questions that DTC advertising answers. They are not questions that a two-minute physician conversation reliably addresses. They are the space where AI agents currently operate with minimal competition from official pharmaceutical communication, and where the information quality varies most widely.

For a pharma company, this query pattern data is a direct window into unmet communication needs. If patients are systematically asking AI agents about a specific side effect associated with a drug, that is a signal. It may indicate that the effect is more common or more bothersome than clinical trials captured. It may indicate that the prescribing conversation is not adequately preparing patients for what to expect. It may indicate that patient support materials are inadequate. Any of those diagnoses leads to a corrective action with commercial and safety implications.

Physician AI Query Patterns and Prescribing Implications

Physician use of AI tools for clinical decision support is growing faster than most pharma commercial teams have modeled. The Doceree analysis cited earlier found 72 percent AI tool adoption among physicians under 45 for drug-related queries. A 2024 NEJM Catalyst survey found that 38 percent of physicians reported using AI tools to supplement clinical decision-making at least once per week, up from 12 percent in 2022.

The queries physicians run are different from patient queries. They are more precise, more indication-specific, and more likely to invoke clinical guideline language. A physician asking about appropriate anticoagulation for a patient with both atrial fibrillation and advanced CKD is asking a question with a specific evidence-based answer. The AI agent’s response to that query has a direct bearing on what the physician prescribes.

Pharmaceutical companies have traditionally reached physicians through detail reps, speaker programs, medical education, and journal advertising. In a world where 38 percent of prescribing physicians are consulting AI tools weekly, the question of what those tools say has direct commercial relevance. A drug that is correctly positioned in AI responses to high-value clinical queries has a form of presence in the prescribing decision that no detail rep interaction can fully replicate — because it occurs in the private, in-the-moment, problem-solving context of clinical practice.

The Content Ecosystem That Feeds AI Agents

Publications, Preprints, and the Authority Signal

AI training and retrieval systems treat published peer-reviewed literature as high-authority content. A Phase 3 trial published in the New England Journal of Medicine will influence AI outputs more than a similar study in a lower-impact journal. An FDA approval press release processed by major wire services will appear in retrieval results more consistently than a company website posting.

This means the traditional pharma medical affairs playbook — robust publication strategy, data dissemination at major congresses, high-impact journal targeting — has a second-order effect on AI outputs that it did not previously have. A well-executed publication strategy does not only reach physicians who read journals. It feeds the content environment that AI agents draw on when answering questions about a drug.

The preprint problem complicates this. Preprint servers like medRxiv and bioRxiv are heavily indexed by AI retrieval systems. A preprint describing adverse events or challenging efficacy data can enter AI outputs before peer review resolves its methodology. Pharma companies that monitor AI mentions of their drugs need to track preprint activity, because preprints can surface in patient and physician AI queries within days of posting.

Patient Community Content and Its Weight in AI Outputs

Patient forums, Reddit communities, and social platforms generate large volumes of text about branded drugs. This content is uncontrolled, emotionally salient, and frequently specific about adverse experiences. Negative experiences get described in more detail than neutral ones. A patient who tolerated metformin for two years without incident does not write a Reddit post about it. A patient who experienced lactic acidosis does.

AI training data reflects this negativity asymmetry. Models trained on patient-generated content about a drug will over-represent adverse experiences relative to their clinical incidence, because that is what the content environment contains. This is not a flaw in AI design — it is a flaw in the raw data — but it has real consequences for how AI agents describe drug tolerability.
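A worked example with invented numbers shows how large the distortion can be. Suppose 5 percent of patients have an adverse experience, 20 percent of those patients post about it, and only 1 percent of everyone else posts. All three rates are assumptions chosen for illustration, not measured values, and `negative_share_of_posts` is a hypothetical helper.

```python
def negative_share_of_posts(incidence, post_rate_negative, post_rate_neutral):
    """Fraction of all posts that describe an adverse experience."""
    negative_posts = incidence * post_rate_negative
    neutral_posts = (1 - incidence) * post_rate_neutral
    return negative_posts / (negative_posts + neutral_posts)

# Assumed rates: 5% incidence, 20% of affected patients post, 1% of others post.
share = negative_share_of_posts(0.05, 0.20, 0.01)
```

Under these assumptions roughly half of all posts describe an adverse experience, even though only one patient in twenty had one. A model reading that corpus sees about a tenfold overstatement of incidence.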

For pharma brand teams, the patient community content problem is twofold. Monitoring what patients are actually saying in those communities provides genuine pharmacovigilance and brand intelligence value. Understanding how that content is being incorporated into AI outputs provides a different, forward-looking signal: what will AI agents tell the next patient who asks about this drug, based on what the community has been saying?

Health Journalism and the Narrative Frame

Health journalism sets the narrative frame within which AI agents describe drugs to general audiences. When the New York Times publishes a piece framing Ozempic as a weight loss drug with a diabetes origin story, that framing becomes part of the content environment. When STAT News publishes an investigation into PCSK9 inhibitor payer restrictions, that framing enters the AI content ecosystem and persists in model outputs about the drug class.

Pharma companies have long engaged with health journalists through medical communications and public affairs, partly because media coverage reaches the public and partly because it shapes physician perception. In the AI context, media coverage has a third role: it is training data and retrieval material that directly influences AI-generated drug descriptions for years after publication.

A single investigative piece in a high-authority publication can shift AI outputs about a drug more durably than a sustained paid media campaign, because the piece is authoritative, retrievable, and repeatedly referenced in subsequent AI responses. This gives proactive medical communications — including company blog posts, clinical data press releases, and medical information websites — a different strategic value than they had in a world without AI retrieval systems.

Building an AI Brand Intelligence Operation

What the Function Looks Like in Practice

A pharmaceutical company building AI brand intelligence from scratch in 2025 needs to solve four operational problems: systematic query coverage, multi-model tracking, output classification, and integration with regulatory and commercial workflows.

Systematic query coverage means defining the query library. For a drug with multiple approved indications, the library might include 40 to 80 distinct queries mapped to the clinical decision points where prescribing occurs. Those queries need to cover patient-facing language (“what is the best drug for my condition”), physician-facing language (“first-line treatment algorithm for”), comparison queries (“X versus Y”), safety queries (“side effects of”), and access queries (“is X covered by Medicare”). Each query category produces different AI outputs and surfaces different risk and opportunity signals.
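The five query categories above can be generated from templates so that the library stays consistent across drugs. This is a sketch only: the template wording is adapted from the examples in the text, while `build_query_library` and the drug and condition names are hypothetical placeholders.

```python
# Templates adapted from the query categories described above.
TEMPLATES = {
    "patient": "what is the best drug for {condition}",
    "physician": "first-line treatment algorithm for {condition}",
    "comparison": "{drug} versus {competitor}",
    "safety": "side effects of {drug}",
    "access": "is {drug} covered by Medicare",
}

def build_query_library(drug, competitors, conditions):
    """Expand the templates into (category, query) pairs for one drug."""
    library = []
    for condition in conditions:
        for category in ("patient", "physician"):
            query = TEMPLATES[category].format(condition=condition)
            library.append((category, query))
    for competitor in competitors:
        query = TEMPLATES["comparison"].format(drug=drug, competitor=competitor)
        library.append(("comparison", query))
    for category in ("safety", "access"):
        library.append((category, TEMPLATES[category].format(drug=drug)))
    return library

# Hypothetical names for illustration.
library = build_query_library(
    "DrugX", ["DrugY", "DrugZ"], ["condition A", "condition B"]
)
```

Two conditions and two competitors yield eight queries here. Adding phrasing variants per template is one way a real library could reach the 40 to 80 range mentioned above.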

Multi-model tracking reflects the reality that different AI agents dominate different user populations. Perplexity has strong physician adoption. ChatGPT dominates patient consumer queries. Gemini has high penetration through Google Search integration. Microsoft Copilot reaches enterprise healthcare IT users. A brand intelligence function needs coverage across all four at minimum, because the responses are not identical and the populations they reach are not interchangeable.

Output classification requires a taxonomy that captures brand mention presence, sentiment, accuracy relative to approved labeling, competitor co-mentions, and the presence or absence of required safety information. This is where platforms like DrugChatter provide operational leverage — they automate classification at a volume that would require a large team to replicate manually, and they provide time-series data so that shifts in AI output can be tracked against content ecosystem events (new trial publication, adverse event news coverage, competitor label update).
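The taxonomy can be represented as one record per AI response. The sketch below is an assumption about how such a record might look, not a description of how any platform implements it: the field names, the `classify` function, and the keyword matching are all hypothetical. Sentiment and label accuracy are left unset because keyword matching cannot determine them.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Classification:
    """One classified AI response (hypothetical schema)."""
    brand_mentioned: bool
    competitor_mentions: list = field(default_factory=list)
    safety_terms_found: list = field(default_factory=list)
    sentiment: Optional[str] = None        # left for a reviewer or separate model
    label_accurate: Optional[bool] = None  # requires comparison against labeling

def classify(response, brand, competitors, required_safety_terms):
    """Fill in the fields that simple keyword matching can support."""
    lowered = response.lower()
    return Classification(
        brand_mentioned=brand.lower() in lowered,
        competitor_mentions=[c for c in competitors if c.lower() in lowered],
        safety_terms_found=[
            t for t in required_safety_terms if t.lower() in lowered
        ],
    )

required = ["boxed warning", "pancreatitis"]
result = classify(
    "DrugX is effective, though DrugY is an option. DrugX carries a boxed warning.",
    brand="DrugX",
    competitors=["DrugY", "DrugZ"],
    required_safety_terms=required,
)
# Safety terms the response should have included but did not.
missing = [t for t in required if t not in result.safety_terms_found]
```

The `missing` list is the part a medical affairs reviewer would look at first, since it flags responses that omit expected safety language.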

Integration with regulatory and commercial workflows is where most organizations currently struggle. AI brand intelligence data has implications for pharmacovigilance (patient-reported adverse events in AI queries), for medical affairs (accurate information about approved indications in AI outputs), for marketing (brand positioning in AI-generated drug comparisons), and for regulatory affairs (off-label information appearing in AI responses). These functions do not currently share a workflow for AI-sourced signals. Building that integration is a cross-functional organizational challenge, not primarily a technology challenge.

The Medical Affairs Role in the AI Content Ecosystem

Medical affairs has historically owned the “scientific narrative” for a drug — the evidence-based story about mechanism of action, clinical outcomes, and appropriate patient selection. In the AI content ecosystem, that narrative needs to be published in forms that AI agents can access and process.

High-quality, publicly available medical information pages on company websites are increasingly important not just as resources for physicians who visit them, but as crawlable content that feeds retrieval-augmented AI systems. A well-structured, evidence-rich, label-accurate medical information page is a contribution to the AI information environment for that drug. A sparse, legally cautious page that contains minimal clinical content is a missed opportunity.

The same logic applies to clinical data press releases, congress abstracts, and published systematic reviews. Medical affairs teams that think about publication strategy and data dissemination purely in terms of physician reach are leaving the AI influence channel unaddressed. The same activities that build physician knowledge also feed the AI content environment — but only if the content is structured, authoritative, and accessible to crawlers and retrieval systems.

When AI Outputs Go Wrong: The Response Playbook

When AI brand monitoring identifies a material problem — an AI agent consistently describing a drug as third-line when guidelines support first-line use, or including unsubstantiated safety claims derived from plaintiff litigation websites — what does a pharma company do?

The direct route is engaging AI platform providers through their content correction and misinformation reporting mechanisms. OpenAI, Google, and Anthropic all have processes for flagging factually incorrect health information. These processes are slow, not guaranteed to produce corrections, and do not address retrieval-based systems where the AI is accurately reflecting a problematic source. They are worth pursuing but cannot be the primary strategy.

The more durable route is content ecosystem intervention. If an AI agent is producing inaccurate outputs because it is drawing on low-quality or outdated sources, the answer is to improve the quality of high-authority sources about the drug. New trial publications, updated medical information pages, corrective communications to medical journals, and proactive engagement with health journalists who cover the drug all shift the content environment that AI agents retrieve from. This is a slower cycle than a regulatory letter, but it addresses the root cause rather than the symptom.

Legal and regulatory affairs teams need a position on when AI-generated misinformation about a company’s drug rises to the level of actionable harm. That position does not currently exist at most companies. Building it requires collaboration between medical, regulatory, legal, and commercial functions that the current organizational structure of most pharma companies does not naturally produce.

Case Analysis: Dupixent and Rinvoq in the Atopic Dermatitis AI Landscape

Dupilumab (Dupixent, Sanofi/Regeneron) and upadacitinib (Rinvoq, AbbVie) compete directly for patients with moderate-to-severe atopic dermatitis. The clinical debate between biologics and JAK inhibitors in dermatology is active and unresolved, with regulatory interventions on the JAK inhibitor side (FDA’s 2022 boxed warning on JAK inhibitors for malignancy, thrombosis, and cardiovascular risk) shaping the conversation significantly.

AI agents asked about atopic dermatitis treatment options reflect the weight of the FDA’s 2022 safety communication. Rinvoq, along with tofacitinib, baricitinib, and abrocitinib, appears in AI outputs with the boxed warning prominently described. Dupixent appears without equivalent safety language. This is accurate — the regulatory situation is what it is. But the framing in AI outputs consistently positions Dupixent as the lower-risk choice without the context that Rinvoq’s clinical efficacy data in head-to-head comparisons is strong, and that the boxed warning applies across JAK inhibitors regardless of their differing benefit-risk profiles in atopic dermatitis specifically.

For AbbVie, this is a genuine AI brand intelligence challenge. The AI outputs are not fabrications — they accurately reflect the boxed warning — but they do not reflect the nuanced clinical picture that the HEADS UP trial and other head-to-head data support. A medical affairs response that floods the content ecosystem with trial data, physician education content, and precise regulatory language about the warning’s scope is not just a scientific communication exercise. It is an intervention in the AI information environment that shapes what patients and physicians encounter when they ask about their treatment options.

For Sanofi and Regeneron, Dupixent’s strong AI position is a commercial asset that requires maintenance. The asset exists because the drug has an enormous evidence base, favorable regulatory language, and a long public history of positive media coverage. Maintaining it means continuing to generate high-quality published data, monitoring for content that might erode the favorable positioning, and ensuring that AI responses about Dupixent in new indications (chronic obstructive pulmonary disease, for which the drug received approval in 2024) are accurate and complete.

Competitive Intelligence via AI: What Monitoring Reveals About Rivals

AI brand monitoring produces competitive intelligence as a byproduct. When a pharma company tracks what AI agents say about its own drug, it also captures what AI agents say about competing drugs in the same queries. A query about first-line treatment for a given condition will return an AI response that mentions multiple drugs, frames them relative to each other, and assigns them positions in a clinical hierarchy. That output is a real-time competitive landscape.

This creates a new use case for competitive intelligence functions. Traditional competitive intelligence aggregates sales force data, market research, payer data, and medical literature to understand competitor positioning. AI-sourced competitive intelligence captures something different: the spontaneous, synthesized, population-level narrative that patients and physicians encounter when they search for information without already knowing what they are looking for.

A drug that appears consistently in AI outputs as a strong second-line option, even though the company’s own marketing positions it as first-line, has a positioning problem that paid media alone will not correct: as long as the AI information environment does not reflect the first-line positioning, patients and physicians will keep encountering the second-line framing. Conversely, a competitor drug that appears less frequently than its market share would suggest is either under-represented in training data or consistently ranked below other options by AI synthesis. Either case represents an opportunity for competitive repositioning in the content ecosystem.
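The comparison between how often a drug appears in AI outputs and what its market share would suggest can be sketched in a few lines. This is a minimal illustration, not a production method: the function names, the drug names (DrugA, DrugB, DrugC), the captured responses, and the market-share figures are all hypothetical, and simple substring matching stands in for the entity recognition a real system would need.

```python
from collections import Counter

def mention_share(responses, drugs):
    """Each drug's share of all drug mentions across the captured responses.

    A drug counts once per response that names it (case-insensitive substring match).
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for drug in drugs:
            if drug.lower() in lowered:
                counts[drug] += 1
    total = sum(counts.values())
    return {drug: (counts[drug] / total if total else 0.0) for drug in drugs}

def representation_gap(mentions, market):
    """Mention share minus market share; a negative value means under-representation."""
    return {drug: mentions[drug] - market[drug] for drug in mentions}

# Hypothetical AI responses and made-up market shares, for illustration only.
responses = [
    "First-line options include DrugA; DrugB is a reasonable second-line choice.",
    "Most guidelines start with DrugA.",
    "DrugA or DrugC may be considered.",
    "DrugB is typically used after DrugA fails.",
]
market_share = {"DrugA": 0.50, "DrugB": 0.20, "DrugC": 0.30}

mentions = mention_share(responses, list(market_share))
gaps = representation_gap(mentions, market_share)
for drug, gap in sorted(gaps.items(), key=lambda item: item[1]):
    print(f"{drug}: mention share {mentions[drug]:.2f}, gap {gap:+.2f}")
```

In this toy data, DrugC is the only drug whose mention share falls below its market share, which is the pattern the paragraph above describes as a repositioning opportunity. Mention frequency alone says nothing about how a drug is framed (first-line versus second-line), so a fuller analysis would also classify the position each response assigns.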

What the FDA Might Do Next

The FDA has been deliberately cautious about regulating AI-generated health information. The agency’s 2023 action plan on AI in healthcare focused primarily on AI as a medical device and on AI tools used by manufacturers in drug development — not on AI-generated patient-facing drug information. The gap is not going to remain unaddressed indefinitely.

The most likely near-term regulatory development is FDA guidance specifically addressing pharmaceutical company obligations when AI agents materially misrepresent approved drugs. The closest precedent is the agency’s guidance on third-party misinformation, which describes how a company may correct material misinformation about its drugs on platforms it does not control, and which frames that correction as voluntary rather than mandatory. The open questions are whether FDA will extend that framework to AI agents, whether it will harden from an option into an expectation, and what “acting” means in that context.

Congressional interest adds pressure. The House Energy and Commerce Committee’s 2024 hearings on AI in healthcare included testimony about AI-generated medical misinformation. Multiple senators have written to FDA requesting guidance on AI-generated drug information. The agency is aware that a regulatory gap exists. The shape of the guidance that fills it will determine the compliance obligations that pharma companies carry into 2026 and beyond.

Companies that build AI monitoring infrastructure now are not just managing current risk. They are building the data systems that will allow them to demonstrate compliance when compliance frameworks exist. A company that can show it has been systematically monitoring AI outputs about its drugs, flagging material inaccuracies, and taking documented corrective actions will be in a substantially better position than a company that begins that activity after guidance is issued.
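One way to picture the documentation such a program would produce is a timestamped record per monitored AI response, noting what was flagged and what was done about it. The sketch below is an assumption about what that record might contain; the class name, field names, and example values are hypothetical and do not reflect any FDA-specified format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

def _utc_now() -> str:
    """Current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()

@dataclass
class MonitoringRecord:
    """One monitored AI response about a drug (hypothetical schema)."""
    model: str                                 # which AI agent produced the output
    query: str                                 # the prompt that was issued
    response_excerpt: str                      # the portion of the output under review
    flagged_inaccuracy: Optional[str] = None   # reviewer's description, if any
    corrective_actions: List[str] = field(default_factory=list)
    captured_at: str = field(default_factory=_utc_now)

    def add_action(self, description: str) -> None:
        """Append a corrective action, prefixed with the time it was logged."""
        self.corrective_actions.append(f"{_utc_now()} {description}")

    def to_json_line(self) -> str:
        """Serialize to one JSON line, suitable for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)

# Illustrative values only.
record = MonitoringRecord(
    model="example-model",
    query="Is DrugA approved for condition X?",
    response_excerpt="DrugA is approved for condition X.",
    flagged_inaccuracy="States an indication that is not on the approved label.",
)
record.add_action("Escalated to medical information for review.")
print(record.to_json_line())
```

Writing each record as a single JSON line keeps the log append-only and easy to audit later, which matters more for this purpose than query convenience.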

ROI of AI Brand Intelligence

The business case for pharmaceutical AI brand intelligence rests on four distinct value streams, each with measurable financial implications.

The first is prescribing influence preservation. A drug that is correctly positioned in AI outputs at the point where physician decision-making occurs retains commercial value that would otherwise erode. The economic value of a physician prescribing a branded drug over a competitor in a given year is calculable from gross-to-net pricing data. If AI brand monitoring and content ecosystem intervention together move even 0.5 percent of treatment decisions, and revenue scales roughly in proportion to those decisions, the revenue impact on a drug with $3 billion in annual sales is $15 million.
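The arithmetic behind that figure is simple enough to write down, along with the break-even question it implies. This sketch assumes revenue scales linearly with the share of treatment decisions moved; the function names and the program cost used in the break-even example are hypothetical.

```python
def revenue_at_stake(annual_sales_usd: float, share_of_decisions_moved: float) -> float:
    """Revenue impact, assuming revenue is proportional to treatment decisions."""
    return annual_sales_usd * share_of_decisions_moved

def break_even_share(program_cost_usd: float, annual_sales_usd: float) -> float:
    """Share of treatment decisions that must move to cover the program's cost."""
    return program_cost_usd / annual_sales_usd

# Figures from the text: $3 billion in annual sales, 0.5 percent of decisions moved.
impact = revenue_at_stake(3_000_000_000, 0.005)
print(f"Revenue impact: ${impact:,.0f}")

# Hypothetical program cost of $1.5 million per year, for illustration only.
needed = break_even_share(1_500_000, 3_000_000_000)
print(f"Break-even share of decisions: {needed:.2%}")
```

Under the hypothetical cost, the program pays for itself if it moves 0.05 percent of treatment decisions, a tenth of the 0.5 percent used in the example above.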

The second is adverse event signal detection. Early identification of emerging adverse event patterns through AI query monitoring has a pharmacovigilance value that is harder to quantify but potentially larger. The cost of a late-identified safety signal — in regulatory action, litigation exposure, and commercial impact — frequently exceeds $100 million. A monitoring system that identifies an emerging patient-reported signal six months before it appears in MedWatch has substantial expected value.

The third is regulatory defense. Companies with documented AI monitoring programs are in a better position to demonstrate to FDA, in any future enforcement context, that they took reasonable steps to identify and address AI-generated misinformation about their drugs. The regulatory defense value of that documentation is meaningful even if it is not precisely calculable in advance.

The fourth is competitive intelligence efficiency. AI-sourced competitive intelligence supplements but does not fully replace traditional competitive intelligence methods. The marginal value of a real-time, continuous signal about how AI agents position competitive drugs relative to one another — available through a platform like DrugChatter — is real, even if the existing competitive intelligence function already covers most of the same ground through other channels.

Key Takeaways

- AI agents — including ChatGPT, Gemini, Perplexity, and Microsoft Copilot — are active participants in the drug selection process for both patients and physicians. Their outputs are not neutral; they reflect the volume, authority, and recency of content in their training and retrieval environments.

- Drugs with larger published evidence bases, more media coverage, and more active patient communities are better represented in AI outputs. This structural advantage compounds over time and operates independently of marketing spend.

- Retrieval-augmented generation systems update AI outputs in near-real time based on web sources, meaning that new trial publications, adverse event news, and plaintiff litigation websites can shift AI drug representations within days of publication.

- FDA has not yet issued specific guidance on pharmaceutical company obligations regarding AI-generated drug misinformation, but existing OPDP enforcement logic applies channel-agnostically. Regulatory guidance is likely within the next 24 months.

- Systematic AI brand monitoring — covering query library design, multi-model tracking, output classification, and integration with pharmacovigilance and regulatory workflows — is now a commercial and compliance necessity, not an experimental capability.

- The patient query patterns that flow through AI agents are a direct, unguarded signal about unmet communication needs, emerging tolerability concerns, and competitive positioning gaps that traditional market research does not reliably capture.

- Medical affairs teams that treat publication strategy and data dissemination purely as physician communication are missing the AI content ecosystem effect. High-quality, publicly accessible clinical content directly shapes what AI agents say about a drug.

FAQ

Q1: Is there a legal obligation today for pharmaceutical companies to correct what AI agents say about their drugs?

No explicit legal obligation currently exists specifically for AI-generated drug information. FDA’s existing OPDP authority covers what manufacturers say and distribute, not what third-party AI systems generate. FDA’s guidance on third-party misinformation describes how companies may correct material misinformation about their drugs on platforms they do not control, but it treats that correction as voluntary. Whether and how that framework extends to AI agents, and whether it will remain voluntary, has not been tested in enforcement. Most regulatory attorneys advise building monitoring and response capabilities now, on the basis that guidance will eventually formalize an obligation.

Q2: How often do AI agents produce genuinely inaccurate information about approved drugs, versus outdated or incomplete information?

The distinction matters operationally. True fabrication — AI agents inventing clinical data that does not exist — is relatively rare for well-known branded drugs, because abundant accurate information in training data suppresses hallucination. The more common problem is information that was accurate at a point in time and is now outdated (a drug described as lacking cardiovascular outcomes data after that data has been published), or information that is incomplete (a drug’s efficacy described without required safety disclosures). Incompleteness is the dominant problem, and it is the category most directly implicated by FDA’s existing promotional standards.

Q3: Can a pharmaceutical company’s own digital content — its website, medical information pages, press releases — directly influence what AI agents say about its drugs?

Yes, through retrieval-augmented generation systems specifically. RAG-based AI tools (Perplexity, ChatGPT with browsing, Gemini with Search Grounding) query live web content when generating responses. A company’s medical information page that appears in search results for a drug query can be retrieved and incorporated into AI outputs, so the content needs to be well-structured, clinically complete, and authoritative enough to rank in those results. Static training data is harder to influence directly, but continued publication of high-quality content in indexed, authoritative sources shapes the training data of future model generations.

Q4: How do AI outputs about a drug differ between patient-facing queries and physician-facing queries, and does that difference matter commercially?

They differ substantially in vocabulary, clinical specificity, and framing. Patient queries (“what does Keytruda do”) generate lay-language responses with emphasis on side effects and treatment duration. Physician queries (“pembrolizumab dosing in NSCLC with high PD-L1 expression”) generate technical responses referencing trial names, hazard ratios, and guideline recommendations. Both types of queries influence prescribing: patient queries through the informed patient effect (patients arriving with preferences), physician queries through clinical decision support. Monitoring needs to cover both, because the accuracy risks and the influence pathways differ between them.

Q5: What makes AI-sourced adverse event signals different from traditional MedWatch or spontaneous reporting signals, and should they be treated differently in pharmacovigilance?

AI-sourced signals — adverse events described in patient queries to AI agents, or in patient community posts that AI agents retrieve — are not formally structured adverse event reports. They lack the case completeness (patient age, dose, concomitant medications, outcomes) that regulatory databases require. They also have unknown denominator problems: a surge in patient queries about hair loss and a specific drug might reflect a true emerging signal or a social media amplification effect unrelated to actual incidence. Despite these limitations, they are an early-warning system. FDA’s real-world evidence framework does not currently address AI-sourced signals, but the agency’s direction in recent years has been toward incorporating diverse data sources into safety monitoring. Companies that are collecting and analyzing these signals have a pharmacovigilance infrastructure that positions them ahead of where regulatory standards are heading.
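A first-pass check on the denominator problem is to compare query rates instead of raw counts, so that a surge driven by overall growth in query volume is not mistaken for a signal. The sketch below is a minimal illustration with hypothetical counts and function names. It normalizes by query volume only; it does not recover the true denominator of patients exposed to the drug, and it cannot distinguish a real signal from social media amplification.

```python
def query_rate(topic_queries: int, total_queries: int) -> float:
    """Fraction of all queries about a drug that mention the topic of interest."""
    return topic_queries / total_queries if total_queries else 0.0

def rate_ratio(current_topic: int, current_total: int,
               baseline_topic: int, baseline_total: int) -> float:
    """Current-period rate divided by baseline rate; values near 1.0 mean no relative change."""
    baseline = query_rate(baseline_topic, baseline_total)
    if baseline == 0.0:
        raise ValueError("baseline rate is zero; ratio is undefined")
    return query_rate(current_topic, current_total) / baseline

# Hypothetical counts: hair-loss queries tripled, but so did all queries about the drug.
volume_driven = rate_ratio(current_topic=120, current_total=30_000,
                           baseline_topic=40, baseline_total=10_000)

# Hypothetical counts: hair-loss queries tripled while total volume stayed flat.
topic_driven = rate_ratio(current_topic=120, current_total=10_000,
                          baseline_topic=40, baseline_total=10_000)

print(f"Volume-driven surge ratio: {volume_driven:.2f}")
print(f"Topic-driven surge ratio: {topic_driven:.2f}")
```

Both scenarios show the same tripling in raw counts, but only the second shows a change in rate, which is the one worth routing to pharmacovigilance review.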