A look at the $50B opportunity reshaping how pharma companies launch, monitor, and defend their brands in the age of generative AI.

Sometime in late 2023, a brand manager at a mid-size oncology company noticed something strange. Patients calling into their medical information line were arriving pre-armed with clinical talking points — specific hazard ratios, subgroup data, off-label questions — that the company’s own reps had never emphasized. When the team traced the source, it wasn’t a competitor’s sales force. It was ChatGPT.

The patients had asked an AI chatbot about their cancer treatment options. The chatbot had answered. And its answer shaped what those patients expected from their oncologist before they ever walked into the office.

That brand manager’s company had no system to detect this. No monitoring. No visibility into what any large language model was saying about their drug. No way to know whether AI was recommending it, dismissing it, getting the dosing wrong, or conflating it with a biosimilar competitor.

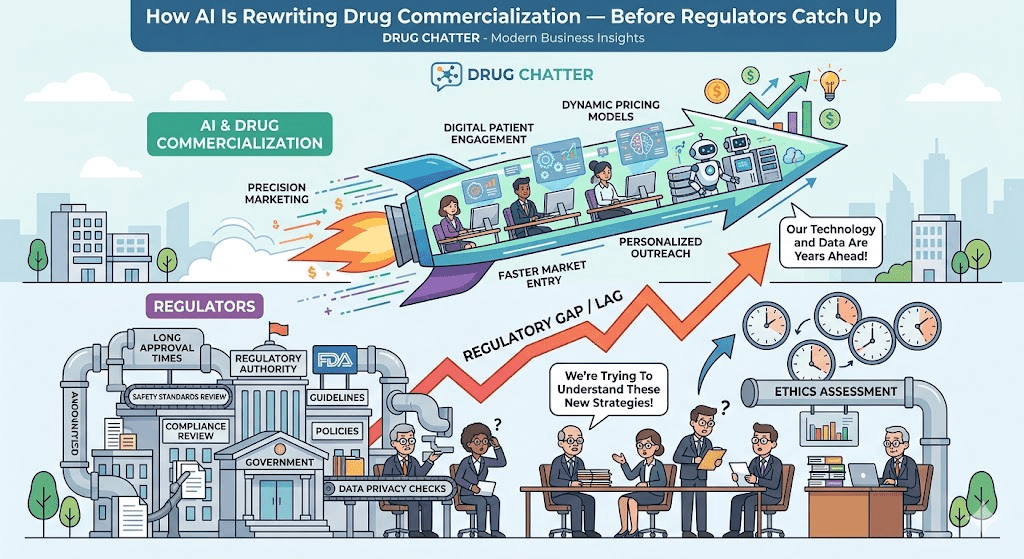

That gap is closing fast — and the companies closing it first will define what drug commercialization looks like for the next decade.

The Commercial Model That AI Is Dismantling

The pharmaceutical go-to-market playbook has been remarkably stable for forty years. You run a Phase III trial, publish in a major journal, build a sales force, detail physicians, run speaker programs, and hope your medical affairs team can contain off-label questions. Market research meant focus groups, advisory boards, and prescription data feeds from IQVIA.

That model assumed something that’s no longer true: that the primary gatekeepers of drug information were physicians, pharmacists, and the FDA-approved label.

Now there’s a new category of gatekeeper. Call it the AI layer.

By early 2025, over 60% of U.S. adults reported using an AI assistant for health-related questions at least monthly, according to a Rock Health survey. Physicians themselves are not immune. A 2024 study published in JAMA Internal Medicine found that doctors asked ChatGPT clinical questions at rates that would have seemed implausible three years ago — and rated its responses as satisfactory in a substantial fraction of cases.

This is not speculative. AI is already in the exam room, in the pharmacy, and in the patient’s bedroom at midnight when anxiety spikes and Google feels too blunt. The question pharma companies are only beginning to ask seriously is: what is the AI saying about our drug?

Three Channels Where AI Is Replacing Traditional Drug Touchpoints

Direct-to-Consumer Information

For most of the past twenty years, direct-to-consumer drug advertising was a television problem. You bought airtime, ran a branded spot with a pleasant voiceover and a thirty-second side-effect reel, and measured aided awareness. Digital complicated that with programmatic and social, but the basic structure held: you controlled the message.

Generative AI breaks the structure entirely.

When a patient with newly diagnosed Type 2 diabetes asks Claude or ChatGPT to compare SGLT2 inhibitors, they get a synthesized response drawn from clinical literature, package inserts, and whatever training data the model ingested. That response might accurately represent the clinical picture. It might overweight a study that favored a competitor. It might repeat a safety signal from a 2019 FAERS report that was later contextualized. The patient has no way to know the difference.

Pharma companies have no way to know what’s being said unless they build systems to ask.

DrugChatter, one of a handful of purpose-built tools now targeting this problem, monitors AI-generated responses about specific drugs across multiple language model platforms. The pitch is simple: if a competing molecule is being described more favorably by the three AI systems your patients are actually using, your commercial team should know that before they see it in prescription data six months later.

The latency problem matters here. Traditional market research operates on quarterly cycles. AI-driven brand surveillance can surface a shift in AI-generated drug characterization within days. That speed changes the response calculus for medical affairs and regulatory teams alike.
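As a rough illustration of what "surfacing a shift within days" could mean in practice, the sketch below compares the most recent week of scores with the week before it. The daily favorability scores, the seven-day window, and the 0.15 threshold are all assumptions made for the example, not features of any particular monitoring tool.

```python
from statistics import mean

def detect_shift(daily_scores, window=7, threshold=0.15):
    """Return True when the mean of the latest `window` scores differs from
    the mean of the preceding `window` scores by more than `threshold`."""
    if len(daily_scores) < 2 * window:
        return False  # not enough history to compare two full windows
    recent = daily_scores[-window:]
    baseline = daily_scores[-2 * window:-window]
    return abs(mean(recent) - mean(baseline)) > threshold

# Hypothetical daily favorability scores in [0, 1] for one brand on one platform.
week = [0.70, 0.72, 0.69, 0.71, 0.70, 0.73, 0.70]
stable = week + week
dropped = week + [0.50] * 7

print(detect_shift(stable))   # False: both weeks look the same
print(detect_shift(dropped))  # True: the latest week fell by about 0.2
```

A quarterly research cycle would see the same drop only as one blended number; a daily series makes the change visible as soon as a full window has accumulated.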

HCP Decision Support

Physicians are time-constrained in ways that are almost comical. The average primary care visit runs eleven minutes. Cardiologists managing a complex patient on five medications don’t have time to re-read the EMPEROR-Reduced trial before each prescribing decision.

AI-based clinical decision support is filling that gap — at a pace that has surprised even enthusiasts. Epic’s embedded AI tools, Microsoft’s partnership with Nuance for ambient clinical documentation, and a growing range of point-of-care AI tools are all, in various ways, surfacing drug-relevant information at the moment of prescribing.

The opportunity for pharma companies is significant and largely unaddressed. If a clinical AI tool is systematically underweighting your drug’s outcomes data when presenting treatment options to cardiologists — whether because of a training data artifact, a guideline lag, or a competitor’s more aggressive publication strategy — that’s a commercial problem. It’s also potentially a patient safety problem, depending on the clinical context.

What’s novel about the AI layer is that it’s not static like a printed formulary. LLMs are updated, fine-tuned, and retrained. A drug that scores well in today’s AI-generated treatment summary could score worse after the next model update. Monitoring for this requires a kind of commercial vigilance that most pharma organizations haven’t built.
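One simple way to watch for this kind of drift is to record the order in which drugs are first mentioned in a response before and after a model update. The sketch below does that with plain string matching. First-mention order is a crude proxy for how a drug "scores", and the drug names and responses are placeholders, so treat this as an illustration rather than a validated metric.

```python
def mention_order(response, drugs):
    """Return the drugs that appear in `response`, ordered by first mention."""
    text = response.lower()
    positions = {d: text.find(d.lower()) for d in drugs}
    found = [d for d in drugs if positions[d] >= 0]
    return sorted(found, key=lambda d: positions[d])

def rank_changes(before, after, drugs):
    """Map each drug to (rank_before, rank_after); None means not mentioned."""
    order_before = mention_order(before, drugs)
    order_after = mention_order(after, drugs)

    def rank(order, drug):
        return order.index(drug) + 1 if drug in order else None

    return {d: (rank(order_before, d), rank(order_after, d)) for d in drugs}

# Placeholder responses to the same question from two model versions.
drugs = ["DrugA", "DrugB", "DrugC"]
before = "Options include DrugA, DrugB, and DrugC."
after = "Options include DrugB and DrugC."

changes = rank_changes(before, after, drugs)
print(changes["DrugA"])  # (1, None): listed first before, absent after
print(changes["DrugB"])  # (2, 1)
```

Running the same comparison on a fixed question set after every known model release gives a before-and-after record that a one-off audit cannot.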

Payer and Formulary AI

Payer organizations are experimenting with AI-assisted formulary management, prior authorization review, and utilization management. This is early-stage but accelerating. Several large PBMs have announced AI pilots for prior authorization workflows, and at least two Blue Cross Blue Shield regional plans used AI-assisted clinical review tools for specialty drug authorization in 2024.

The implication is that clinical evidence summaries generated by AI may influence formulary tier placement for high-cost specialty drugs — a commercial outcome worth hundreds of millions of dollars for a successful biologic.

Pharma companies that can monitor what AI systems are saying about their drugs in payer contexts — and identify gaps between AI-generated evidence summaries and the full clinical data package — have a defensible advantage in formulary negotiations.

The Regulatory Problem Nobody Has Fully Solved

Here’s the part that keeps regulatory affairs teams awake.

The FDA regulates drug promotion. The FTC regulates advertising. HIPAA governs patient data. But none of these frameworks were designed for a world where an AI chatbot with no pharma industry affiliation — and no legal relationship with any drug company — spontaneously generates clinical information about branded drugs and delivers it to millions of patients.

This creates two distinct problems.

The first is third-party liability exposure. If an AI system gives a patient incorrect dosing information about your drug, can the drug company be held responsible? Current legal precedent suggests no — the AI developer is the relevant party. But that answer is legally unsettled, and plaintiffs’ attorneys are watching the space carefully. Several product liability scholars have argued that drug companies that are aware of systematic AI misinformation about their products and take no steps to correct it could face novel theories of liability as case law develops.

The second problem is promotion-by-inference. FDA’s regulations on off-label promotion are strict and well-established. But what happens when an AI system, trained partly on scientific literature and conference proceedings, routinely describes off-label uses of a drug in clinical detail? The AI is not the drug company. The drug company did not sponsor the training. But the effect on prescribing behavior may be functionally similar to what the FDA prohibits in direct promotional contexts.

The FDA held a public meeting on AI in drug promotion in October 2024. Its preliminary conclusions were cautious: the agency acknowledged the gap between existing regulatory frameworks and AI-generated drug content but stopped short of issuing specific guidance. A proposed rule is expected at some point in 2025 or 2026, though the timeline has already slipped once.

For pharma companies, the practical implication is to treat AI monitoring as a regulatory activity, not just a commercial one. Having documented surveillance of AI-generated drug content creates a defensible record if regulatory questions arise later. Not having it creates exposure.

<blockquote> ‘AI is now a primary information source for patients making treatment decisions, yet pharma companies have almost no visibility into what AI systems are saying about their drugs. That’s both a commercial gap and an emerging compliance risk.’ (Endpoints News, citing a 2024 survey of pharma regulatory affairs executives, n=312) </blockquote>
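A documented surveillance record could be as simple as a timestamped, hashed entry for every captured AI response. The sketch below is one possible shape for such an entry. The field names and the choice of SHA-256 are assumptions for illustration, and nothing here reflects a regulatory requirement.

```python
import hashlib
import json
from datetime import datetime, timezone

def make_audit_record(platform, question, response, captured_at):
    """Build a record of one captured response plus a hash of its contents."""
    payload = {
        "platform": platform,
        "question": question,
        "response": response,
        "captured_at": captured_at.isoformat(),
    }
    # Hash a canonical JSON form so later edits to any field are detectable.
    canonical = json.dumps(payload, sort_keys=True, ensure_ascii=False)
    payload["sha256"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return payload

def verify_audit_record(record):
    """Recompute the hash over every field except the stored hash itself."""
    body = {k: v for k, v in record.items() if k != "sha256"}
    canonical = json.dumps(body, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest() == record["sha256"]

record = make_audit_record(
    platform="ExamplePlatform",               # placeholder name
    question="What is the usual dose of DrugA?",
    response="(captured response text)",
    captured_at=datetime(2025, 1, 15, tzinfo=timezone.utc),
)
print(verify_audit_record(record))  # True
```

Storing the hash alongside the text lets a team show later that the captured response has not been altered since the capture date.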

How Brand Share Plays Out in AI Outputs

Traditional share-of-voice measurement counted physician detailing calls, journal advertising placements, speaker program reach, and digital impressions. The metric was an input measure: how much promotional noise are you generating relative to competitors?

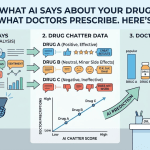

AI-era brand monitoring requires a fundamentally different approach. What matters is not how much you’re saying, but what the AI is synthesizing from the available evidence base — and how that synthesis stacks up against what your commercial team wants physicians and patients to believe about the drug.

Consider a concrete example. In the GLP-1 receptor agonist space, Novo Nordisk’s semaglutide (Ozempic, Wegovy) and Eli Lilly’s tirzepatide (Mounjaro, Zepbound) are locked in the most commercially consequential head-to-head battle in metabolic disease since the statins. Both companies have invested heavily in clinical data generation. The SURMOUNT and SURPASS trial programs for tirzepatide, and STEP and SELECT for semaglutide, have produced a rich clinical literature.

When patients and physicians ask AI systems to compare these drugs, the quality, recency, and framing of the underlying evidence becomes commercially decisive. A language model that has ingested the SELECT cardiovascular outcomes data for semaglutide but hasn’t yet incorporated the most recent tirzepatide real-world evidence will produce a response that favors semaglutide on cardiovascular outcomes — not because of any promotional intent, but because of a training data lag.

That lag is real commercial risk for Lilly, and real commercial opportunity for Novo Nordisk — entirely apart from anything either company has done in traditional promotion.

Monitoring this requires asking AI systems the same questions your patients and physicians are asking, systematically and repeatedly, and analyzing the outputs for accuracy, completeness, and competitive framing. This is what DrugChatter and similar tools do, and why pharma companies with large commercial stakes in contested therapeutic categories are paying attention.
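To make "analyzing the outputs for accuracy, completeness, and competitive framing" concrete, here is a minimal sketch of the completeness and competitor-mention part of that analysis. It uses plain keyword matching, which is far cruder than what a production tool would need, and the response text, required facts, and drug names are all invented for the example. It is not a description of how DrugChatter or any other product works.

```python
def score_response(response, required_facts, competitors):
    """Score one AI response against a checklist of facts it should contain."""
    text = response.lower()
    missing = [f for f in required_facts if f.lower() not in text]
    covered = len(required_facts) - len(missing)
    return {
        # Share of required facts that appear somewhere in the response.
        "completeness": covered / len(required_facts) if required_facts else 1.0,
        "missing_facts": missing,
        # Competitor products named in the same answer.
        "competitors_mentioned": [c for c in competitors if c.lower() in text],
    }

# Hypothetical captured response and checklist.
response = (
    "DrugA lowered cardiovascular events in its outcomes trial. "
    "DrugB is another option."
)
result = score_response(
    response,
    required_facts=["cardiovascular", "once weekly"],
    competitors=["DrugB", "DrugC"],
)
print(result["completeness"])           # 0.5
print(result["missing_facts"])          # ['once weekly']
print(result["competitors_mentioned"])  # ['DrugB']
```

Accuracy checking is the harder part and usually needs human medical review; a checklist like this only narrows down which responses a reviewer should read first.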

Voice of the Customer in the AI Age

Patient centricity became a pharma industry mantra around 2015. Every company announced patient advisory boards, journey mapping exercises, and experience research initiatives. Most of this work was expensive, slow, and produced insights that arrived six months after the commercial question had moved on.

AI changes the economics of voice-of-customer research in ways that are not fully appreciated yet.

When patients interact with AI systems about their conditions and treatments, they generate language. They ask questions. They describe symptoms, concerns, and priorities in their own words. That language, at scale, is the most accurate patient insight data the industry has ever had access to — and it’s being generated continuously, not in an annual advisory board meeting.

The challenge is access. The conversations patients have with AI systems are private, and they should remain so. But there are two legitimate paths to this data.

The first is simulation. Pharma companies can run structured research in which they ask AI systems the questions their patients are most likely to ask, analyze the outputs, and use the linguistic patterns as a proxy for patient priority signals. If AI responses about a particular drug keep returning to adherence difficulty and side effect management, that’s a product development and patient support signal that would have taken two years of focus groups to surface using traditional methods.

The second is consent-based data collection. Several patient advocacy organizations and digital health platforms are developing frameworks for consented, de-identified sharing of AI interaction data for research purposes. This is nascent, legally complex, and involves real privacy considerations — but it’s coming.

Either way, the companies that build systematic voice-of-customer AI monitoring now will have a structural advantage in patient insight within three years. This is not a speculative claim; it follows directly from where patients are already going for health information.

The Medical Affairs Opportunity

Medical affairs organizations have spent the past decade trying to prove their commercial value. The traditional argument — we build KOL relationships, we run advisory boards, we support publications — has always been difficult to quantify in dollar terms that satisfy CFOs.

AI gives medical affairs a specific, high-value, and measurable function: ensuring that the scientific record about a drug is accurately represented in AI systems.

This isn’t a promotional activity in the regulatory sense. It’s the scientific mission that medical affairs was always supposed to serve. If a language model’s representation of your Phase III data is incomplete, outdated, or distorted by a biased corpus, a medical affairs team can do something about it — by ensuring that high-quality scientific content is published, indexed, and accessible to the data pipelines that train and update AI systems.

Publication strategy becomes AI strategy. The traditional medical affairs calculus was: publish in high-impact journals, get cited, build the scientific narrative. That calculus now has a new output variable: are AI systems finding and correctly weighting this literature?

Some evidence suggests that AI systems are disproportionately influenced by publications in journals that are well-indexed in the databases these systems preferentially train on. A landmark study published in a specialized subspecialty journal may be less likely to reach AI training pipelines than a more accessible publication in a general medicine journal. Medical affairs teams that understand these dynamics can shape publication strategy accordingly.

This is not manipulation of AI systems. It’s the same scientific communication work medical affairs has always done — applied to a new information channel.

Real-World Evidence and AI: A New Commercial Weapon

Real-world evidence has been building its case for commercial relevance for years. FDA’s 2018 Real-World Evidence Program, subsequent guidances, and the growing willingness of payers to accept RWE in formulary negotiations have all pushed the field forward.

AI accelerates the RWE cycle in ways that compress timelines from years to months.

Traditional RWE generation required manual chart abstraction, claims data licensing negotiations, IRB approvals, and months of statistical analysis. AI-assisted RWE platforms — several of which have achieved significant scale in the past two years, including Flatiron Health’s AI-enhanced oncology analytics and Komodo Health’s claims intelligence tools — can generate comparative effectiveness evidence at speeds that were impossible in 2020.

For drug commercialization, faster RWE has two direct implications.

First, it means formulary conversations can be armed with comparative effectiveness data sooner after launch. A drug approved in Q1 can have a preliminary real-world comparative effectiveness analysis ready for Q3 payer negotiations, instead of waiting eighteen months for traditional RWE to generate.

Second, it means competitors can use the same tools against you. A competitor that launches six months after your drug can commission AI-assisted RWE analysis and potentially arrive at formulary meetings with more current real-world data than you have, even though they’ve been on market less time.

Any single speed advantage is temporary, because competitors can commission the same analyses. The lasting edge goes to the first movers who build the capability and keep using it.

What the GLP-1 Wars Teach Us About AI Commercial Strategy

No therapeutic category illustrates the AI commercial dynamic more clearly than GLP-1 receptor agonists.

Demand for semaglutide and tirzepatide has been so intense, and patient curiosity so high, that these drugs generate more AI-directed questions than almost any other pharmaceutical category. Every week, millions of people ask AI systems whether they qualify for a GLP-1, what the side effects are, how the drugs compare, and whether compounded versions are safe.

The compounding question is instructive. While semaglutide and tirzepatide were on FDA’s drug shortage list, beginning in 2022, compounding pharmacies legally produced copies of these drugs. FDA declared the tirzepatide shortage resolved in late 2024 and the semaglutide shortage resolved in early 2025, meaning compounded versions lost their shortage-based legal footing.

But AI systems trained before these regulatory changes were still answering patient questions about compounded GLP-1s with outdated information months after the legal landscape had shifted. Patients were receiving AI-generated information suggesting compounded semaglutide remained a legal option when, in fact, FDA enforcement actions against compounders were already underway.
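A monitoring program can catch this kind of staleness by keeping a list of statements that stopped being true on a known date and checking each captured response against it. The sketch below shows the idea with one regular expression. The pattern, the date, and the drug name are hypothetical placeholders, not a record of any actual regulatory action.

```python
import re
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class StaleClaim:
    pattern: str         # regex describing the outdated statement
    superseded_on: date  # date the underlying fact changed
    note: str            # what changed, for the reviewer

def find_stale_claims(response, claims, as_of):
    """Return claims whose pattern appears in `response` and whose
    superseding date is on or before `as_of`."""
    return [
        c for c in claims
        if c.superseded_on <= as_of
        and re.search(c.pattern, response, re.IGNORECASE)
    ]

# Hypothetical watch list with a placeholder date.
claims = [
    StaleClaim(
        pattern=r"\bis (currently )?in shortage\b",
        superseded_on=date(2025, 1, 1),
        note="hypothetical: shortage designation ended",
    )
]
response = "DrugA is currently in shortage, so compounded versions are available."

flagged = find_stale_claims(response, claims, as_of=date(2025, 6, 1))
print([c.note for c in flagged])  # ['hypothetical: shortage designation ended']
```

The same response checked with an `as_of` date before the change would not be flagged, which keeps historical captures from being marked wrong in hindsight.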

This is not a hypothetical commercial risk. Novo Nordisk filed litigation against compounders specifically on trademark and safety grounds. The company’s regulatory and commercial teams were deeply invested in the distinction between branded Ozempic/Wegovy and compounded semaglutide.

If AI systems were systematically treating these products as equivalent — or even subtly blurring the distinction — that was a commercial and regulatory problem that existed entirely outside traditional monitoring frameworks. Companies that built AI-specific surveillance caught this. Companies that relied on traditional brand monitoring didn’t.

The Biosimilar Challenge: AI as a Generic Equalizer

Biosimilar competition is the single most significant commercial risk for large biologic franchises. The patent cliff for many blockbuster biologics, including adalimumab (Humira), etanercept (Enbrel), and the oncology checkpoint inhibitors, is producing waves of biosimilar entrants, some already on the market and others still to come, that are transforming the economics of immunology and oncology.

AI may accelerate biosimilar substitution in ways that the industry has not fully modeled.

Traditional biosimilar adoption required physician-level acceptance, payer step-edit policies, and pharmacy-level substitution protocols. These are slow processes. Physician conservatism and patient loyalty to established brands built significant switching friction.

AI-generated drug information may erode that friction in two ways.

First, AI systems tend to describe biosimilars as therapeutically equivalent to their reference products — because that’s what the clinical and regulatory literature says. A patient asking an AI system whether it’s okay to switch from Humira to Hadlima (Samsung Bioepis’s adalimumab biosimilar) will likely receive a response that characterizes them as clinically equivalent, which is the FDA’s position and the scientific consensus.

For AbbVie’s commercial team, that AI-generated equivalence framing is commercially costly. The company has invested billions in Skyrizi and Rinvoq, differentiated molecules that an AI system cannot describe as equivalent to adalimumab biosimilars. But to the extent patients ask AI about biosimilar switching before talking to their physician, the AI response shapes the conversation.

Second, AI has dramatically reduced the information asymmetry between biosimilar manufacturers and reference product sponsors. A small biosimilar company without a large MSL force can now reach prescribers through AI-indexed scientific literature that gets surfaced to the same clinical AI tools large pharma companies are trying to influence.

The practical implication for reference product sponsors: monitor what AI says about the equivalence (or non-equivalence) of your biologics and their biosimilar competitors. Ensure that nuances in the clinical data — subgroup analyses, immunogenicity profiles, formulation differences — are captured in AI-accessible scientific literature.

Sales Force Transformation: AI as the New Rep

The pharmaceutical sales force has been contracting since its peak in the mid-2000s. The industry employed roughly 102,000 sales representatives in the U.S. in 2005. By 2023, that number had fallen to approximately 60,000, with further contraction expected.

AI is not simply accelerating headcount reduction. It’s changing what the remaining sales force does.

The standard rep-physician interaction is increasingly rare. Physicians in large health systems are often subject to no-see policies. Hospital-based prescribers work in environments where pharmaceutical reps have limited or no access. The average number of face-to-face rep-physician encounters per physician per year has declined by more than 50% since 2015.

AI-native commercial models — where AI handles routine product information, formulary questions, and medical information follow-up, while human specialists focus on complex clinical conversations — are already operating in pilot form at several large pharma companies. AstraZeneca, Pfizer, and Novartis have each announced AI commercial pilots in the past two years, though specifics vary.

The medical science liaison function is particularly interesting. MSLs have traditionally been high-cost, high-expertise field personnel who handle scientific questions too complex for commercial reps. AI can now handle a significant fraction of the queries that previously required an MSL — standard clinical questions, comparative data requests, dosing scenarios.

What AI cannot do, for now, is replace the trusted scientific relationship that a senior MSL builds with a key opinion leader over years of substantive engagement. The MSL role is likely to bifurcate: routine inquiries go to AI, complex relationship-driven scientific exchange remains human.

For commercial planning, this means MSL productivity can be dramatically increased if organizations stop deploying them on queries that AI can handle. The companies that figure this out will redeploy MSL resources to the highest-value scientific conversations and see measurable impact on formulary positioning and KOL advocacy.

Competitive Intelligence in the AI Age

Traditional competitive intelligence in pharma relied on conference monitoring, publication tracking, pipeline databases like Clarivate’s Cortellis, and field force reporting from rep-to-manager observations. Good, but slow. And blind to AI-layer dynamics.

AI-era competitive intelligence adds a new layer: monitoring what AI says about competitor drugs.

If a competitor’s new drug launches and AI systems immediately characterize it as a class-leading option based on Phase III data — even before the brand team has built its counter-narrative — you need to know that in week one, not month six. The AI’s representation of comparative efficacy will shape HCP expectations before your medical affairs team can arrange advisory board discussions.

This is the specific use case that tools like DrugChatter are designed to address. Systematic, repeatable querying of AI systems across competitive therapeutic landscapes, with structured analysis of how drugs are characterized in relation to each other, provides competitive intelligence that traditional methods cannot generate.

It’s also intelligence that feeds upstream into clinical development strategy. If AI systems in a particular indication consistently prioritize certain endpoints or patient populations when evaluating drug options, that’s a signal about how your Phase III design should be optimized to generate evidence that AI systems will surface prominently.

Privacy, Ethics, and the Guardrails That Need to Exist

Any serious discussion of pharmaceutical AI commercial strategy has to include the guardrails.

Pharma’s history with aggressive marketing practices — direct-to-consumer advertising that overstated efficacy, off-label promotion settlements, manipulation of clinical trial publication — creates real obligations when thinking about AI commercial applications.

The ethical framework is not complicated, but it requires explicit commitment.

Monitoring what AI says about drugs is legitimate commercial intelligence. Attempting to manipulate AI outputs — gaming training pipelines, coordinating with AI developers to bias system responses, or creating artificial content designed to shift AI characterizations — is not. It’s also, depending on the mechanism, potentially a violation of FTC regulations on deceptive advertising and FDA regulations on drug promotion.

The line between legitimate scientific communication and AI manipulation is real. Publishing rigorous clinical evidence, ensuring that evidence is accessible in well-indexed scientific repositories, and engaging transparently with AI developers on medical accuracy are all legitimate activities.

Seeding AI systems with promotional content disguised as scientific information, or creating large volumes of web content designed to bias AI training data, are not.

Privacy is the other critical dimension. Patient data generated through AI health interactions is sensitive health information. Any system that aggregates, analyzes, or acts on this data needs to satisfy HIPAA where applicable, state-level health data privacy laws (several of which are stricter than HIPAA), and emerging AI-specific state privacy frameworks. Washington’s My Health My Data Act and similar legislation in several other states create obligations that reach beyond what pharma regulatory teams have historically needed to track.

Companies building AI commercial monitoring programs need privacy counsel engaged from the start, not as a compliance review at the end.

Building the AI Commercial Function: A Practical Framework

Pharma companies asking ‘where do we start’ have four concrete areas to address.

AI brand monitoring is the immediate priority. Stand up a systematic program to query major AI platforms — ChatGPT, Claude, Gemini, Perplexity, and the AI-assisted search tools embedded in Google and Bing — with the questions your patients and physicians are actually asking about your drugs. Analyze outputs for accuracy, completeness, competitive positioning, and safety information. Make this a monthly cadence at minimum for priority brands.

Medical affairs AI literacy is the cultural investment. Medical affairs teams need to understand how AI systems find, weight, and synthesize scientific literature. Publication strategy, congress communication, and data generation should all account for AI-layer visibility. That means working with medical communications agencies that understand how content gets indexed and surfaced by AI systems.

Regulatory surveillance is the compliance function. Track FDA and FTC regulatory developments on AI in drug promotion with the same rigor you apply to traditional promotion guidance. The regulatory landscape will shift, and companies that have documented their AI commercial practices — and can demonstrate they’ve been thoughtful about the ethical lines — will be better positioned when guidance arrives.

Competitive AI intelligence is the strategic function. Systematic monitoring of what AI says about competitor drugs, treatment guidelines, and clinical evidence should feed into brand planning, formulary strategy, and clinical development prioritization.

These aren’t four separate initiatives requiring four separate headcounts. In a mid-size pharma company, one well-resourced brand team with the right tools and a clear mandate can run all four as an integrated AI commercial monitoring function.
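The brand monitoring step in the framework above comes down to a grid of platforms and questions, run on a schedule. A minimal sketch of that grid and the monthly cadence check follows. The platform list comes from the text, while the questions, the 30-day interval, and the function names are assumptions for illustration. Actually sending the queries is left out because each platform has its own access terms and interfaces.

```python
from datetime import date, timedelta
from itertools import product

def build_query_plan(platforms, questions):
    """Pair every platform with every question."""
    return [{"platform": p, "question": q} for p, q in product(platforms, questions)]

def is_due(last_run, today, cadence_days=30):
    """A query is due if it has never run or the cadence interval has elapsed."""
    return last_run is None or (today - last_run) >= timedelta(days=cadence_days)

platforms = ["ChatGPT", "Claude", "Gemini", "Perplexity"]
questions = [  # hypothetical patient and physician questions
    "What are the common side effects of DrugA?",
    "How does DrugA compare with DrugB?",
]

plan = build_query_plan(platforms, questions)
print(len(plan))                                      # 8
print(is_due(date(2025, 1, 1), date(2025, 1, 31)))    # True: 30 days elapsed
print(is_due(date(2025, 1, 1), date(2025, 1, 15)))    # False
```

Keeping the question set fixed from month to month is what makes the results comparable over time; new questions can be added, but retiring old ones breaks the trend line.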

The Companies Already Getting This Right

Several companies have moved beyond exploration and are running operational AI commercial programs.

Pfizer built out AI commercial monitoring capabilities through partnerships with several AI analytics vendors beginning in 2023. The focus was initially on patient-facing AI interactions related to Paxlovid during the COVID-19 antiviral period. That was a high-stakes case because misinformation about drug-drug interactions was a genuine patient safety risk, and AI systems were sometimes getting those interactions wrong.

Genentech/Roche has invested in AI-assisted medical information response systems that help patients and physicians get accurate answers to questions about their oncology portfolio. The commercial and scientific rationale is intertwined: a physician who gets an accurate AI-assisted response to a clinical question about Tecentriq or Avastin is more likely to retain confidence in the brand than one who receives a muddled or outdated response from a general-purpose AI.

Pfizer’s Paxlovid experience also illustrates the regulatory dimension: when AI systems provided incorrect information about Paxlovid’s drug-drug interactions with common medications, including statins and certain blood thinners, there was meaningful patient risk. Having a system that detected and could correct these AI errors was not just commercial — it was a patient safety function.

Smaller specialty pharma companies — particularly in rare disease, where patients are desperate for information and AI is often one of their first stops — are finding that AI monitoring is proportionately even more valuable than in large markets. A rare disease with two thousand annual diagnoses doesn’t have the luxury of the awareness-building that blockbuster-scale marketing provides. Getting the AI-generated narrative right matters enormously when your total addressable physician population is a few hundred specialists.

What the Next Three Years Look Like

The trajectory is clear even if the specifics aren’t.

AI will become a primary drug information channel for patients and physicians over the next three years. The percentage of clinical questions answered by AI — currently significant and growing — will increase as AI tools become more deeply embedded in electronic health records, pharmacy systems, and patient-facing platforms.

Regulatory frameworks will catch up, but slowly. FDA will issue guidance on AI in drug promotion, probably in 2026. The guidance will likely distinguish between direct pharma engagement with AI systems (subject to existing promotion regulations) and third-party AI-generated content about drugs (currently outside FDA’s regulatory authority). Companies that have built defensible AI commercial practices before the guidance is issued will be in better shape than those scrambling to retrofit.

The competitive advantage of early movers will compress as the market matures. AI commercial monitoring will shift from a differentiated capability to a table-stakes function, the way digital marketing and CRM became table stakes over the course of the 2010s. The companies that build this capability now will have a two-to-three-year window where it generates outsized commercial return.

After that window closes, it’s the cost of doing business.

Key Takeaways

AI has already become a meaningful drug information channel for patients and physicians, and most pharma companies have no systematic visibility into what AI systems are saying about their drugs.

The commercial risks are specific: AI-generated mischaracterization of drug efficacy, safety, or competitive positioning; training data lags that disadvantage drugs with more recent evidence; biosimilar equivalence framing that erodes brand loyalty; and AI-assisted clinical decision tools that underweight your drug’s outcomes data.

The regulatory risks are emerging and not fully resolved: FDA’s existing promotion frameworks don’t cover third-party AI-generated drug content, but that gap is closing. Companies that document thoughtful AI commercial practices now will be better positioned when regulatory guidance arrives.

Medical affairs is the natural organizational home for AI commercial monitoring, because the core activity — ensuring accurate scientific representation of drugs in information channels — is the scientific mission medical affairs has always served.

The ethical lines are real and must be held: monitoring is legitimate, manipulation is not. The pharma industry’s credibility with regulators, physicians, and patients depends on maintaining that distinction clearly.

Tools like DrugChatter represent the early infrastructure for systematic AI brand monitoring. The companies investing in this capability now are solving a problem that will only grow as AI becomes more deeply embedded in clinical decision-making.

The question isn’t whether AI will reshape drug commercialization. It already is. The question is whether your brand team knows what the AI is saying about your drug today.

FAQ

Q: Can a drug company legally ask AI developers like OpenAI or Anthropic to correct inaccurate information about their drugs?

A: Yes, and several have. This is generally treated as a medical accuracy inquiry rather than a promotional one, and AI developers have technical processes for flagging systematic errors in medical content. The key is that requests must be grounded in documented factual inaccuracies, such as wrong dosing or contraindications that don’t reflect the approved label, rather than promotional preferences. Asking an AI developer to characterize your drug more favorably than the evidence supports would be a different matter entirely, and would likely run afoul of FDA’s rules against false or misleading prescription drug promotion. The distinction between ‘please correct a factual error’ and ‘please promote our drug’ is the same line that governs medical affairs-to-KOL interactions in traditional settings.

Q: How should pharma companies think about AI-generated content in the context of FDA’s off-label promotion restrictions?

A: This is one of the genuinely unsettled questions in pharmaceutical law right now. FDA’s off-label promotion restrictions apply to manufacturers and their agents — not to AI systems that independently synthesize clinical literature. A language model that describes an off-label use based on published scientific evidence is not legally equivalent to a company-sponsored promotional piece. However, if a company were found to have deliberately influenced AI training data to surface off-label uses, that could fall within FDA’s promotional authority. Companies should monitor AI-generated off-label content carefully, document that they haven’t directed it, and ensure that any company-generated communications that could influence AI outputs are reviewed by regulatory affairs teams applying the same standards as traditional promotional materials.

Q: What’s the difference between AI drug monitoring and traditional pharmacovigilance?

A: Pharmacovigilance is specifically about adverse event detection — collecting, analyzing, and reporting safety signals from post-market drug use. AI drug monitoring in the commercial sense described here is primarily about informational accuracy, brand positioning, and patient/physician information quality. The two can intersect. If an AI system is generating incorrect information about contraindications or drug interactions, that’s both a commercial monitoring concern and potentially a pharmacovigilance concern, since misinformation could lead to adverse events. Some companies are beginning to integrate AI monitoring outputs into their broader safety surveillance functions precisely because patient-facing AI misinformation represents a potential safety pathway. FDA has not yet issued guidance on whether AI-generated adverse event signals trigger the same pharmacovigilance reporting obligations as traditional AE reports, but this question is on the agency’s agenda.

Q: How do small and mid-size pharma companies access AI commercial monitoring capabilities without the budget of a Pfizer or Novartis?

A: The economics are actually more favorable than they look. Purpose-built AI drug monitoring tools, including DrugChatter, are priced as SaaS subscriptions rather than as enterprise analytics platforms, which makes them accessible to companies with a single priority brand and limited commercial budgets. The alternative, running manual AI queries across multiple platforms on an ad hoc basis, is possible but unreliable, because AI outputs vary by query phrasing, query timing, and model version. The value of systematic monitoring comes from consistency and comparability over time, not from a single query. For a specialty pharma company with a drug generating $200M or more in annual revenue, the commercial intelligence value of knowing what AI is saying about the drug, and catching mischaracterizations early, is worth the subscription cost many times over.
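
To make the consistency point concrete, here is a minimal Python sketch of what "systematic" could mean in practice: the same fixed panel of phrasings is put to the same set of models on each run, and every answer is keyed by phrasing, model version, and run date so that runs stay comparable. Everything named here is a hypothetical illustration, not a description of how DrugChatter or any other product works. The `ask_model` callable stands in for whatever client a team would actually use, and the model names, "DrugX", and the canned answers are placeholders rather than real model output.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Observation:
    """One answer from one model to one phrasing on one run date."""
    run_date: date
    model_version: str
    phrasing: str
    answer: str


def run_panel(
    ask_model: Callable[[str, str], str],
    model_versions: List[str],
    phrasings: List[str],
    run_date: date,
) -> List[Observation]:
    """Ask every model every phrasing, so each run covers the same grid."""
    return [
        Observation(run_date, model, phrasing, ask_model(model, phrasing))
        for model in model_versions
        for phrasing in phrasings
    ]


def changed_answers(
    earlier: List[Observation], later: List[Observation]
) -> List[Tuple[str, str]]:
    """List (model_version, phrasing) pairs whose answer differs between runs."""
    before: Dict[Tuple[str, str], str] = {
        (o.model_version, o.phrasing): o.answer for o in earlier
    }
    return sorted(
        (o.model_version, o.phrasing)
        for o in later
        # Pairs missing from the earlier run are skipped, not flagged.
        if before.get((o.model_version, o.phrasing), o.answer) != o.answer
    )


# Hypothetical stand-ins: canned answers instead of real model calls.
PHRASINGS = ["What is the usual dose of DrugX?", "How much DrugX should I take?"]
MODELS = ["model-a-v1", "model-b-v1"]


def stub_january(model: str, phrasing: str) -> str:
    return "10 mg once daily"


def stub_february(model: str, phrasing: str) -> str:
    # Pretend one model started giving a different answer to one phrasing.
    if model == "model-b-v1" and phrasing == PHRASINGS[1]:
        return "20 mg once daily"
    return "10 mg once daily"


january = run_panel(stub_january, MODELS, PHRASINGS, date(2025, 1, 6))
february = run_panel(stub_february, MODELS, PHRASINGS, date(2025, 2, 3))
print(changed_answers(january, february))
```

The exact-string comparison is deliberately crude. Real answers vary in wording from run to run, so a team would likely need human or clinical review to decide whether a flagged change is a substantive one. The point of the sketch is only that a fixed grid makes month-over-month comparison possible, which a one-off manual query does not.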

Q: What should a brand team actually do when they discover that AI systems are characterizing their drug inaccurately?

A: The response depends on the nature of the inaccuracy. For factual errors — wrong dosing, outdated safety information, incorrect mechanism of action — the first step is documenting the specific error with timestamped query logs, then engaging the AI developer’s medical accuracy team through official channels. Most major AI developers have processes for this. For competitive characterization issues — the AI consistently describing a competitor’s drug more favorably — the response is more nuanced. If the AI’s characterization reflects the current evidence base, the answer is to generate better evidence and ensure it’s accessible to AI training pipelines. If it reflects an evidence gap, that’s a signal for clinical development and publication strategy. Medical affairs teams should treat AI characterization gaps the same way they treat gaps in KOL awareness: as a scientific communication problem with a scientific communication solution.
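
As a rough illustration of the "timestamped query logs" step, the sketch below builds one evidence record per suspected factual error. It is an assumption about what such a record might contain, not a format that any AI developer or regulator requires. The field names, the platform and model labels, and the "DrugX" example are all hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def make_log_entry(
    platform: str,
    model_version: str,
    prompt: str,
    response: str,
    issue: str,
    captured_at: Optional[datetime] = None,
) -> dict:
    """Build one evidence record for a suspected factual error."""
    captured_at = captured_at or datetime.now(timezone.utc)
    entry = {
        "captured_at_utc": captured_at.isoformat(),
        "platform": platform,
        "model_version": model_version,
        "prompt": prompt,
        "response": response,
        "issue": issue,
    }
    # Fingerprint the exact prompt/response text so the captured wording
    # can later be checked against the record for alteration.
    digest_input = json.dumps(
        {"prompt": prompt, "response": response}, sort_keys=True
    ).encode("utf-8")
    entry["sha256"] = hashlib.sha256(digest_input).hexdigest()
    return entry


def format_log_line(entry: dict) -> str:
    """Serialize a record as one JSON line, suitable for an append-only file."""
    return json.dumps(entry, sort_keys=True)


# Hypothetical example: every value below is a placeholder.
entry = make_log_entry(
    platform="example-chatbot",
    model_version="model-b-v1",
    prompt="How much DrugX should I take?",
    response="20 mg once daily",
    issue="Dose does not match the approved label",
    captured_at=datetime(2025, 3, 1, 14, 30, tzinfo=timezone.utc),
)
print(format_log_line(entry))
```

Keeping the prompt verbatim matters because, as noted above, outputs vary with phrasing. A record that captures the platform, model version, exact prompt, and exact response gives the developer's team something they can try to reproduce.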