The pharmaceutical industry has spent decades building brand reputations through clinical trials, physician detailing, and tightly controlled messaging. That infrastructure still matters. But a new variable has entered the equation: AI-generated responses that millions of patients, caregivers, and clinicians consult daily, often without knowing the source.

When a patient asks ChatGPT whether Ozempic causes thyroid cancer, or when a nurse queries an AI assistant about Eliquis interactions before a procedure, the answer they receive is not drawn from a pharmaceutical company’s approved labeling. It is synthesized from web-crawled data, forum posts, news archives, and published literature, weighted by algorithms that no brand manager has access to. The answer may be accurate. It may be outdated. It may reflect a competitor’s framing. And unlike a Google search, the user often does not see a list of sources to cross-reference.

This is the drug brand reputation problem of 2025, and most pharmaceutical companies are not yet equipped to track it, let alone influence it.

Why AI Responses Are Not Neutral

Search engine optimization is a mature discipline. Pharmaceutical companies know how to structure their web presence to appear prominently in Google results. They know how to monitor social media sentiment and track share of voice across digital channels. None of that preparation directly translates to managing how large language models (LLMs) represent their products.

LLMs like GPT-4o, Claude 3.5, and Gemini 1.5 do not retrieve a ranked list of documents. They generate probabilistic text based on patterns in training data. (Answer engines such as Perplexity layer live search on top of a model of this kind, but the underlying model works the same way.) That training data is enormous, diverse, and temporally bounded, meaning the model’s knowledge reflects a snapshot of the world that may predate recent label updates, new clinical data, or resolved safety signals.

When semaglutide (Ozempic, Wegovy) became the most-searched weight-loss drug in 2023, AI platforms were fielding questions about it using training data that predated the FDA’s 2023 label update on ileus risk. Patients asking about side effects were receiving incomplete information — not because AI was malicious, but because training cutoffs lag real-world regulatory activity by months to years.

For brand managers, this matters for two distinct reasons. First, incomplete or outdated AI responses can shape patient expectations before they speak to a physician, creating misaligned perceptions that affect adherence and satisfaction. Second, if an AI consistently describes a drug in terms that align more closely with a competitor’s positioning — say, framing it as a ‘last resort’ rather than a ‘first-line option’ — that framing seeps into how patients and providers conceptualize the product without any corresponding paid media buy.

The Regulatory Dimension Pharma Cannot Ignore

The FDA’s Office of Prescription Drug Promotion (OPDP) has not yet issued formal guidance on AI-generated drug information. But the agency has been watching. In 2024, OPDP published a research report examining how consumers interpret AI-generated promotional content, and the findings were clarifying: users frequently cannot distinguish AI-generated drug information from FDA-approved labeling.

This creates a compliance surface that extends beyond what pharmaceutical companies publish directly. If a company’s own chatbot or AI tool generates promotional content about a drug, that content is subject to OPDP review standards. The regulatory expectation is that AI-generated promotional content must be:

- Fair-balanced (presenting risks alongside benefits)

- Consistent with FDA-approved labeling

- Not misleading through omission

What remains unresolved is whether third-party AI platforms — those not operated or sponsored by pharmaceutical companies — carry any regulatory liability when they generate inaccurate drug information. The current posture from OPDP is that third-party AI outputs are not the manufacturer’s responsibility to control, but that posture could shift as the agency develops its AI policy framework. A draft guidance on AI in drug promotion is expected to emerge from OPDP in 2025 or 2026, according to individuals familiar with the agency’s planning process.

Savvy regulatory affairs teams are not waiting for guidance to drop. They are already documenting how their products appear in AI responses, building evidence files that could support either proactive correction or regulatory defense. That documentation requires systematic monitoring — something that cannot be done manually at scale.

How AI Models Describe Drugs: A Taxonomy of Risk

Not all AI misrepresentation is equal. Based on the types of queries pharmaceutical brand teams are beginning to track, the risks cluster into four categories.

Outdated safety information. AI models trained before a labeling revision may describe a drug’s safety profile in terms that no longer reflect current FDA-approved language. The ileus risk added to GLP-1 receptor agonist labels in 2023 is one example. The 2024 updates to SGLT-2 inhibitor labels around serious infection risk are another. When patients ask AI about these drugs, models trained before those updates generate responses using outdated safety language.

Competitive repositioning by omission. LLMs draw on a vast training corpus that includes competitor press releases, advocacy group messaging, and media coverage. When asked to compare drugs, models may emphasize attributes that one competitor has historically promoted, inadvertently reproducing that competitor’s positioning. A model that consistently describes apixaban (Eliquis) as having ‘fewer food interactions’ than warfarin is technically accurate — but if the model also consistently describes dabigatran (Pradaxa) as ‘requiring dose adjustments in renal impairment’ without equivalent nuance about other agents, that selective emphasis shapes prescriber perception.

Litigation overhang. AI models trained on news archives capture the full arc of litigation coverage. Drugs that were the subject of mass tort litigation, even if the cases settled or were dismissed, carry that litigation history into AI responses. Zantac (ranitidine) remains a case study. The FDA requested the withdrawal of ranitidine products from the market in 2020, and subsequent scientific review cast doubt on the NDMA contamination theory, yet AI responses about Zantac frequently surface litigation-associated language that conflates regulatory action with proven causation. For brands that faced litigation and moved on, AI represents a persistent reputation liability.

Off-label use misdirection. LLMs can generate responses about off-label uses of drugs that pharmaceutical companies cannot legally promote. When a patient asks an AI about using a drug for an unapproved indication, the model may produce a medically plausible response drawing on published case studies or investigator-initiated research. The company cannot respond to that content in a promotional context. But if the AI response is inaccurate or incomplete, patients may pursue that use without appropriate medical supervision.
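The first category above, outdated safety information, lends itself to a simple first-pass check: compare each model's stated training cutoff with the date of the most recent label revision. The sketch below is illustrative only. The model names, cutoff dates, and revision date are hypothetical placeholders, and a retrieval-augmented system may still surface newer information despite an older cutoff.

```python
from datetime import date

def stale_for(model_cutoffs, label_revised):
    """Return the models whose training cutoff predates the label revision."""
    return sorted(
        name for name, cutoff in model_cutoffs.items() if cutoff < label_revised
    )

# Hypothetical cutoffs and revision date, for illustration only.
cutoffs = {"model-a": date(2023, 4, 1), "model-b": date(2024, 6, 1)}
revision = date(2023, 9, 1)

stale = stale_for(cutoffs, revision)
print(stale)  # ['model-a']
```

A flag from this check does not prove a response is wrong. It only identifies where sampled responses deserve a closer read against current labeling.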

Measuring AI Share of Voice: The New Brand Metric

Traditional pharmaceutical brand tracking uses metrics such as total prescriptions written (TRx), new-to-brand prescriptions (NBRx), share of voice in medical journals, and detailing coverage among target prescribers. These metrics are well-established and give commercial teams a grounded view of brand performance.

None of them capture what AI platforms say about drugs.

A new category of measurement is emerging. Call it AI share of voice: the proportion of AI-generated responses about a therapeutic area in which a given brand appears favorably, unfavorably, or not at all. Unlike traditional share of voice, AI share of voice is not purchased — it reflects the aggregate weight of training data and how models interpret it.
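As a rough illustration of the definition, AI share of voice can be computed as a set of proportions once each sampled response has been labeled. The sketch below assumes a human reviewer or classifier has already assigned one of three labels per response. The label names and sample data are hypothetical.

```python
from collections import Counter

# Hypothetical labels assigned to each AI response for one brand.
LABELS = ("favorable", "unfavorable", "absent")

def ai_share_of_voice(labels):
    """Return the proportion of responses falling into each category."""
    if not labels:
        raise ValueError("at least one labeled response is required")
    counts = Counter(labels)
    unknown = set(counts) - set(LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = len(labels)
    return {label: counts[label] / total for label in LABELS}

# Illustrative data only: ten responses to therapeutic-area queries.
sample = ["favorable"] * 4 + ["unfavorable"] * 1 + ["absent"] * 5
shares = ai_share_of_voice(sample)
print(shares)  # {'favorable': 0.4, 'unfavorable': 0.1, 'absent': 0.5}
```

Tracking "absent" separately matters here, because a brand that never appears in responses has a different problem from one that appears unfavorably.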

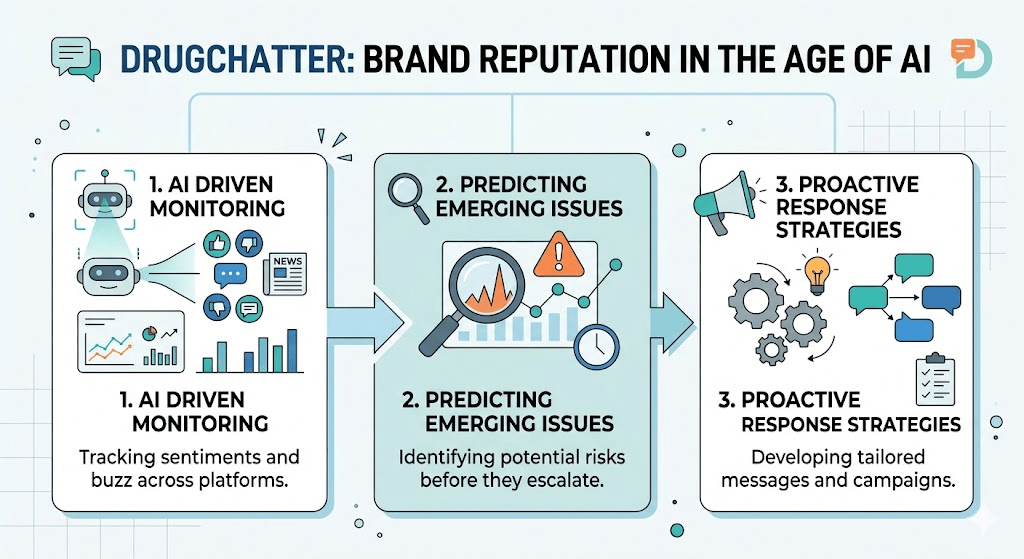

Platforms like DrugChatter are designed specifically to track this. By systematically querying AI platforms with the kinds of questions patients and providers actually ask — ‘What is the best treatment for atrial fibrillation with low bleeding risk?’ or ‘What GLP-1 is covered by most insurance plans?’ — and analyzing the generated responses, pharmaceutical companies can build a quantitative picture of how their brands are represented across the AI ecosystem.

The output of that tracking is actionable. If Brand X consistently appears in AI responses about treatment-naive patients but not in responses about patients who have failed first-line therapy, that tells a commercial team something specific about where AI is and is not working for them. It may indicate that published data on second-line use is sparse, that competitor data is more prominent in the training corpus, or that the brand’s own digital content is not structured in a way that AI models parse effectively.

<blockquote> ‘Roughly 60% of U.S. adults now use AI tools for health-related queries, up from 38% in 2022, according to a 2024 Rock Health Digital Health Consumer Adoption Survey, making AI-generated drug information a de facto first touchpoint for a majority of patients before they speak to a physician.’ </blockquote>

The Patient Journey Has Changed: AI Is Now the Front Door

Pharmaceutical companies have invested heavily in understanding the patient journey — the sequence of touchpoints a patient traverses from symptom awareness to diagnosis to treatment initiation. Digital health mapping firms have built entire practices around instrumenting this journey with data.

That journey now has a new first stop. Before a patient calls their doctor, searches a condition on WebMD, or visits a brand’s patient website, a growing share of them open ChatGPT or Perplexity and ask a question in natural language. The AI response they receive shapes what they believe before they enter any other touchpoint.

This has practical consequences for how pharmaceutical companies should think about content strategy. The traditional model — optimize for search rankings, seed patient communities with approved content, maintain a robust branded patient site — still has value. But it is no longer sufficient as a sole strategy for managing the information environment around a drug.

The reason is structural. LLMs do not crawl patient websites the way Google’s spider does. They ingest training data in bulk, at irregular intervals, through processes that pharmaceutical companies cannot directly influence or track. A brand’s patient site may be the highest-ranking organic result for a given search query while simultaneously being underrepresented in AI training data. The two channels are governed by entirely different logics.

This does not mean pharmaceutical companies are helpless. There are specific, evidence-based steps they can take to improve their representation in AI outputs — but those steps require understanding how LLMs actually work, which most pharmaceutical marketing teams currently do not.

How AI Models Learn About Drugs: The Training Data Problem

To understand why AI represents drugs the way it does, you need a basic grasp of how LLMs are trained. These models are pre-trained on enormous text corpora drawn from the public internet, books, and licensed datasets. The training data is assembled at a point in time and does not update continuously (with some exceptions for retrieval-augmented systems like Perplexity, which layer live search on top of a base model).

The composition of the training data determines what the model ‘knows.’ For pharmaceutical topics, training data includes:

- PubMed abstracts and full-text articles (when available)

- FDA press releases, drug approvals, and safety communications

- News coverage from general and trade publications

- Patient forum discussions on Reddit, PatientsLikeMe, and similar platforms

- Wikipedia drug articles (which are frequently cited in AI responses)

- Legal and litigation databases

- Company press releases indexed by search engines

What is notably absent from most training corpora: FDA-approved full prescribing information in structured form, proprietary patient support program data, real-world evidence datasets held by pharmaceutical companies, and internal medical information databases. The AI’s picture of a drug is therefore a reconstruction from public-domain sources, with all the skews and gaps those sources contain.

Wikipedia is worth special attention. Multiple studies of LLM outputs have found that Wikipedia content has disproportionate influence on AI-generated responses about named entities, including drugs. Wikipedia’s pharmaceutical articles are maintained by volunteer editors with varying levels of clinical training. They tend to over-represent safety concerns relative to efficacy data, because safety information generates more editorial activity (citations, controversies, rewrites). A drug that faced a high-profile safety signal, even one that was subsequently resolved, will have a Wikipedia article that reflects that signal prominently. And because Wikipedia articles are heavily indexed and frequently updated, they remain well-represented in AI training data across model generations.

Pharmaceutical companies can and do edit Wikipedia. Many maintain formal Wikipedia engagement programs, and the FDA has engaged with the Wikipedia community to improve accuracy on drug-related articles. But Wikipedia is not the whole picture, and it is not the only mechanism through which training data shapes AI outputs.

Real-World Cases: When AI Got It Wrong

The risks described above are not hypothetical. Several well-documented cases illustrate how AI misrepresentation of drugs has caused material brand and regulatory problems.

Paxlovid and rebound. When Pfizer’s nirmatrelvir/ritonavir (Paxlovid) launched for COVID-19 treatment in December 2021, early post-marketing reports described a ‘rebound’ phenomenon in which some patients experienced return of symptoms after completing the five-day course. This rebound signal was picked up extensively in news coverage and social media. When patients began querying AI platforms about Paxlovid in 2022 and 2023, responses frequently emphasized the rebound risk in ways that outweighed the established efficacy benefit. Subsequent analysis by the FDA and independent researchers found that rebound occurred at rates not substantially different from untreated patients, and that it did not indicate treatment failure. But by that time, the AI training corpus had already absorbed the early, alarming coverage. Physicians reported patients declining Paxlovid based on AI-sourced concerns about rebound.

SSRI discontinuation syndrome. A class-level example: queries about stopping antidepressants frequently generate AI responses that describe ‘discontinuation syndrome’ in language that conflates normal physiological effects of medication cessation with addiction or dependence. This characterization is medically contested. The FDA uses specific labeling language to distinguish antidepressant discontinuation effects from dependence, and professional guidelines are explicit that antidepressants do not cause addiction in the clinical sense. Yet AI responses consistently reproduce legacy framing from patient forums and early-generation news coverage that did not make this distinction carefully. For brands across the SSRI and SNRI class, this affects both initiation rates (patients reluctant to start a drug they fear they cannot stop) and adherence (patients stopping abruptly without physician guidance because they are afraid of ‘becoming dependent’).

Aduhelm (aducanumab) and the approval controversy. Biogen’s aducanumab received accelerated approval from the FDA in June 2021 under controversial circumstances: the agency approved the drug despite a negative recommendation from its own advisory committee. The coverage was extensive and critical, and it has remained in the training corpus. When patients or family members of Alzheimer’s patients query AI about aducanumab, they receive responses that prominently feature the approval controversy, the advisory committee dissent, and the CMS decision to restrict Medicare coverage to clinical trial participants only. That is factually accurate, but it is also a compressed version of a complex regulatory history that does not give equivalent weight to the subsequent clinical data or the eventual approval of lecanemab (Leqembi) and donanemab, which many researchers regard as support for the amyloid hypothesis. The AI framing of the Alzheimer’s drug class remains colored by the Aduhelm episode in ways that affect brand perception for all anti-amyloid therapies.

The Competitive Intelligence Angle

AI monitoring is not just about protecting your own brand. It is also a legitimate competitive intelligence tool.

If a competitor’s drug is consistently described by AI platforms in terms that reflect an unproven clinical advantage — say, a model that repeatedly characterizes a rival’s JAK inhibitor as ‘better tolerated’ than your product without citation to head-to-head data — that is actionable intelligence. It tells your medical affairs team where to direct publication efforts. It tells your market access team what narratives physicians may be encountering. And it tells your regulatory affairs team whether that characterization constitutes promotional language that could be flagged with OPDP.

The reverse is also true. Your own drug may be receiving favorable AI characterization that your commercial team has not fully capitalized on. If AI platforms are describing your cardiovascular drug as ‘preferred in patients with chronic kidney disease’ based on renal outcome trial data, and your detailing messages have not yet incorporated that positioning, there is a gap between what AI is telling physicians and what your sales force is telling them.

DrugChatter and similar platforms surface both of these patterns. The output is not simply a sentiment score — it is a structured analysis of how AI describes comparative effectiveness, safety profiles, dosing convenience, insurance coverage, and patient population fit, across the full range of AI platforms that a target audience might use.

Medical Information and AI: A Compliance Gray Zone

Medical information (MI) departments handle unsolicited requests from healthcare providers and patients for information about off-label uses, drug interactions, and clinical data beyond the prescribing information. The legal framework governing MI responses is well-established: pharmaceutical companies can respond to unsolicited requests with balanced, non-promotional information.

AI complicates this in two ways.

First, some pharmaceutical companies are exploring AI-powered MI systems that can handle high-volume, routine inquiries — questions about dosing in special populations, drug-drug interaction profiles, storage requirements. These systems are not inherently problematic, but they raise the question of what happens when the AI generates a response that is factually incorrect or that the user could interpret as promotional. If the AI system is operated by the pharmaceutical company, OPDP’s promotional standards apply. If it is a third-party system, the liability picture is less clear.

Second, AI systems developed by health plans, pharmacy benefit managers, or healthcare systems may generate MI-equivalent responses about pharmaceutical products without any oversight from the manufacturer. A health plan’s AI that responds to a member’s question about whether their Humira (adalimumab) prescription could be switched to a biosimilar is operating in territory that pharmaceutical companies have historically managed through physician detailing and patient support programs. AI removes the human intermediary.

What Pharmaceutical Companies Can Actually Do

There is a practical limit to how directly pharmaceutical companies can influence LLM training data. They cannot submit content directly to OpenAI or Anthropic for inclusion in future training runs. They cannot modify what GPT-4o says when a patient asks about their drug. But there are specific, documented strategies that affect AI outputs over time.

Structured content production. Consistently structured content tends to be easier for machines to parse than unstructured prose. Pharmaceutical companies that produce content in formats optimized for machine parsing (structured clinical summaries, data tables in consistently formatted publications, standardized drug fact sheets) are more likely to see that content reflected accurately in AI outputs. The medical and scientific literature is the highest-credibility source available to AI models, and companies that invest in publishing real-world evidence, registry data, and head-to-head analyses create a richer substrate for AI to draw from.

Wikipedia engagement. Maintaining accurate, well-cited Wikipedia articles about your products is not glamorous, but it has outsized impact on AI outputs. This requires a formal program with medical and legal review, because Wikipedia’s policies on conflict of interest are strict and violations are tracked. Companies that have done this effectively — Pfizer, J&J, and Roche all have documented Wikipedia engagement programs — treat it as a legitimate medical affairs function, not a marketing one.

Retrieval-augmented AI strategies. A growing number of AI platforms use retrieval-augmented generation (RAG), which means they supplement base model knowledge with real-time search results. Perplexity and Microsoft Copilot operate this way. For these platforms, traditional SEO still matters, because the AI’s response is partially determined by what it retrieves from the web. Maintaining rich, authoritative, well-structured content at your domain improves the probability that RAG-based AI systems retrieve and surface your content in their responses.
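To make the retrieval step concrete, the toy sketch below ranks a few pages by word overlap with a query and assembles the context that would be handed to a base model. It is a deliberately simplified stand-in: production RAG systems typically use search indexes or embedding similarity, and the sources, text, and ‘Brand X’ placeholder here are all hypothetical.

```python
import re

def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, k=2):
    """Rank documents by word overlap with the query and return the top k."""
    q = tokenize(query)
    ranked = sorted(
        documents, key=lambda d: len(q & tokenize(d["text"])), reverse=True
    )
    return ranked[:k]

def build_prompt(query, documents, k=2):
    """Assemble the context a RAG system would pass to its base model."""
    context = "\n".join(
        f"[{d['source']}] {d['text']}" for d in retrieve(query, documents, k)
    )
    return f"Context:\n{context}\n\nQuestion: {query}"

# Hypothetical pages; 'Brand X' is a placeholder, not a real product.
docs = [
    {"source": "brandx.example/dosing",
     "text": "Brand X dosing in renal impairment is described in the label."},
    {"source": "forum.example/thread",
     "text": "I stopped my medication last week."},
    {"source": "news.example/story",
     "text": "Brand X renal outcome trial reported results."},
]

prompt = build_prompt("Brand X dosing renal impairment", docs)
print(prompt)
```

In this toy run the forum post never reaches the model because it shares no terms with the query. That is the mechanism by which clearly worded, on-topic owned content can influence a retrieval-augmented answer.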

Monitoring cadence. Systematic AI monitoring is the foundational capability. Without it, all other strategies are unguided. Platforms like DrugChatter allow pharmaceutical companies to run structured query sets against multiple AI platforms on a defined schedule, tracking how responses change over time as models are updated or retrained. This is the equivalent of brand tracking research for the AI channel — a repeatable, quantitative methodology for understanding what the AI ecosystem says about your drug.
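One way to track how responses change between scheduled runs is to compare each stored answer with the answer to the same query from the previous run. The sketch below uses Python's standard `difflib.SequenceMatcher` for a crude text-similarity score. The 0.8 threshold, the queries, and the responses are hypothetical, and a real program would likely compare meaning rather than raw characters.

```python
from difflib import SequenceMatcher

def drifted(previous, current, threshold=0.8):
    """Return queries whose response similarity fell below the threshold."""
    flagged = {}
    for query, old_text in previous.items():
        new_text = current.get(query)
        if new_text is None:
            continue  # query was not run this cycle
        ratio = SequenceMatcher(None, old_text, new_text).ratio()
        if ratio < threshold:
            flagged[query] = round(ratio, 2)
    return flagged

# Hypothetical snapshots from two monitoring runs.
last_run = {
    "Is Brand X first-line?": "Brand X is a first-line option.",
    "How is Brand X stored?": "Store Brand X at room temperature.",
}
this_run = {
    "Is Brand X first-line?": "Reports describe new safety concerns; consult a physician.",
    "How is Brand X stored?": "Store Brand X at room temperature.",
}

flagged = drifted(last_run, this_run)
print(sorted(flagged))  # ['Is Brand X first-line?']
```

Flagged queries are candidates for human review, not conclusions. Generated text varies from run to run even when the substance is unchanged.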

Voice of the Customer in an AI-Mediated World

Pharmaceutical market research has long used qualitative and quantitative methodologies to capture ‘voice of the customer’ (VoC) — what patients and providers actually think about a drug, in their own words. AI creates a new VoC data source that is both richer and more problematic than traditional channels.

When patients post questions to AI platforms, they use natural language that reveals unaided perceptions, specific concerns, and information gaps. A patient who asks ‘Is it safe to take Jardiance if I have a UTI history?’ is revealing a barrier to adherence that a structured survey might not capture. The aggregate pattern of questions asked about a drug across AI platforms — when systematically monitored — constitutes a high-fidelity picture of what patients actually want to know, as opposed to what pharmaceutical companies assume they want to know.

This is not theoretical. Pharmaceutical companies that have begun querying AI platforms systematically have discovered consistent patterns: patients ask about Humana and UnitedHealthcare formulary coverage of expensive drugs in ways that suggest prior authorization denials are a more common experience than reported through traditional channels. Patients ask about biosimilar interchangeability in ways that suggest they are being switched by pharmacists without physician awareness. These signals have commercial and regulatory implications that traditional VoC methodologies are too slow and too structured to surface.

The AI query pattern is not a perfect research instrument. Users self-select (people who use AI for health information skew toward higher digital literacy), and the question itself shapes the answer in ways that differ from traditional survey design. But as a complement to existing VoC research, AI query analysis is uniquely capable of surfacing unaided, unprompted patient concerns at scale.

Regulatory Risk Tracking: The Early Warning System

One of the clearest applications of AI brand monitoring is regulatory risk detection. The FDA Adverse Event Reporting System (FAERS), which collects reports submitted through MedWatch, is public, but it is large, noisy, and structured in ways that make signal detection difficult without specialized tools. The FDA’s Sentinel system processes claims data to detect safety signals, but it operates on a timeline that lags real-world events.

Retrieval-augmented AI platforms, by contrast, can reflect what is being discussed in the public domain in near real-time. If a safety signal begins circulating in patient communities, whether or not it has been reported to the FDA, it will show up in AI-generated responses as that content gets indexed and incorporated into retrieval-augmented systems. A pharmaceutical company that is monitoring AI responses about its drug can detect emerging safety narratives before they crystallize into formal adverse event reports or, worse, news coverage.

The GLP-1 receptor agonist class illustrates the timeline. Reports of gastroparesis associated with semaglutide began circulating in online patient communities in mid-2022. They reached mainstream media coverage by late 2022. The FDA’s label update on ileus risk followed in 2023. Consumer AI chatbots only became widely available in late 2022, but a brand team monitoring retrieval-augmented AI responses as that narrative spread would have seen the emerging gastroparesis story well before it became a regulatory action, giving medical affairs months to prepare, evaluate the published evidence, and develop a communication strategy.

The Biosimilar Challenge: When AI Blurs Brand Lines

For pharmaceutical companies with biologics facing biosimilar competition, AI creates a specific problem: models frequently treat originator biologics and biosimilars as interchangeable in ways that overstate biosimilar equivalence and understate the clinical distinction that originator manufacturers have tried to establish.

When a patient asks an AI whether Humira and Hadlima (an adalimumab biosimilar) are ‘the same drug,’ a typical AI response will describe them as ‘highly similar with no clinically meaningful differences.’ That response is consistent with FDA’s definition of biosimilarity — but it is not consistent with the clinical data packages that originator manufacturers have used to support premium positioning. The AI is not wrong; it is applying the regulatory standard. But the regulatory standard is not the same as the marketing message.

Conversely, AI models sometimes generate responses about biosimilar substitution that are jurisdictionally incorrect. The FDA’s interchangeability designation — which allows pharmacist substitution without physician intervention — does not apply to all biosimilars. When AI tells a patient that their pharmacist can substitute their Enbrel (etanercept) prescription with a biosimilar without calling the doctor, that may be accurate in some states and inaccurate in others. The brand team for etanercept has a legitimate interest in ensuring AI responses on this topic are accurate, not only for competitive reasons but because incorrect substitution information could affect patient safety.

Oncology: The Highest-Stakes Category

In no therapeutic area are the consequences of AI misrepresentation more acute than oncology. Patients diagnosed with cancer frequently use AI platforms to research treatment options, understand clinical trial eligibility, and evaluate drugs their oncologists have recommended or failed to recommend. The information asymmetry between oncologist and patient is enormous in oncology, and AI is increasingly the tool patients use to close that gap.

The oncology AI information environment is shaped by two factors that amplify risk. First, clinical trial results in oncology are frequently presented at conferences before publication, creating a period during which AI models may have indexed news coverage of conference presentations without having access to the peer-reviewed data. A drug that showed benefit in an interim analysis presented at ASCO may be described by AI in terms of that interim data even after the final publication modified the conclusions. Second, the progression of oncology treatment lines is complex, and AI models frequently generate responses about first-line versus second-line versus later-line therapy that do not reflect current NCCN guidelines or recent approvals.

The pace of FDA approvals in oncology is high relative to other therapeutic areas, and it creates a continuous stream of label updates, new indication expansions, and new combination approvals that AI training data lags. A patient asking about pembrolizumab (Keytruda) combination therapy options is likely to receive a response that reflects an earlier slice of Keytruda’s indication portfolio than its current one, which, as of 2025, encompasses more than 30 approved indications across more than 20 tumor types.

Building the Internal Capability: What the Organization Needs

AI brand monitoring is not a project that can be assigned to an existing team without structural change. It requires a cross-functional capability that does not exist in most pharmaceutical organizations today.

The core team should include representatives from four functions: medical affairs, regulatory affairs, market research, and digital marketing. Medical affairs owns the factual accuracy question — identifying where AI-generated information diverges from approved labeling or published evidence. Regulatory affairs owns the compliance surface — determining whether AI outputs implicate OPDP standards or create documentation obligations. Market research owns the insight generation function — translating AI query patterns into actionable VoC intelligence. And digital marketing owns the content strategy response — ensuring the company’s owned content is structured in ways that improve AI representation over time.

This team needs tools. Manual monitoring of AI platforms is not scalable. A team member querying ChatGPT once a month and noting the response is not monitoring — it is anecdote collection. Systematic monitoring requires automated query execution, response storage, natural language processing to categorize and quantify response attributes, and trend analysis to detect shifts over time. That is the infrastructure that platforms like DrugChatter are built to provide.
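The components listed above (automated query execution, response storage, categorization, and trend analysis) can be sketched in a few dozen lines. The example below is a minimal illustration and does not describe how any particular vendor works. The platform client is a stub that calls no real API, the keyword rules stand in for a proper NLP model, and the table layout is an assumption.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical keyword rules; a production system would use a real NLP model.
RULES = {
    "safety": ("risk", "side effect", "warning"),
    "coverage": ("insurance", "formulary"),
}

def categorize(text):
    """Return the sorted list of categories whose keywords appear in the text."""
    lowered = text.lower()
    return sorted(c for c, words in RULES.items() if any(w in lowered for w in words))

def run_panel(conn, queries, platforms):
    """Run every query on every platform and store the categorized response."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS responses "
        "(ts TEXT, platform TEXT, query TEXT, response TEXT, categories TEXT)"
    )
    for name, ask in platforms.items():
        for query in queries:
            text = ask(query)
            conn.execute(
                "INSERT INTO responses VALUES (?, ?, ?, ?, ?)",
                (datetime.now(timezone.utc).isoformat(), name, query, text,
                 ",".join(categorize(text))),
            )
    conn.commit()

def fake_platform(query):
    """Stub standing in for a real platform client; no API is called."""
    return "Brand X carries a warning; check your insurance formulary."

conn = sqlite3.connect(":memory:")
run_panel(conn, ["Is Brand X safe?"], {"stub": fake_platform})
rows = conn.execute("SELECT platform, categories FROM responses").fetchall()
print(rows)  # [('stub', 'coverage,safety')]
```

Because every row carries a timestamp, trend analysis reduces to grouping stored responses by period and category. Swapping the stub for real platform clients is the part that requires vendor-specific work.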

The team also needs a reporting cadence that connects AI monitoring outputs to commercial and regulatory decision-making. Monthly AI brand reports should feed into the same brand performance review processes that receive TRx data and field force feedback. Quarterly AI regulatory risk reports should feed into pharmacovigilance review. Annual AI share of voice analysis should feed into brand planning.

What Best-in-Class Looks Like in 2025

A handful of pharmaceutical companies have moved from awareness to systematic action on AI brand reputation. They share several characteristics.

They have defined a query library: the specific, representative set of questions that patients, caregivers, nurses, and physicians are most likely to ask AI platforms about their drugs. This library is developed in collaboration with medical affairs and validated against patient community data and call center logs. It is updated quarterly.

They run those queries across a defined panel of AI platforms — typically ChatGPT, Claude, Gemini, Perplexity, and at least one healthcare-specific AI tool — on a weekly or biweekly basis. Response data is stored and analyzed systematically.

They have established baseline metrics: what percentage of responses mention their drug first when a therapeutic area query is asked, what percentage of responses on safety include the currently approved risk language, what percentage of responses on comparative effectiveness align with published head-to-head data versus competitor-derived claims. These baselines are tracked over time to detect meaningful shifts.
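Two of these baselines are straightforward to compute once responses are stored. The sketch below shows one possible formulation of a first-mention rate and a risk-language coverage rate using plain substring matching. The brand names, phrases, and responses are hypothetical placeholders, and both functions assume a non-empty list of responses.

```python
def first_mention_rate(responses, brand, competitors):
    """Share of responses in which `brand` appears before any competitor."""
    def first_named(text):
        lowered = text.lower()
        positions = {n: lowered.find(n.lower()) for n in [brand, *competitors]}
        found = {n: p for n, p in positions.items() if p >= 0}
        return min(found, key=found.get) if found else None
    return sum(first_named(r) == brand for r in responses) / len(responses)

def risk_language_coverage(responses, required_phrases):
    """Share of responses that contain every required risk phrase."""
    def covered(text):
        lowered = text.lower()
        return all(p.lower() in lowered for p in required_phrases)
    return sum(covered(r) for r in responses) / len(responses)

# Hypothetical responses to therapeutic-area queries.
area_responses = [
    "Brand X and Brand Y are both options.",
    "Brand Y is often used; Brand X is an alternative.",
    "Lifestyle changes are recommended first.",
    "Brand X may be considered.",
]
# Hypothetical responses to safety queries about Brand X.
safety_responses = [
    "Brand X may cause nausea and carries a risk of ileus.",
    "Brand X may cause nausea.",
]

first_rate = first_mention_rate(area_responses, "Brand X", ["Brand Y"])
coverage = risk_language_coverage(safety_responses, ["risk of ileus"])
print(first_rate, coverage)  # 0.5 0.5
```

Exact-phrase matching will undercount responses that paraphrase the approved risk language, so a real baseline would need a more tolerant comparison and periodic human spot checks.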

They have a response protocol: when AI responses deviate from approved information in ways that create patient risk or regulatory exposure, they have a defined process for determining whether and how to respond. This may include accelerating publication of relevant clinical data, updating owned web content, engaging with healthcare-specific AI platforms that accept manufacturer data submissions, or, in cases of serious safety misinformation, notifying the relevant AI platform directly.
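A response protocol of this kind can be written down as an explicit triage rule so that decisions are consistent and auditable. The sketch below is one hypothetical encoding: the `Deviation` fields and the mapping from deviation to actions are assumptions made for illustration, and a real protocol would be set by medical, regulatory, and legal reviewers rather than by code.

```python
from dataclasses import dataclass
from enum import Enum

class Action(Enum):
    NO_ACTION = "log only"
    UPDATE_OWNED_CONTENT = "update owned web content"
    ACCELERATE_PUBLICATION = "accelerate publication of relevant clinical data"
    ENGAGE_HEALTHCARE_AI = "engage healthcare-specific AI platforms"
    NOTIFY_PLATFORM = "notify the AI platform directly"

@dataclass
class Deviation:
    patient_risk: bool
    regulatory_exposure: bool
    serious_safety_misinformation: bool = False
    supporting_data_unpublished: bool = False

def triage(d: Deviation) -> list:
    """Map a deviation to candidate actions (the mapping itself is an assumption)."""
    if not (d.patient_risk or d.regulatory_exposure):
        return [Action.NO_ACTION]
    actions = [Action.UPDATE_OWNED_CONTENT, Action.ENGAGE_HEALTHCARE_AI]
    if d.supporting_data_unpublished:
        actions.append(Action.ACCELERATE_PUBLICATION)
    if d.serious_safety_misinformation:
        # Direct notification is reserved for serious safety misinformation.
        actions.insert(0, Action.NOTIFY_PLATFORM)
    return actions
```

The output is a list of candidate actions for human review, not an automated response; the point of encoding it is that the same deviation produces the same starting recommendation every time.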

The Question of AI Platform Accountability

The pharmaceutical industry’s relationship with AI platforms is not yet adversarial, but the conditions for conflict are present. When AI companies train models on publicly available content, they do not notify pharmaceutical companies whose products are described in that content. When models generate responses about drugs, most do not provide citations that would allow brand teams to audit the underlying sources. And when AI-generated drug information is inaccurate, there is no formal mechanism for pharmaceutical companies to request a correction, nothing equivalent to a right of reply in traditional media.

Some AI platforms are beginning to address this. OpenAI and Google have both indicated they are developing frameworks for healthcare-specific AI governance that may include mechanisms for authoritative source verification. Perplexity’s citation model at least makes the retrieval sources visible, allowing brand teams to identify which specific pieces of content are driving AI responses. But these efforts are early-stage, inconsistent across platforms, and not yet governed by any regulatory standard.

The FDA’s 2024 AI action plan explicitly identifies health information accuracy as a priority area. The FTC has issued warnings about deceptive AI health claims. Neither agency has yet defined a clear enforcement framework for AI-generated drug information, but both are moving in a direction that will eventually produce one.

Pharmaceutical companies that have already built AI monitoring capabilities will be better positioned when that regulatory environment crystallizes. They will have documentation of how AI platforms have represented their products, a record of accuracy deviations, and a demonstrated good-faith effort to ensure accurate information is available to AI systems. Companies that have not built this capability will be starting from scratch under regulatory pressure.

The ROI Case for AI Brand Monitoring

Investment decisions in pharmaceutical marketing are governed by ROI models that are well-developed for traditional channels: cost per detail, cost per sample, cost per point of reach, return on medical education spend. AI brand monitoring does not yet have an established ROI methodology, and that makes budget justification difficult in organizations accustomed to channel-specific metrics.

The ROI case is real, but it requires framing across multiple value streams.

Regulatory risk avoidance is the most direct value stream. A single warning letter from OPDP costs a pharmaceutical company not just the legal and remediation expense, but also the reputational damage of public disclosure and the operational disruption of pulling promotional materials. If AI monitoring detects a compliance risk early enough to allow remediation before it reaches OPDP’s attention, the value of that detection far exceeds the cost of the monitoring program.

Competitive intelligence is the second value stream. Market research firms charge between $150,000 and $400,000 for annual competitive intelligence programs that use traditional methods — physician surveys, claims data analysis, trade publication monitoring. AI monitoring adds a channel that traditional competitive intelligence does not cover, at a marginal cost that is small relative to the value of the additional insight.

Brand protection in litigation is the third value stream. In drug liability litigation, plaintiff attorneys increasingly use AI-generated information as evidence of what ‘reasonable’ information about a drug was available to patients and prescribers. A pharmaceutical company that has actively monitored and addressed inaccurate AI representations of its drug is in a stronger evidentiary position than one that has not. The value of that evidentiary advantage in active litigation can be substantial.

Patient safety is the fourth value stream: the hardest to quantify, but the most important. When AI misrepresents a drug’s safety profile or efficacy in ways that lead patients to discontinue effective therapy, initiate contraindicated combinations, or seek inappropriate off-label uses, the human cost of that misinformation is real. A monitoring program that detects and addresses those inaccuracies before they reach scale produces patient safety value that is morally significant even when it is difficult to monetize.

Key Takeaways

AI platforms are now a primary touchpoint in drug brand perception. A majority of U.S. adults use AI for health information. What LLMs say about drugs shapes patient and provider expectations before any other commercial touchpoint.

Training data lags regulatory reality. LLMs trained before a label update, safety communication, or approval decision will generate outdated responses — sometimes for years. Pharmaceutical companies cannot control training timelines but can structure content to improve AI representation over time.

Four specific risk types require monitoring. Outdated safety information, competitive repositioning by omission, litigation overhang, and off-label misdirection are the categories where AI consistently diverges from approved information.

AI share of voice is a measurable, actionable metric. Systematic query-and-response analysis across AI platforms produces quantitative brand performance data in a channel that traditional brand tracking does not cover.

Building internal capability requires four functions. Medical affairs, regulatory affairs, market research, and digital marketing each own distinct components of an AI brand monitoring program.

Regulatory accountability for AI drug information is coming. FDA’s OPDP is developing AI-specific guidance. Companies with documented monitoring programs will be better positioned than those starting under regulatory pressure.

Platforms like DrugChatter exist specifically for this problem. Systematic, automated AI monitoring across multiple platforms is not a manual function. Dedicated tools are the practical foundation for this capability.

FAQ

Q1: Does the FDA hold pharmaceutical companies responsible for what third-party AI platforms say about their drugs?

Not yet, under current guidance. OPDP’s existing framework applies to content that pharmaceutical companies create, sponsor, or control. Third-party AI-generated content — responses from ChatGPT, Gemini, or Perplexity that the manufacturer did not commission — is not currently treated as the manufacturer’s promotional material. But that posture is under active review. The FDA’s 2024 AI action plan flagged AI-generated health information as a policy priority, and OPDP has indicated that draft guidance on AI in drug promotion is in development. Pharmaceutical companies that wait for final guidance before building monitoring capabilities will be behind.

Q2: How do pharmaceutical companies improve how their drugs appear in AI responses without violating OPDP guidelines?

The practical strategies that do not implicate OPDP are content-based, not AI-specific. Publishing high-quality peer-reviewed evidence creates a richer, more accurate substrate for AI training data. Maintaining accurate, well-cited Wikipedia articles has documented impact on AI outputs and is permissible when done transparently with medical and legal review. Structuring owned web content for machine readability improves performance in retrieval-augmented AI systems. None of these strategies constitute direct AI model manipulation or promotional activity — they are content operations that improve the quality of publicly available information about a drug.

Q3: What is the difference between AI brand monitoring and traditional social media listening?

Social media listening captures what humans say about drugs in public forums — patient posts, caregiver discussions, provider comments on LinkedIn or X. AI brand monitoring captures what AI models say in response to direct queries. The two data streams are related but distinct. AI responses are shaped by social media content in the training corpus, but they synthesize that content into authoritative-sounding responses that users often cannot distinguish from approved information. A drug might have broadly positive social media sentiment while receiving unfavorable AI characterization based on outdated news coverage or litigation history that dominates the training data. Companies that only run social media listening will miss the AI signal entirely.

Q4: How frequently should pharmaceutical companies query AI platforms as part of a monitoring program?

Frequency should reflect the volatility of the information environment around a specific drug. For drugs in active litigation, under active FDA safety review, or facing major competitive events (biosimilar entry, competitor trial readout), weekly monitoring is appropriate. For stable, mature brands without significant near-term events, monthly monitoring may be sufficient. A quarterly comprehensive analysis, covering a broader query set, more platforms, and deeper trend analysis, is appropriate for all brands regardless of their routine cadence. The key is that monitoring cadence is a deliberate decision, not a default to ‘when someone has time.’
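Making cadence a deliberate decision is easier when the rule is written down. The following is a small sketch of the rule described in this answer; the `BrandStatus` fields, the 7-day and 30-day intervals standing in for "weekly" and "monthly", and the 91-day approximation of a quarter are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class BrandStatus:
    active_litigation: bool = False
    fda_safety_review: bool = False
    major_competitive_event: bool = False  # e.g. biosimilar entry, trial readout

def routine_interval_days(status: BrandStatus) -> int:
    """Weekly for volatile brands, monthly otherwise (intervals are assumptions)."""
    volatile = (
        status.active_litigation
        or status.fda_safety_review
        or status.major_competitive_event
    )
    return 7 if volatile else 30

def next_runs(status: BrandStatus, last_routine: date, last_comprehensive: date) -> dict:
    """Due dates for the next routine run and the next quarterly deep dive."""
    return {
        "routine": last_routine + timedelta(days=routine_interval_days(status)),
        # The comprehensive analysis applies to every brand, whatever its status.
        "comprehensive": last_comprehensive + timedelta(days=91),
    }
```

Because the status flags are explicit, a change such as a newly announced safety review moves a brand from monthly to weekly monitoring the moment the flag is set, instead of whenever someone remembers to ask.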

Q5: Can AI monitoring data be used in legal proceedings involving drug liability?

Yes, and it is already being used in both directions. Plaintiff attorneys have used AI-generated drug information as evidence of what a ‘reasonable’ patient could learn about a drug’s risks. Defense attorneys have used AI monitoring data to demonstrate that inaccurate risk characterizations in AI are inconsistent with the FDA-approved safety profile and the available scientific literature. Pharmaceutical companies that have systematically tracked AI representations of their drugs, documenting both accurate and inaccurate characterizations over time, are better positioned to use that documentation defensively. Companies without such documentation are missing a category of evidence that has already appeared in drug liability cases.